Citadel Data Engineer Interview Guide: Top 20 Questions, Skills & Salary (2026)

Introduction

The Citadel data engineer role sits at the core of a performance-driven engineering organization where data infrastructure directly supports trading, risk, and research decisions. As financial markets become increasingly automated and latency-sensitive, Citadel relies on robust, high-throughput data pipelines to move information from raw market signals to production systems in real time. Data engineers are responsible for building systems that are fast, reliable, and correct under pressure, often operating at scales where small inefficiencies translate into real financial impact.

The Citadel data engineer interview reflects that responsibility. You are not evaluated on syntax alone. Interviewers look for strong fundamentals in SQL and programming, a deep understanding of distributed systems, and the ability to reason clearly about performance trade-offs, failure modes, and data quality. This guide outlines each stage of the Citadel data engineer interview, highlights the most common data engineer specific interview questions, and shares proven strategies to help you stand out and prepare effectively with Interview Query.

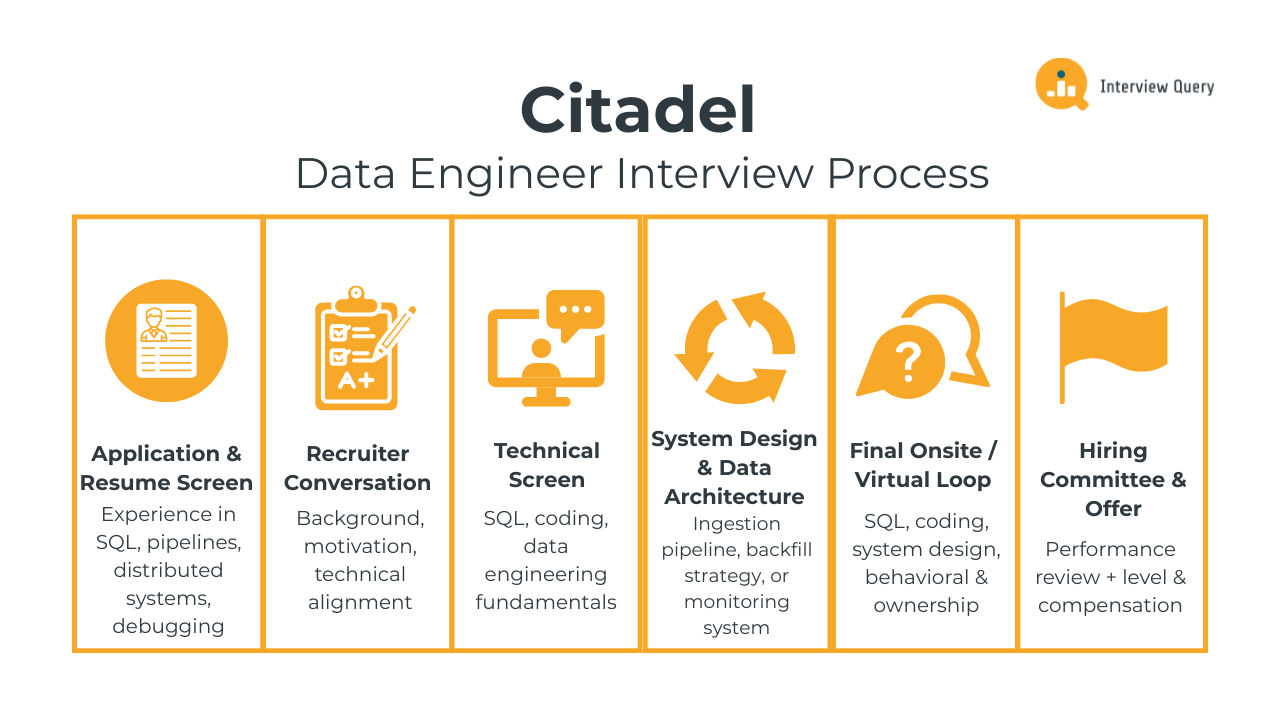

Citadel Data Engineer Interview Process

The Citadel data engineer interview process evaluates your ability to build reliable, high performance data systems under real production constraints. Each stage is designed to test core engineering fundamentals, system design judgment, and how clearly you reason through trade-offs that matter at scale. Expect a strong emphasis on correctness, latency awareness, and ownership, with interviews structured to mirror the kinds of decisions data engineers make in Citadel’s production environment. Most candidates complete the full loop within three to five weeks, depending on role level and team availability.

Application and Resume Screen

During the resume review, recruiters and hiring managers look for clear evidence that you have worked on data systems that operate at scale and under performance pressure. Strong resumes highlight experience with streaming or batch pipelines, SQL optimization, distributed systems, and production debugging. Impact matters more than tool lists, so measurable improvements to latency, reliability, throughput, or data quality stand out immediately.

Tip: Quantify system impact using concrete metrics like end-to-end latency reduction, pipeline failure rate improvements, or data freshness guarantees. This signals engineering rigor and an understanding of how system performance translates into real outcomes.

Initial Recruiter Conversation

The recruiter call focuses on your background, motivation for Citadel, and high-level technical alignment. You will discuss your prior data engineering experience, the types of systems you have owned, and what attracts you to a performance-driven environment. This conversation also covers role scope, team alignment, location preferences, and compensation expectations. While non-technical, it sets the tone for how well you can articulate complex work clearly.

Tip: Be precise when describing past systems you owned. Clear explanations of scale, constraints, and trade-offs demonstrate communication discipline, which interviewers value as much as raw technical skill.

Technical Screen

The technical screen usually consists of one or two interviews focused on SQL, coding, and data engineering fundamentals. You may be asked to write queries that operate on large datasets, implement a core data structure, or reason through a pipeline design under time and memory constraints. Interviewers pay close attention to correctness, edge case handling, and how you explain performance trade-offs as you work.

Tip: Talk through your assumptions before coding and justify design choices out loud. This shows structured thinking and mirrors how senior engineers at Citadel evaluate production changes.

System Design and Data Architecture Interview

This round focuses on designing data systems that are scalable, fault tolerant, and predictable under load. You may be asked to design a real time ingestion pipeline, a backfill strategy for historical data, or a monitoring system for detecting data issues. Interviewers look for clear data flow, thoughtful failure handling, and realistic trade-offs between latency, consistency, and complexity.

Tip: Anchor your design in failure scenarios and recovery strategies. Demonstrating how you think about things breaking signals maturity and production readiness.

Final Onsite or Virtual Loop

The final loop is the most comprehensive stage of the Citadel data engineer interview process. It typically includes four to five interviews, each lasting around 45 to 60 minutes. These rounds evaluate how you apply fundamentals to real world data problems, reason through ambiguity, and communicate technical decisions clearly.

SQL And Data Processing Round: You will work through complex SQL or data transformation problems involving large, imperfect datasets. Tasks may include identifying data gaps, computing rolling metrics, or optimizing queries for performance. Interviewers assess logical correctness, efficiency, and your ability to reason about edge cases that commonly appear in production pipelines.

Tip: Explain how your query scales as data volume grows. This demonstrates performance awareness and an understanding of real production constraints.

Coding And Data Structures Round: This interview focuses on writing clean, efficient code in a shared environment. You may implement core data structures, process streaming inputs, or debug a performance bottleneck. Interviewers care about clarity, correctness, and how you reason through trade-offs between time, memory, and simplicity.

Tip: Prioritize readability and correctness before micro-optimizations. This reflects how production code is evaluated at Citadel.

System Design And Architecture Round: You will design a full data system end to end, often involving ingestion, processing, storage, and monitoring. Expect follow up questions that stress test your design under load, partial failures, or changing requirements. The goal is to evaluate judgment, not to arrive at a single perfect architecture.

Tip: Call out assumptions explicitly and discuss alternative designs. This shows flexibility and strong architectural reasoning.

Behavioral And Ownership Round: This round evaluates how you handle responsibility, pressure, and collaboration. Questions focus on system failures, difficult trade-offs, disagreements with peers, and owning outcomes over time. Citadel values engineers who take accountability and learn quickly from mistakes.

Tip: Use examples where your decisions had real consequences. Ownership and learning mindset are key signals interviewers look for.

Hiring Committee and Offer

After the final loop, interviewers submit written feedback independently. A hiring committee reviews your performance across all rounds, focusing on technical strength, judgment, communication, and consistency. If approved, the team determines level and compensation based on scope and impact expectations. Candidates may be matched to a specific team depending on system experience and business needs.

Tip: If you have strong preferences for certain types of data systems, communicate them clearly. Alignment between your strengths and team needs improves both offer fit and long term success.

If you want to master SQL interview questions, join Interview Query to access our 14-Day SQL Study Plan, a structured two-week roadmap that helps you build SQL mastery through daily hands-on exercises, real interview problems, and guided solutions. It’s designed to strengthen your query logic, boost analytical thinking, and get you fully prepared for your next data engineer interview.

Citadel Data Engineer Interview Questions

The Citadel data engineer interview focuses on how well you design, reason about, and operate data systems that must be fast, correct, and resilient under real market conditions. The questions span SQL and data processing, coding and data structures, system design, and behavioral judgment. Interviewers are less interested in academic trivia and more focused on how you think through production constraints, failure modes, and performance trade-offs in high stakes environments.

SQL and Data Processing Interview Questions

In this part of the interview, Citadel evaluates whether you can reason about data correctness and performance in environments where incomplete or delayed data can materially affect downstream systems. These questions are designed to mirror real production challenges in market data, research pipelines, and analytics workflows, where writing SQL is not just about syntax but about judgment, assumptions, and scale.

How would you write a query to identify missing time intervals in market data for a given instrument?

This question tests your ability to reason about data completeness in time series, which is critical at Citadel because gaps in market data can invalidate research results or trigger incorrect signals. To answer, you would describe generating a reference table of expected time intervals based on trading hours, then left joining observed data to that reference and flagging intervals with no matches. A strong answer also explains excluding weekends or market holidays and handling partial trading days to avoid false positives.

Tip: At Citadel, always explain how your logic adapts to different instruments or venues. That shows systems thinking and an understanding that market data is not uniform across sources.
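The reference-table-plus-left-join approach described above can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration under stated assumptions, not a production query: the `bars` table, its column names, the instrument `XYZ`, the one-minute granularity, and the five-minute session are all hypothetical, and holiday or partial-day handling is left out.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table of observed one-minute bars.
conn.execute("CREATE TABLE bars (instrument TEXT, minute_ts TEXT)")
conn.executemany(
    "INSERT INTO bars VALUES (?, ?)",
    [
        ("XYZ", "2024-01-02 09:30:00"),
        ("XYZ", "2024-01-02 09:31:00"),
        ("XYZ", "2024-01-02 09:34:00"),
    ],
)

MISSING_MINUTES_SQL = """
WITH RECURSIVE expected(minute_ts) AS (
    -- Reference series: every minute the instrument should have traded.
    SELECT :session_start
    UNION ALL
    SELECT datetime(minute_ts, '+1 minute')
    FROM expected
    WHERE minute_ts < :last_minute
)
SELECT e.minute_ts
FROM expected AS e
LEFT JOIN bars AS b
    ON b.minute_ts = e.minute_ts
   AND b.instrument = :instrument
WHERE b.minute_ts IS NULL      -- expected minute with no observed bar
ORDER BY e.minute_ts
"""

params = {
    "session_start": "2024-01-02 09:30:00",
    "last_minute": "2024-01-02 09:34:00",
    "instrument": "XYZ",
}
missing = [row[0] for row in conn.execute(MISSING_MINUTES_SQL, params)]
print(missing)
```

In a real system the expected series would more likely come from a trading calendar table per venue than from a generated range, which is where weekends, holidays, and half days would be excluded.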

-

This question evaluates whether you understand query-level bottlenecks rather than blaming infrastructure by default. Citadel asks this to see if you can isolate issues like poor join order, skewed partitions, unbounded scans, or inefficient window functions. A strong answer walks through inspecting the query plan, identifying expensive stages, checking cardinality assumptions, and then applying targeted fixes like rewriting joins, filtering earlier, or pre-aggregating data.

Tip: Always talk through how you confirm the root cause before optimizing. That signals discipline and prevents premature changes in production systems.

-

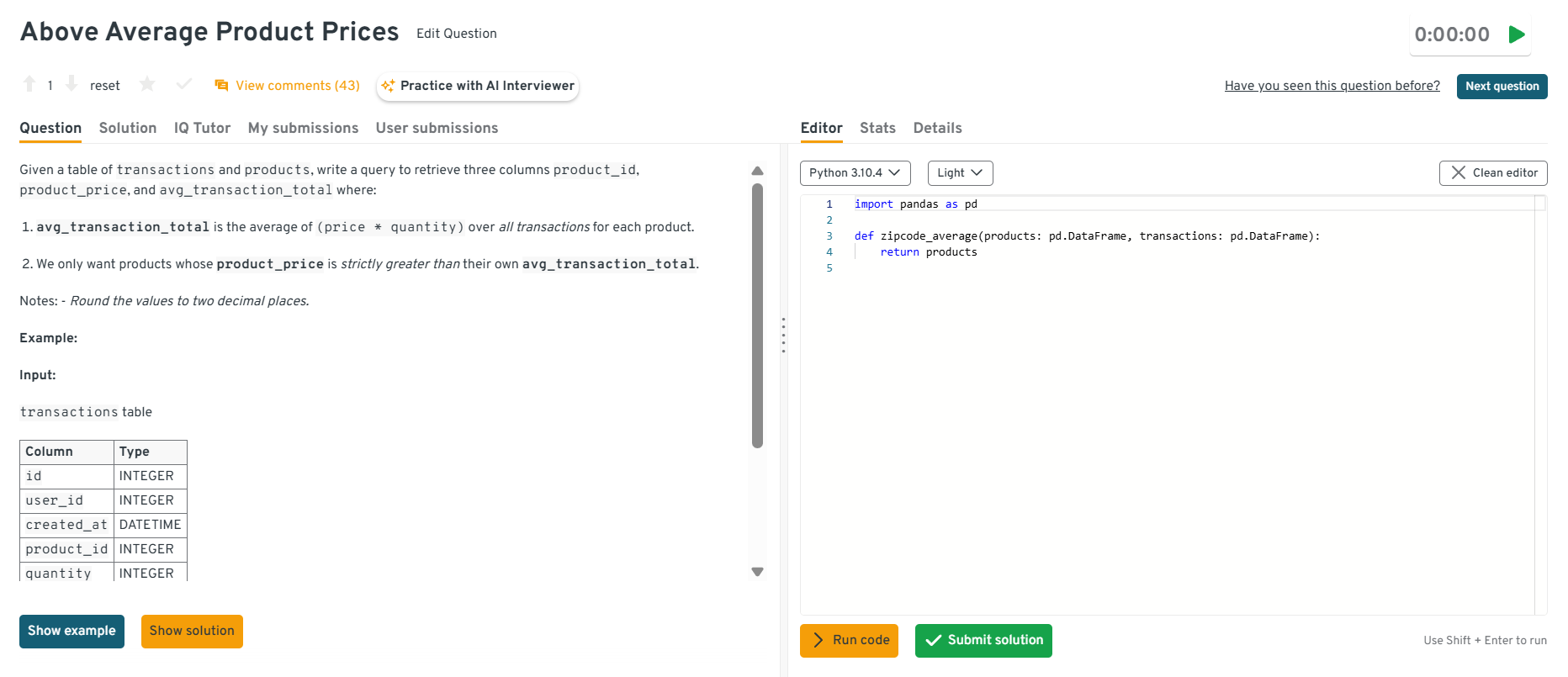

This question tests aggregation logic, join correctness, and numerical precision. At Citadel, similar patterns appear when comparing reference values against realized trading or transaction behavior. You would explain computing the average transaction total per product using price times quantity, joining that result back to the products table, filtering where the product price exceeds the computed average, and applying rounding at the final projection to avoid comparison errors.

Tip: Call out where rounding should and should not occur. This shows attention to numerical correctness, which matters deeply in financial systems.

Head to the Interview Query dashboard to practice a broad set of interview questions that closely align with what Citadel evaluates for data engineers. With hands-on coding challenges, debugging-focused exercises, system design case studies, and behavioral prompts, plus built-in code testing and AI-guided feedback, it is one of the most effective ways to sharpen your problem-solving, reasoning, and production-minded decision-making skills for the Citadel data engineer interview.

How would you detect late arriving data in a daily batch pipeline?

This question evaluates how you reason about data freshness and pipeline reliability, both of which are essential at Citadel where downstream consumers often assume strict timing guarantees. A strong answer explains comparing event timestamps to ingestion or processing times, tracking lateness distributions over time, and flagging records that exceed defined thresholds. You should also mention storing lateness metrics so trends can be monitored, not just one-off violations.

Tip: Tie lateness detection to automated alerts rather than dashboards alone. That demonstrates ownership and an operational mindset expected of Citadel engineers.
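As a rough sketch of the comparison described above, the snippet below computes lateness as ingestion time minus event time and flags records over a threshold. The record layout (`id`, `event_time`, `ingested_at`) and the two-hour threshold are assumptions made for illustration only.

```python
from datetime import datetime, timedelta

def find_late_records(records, threshold):
    """Return (record_id, lateness) pairs whose ingestion lag exceeds threshold."""
    late = []
    for rec in records:
        lateness = rec["ingested_at"] - rec["event_time"]
        if lateness > threshold:
            late.append((rec["id"], lateness))
    return late

# Hypothetical records from one daily batch.
batch = [
    {"id": "a", "event_time": datetime(2024, 1, 2, 10, 0),
     "ingested_at": datetime(2024, 1, 2, 10, 5)},
    {"id": "b", "event_time": datetime(2024, 1, 1, 23, 0),
     "ingested_at": datetime(2024, 1, 2, 6, 0)},
    {"id": "c", "event_time": datetime(2024, 1, 2, 9, 0),
     "ingested_at": datetime(2024, 1, 2, 11, 0)},
]

late = find_late_records(batch, threshold=timedelta(hours=2))
# Store the worst-case lateness per batch so trends can be tracked over time.
max_lateness = max(r["ingested_at"] - r["event_time"] for r in batch)
```

Record `c` is exactly two hours late and is not flagged because the comparison is strict; whether the boundary counts as late is a policy choice worth stating out loud in the interview.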

-

This question tests window function mastery and your ability to translate temporal logic into correct SQL. Citadel asks this because similar logic appears in grouping bursts of activity or identifying contiguous behavior in market or system events. You would explain ordering events by user and time, using LAG() to detect gaps greater than 60 minutes, flagging new sessions, and then using a cumulative sum to generate session IDs.

Tip: Explicitly mention how you handle events with identical timestamps or out-of-order arrivals. That level of detail shows production awareness and sets you apart from candidates who only think in idealized data.
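One way to sketch the LAG-and-cumulative-sum approach is to run it against an in-memory SQLite database from Python. The `events` table, its columns, and the use of epoch seconds are assumptions for this example, and it relies on SQLite 3.25 or newer for window functions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical events table; event_ts is in epoch seconds.
conn.execute("CREATE TABLE events (user_id INTEGER, event_ts INTEGER)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?)",
    [(1, 0), (1, 1800), (1, 9000), (2, 100), (2, 3700), (2, 7400)],
)

SESSIONIZE_SQL = """
WITH with_prev AS (
    SELECT user_id, event_ts,
           LAG(event_ts) OVER (PARTITION BY user_id ORDER BY event_ts) AS prev_ts
    FROM events
),
flagged AS (
    -- A session starts on a user's first event or after a gap over 60 minutes.
    SELECT user_id, event_ts,
           CASE WHEN prev_ts IS NULL OR event_ts - prev_ts > 3600
                THEN 1 ELSE 0 END AS is_new_session
    FROM with_prev
)
SELECT user_id, event_ts,
       SUM(is_new_session) OVER (
           PARTITION BY user_id ORDER BY event_ts
           ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW
       ) AS session_id
FROM flagged
ORDER BY user_id, event_ts
"""

sessions = conn.execute(SESSIONIZE_SQL).fetchall()
print(sessions)
```

The explicit `ROWS` frame is a deliberate choice here: the default `RANGE` frame treats rows with identical timestamps as peers, which can matter once tied timestamps carry session-start flags.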

Want to get realistic practice on SQL and data engineering questions? Try Interview Query’s AI Interviewer for tailored feedback that mirrors real interview expectations.

Coding and Data Structures Interview Questions

In Citadel’s coding rounds, you are evaluated on whether you can write correct, efficient code under pressure and explain your trade-offs clearly. The problems below are intentionally stateful and performance oriented because Citadel data engineers spend a lot of time designing streaming logic, optimizing hot paths, and debugging systems where small inefficiencies compound at scale.

Implement a rolling window aggregator over a stream of events.

This question tests whether you can maintain state efficiently in a streaming setting, which is a daily reality at Citadel when aggregating tick or event data in near real time. To solve it, you typically maintain a queue of events within the window and a running aggregate. As new events arrive, add them, and evict old events until the earliest timestamp is within the window, updating the running sum or count as you go. You should also call out time ordering assumptions and how you handle late events.

Tip: Explain the exact invariants your data structure maintains and the complexity per event. That signals you can design predictable, low latency code paths, which is a key Citadel hiring bar.
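A minimal sketch of the queue-plus-running-aggregate idea is shown below. The class name `RollingSum` and the window convention (events with timestamps in the half-open range from `t - window` exclusive to `t` inclusive) are assumptions, and the code assumes events arrive in timestamp order, so late events are not handled.

```python
from collections import deque

class RollingSum:
    """Running sum over the last `window_seconds` of an in-order event stream."""

    def __init__(self, window_seconds):
        self.window = window_seconds
        self.events = deque()  # (timestamp, value) pairs, oldest first
        self.total = 0.0

    def add(self, timestamp, value):
        # Invariant: self.total always equals the sum of values in self.events.
        self.events.append((timestamp, value))
        self.total += value
        cutoff = timestamp - self.window
        # Evict events that have fallen out of the window (at or before cutoff).
        while self.events and self.events[0][0] <= cutoff:
            _, old_value = self.events.popleft()
            self.total -= old_value
        return self.total

agg = RollingSum(window_seconds=10)
results = [agg.add(0, 1.0), agg.add(5, 2.0), agg.add(10, 3.0), agg.add(21, 4.0)]
```

Each event is appended once and evicted at most once, so the cost per event is amortized O(1) and memory is bounded by the number of events inside the window.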

-

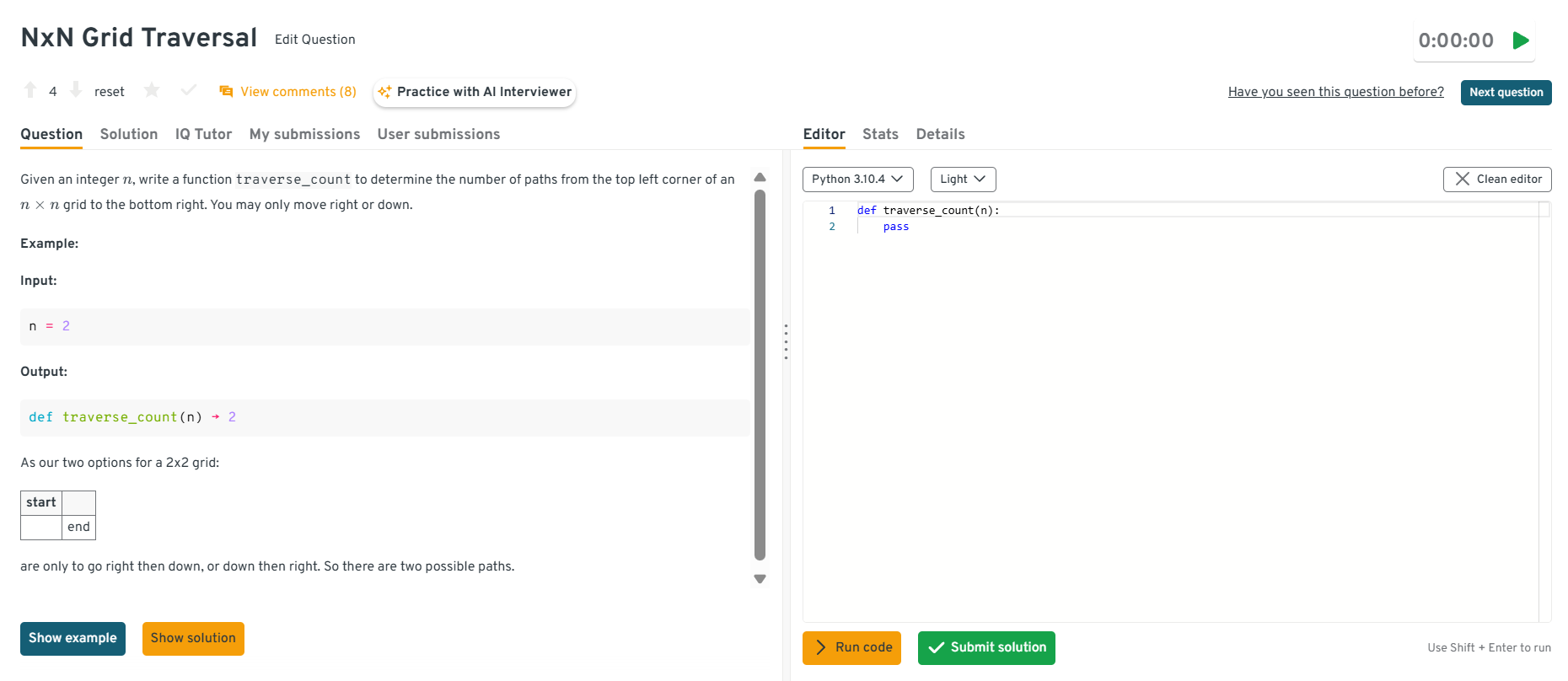

This tests fundamentals in dynamic programming and your ability to translate a recurrence into efficient code. Citadel asks this kind of question to see if you can build a clean solution, reason about complexity, and avoid unnecessary computation. The standard solution uses dynamic programming where dp[i][j] = dp[i-1][j] + dp[i][j-1] with base cases along the top row and left column. You can optimize space to one array because each row only depends on the current and previous values.

Tip: State why you choose a one-dimensional optimization and what complexity it achieves. That shows you actively manage memory and runtime, which matters in production data systems.
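The recurrence above can be sketched with a single array. Since the original prompt is not reproduced here, this example assumes the common reading of that recurrence: counting paths from the top-left to the bottom-right cell of a grid when only right and down moves are allowed. The function name `count_paths` is hypothetical.

```python
def count_paths(rows, cols):
    """Count right/down paths across a rows x cols grid using one array."""
    if rows <= 0 or cols <= 0:
        return 0
    # Base case: every cell in the top row has exactly one path.
    dp = [1] * cols
    for _ in range(1, rows):
        # dp[0] stays 1, which is the left-column base case.
        for j in range(1, cols):
            # Before the update dp[j] holds the value from the row above,
            # and dp[j - 1] already holds the value to the left in this row.
            dp[j] += dp[j - 1]
    return dp[-1]

print(count_paths(3, 7))
```

This keeps the O(rows × cols) running time of the two-dimensional table while reducing memory to O(cols).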

How would you optimize a Python function that processes millions of rows per minute?

This question tests performance instincts and whether you know how to optimize responsibly. Citadel cares because a lot of data engineering work comes down to tight loops, serialization overhead, and memory pressure. The right approach starts with profiling to identify hotspots, then removing Python-level loops when possible through vectorization or batching, reducing allocations, and using efficient data structures. You should also discuss I/O considerations, avoiding repeated parsing, and using chunking to keep memory stable, not just fast.

Tip: Describe how you would prove the optimization worked using profiling before and after. That signals engineering rigor and reduces the risk of “fast but wrong” changes in critical systems.
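A small harness like the one below is one way to back up that claim: it profiles a baseline and a candidate with the standard library's `cProfile` and checks that both return the same answer. The row format, the two `total_notional_*` functions, and the `profile_call` helper are all hypothetical, and whether the candidate is actually faster is something the profile has to show rather than something this sketch asserts.

```python
import cProfile
import io
import math
import pstats

def total_notional_baseline(rows):
    """Straightforward loop: parse each CSV row and accumulate price * qty."""
    total = 0.0
    for row in rows:
        fields = row.split(",")
        total += float(fields[1]) * float(fields[2])
    return total

def total_notional_candidate(rows):
    """Candidate rewrite: one generator pipeline fed to the built-in sum()."""
    parsed = (row.split(",") for row in rows)
    return sum(float(price) * float(qty) for _, price, qty in parsed)

def profile_call(func, *args):
    """Run func under cProfile; return its result and a short text report."""
    profiler = cProfile.Profile()
    result = profiler.runcall(func, *args)
    buf = io.StringIO()
    pstats.Stats(profiler, stream=buf).sort_stats("cumulative").print_stats(5)
    return result, buf.getvalue()

# Hypothetical input: "symbol,price,quantity" strings.
rows = [f"SYM{i % 10},{(i % 7) + 0.5},{i % 5 + 1}" for i in range(1000)]

baseline_total, baseline_report = profile_call(total_notional_baseline, rows)
candidate_total, candidate_report = profile_call(total_notional_candidate, rows)

# Correctness check first: a faster function that changes the answer is a bug.
same_answer = math.isclose(baseline_total, candidate_total)
```

Comparing the two reports side by side shows where time moved, and the `same_answer` check guards against the "fast but wrong" outcome.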

Debug a pipeline that slows down as data volume grows. Where do you start?

This question evaluates your debugging approach and whether you can stay systematic when systems degrade under load, which is a common Citadel scenario during spikes or backfills. Start by defining the symptom precisely, then instrument each stage to measure throughput, latency, and queue depth. Identify where the slowdown begins, test hypotheses like data skew, contention, or increased join cardinality, and reproduce the issue on a smaller slice if possible. A strong answer also includes checking for silent retries, backpressure, and downstream dependencies before changing code.

Tip: Walk interviewers through how you isolate the bottleneck step by step. This demonstrates calm operational thinking, which is exactly what Citadel expects during production incidents.
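To make "instrument each stage" concrete, here is a minimal sketch of a per-stage timer. The `StageTimer` class and the `parse` and `slow_join` stages are hypothetical stand-ins; a real pipeline would emit these numbers to a metrics system rather than keep them in memory.

```python
import time
from collections import defaultdict

class StageTimer:
    """Accumulate wall-clock time and row counts per pipeline stage."""

    def __init__(self):
        self.seconds = defaultdict(float)
        self.rows = defaultdict(int)

    def run(self, name, func, batch):
        start = time.perf_counter()
        output = func(batch)
        self.seconds[name] += time.perf_counter() - start
        self.rows[name] += len(batch)
        return output

    def throughput(self, name):
        """Rows per second for a stage, or None if nothing was timed."""
        elapsed = self.seconds[name]
        return self.rows[name] / elapsed if elapsed > 0 else None

    def slowest(self):
        return max(self.seconds, key=self.seconds.get)

def parse(batch):
    return [int(x) for x in batch]

def slow_join(batch):
    time.sleep(0.05)  # stand-in for a stage that degrades under load
    return [(x, x * 2) for x in batch]

timer = StageTimer()
parsed = timer.run("parse", parse, ["1", "2", "3"])
joined = timer.run("join", slow_join, parsed)
bottleneck = timer.slowest()
```

Running the same instrumentation at two data volumes and comparing per-stage throughput is a simple way to see which stage scales worse than linearly.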

-

This question tests careful handling of edge cases and whether you can implement a spec exactly without drifting. Citadel asks these because production data code often fails on “small” details like filtering rules and normalization. To solve it, you iterate through the string, skip spaces and discarded characters, maintain a frequency dictionary for the remaining characters, and build the annotated output as you go. You should clarify whether counting is case sensitive and how to treat punctuation, then implement accordingly.

Tip: Repeat the rules back before coding and test with a couple of tricky examples. That shows precision and reduces avoidable mistakes, which is a strong signal in Citadel’s correctness-first environment.

Watch next: Top 10+ Data Engineer Interview Questions and Answers

For deeper, Citadel-specific preparation, explore our curated set of 100+ data engineer interview questions with detailed answers. These problems map closely to the skills Citadel evaluates, including SQL under scale, distributed systems reasoning, pipeline reliability, and data modeling trade-offs. In the accompanying walkthrough, Interview Query founder Jay Feng breaks down more than 10 core data engineering questions, showing how to think through performance constraints, failure modes, and system design decisions in a way that mirrors Citadel’s interview expectations.

System Design and Data Architecture Interview Questions

Citadel’s system design rounds test whether you can build data systems that stay correct and predictable under real load, messy inputs, and partial failures. The prompts below are intentionally practical because Citadel data engineers are expected to make architecture decisions that impact latency, reliability, and downstream confidence in the data.

Design a real time data ingestion system for market data feeds.

This question tests throughput, ordering, and fault tolerance because Citadel cannot afford data gaps or unpredictable delays when markets move fast. A strong answer lays out producers, ingestion gateways, durable buffering, and stream processing with clear contracts around ordering and deduplication. You should describe how you handle bursty traffic, late messages, and replay, plus monitoring for lag, drop rates, and schema drift. Make it clear where you enforce idempotency and where you accept eventual consistency to keep latency stable.

Tip: Talk through what happens when a feed spikes, a consumer falls behind, or a partition leader fails. That shows production readiness and the ability to design systems that recover cleanly.
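The idempotency point can be illustrated with a consumer that tolerates duplicates and replays. This sketch assumes each feed stamps messages with a monotonically increasing sequence number, which is an assumption of the example rather than something every feed provides; the `DedupingConsumer` name is hypothetical.

```python
class DedupingConsumer:
    """Apply each (feed, seq) message at most once, even under replay."""

    def __init__(self):
        self.last_seq = {}  # feed name -> highest sequence number applied
        self.applied = []   # stand-in for the downstream write

    def handle(self, feed, seq, payload):
        # Anything at or below the high-water mark is a duplicate or a replay.
        # Note: this also drops genuinely out-of-order messages, so it only
        # fits feeds where per-feed ordering is guaranteed upstream.
        if seq <= self.last_seq.get(feed, -1):
            return False
        self.last_seq[feed] = seq
        self.applied.append((feed, seq, payload))
        return True

consumer = DedupingConsumer()
outcomes = [
    consumer.handle("feed_a", 1, "tick-1"),
    consumer.handle("feed_a", 2, "tick-2"),
    consumer.handle("feed_a", 2, "tick-2"),  # duplicate delivery
    consumer.handle("feed_b", 1, "tick-1"),  # separate feed, separate counter
    consumer.handle("feed_a", 1, "tick-1"),  # replay after a restart
]
```

In a real system the high-water mark would need to be persisted atomically with the write it protects; otherwise a crash between the two reintroduces duplicates.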

-

This question tests data modeling judgment and query performance. Citadel asks it because the same skills apply to modeling high volume event streams for research and monitoring. To answer, describe an append-only events table with a stable event schema, partitioning by date and possibly user or session, and clustering or indexing on common filters like event type and timestamp. Call out a separate dimension model for users, pages, or campaigns, and explain how you would support both raw event queries and aggregated rollups for common dashboards.

Tip: Name the top three queries you are optimizing for before you propose partitions and keys. That signals you design for real workloads, not generic best practices.

-

This question tests whether you can build a reliable ingestion workflow when the source is messy and timing is unpredictable. Citadel asks this because vendor and partner feeds often behave like this and you still need correctness guarantees. A strong design includes file arrival detection, checksum validation, schema validation, and a staging area that stores raw files immutably. Then you parse into a normalized format, run data quality checks, and load into curated tables with idempotent processing so reruns do not duplicate data. Include alerting for missing files and late deliveries.

Tip: Explain how you guarantee idempotency using file fingerprints and run IDs. This demonstrates operational maturity and prevents silent duplication, a common production failure mode.
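A minimal sketch of fingerprint-based idempotency is shown below, using a SHA-256 hash of the file contents as the fingerprint. The `FileLoader` class, the in-memory ledger, and the simple comma-separated format are assumptions for illustration; a production loader would keep the ledger in durable storage.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Content hash used to recognise a file regardless of its name."""
    return hashlib.sha256(data).hexdigest()

class FileLoader:
    """Load each distinct file exactly once, recording which run loaded it."""

    def __init__(self):
        self.loaded = {}  # fingerprint -> run_id that first loaded the file
        self.rows = []    # stand-in for the curated table

    def load(self, data: bytes, run_id: str) -> bool:
        fp = fingerprint(data)
        if fp in self.loaded:
            return False  # already processed; a rerun must not duplicate rows
        parsed = [line.split(",") for line in data.decode("utf-8").splitlines() if line]
        self.rows.extend(parsed)
        self.loaded[fp] = run_id
        return True

loader = FileLoader()
vendor_file = b"2024-01-02,XYZ,101.5\n2024-01-02,ABC,55.0\n"

first = loader.load(vendor_file, run_id="run-001")
rerun = loader.load(vendor_file, run_id="run-002")  # same bytes, new run
```

Keying on content rather than file name means a re-delivered file under a new name is still skipped, while a corrected file with different bytes is treated as new input.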

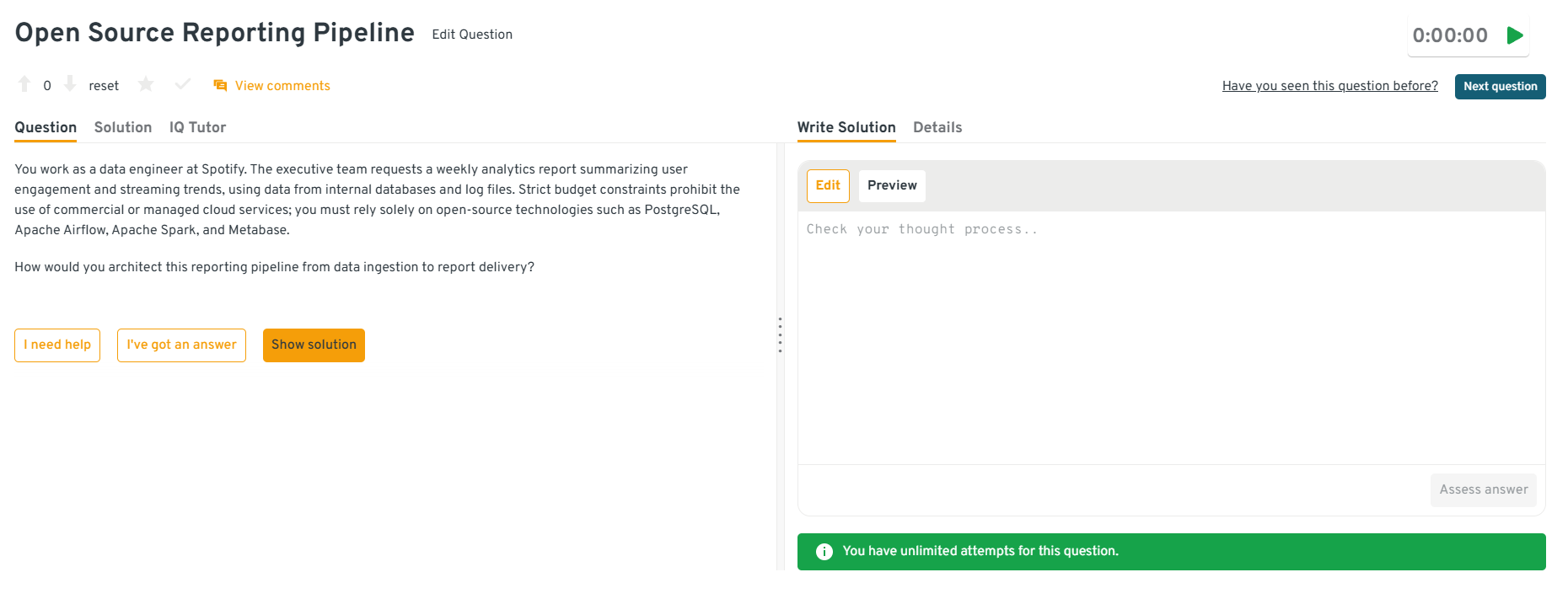

-

This question tests your ability to prioritize, simplify, and still deliver reliable reporting. Citadel asks variants of this to see if you can balance cost, complexity, and correctness without overengineering. A strong answer chooses a minimal stack, uses batch scheduling, stores raw and curated layers, and builds weekly aggregates that are incremental rather than full recomputes. You should describe how you keep compute predictable, how you validate data quality, and how you deliver outputs in a form analysts can trust. Make clear where you trade real time freshness for budget.

Tip: Explicitly state what you are not building and why, like real time streaming or complex orchestration. This shows judgment, which is a core signal Citadel evaluates in senior engineers.

Design a low latency analytics store for downstream consumers.

This question tests storage and query design because Citadel consumers often need predictable response times for analytics and decision support. To answer, start with the access patterns: what entities are queried, by which keys, and with what latency targets. Then propose a storage layer optimized for reads with appropriate partitioning, indexing, and caching, plus a write path that supports incremental updates without blocking reads. You should discuss consistency expectations, cache invalidation strategy, and observability for p99 latency and freshness. Tie every design choice to a concrete query workload.

Tip: Give one example query and walk through exactly how it is served end to end. That demonstrates you design from workloads and latency budgets, not from tool preferences.

Want to master the full data engineering lifecycle? Explore our Data Engineering 50 learning path to practice a curated set of data engineering questions designed to strengthen your modeling, coding, and system design skills.

Behavioral Interview Questions

Citadel’s behavioral interviews focus on how you think and act when systems matter, stakes are high, and pressure is real. Interviewers are listening for ownership, judgment, and how you learn from mistakes, not rehearsed leadership language. Your answers should reflect real responsibility, clear decision-making, and the ability to operate calmly when things break.

Tell me about a time a data pipeline you owned failed in production.

This question evaluates accountability and how you respond when systems break, which is unavoidable in Citadel’s environment. Interviewers want to see whether you take ownership, diagnose issues methodically, and put durable fixes in place.

Sample answer: A batch pipeline I owned began delivering incomplete data after an upstream schema change, which impacted a downstream analytics report used daily by researchers. I identified the issue within 30 minutes by comparing record counts against historical baselines, paused the pipeline, and backfilled corrected data within two hours. After the incident, I added schema validation checks and automated alerts on record volume, which prevented similar failures and reduced detection time from hours to minutes.

Tip: Focus on what you changed permanently after the failure. Citadel values engineers who reduce future risk, not just restore service.

Describe a technical decision you made that involved significant trade-offs.

This question tests judgment under constraints, a daily reality at Citadel where latency, correctness, and complexity often compete. Interviewers want to hear how you weighed options and accepted consequences.

Sample answer: I had to choose between a more complex streaming solution with strict ordering guarantees and a simpler batch approach that introduced slight delays. Given the downstream use case, I chose the batch design to reduce operational risk and cut failure rates by 40 percent. I documented the latency trade-off clearly and set thresholds to revisit the decision if requirements changed, which ultimately avoided unnecessary system complexity.

Tip: Explicitly name the downside of your choice. Acknowledging trade-offs signals senior-level judgment.

How would you convey insights and the methods you use to a non-technical audience?

This question assesses communication clarity and whether you can drive alignment without overwhelming stakeholders. Citadel engineers frequently work with researchers and decision-makers who care about outcomes, not implementation details.

Sample answer: When presenting pipeline results to non-technical stakeholders, I start with the decision the data supports, then explain only the assumptions that affect confidence. For example, I summarized a data freshness issue as a potential timing risk rather than a schema mismatch, which helped the team adjust expectations quickly. This approach reduced follow-up questions and led to faster agreement on next steps.

Tip: Lead with impact, not implementation. That shows you understand how to translate engineering work into actionable decisions.

Why should we hire you as a data engineer at Citadel?

This question evaluates self-awareness and alignment with Citadel’s engineering culture. Interviewers want a grounded answer tied to evidence, not generic strengths.

Sample answer: You should hire me because I’ve consistently owned data systems end to end, from ingestion to monitoring, in environments where correctness and timing mattered. In my last role, I reduced pipeline failure rates by 35 percent and improved data freshness guarantees by tightening validation and alerting. I’m comfortable making trade-offs under pressure and taking responsibility when systems impact real outcomes, which aligns closely with how Citadel operates.

Tip: Anchor your answer in outcomes you personally owned. Citadel looks for engineers who deliver and take responsibility, not general contributors.

How do you ensure long term reliability of systems you build?

This question evaluates whether you think beyond initial delivery. Citadel values engineers who design systems that stay stable over time, even as usage grows.

Sample answer: I focus on reliability from day one by defining clear success metrics, adding monitoring tied to data quality and latency, and documenting operational playbooks. On one pipeline, this approach cut incident frequency by half over six months and made on-call response faster because failure modes were well understood. I also schedule periodic reviews to reassess assumptions as data volume changes.

Tip: Emphasize proactive maintenance over reactive fixes. Long-term reliability thinking is a strong signal of ownership at Citadel.

Looking for hands-on problem-solving? Test your skills with real-world challenges from top companies. Ideal for sharpening your thinking before interviews and showcasing your problem solving ability.

What Does a Citadel Data Engineer Do?

A Citadel data engineer builds and maintains the data infrastructure that feeds trading, risk, and research systems across the firm. The role focuses on designing pipelines that ingest high volume market and internal data, process it with strict correctness guarantees, and deliver it with predictable latency to downstream consumers. Data engineers operate close to production, where reliability, performance, and data quality are not abstract goals but daily requirements tied directly to firm outcomes.

| What They Work On | Core Skills Used | Tools and Methods | Why It Matters at Citadel |
|---|---|---|---|
| Real-time data ingestion | Streaming systems, concurrency, backpressure handling | Kafka-style pipelines, custom ingestion services | Ensures trading and risk systems receive fresh market data with minimal delay |
| Batch and hybrid pipelines | SQL optimization, ETL design, dependency management | Scheduled jobs, incremental processing, backfills | Powers research workflows and historical analysis without data gaps |
| Data quality and validation | Schema design, anomaly detection, defensive programming | Validation checks, reconciliation jobs, alerts | Prevents silent data issues from propagating into critical decisions |
| Performance optimization | Profiling, memory management, algorithmic efficiency | Query tuning, parallelization, caching strategies | Reduces latency and cost at scale where inefficiencies compound quickly |
| Reliability and monitoring | Failure analysis, observability, incident response | Metrics, logging, automated recovery | Keeps systems stable under market volatility and traffic spikes |

Tip: When discussing past work, focus on how you measured and improved pipeline reliability or latency over time. This signals engineering judgment and ownership, which Citadel interviewers value more than one-off technical wins.

How to Prepare for a Citadel Data Engineer Interview

Preparing for a Citadel data engineer interview requires a different mindset than standard data engineering prep. You are preparing for a role where data systems operate under strict latency, correctness, and reliability constraints, often during periods of market stress. Strong preparation blends deep technical fundamentals with sound engineering judgment, clear communication, and an understanding of how data infrastructure directly supports trading and research workflows.

Read more: How to Prepare for a Data Engineer Interview

Below is a focused framework to help you prepare effectively without repeating the question specific material already covered.

Build intuition for latency-sensitive data systems: Citadel data engineers are expected to think in milliseconds, not minutes. Practice reasoning about how ingestion, serialization, storage, and query layers contribute to end-to-end latency. Review how small inefficiencies compound at scale and how to identify the slowest stage in a pipeline quickly.

Tip: Be ready to explain how you would measure and improve latency in an existing system. This shows performance awareness and production readiness, which strongly differentiates candidates.
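One way to practice this is to instrument a toy pipeline and see which stage dominates. The sketch below is a minimal illustration, not a description of Citadel's tooling: `StageTimer`, `run_pipeline`, and the three stage names are hypothetical, and the `time.sleep` call is a stand-in for a slow storage write.

```python
import json
import time
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock seconds per named pipeline stage (hypothetical helper)."""

    def __init__(self):
        self.totals = {}

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record elapsed time even if the stage raises.
            elapsed = time.perf_counter() - start
            self.totals[name] = self.totals.get(name, 0.0) + elapsed

    def slowest(self):
        """Return the name of the stage with the largest accumulated time."""
        return max(self.totals, key=self.totals.get)


def run_pipeline(records, timer):
    """Toy pipeline: serialize, 'store', then read back. Stages are illustrative only."""
    with timer.stage("serialize"):
        payloads = [json.dumps(r) for r in records]
    with timer.stage("store"):
        time.sleep(0.02)  # assumption: stand-in for a slow write to storage
        stored = list(payloads)
    with timer.stage("query"):
        return [json.loads(p) for p in stored]


timer = StageTimer()
run_pipeline([{"symbol": "ABC", "price": 10.0}] * 10, timer)
for name, seconds in timer.totals.items():
    print(f"{name}: {seconds * 1000:.2f} ms")
print("slowest stage:", timer.slowest())
```

In an interview answer, the same idea scales up to per-stage latency histograms and percentiles rather than simple totals, since tail latency often matters more than the average.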

Practice designing for failure first, not success: Many Citadel systems are designed around what happens when data is late, duplicated, or partially missing. Prepare by thinking through failure modes before ideal workflows. Focus on backfills, replay strategies, alerting thresholds, and safe recovery mechanisms.

Tip: During interviews, explicitly describe how your design behaves when upstream data breaks. This signals operational maturity and ownership, not just design fluency.
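To make the failure cases concrete, the following sketch handles duplicated and late events in a single ingestion step. It is an illustrative assumption rather than a production design: `IngestState`, `ingest`, the integer timestamps, and the `allowed_lateness` threshold are all hypothetical, and a real system would persist this state instead of holding it in memory.

```python
from dataclasses import dataclass, field


@dataclass
class IngestState:
    """In-memory ingestion state (hypothetical; real systems persist this)."""
    seen_ids: set = field(default_factory=set)
    watermark: int = 0  # highest event timestamp accepted so far
    accepted: list = field(default_factory=list)
    late: list = field(default_factory=list)  # candidates for a backfill job


def ingest(state, event, allowed_lateness=5):
    """Apply one event idempotently. Returns 'accepted', 'duplicate', or 'late'."""
    event_id, ts = event["id"], event["ts"]
    if event_id in state.seen_ids:
        return "duplicate"  # replaying the same event never changes state
    state.seen_ids.add(event_id)
    if ts < state.watermark - allowed_lateness:
        state.late.append(event)  # too old for the live path, handle via backfill
        return "late"
    state.accepted.append(event)
    state.watermark = max(state.watermark, ts)
    return "accepted"


state = IngestState()
events = [
    {"id": 1, "ts": 100},
    {"id": 2, "ts": 101},
    {"id": 1, "ts": 100},  # duplicate delivery
    {"id": 3, "ts": 90},   # arrives well behind the watermark
]
for e in events:
    print(e["id"], ingest(state, e))
```

Because duplicates are rejected by ID, replaying the whole input after a failure leaves the accepted set unchanged, which is the property that makes replay a safe recovery mechanism. The trade-off is the memory cost of tracking seen IDs, which a real design would bound with a retention window.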

Refine your ability to explain trade-offs clearly: Interviewers care less about perfect solutions and more about how you choose between competing constraints like latency versus completeness or complexity versus reliability. Practice articulating why you made a decision and what you would revisit with more time or data.

Tip: State one conscious trade-off in every answer. This demonstrates judgment, which is a core signal senior Citadel engineers look for.

Prepare structured narratives for past systems you owned: Review your past data engineering projects and organize them around problem context, constraints, decisions, outcomes, and lessons learned. Emphasize long term ownership, not just initial delivery. Citadel values engineers who think about systems over time.

Tip: Highlight one mistake you caught early or corrected later. This shows accountability and a learning mindset, both critical in high pressure environments.

Simulate full interview loops under realistic conditions: Practice moving between SQL reasoning, coding, system design, and behavioral explanations in a single session. Time pressure and context switching are intentional parts of the interview. Mock interviews help surface where your explanations lose clarity.

Use Interview Query’s mock interviews and coaching sessions to rehearse Citadel style scenarios with targeted feedback.

Tip: After each mock, write down one answer you would shorten or restructure. Clear, concise communication is often what separates strong candidates from average ones.

Struggling with take-home assignments? Get structured practice with Interview Query’s Take-Home Test Prep and learn how to ace real case studies.

Salary and Compensation for Citadel Data Engineers

Citadel offers some of the most competitive compensation packages in the industry for data engineers, reflecting the performance critical nature of its systems and the direct impact engineering decisions have on firm outcomes. Compensation is structured to reward engineers who can design low latency data pipelines, maintain exceptional reliability, and operate effectively under real market pressure. Total pay typically includes a strong base salary, annual performance bonus, and meaningful discretionary incentives. Most candidates interviewing for data engineering roles are evaluated at mid level or senior bands, particularly if they have experience with distributed systems and production data infrastructure.

Read more: Data Engineer Salary

Tip: Confirm your target level with your recruiter early in the process since level strongly determines compensation range and performance expectations at Citadel.

Citadel Data Engineer Compensation Overview (2026)

| Level | Role Title | Total Compensation (USD) | Base Salary | Bonus | Equity / Incentives | Signing / Relocation |
|---|---|---|---|---|---|---|
| Mid Level | Data Engineer | $180K–$260K | $140K–$180K | Performance based | Discretionary incentives | Offered selectively |
| Senior | Senior Data Engineer | $230K–$350K+ | $160K–$210K | Above target possible | Larger discretionary awards | Common for senior hires |
| Staff | Staff / Lead Data Engineer | $300K–$450K+ | $180K–$240K | High performance driven | Significant incentives | Frequently offered |

Note: These estimates are aggregated from publicly available data on Levels.fyi, Glassdoor, TeamBlind, industry compensation reports, and Interview Query’s internal salary benchmarks.

Tip: Pay close attention to bonus structure at Citadel. Variable compensation can be a substantial portion of total pay and is closely tied to impact and reliability of the systems you own.

Negotiation Tips That Work for Citadel

Negotiating compensation at Citadel is most effective when you approach the conversation with data, clarity, and professionalism. Recruiters expect candidates to understand market benchmarks and to articulate their value through measurable engineering impact.

- Confirm your level early: Citadel’s leveling significantly affects base salary and bonus potential. Clarifying scope and expectations upfront prevents misalignment later in the process.

- Anchor with credible benchmarks: Use trusted sources like Levels.fyi and Interview Query salary data to frame expectations. Tie your ask to concrete outcomes such as latency reduction, reliability improvements, or large scale pipeline ownership.

- Understand location and team impact: Compensation can vary by office and business unit. Ask how team scope and system criticality influence bonus expectations.

Tip: Ask for a full breakdown of compensation, including base, bonus targets, payout variability, and any signing incentives. Understanding how performance translates into pay signals maturity and helps you negotiate from an informed position.

FAQs

How long does the Citadel data engineer interview process take?

Most candidates complete the Citadel data engineer interview process within three to five weeks. Timelines can extend if multiple teams are evaluating your profile or if additional system design calibration is needed. Recruiters typically share clear next steps after each round.

Does Citadel use online coding assessments for data engineers?

Citadel does not rely heavily on generic online assessments. Most candidates move directly into live technical screens focused on SQL, coding, and system design. Early career roles may include lighter screening exercises, but interviews emphasize real-time problem-solving.

How important is low latency or finance experience for Citadel?

Direct finance experience is not required, but experience with low latency, high reliability systems is highly valued. Candidates who have worked on performance sensitive pipelines or production infrastructure tend to ramp up faster and stand out during interviews.

What programming languages should I prioritize for Citadel data engineering roles?

Python and SQL are essential for most roles, with Java or C++ being valuable depending on the team. Citadel evaluates fundamentals over specific languages, so clarity, correctness, and performance awareness matter more than syntax familiarity.

How difficult are Citadel’s SQL interview questions?

Citadel’s SQL questions are challenging and scenario driven. Expect time series logic, window functions, deduplication, and data quality checks. Interviewers focus on correctness, scalability, and how you reason through imperfect data.
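As a practice example of this style of question, the sketch below combines deduplication and simple time series logic using window functions, run through Python's built-in `sqlite3` module. The `ticks` table, its columns, and the sample rows are invented for illustration, and the query assumes a SQLite build with window function support (version 3.25 or later).

```python
import sqlite3

# Keep only the most recently ingested row per (symbol, ts), then compute
# the price change from the previous tick for each symbol.
DEDUP_QUERY = """
WITH ranked AS (
    SELECT symbol, ts, price,
           ROW_NUMBER() OVER (
               PARTITION BY symbol, ts
               ORDER BY ingested_at DESC
           ) AS rn
    FROM ticks
)
SELECT symbol, ts, price,
       price - LAG(price) OVER (PARTITION BY symbol ORDER BY ts) AS price_change
FROM ranked
WHERE rn = 1
ORDER BY symbol, ts
"""


def latest_ticks_with_change(conn):
    """Return deduplicated ticks with the per-symbol change from the prior tick."""
    return conn.execute(DEDUP_QUERY).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE ticks (symbol TEXT, ts INTEGER, price REAL, ingested_at INTEGER)"
)
conn.executemany(
    "INSERT INTO ticks VALUES (?, ?, ?, ?)",
    [
        ("ABC", 1, 10.0, 100),
        ("ABC", 1, 10.5, 105),  # later correction of the same tick
        ("ABC", 2, 11.0, 110),
        ("XYZ", 1, 20.0, 100),
    ],
)
for row in latest_ticks_with_change(conn):
    print(row)
```

When walking through a query like this, it helps to say what happens with imperfect data: here the latest ingested row wins, and the first tick per symbol has no prior price, so its change is NULL rather than zero.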

Are system design interviews required for all data engineer levels?

Yes, system design is evaluated at all levels, though depth increases with seniority. Mid level candidates focus on core data flow and failure handling, while senior candidates are expected to reason about scalability, trade-offs, and long term reliability.

How much weight does the behavioral interview carry at Citadel?

Behavioral interviews carry significant weight because Citadel values ownership and judgment. Interviewers look for evidence that you can handle pressure, learn from failures, and make sound decisions when systems impact real outcomes.

Can I interview for multiple Citadel teams at the same time?

Yes, candidates are often considered by multiple teams if their skill set fits. Team matching typically happens later in the process, and strong performance can open opportunities across different data platforms or business units.

Become a Citadel Data Engineer with Interview Query

Preparing for the Citadel data engineer interview means developing strong fundamentals, sharp system design judgment, and the ability to reason clearly about performance, reliability, and failure under pressure. By understanding Citadel’s interview structure, practicing real-world SQL, data pipeline design, and production focused architecture scenarios, and refining how you communicate trade-offs, you can approach each stage with confidence.

For targeted preparation, explore Interview Query’s full question bank, practice live reasoning with the AI Interviewer, or work one on one with an experienced mentor through Interview Query’s Coaching Program. Each option helps you refine your approach and stand out in Citadel’s highly selective data engineering hiring process.
