Data Engineer Case Study Interview Questions + Guide in 2025

Getting Started with Data Engineer Case Studies

Case study interviews for data engineers are one of the most challenging stages of the interview process.

During a data engineer case study interview, candidates are asked scenario-based questions that deal with architecture or a data engineering problem. They then have to brainstorm solutions and walk the interviewer through a hypothetical design and build the solution.

Data engineer interviews test a variety of skills. They provide insights into your technical abilities, especially in ETL architecture and design. But they’re also used to assess your problem-solving ability, communication style, command of design best practices, and ability to think of potential trade-offs to a solution.

How do you go about solving a data engineer case study? We’ve highlighted what you can expect, as well as provided sample data engineer case study interview questions in this overview guide.

How Do Data Engineering Case Studies Work?

During the data engineer case study interview, you’ll be provided a scenario-based problem. Typically, these questions focus on architecture and require you to develop a solution and walk the interviewer through how you would design and build the solution.

For example, you might be asked the following:

“You’re tasked with building a data pipeline for POS data from a store like Walmart. This data will be used by data scientists. How would you do it?”

With a case study question, the first step is to ask clarifying questions. You should gather as much information as you need. Then, you would propose your solution.

A few tips for data engineer case interviews include:

- Problem-Solving Approach: When you’re presented with a problem, interviewers want to know the steps you take to solve it.

- Thoroughness: Before you jump into an answer, get clarification. You should understand exactly what they’re looking for. Then, you can jump into an answer.

- Ability to Communicate: Think out loud and walk the interviewer through the process. Say exactly why you would make a particular choice.

- Design Patterns: With architecture problems, you should have a strong grasp of design patterns, as well as the technologies and products that can be used to solve the problem.

- Forward Thinking: Every data engineering solution includes trade-offs. Interviewers want to see that you can assess a solution in terms of pros and cons, as well as potential weaknesses of a solution.

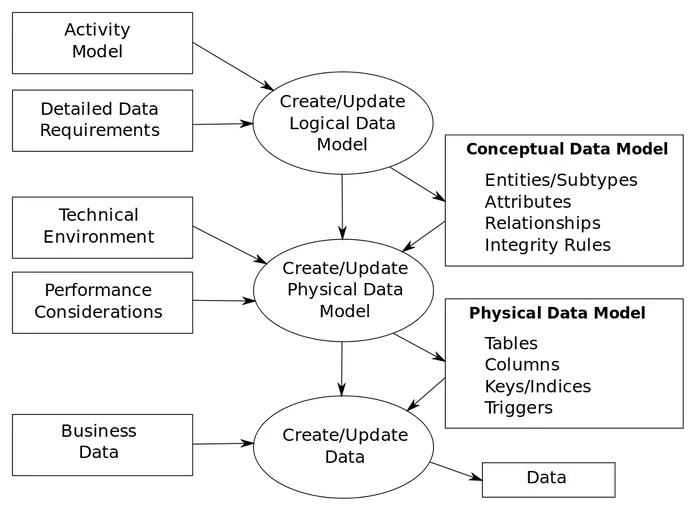

Ultimately, these questions focus on a range of subjects including database design, data warehousing, ETL pipelines and data modeling.

Data Engineer Case Study Example

Let’s go through an example data engineer case study.

The majority of case studies for data engineers are multi-faceted. They build on top of one another. For example, you might start with a basic question about database design, and then progress to more challenging questions about your initial design.

This makes data engineer case interviews so difficult to pass, especially if the baseline solution is wrong.

Here’s a sample case question:

You’re tasked with building a notification system for a simple Reddit-style app. What would the backend and data model look like?

Step 1: With Engineering Case Studies, Start with a Hypothesis

Hint: Always start with a clarifying question. For our sample, we might want to know the goals of the notification system, what type of notification they’ll be, etc.

First, define how you’ll want to think about notifications. Notifications have two general types:

- Trigger-based notifications: For example, let’s say a user gets a comment reply on one of their posts. We want to send an email notification to the user in real-time.

- Scheduled notifications: The app wants to push engagement and chooses to send out targeted content notifications to users.

The key difference here is that one is event-triggered and the other is user-triggered.

Step 2: Describe the Architecture & Data Model

Next, you can walk the interviewer through how you would design a simple database for notifications. This database might include two tables:

Notifications: This provides details about different types of notifications.

- Name: the name of the notification

- Type: the type of the notification; in this case, “user” or “event”

Notification Metrics: This would provide metrics about the notifications

- Time sent

- Events: like reads, clicks, deliveries, etc.

All notifications that get triggered would be sent to a task manager to run the task asynchronously from the actual application. We don’t want to log these notifications within the app given that if we were to suddenly have to send an influx of notifications, it could bring down the application.

When we log the notification, we could insert a value into the notification_metrics table to record a value

Step 3: Consider Design Implications

Let’s say that we also want to track reads and opens and follow-through rates.

Would we update the notification_metrics table in real-time?

If yes, we could set up a webhook to run an update command. However, we need to verify the performance of an update in the notifications_metrics table versus just an insert into another events-style table.

Let’s assume for design reasons that we mainly want to do an insert.

What’s the refresh cadence for the ETL to pipe-out notification analytics?

In this specific cadence, we have to make some assumptions about how important it is that notifications analytics are checked. More often than not, when handling a huge amount of data, we care just the same about data quality as we do performance.

Ultimately, more questions would come up, like “what if notifications are delayed? How would we come up with a backfill?” and others. Plus, the interviewer will likely ask questions along the way.

Your goal should be to methodically walk the interviewer through a solution, discuss the trade-offs, make assumptions, and ask questions if needed.

Database Design Case Study Example

Here’s another similar data engineer case study question focusing on database design:

Design a database to represent a Tinder-style dating app. What does the schema look like and what are some optimizations that you think we might need?

Step 1: Make Assumptions About the Case Question

First, you want to approach this problem from the product perspective. It’s a dating app, and we have to know what user functionalities we must design around.

It’d be good to start by listing some of the key capabilities of a Tinder-style app:

- Onboarding: User opens the app, adds preferences, adds pictures, and starts swiping on users.

- Matching: If the user matches with another user, we notify them and create a messaging system.

- Messaging: Users can message other users, but only if both have been matched. Users can also leave conversations at any time.

Next, we should identify if we have specific feature goals that we need to account for within engineering or system design. For example, if we look at swiping, we might ask:

- Do we care about how advanced the matching algorithm works? Do we need to account for a similarity algorithm or could we just do a dumb matching algorithm?

- Which preferences by users are hard versus soft?

- For example, distance filters and gender filters are usually hard, while race and age could be softer.

When you make assumptions, be sure to ask if you’re on the right track.

The interviewer will likely provide additional information to help refine your solution like this:

Let’s say the matching will be based on hard filters for now, but you should design a database that makes it easier to increase the complexity of our matching algorithm.

Your priorities should include efficiency for users to quickly onboard and retrieve matches.

Step 2: Propose a Database Design

We will need a range of tables to represent users, swipes, and messages between them.

| Users |

|---|

| id |

| name |

| location_fk |

| gender |

| ethnicity |

| bio |

| profile_picture |

| Swipes |

|---|

| id |

| user_a |

| user_b |

| created_at |

| swipe_state |

| Messages |

|---|

| id |

| created_at |

| body |

| sender_id |

| receiver_id |

| Locations |

|---|

| Id |

| City |

| State |

| Country |

| Zipcode |

| Centroid_long |

| Centroid_lat |

Step 3: With Database Design Case Studies, Consider the Design Implications

Let’s walk through the design.

The swipes table is the most challenging one. We know we have to make sure that we save each state of each user. We have two users, user_a and user_b, that we can denote by whichever user swiped on the other user first.

But since this table will be massive and a log of the actions that follow each user’s swipe, we need to make sure we save each specific state and action from the two-user combination.

Therefore, the states are:

- User_a swipes right - User_b swipes left

- User_a swipe left - User_b swipes right

- User_a swipes right - User_b swipes right

- User_a swipes left - User_b swipes left

Each of these states could be represented by a numerical categorization in the database for simplicity and space saving. And not only could we have these states but we could also have states for when the other user hasn’t swiped yet.

For example, if User A swipes right on User B, but User B hasn’t swiped on User A, then we would save a state that could be updated later on.

This relational format allows us to easily query for common metrics around total user matches, the total number of swipes per user, and the number of matches per swipe ratio.

Additionally, we separated the locations table from just the user profile.

This is given the definite need for improving matching with hard filter set. While something like gender could be pretty easily done with a simple query, computing geo-location coordinates might be difficult in SQL. But can be done if we can at least narrow the result set down to the zip code level.

Step 4: Consider Trade-Offs and Optimizations

Lastly, let’s look at database engineering optimizations that we can perform.

The biggest beneficiary of optimizations would likely be increasing the speed and performance of the locations and swipes table.

While we can easily add an index to the locations table on something like zip code (US-only assumptions), we can’t add one to the swipes table given the size of that table.

One option is to implement a sharded design for our database. While indexing does a table copy and rearranges records to allow you to read off of a table sequentially, sharding gives you capability to add multiple nodes where the specific record you want is only on one of those nodes. This allows for a more bounded result in terms of retrieval time.

Lastly, the ultimate optimization we can make is to remove the entire swipes table from the database and treat the swipes we get as a log. We would scale this out by using a streaming service.

More Data Engineer Case Study Questions

Try these additional case questions, covering database design, data architecture and data debugging:

1. Database Design Case Study: Music Database

Let’s say you work at Spotify.

We want to design a relational database for storing metadata about songs.

We want to include metadata like song title, song length, the date the song was added to the platform, artist, album, track number (on the album), the song’s release year, and its genre.

How would you go about designing this database?

2. Data Architecture Case Study: Click Data Schema

How would you create a schema to represent client click data on the web?

3. Data Engineer Case Study: Crossing Bridges

Let’s say we want to run some data collection on the Golden Gate Bridge.

What would the table schema look like if we wanted to track how long each car took coming into San Francisco to enter and exit the bridge? Let’s say we want to track additional descriptives like the car model and license plate.

4. Data Engineer Case Study: Data Debugging

Suppose you are analyzing auto insurance data. You find that in the demographic information for all insurance clients the marriage attribute column is marked TRUE for all customers.

How would you debug what happened? What data would you look into and how would you find out who is actually married and who is not?

5. Data Engineering Case Study: Decreasing Tech Debt

Suppose that you work at a fintech startup. Recently, management has raised the issue of the increased amount of developer hours needed to implement relatively simple features, citing tech debt as the primary cause. How would you go around decreasing tech debt and decreasing developer turnaround time?

Prep for Your Data Engineering Interview

In addition to data engineer case study questions, your interview will likely include questions on SQL, data structures and algorithms, data modeling and database design. See helpful sample questions from our guide: Top Data Engineering Interview Questions.