Walmart Data Scientist Interview Guide (2025 Process + Questions)

Introduction

Preparing for a Walmart data scientist interview in 2025 means stepping into one of the most ambitious and data-rich environments in retail. Walmart is not just collecting data—it is turning it into action through AI-powered tools that empower over 1.5 million associates, generative AI models like Wallaby, and predictive systems that drive everything from personalized recommendations to optimized delivery routes. As a data scientist, you will directly contribute to innovations like immersive commerce and inventory systems that have already reduced stockouts by 16 percent and boosted online sales by up to 15 percent. You are not just filling a role. You are helping shape the future of a company using data to redefine global retail.

Role Overview & Culture

As a Walmart data scientist, you will work at the intersection of massive-scale data and real-world impact. Your responsibilities span developing machine learning models for personalization, pricing, and forecasting, as well as extracting insights from structured and unstructured data to inform strategic decisions. You will also collaborate with engineering, product, and business teams to deploy solutions across Walmart’s retail ecosystem, powering everything from store operations to customer experience. Expect to work with billions of rows of data and experiment using A/B testing frameworks to validate business outcomes. The culture values collaboration and innovation, giving you space to learn and experiment, though timelines and time zones can be demanding. Still, you are contributing to the future of retail on an extraordinary scale.

Why This Role at Walmart?

Choosing a data scientist role at Walmart means stepping into a high-impact, high-reward position where your work directly influences millions of customers and associates. You will earn a competitive total compensation package—often exceeding $290,000 for experienced roles—and gain access to performance bonuses, stock options, and generous benefits like full health coverage and paid parental leave. Beyond pay, Walmart supports your long-term growth through programs like Live Better U, which covers 100% of education costs. If you are preparing for a Walmart data science interview, you are already exploring a path that offers stability, scale, and opportunity. For those eyeing a Walmart senior data scientist interview, the rewards only grow, with leadership tracks and technical advancement baked into your career trajectory.

What Is the Interview Process Like for a Data Scientist Role at Walmart?

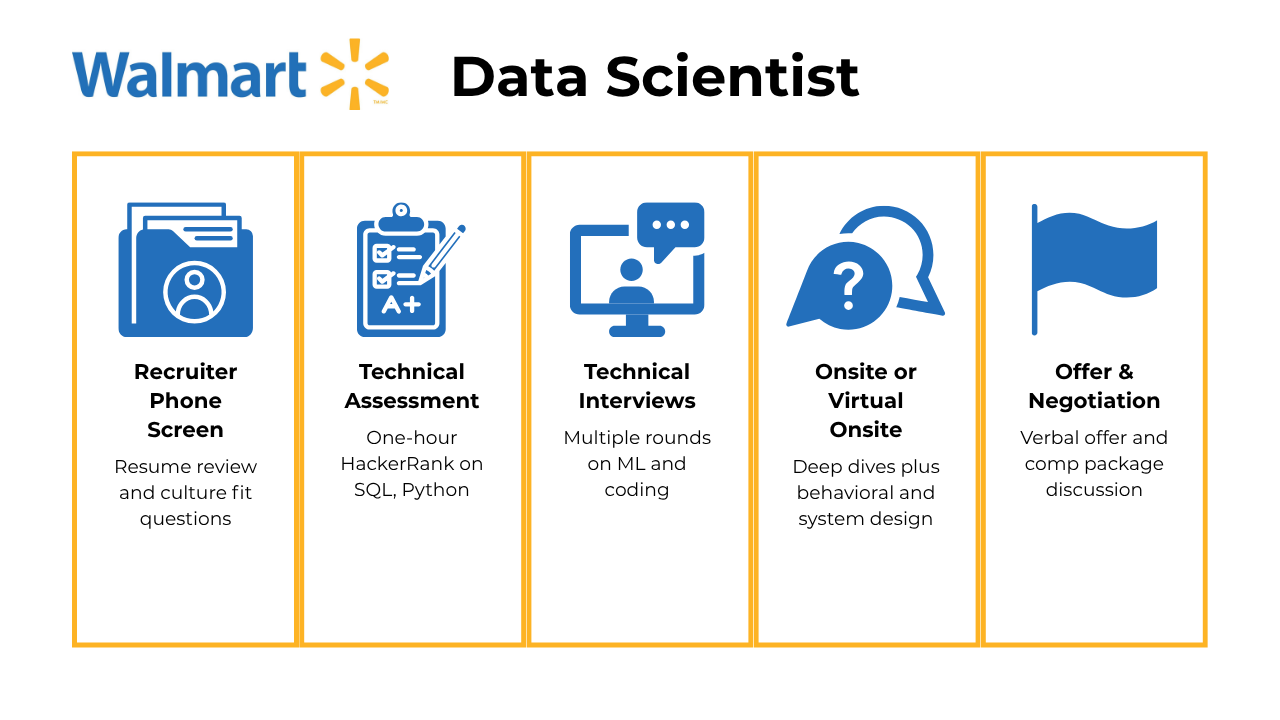

The Walmart data scientist interview process is a comprehensive multi-stage evaluation designed to assess both technical competencies and cultural fit. The entire process typically spans 4-8 weeks and consists of five primary stages:

- Recruiter Phone Screen

- Technical Assessment

- Technical Interviews

- Onsite or Virtual Onsite

- Offer & Negotiation

Recruiter Phone Screen

The initial recruiter phone screen is a 30-45 minute conversation with HR representatives designed to assess your background, experience, and basic fit for the role. The recruiter will discuss your resume, highlighting relevant projects and accomplishments, while also asking about your motivations for joining Walmart and expectations from the role. This stage may include simple technical questions related to your data science experience, such as your familiarity with machine learning algorithms or SQL. Some candidates report a secondary culture fit interview with a hiring manager immediately following the recruiter screen, which focuses on values alignment and behavioral fit. Preparation involves researching Walmart’s business model and being ready to articulate how your skills align with their data-driven objectives.

Technical Assessment

Following the phone screen, candidates complete a technical assessment, typically conducted through HackerRank. This one-hour coding challenge consists of 1-2 questions of medium to hard difficulty, focusing on Python and SQL proficiency, data structures and algorithms, and concepts like arrays and binary search. For data science roles specifically, the assessment includes SQL queries, statistical analysis problems, and may feature a take-home assignment involving time series forecasting or machine learning problems. Some candidates encounter case studies during this phase, such as predicting customer behavior from shopping history or implementing algorithms like TF-IDF for web page analysis. The assessment evaluates your ability to manipulate and analyze data effectively while demonstrating coding proficiency under time constraints.

Technical Interviews

The technical interview stage comprises multiple rounds, typically 2-3 sessions of approximately 30 minutes each. These interviews are conducted by Walmart data scientists and engineers who evaluate your knowledge in statistics, coding, machine learning concepts, and domain expertise. Common topics include bias-variance tradeoff, overfitting prevention, ensemble methods comparison (random forest vs. gradient boosting), handling imbalanced datasets, and model evaluation techniques. Coding components often involve medium-level problems such as finding the kth largest element in an array or SQL session stitching. Candidates may also face ML modeling scenarios where they must design end-to-end solutions, select appropriate algorithms, handle categorical variables, and discuss evaluation metrics. This stage requires demonstrating both technical depth and the ability to communicate complex concepts clearly.
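As an illustration of the kth-largest coding problem mentioned above, here is a minimal sketch using a size-k min-heap. It is one common approach (O(n log k) time), not necessarily the solution an interviewer expects, and the function name `kth_largest` is an assumption made for this example.

```python
import heapq


def kth_largest(nums, k):
    """Return the kth largest element (k=1 is the maximum); duplicates count."""
    if not 1 <= k <= len(nums):
        raise ValueError("k must be between 1 and len(nums)")
    heap = []  # min-heap holding the k largest values seen so far
    for value in nums:
        if len(heap) < k:
            heapq.heappush(heap, value)
        elif value > heap[0]:
            # Replace the smallest of the current top-k with the larger value.
            heapq.heapreplace(heap, value)
    return heap[0]  # smallest of the k largest values


print(kth_largest([3, 2, 1, 5, 6, 4], 2))
```

Sorting the array and indexing also works; the heap version is usually preferred when k is much smaller than the array length.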

Onsite or Virtual Onsite

The final Walmart interview stage consists of 3-5 one-hour rounds conducted virtually with various team members. This comprehensive evaluation includes multiple technical rounds covering machine learning concepts, coding challenges, ML modeling, data engineering, and system design. Candidates face behavioral interviews assessing leadership capabilities, conflict resolution skills, and deadline management. For senior roles, expect questions about designing databases, ETL processes, batch processing with Spark, and distributed systems architecture. The onsite may include case study presentations where you discuss past projects and demonstrate your ability to reproduce results. Advanced topics like neural networks, deep learning, and AI applications in retail are common for higher-level positions. This stage evaluates not only technical competence but also cultural fit and ability to collaborate with cross-functional teams.

Offer & Negotiation

Successful candidates typically receive a verbal offer within one week of completing the final interview round. The formal offer process involves discussions with HR about compensation, benefits, stock options, and start date logistics. Walmart’s competitive packages can range from $145,000 to $350,000+, depending on experience and level, with additional performance bonuses and equity components. Negotiation opportunities exist for salary, stock grants, and other benefits, though candidates should be prepared with market research and clear justification for requests. The official offer packet is usually sent within 1-10 business days of verbal acceptance, followed by onboarding coordination and team assignment.

What Questions Are Asked in a Walmart Data Scientist Interview?

Most Walmart data scientist interview questions focus on solving real business problems with data. They test your skills in SQL, machine learning, system design, and communication.

Coding / Technical Questions

In this section, you will encounter questions that evaluate your ability to write efficient SQL queries, manipulate large datasets, and solve algorithmic problems in Python—all while grounding your answers in retail and product use cases:

1. Find the second longest flight between each pair of cities

To solve this, create a Common Table Expression (CTE) to normalize the source and destination locations, ensuring that each city pair is treated as the same regardless of order. Then, calculate the duration of each flight and rank them within each city pair using ROW_NUMBER(). Finally, filter for the second-ranked flights and order the results by flight ID.
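The steps above can be sketched with Python's built-in `sqlite3` module. The `flights` table, its column names, and the sample rows are assumptions made for illustration, and durations are computed with SQLite's `strftime('%s', ...)` rather than any particular dialect the interviewer may use.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema and sample data for illustration only.
conn.executescript("""
CREATE TABLE flights (
    id INTEGER PRIMARY KEY,
    source_location TEXT,
    destination_location TEXT,
    flight_start TEXT,
    flight_end TEXT
);
INSERT INTO flights VALUES
    (1, 'NYC', 'LAX', '2024-01-01 08:00:00', '2024-01-01 14:00:00'),
    (2, 'LAX', 'NYC', '2024-01-02 09:00:00', '2024-01-02 14:30:00'),
    (3, 'NYC', 'LAX', '2024-01-03 07:00:00', '2024-01-03 12:00:00'),
    (4, 'SFO', 'SEA', '2024-01-01 10:00:00', '2024-01-01 12:00:00'),
    (5, 'SEA', 'SFO', '2024-01-02 10:00:00', '2024-01-02 12:15:00');
""")

# Window functions require SQLite 3.25 or newer.
QUERY = """
WITH normalized AS (
    -- Order each city pair alphabetically so A->B and B->A share a key.
    SELECT
        id,
        MIN(source_location, destination_location) AS city_a,
        MAX(source_location, destination_location) AS city_b,
        CAST(strftime('%s', flight_end) AS INTEGER)
          - CAST(strftime('%s', flight_start) AS INTEGER) AS duration_s
    FROM flights
),
ranked AS (
    SELECT *,
           ROW_NUMBER() OVER (
               PARTITION BY city_a, city_b
               ORDER BY duration_s DESC, id
           ) AS rn
    FROM normalized
)
SELECT id, city_a, city_b, duration_s
FROM ranked
WHERE rn = 2
ORDER BY id;
"""

second_longest = conn.execute(QUERY).fetchall()
print(second_longest)
```

Note that `ROW_NUMBER()` breaks ties arbitrarily unless a tie-breaker is given (here, `id`); if tied durations should share a rank, `DENSE_RANK()` is the usual alternative.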

To find the percentage of users who moved directly from Data Analyst to Data Scientist, use the LAG window function to create a column that shows the previous role for each user. Filter the rows where the current role is “Data Scientist” and the previous role is “Data Analyst”. Finally, calculate the percentage by dividing the count of such users by the total number of users.
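A minimal sketch of the LAG approach, run through Python's `sqlite3`. The `user_experiences` table, its columns, and the sample rows are hypothetical; only the LAG-then-filter logic comes from the description above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema and data: one row per role a user has held.
conn.executescript("""
CREATE TABLE user_experiences (
    user_id INTEGER,
    position_name TEXT,
    start_date TEXT
);
INSERT INTO user_experiences VALUES
    (1, 'Data Analyst',    '2019-01-01'),
    (1, 'Data Scientist',  '2021-01-01'),
    (2, 'Data Analyst',    '2018-01-01'),
    (2, 'Product Manager', '2020-01-01'),
    (2, 'Data Scientist',  '2022-01-01'),
    (3, 'Data Scientist',  '2020-06-01'),
    (4, 'Data Analyst',    '2020-01-01'),
    (4, 'Data Scientist',  '2023-01-01');
""")

QUERY = """
WITH with_prev AS (
    SELECT
        user_id,
        position_name,
        LAG(position_name) OVER (
            PARTITION BY user_id ORDER BY start_date
        ) AS prev_position
    FROM user_experiences
)
SELECT
    100.0 * COUNT(DISTINCT CASE
        WHEN position_name = 'Data Scientist'
         AND prev_position = 'Data Analyst' THEN user_id END)
    / (SELECT COUNT(DISTINCT user_id) FROM user_experiences) AS pct
FROM with_prev;
"""

pct = conn.execute(QUERY).fetchone()[0]
print(pct)
```

In this sample, user 2 is excluded because a Product Manager role sits between the two positions, so only the immediately preceding role counts.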

To compare each New York commuter’s average commute time with the citywide average, first use TIMESTAMPDIFF to find the time difference between start_dt and end_dt for each ride. Use a GROUP BY clause on commuter_id to calculate the average commute time for each commuter. Then, calculate the overall average commute time across all commuters in New York without grouping. Finally, join the two results to display the commuter-specific and overall averages in one table.
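The same logic can be sketched with Python's `sqlite3`. SQLite has no TIMESTAMPDIFF, so this example substitutes a difference of `strftime('%s', ...)` values; the `rides` table, the `city` column, and the sample rows are assumptions for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema and data for illustration only.
conn.executescript("""
CREATE TABLE rides (
    commuter_id INTEGER,
    city TEXT,
    start_dt TEXT,
    end_dt TEXT
);
INSERT INTO rides VALUES
    (1, 'NY', '2024-03-01 08:00:00', '2024-03-01 08:30:00'),
    (1, 'NY', '2024-03-01 18:00:00', '2024-03-01 18:50:00'),
    (2, 'NY', '2024-03-01 09:00:00', '2024-03-01 09:20:00'),
    (3, 'SF', '2024-03-01 07:00:00', '2024-03-01 08:00:00');
""")

QUERY = """
WITH ny AS (
    -- Commute length in minutes for New York rides only.
    SELECT
        commuter_id,
        (CAST(strftime('%s', end_dt) AS INTEGER)
         - CAST(strftime('%s', start_dt) AS INTEGER)) / 60.0 AS minutes
    FROM rides
    WHERE city = 'NY'
),
per_commuter AS (
    SELECT commuter_id, AVG(minutes) AS avg_commuter_time
    FROM ny
    GROUP BY commuter_id
),
overall AS (
    SELECT AVG(minutes) AS avg_time FROM ny
)
SELECT p.commuter_id, p.avg_commuter_time, o.avg_time
FROM per_commuter AS p
CROSS JOIN overall AS o
ORDER BY p.commuter_id;
"""

rows = conn.execute(QUERY).fetchall()
for row in rows:
    print(row)
```

Here the overall average is taken over all New York rides rather than over the per-commuter averages; which of the two an interviewer wants is worth confirming before writing the query.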

To complete each address with its state, split the address column in df_addresses into street, city, and zipcode using the split method. Merge the resulting dataframe with df_cities on the city column, then concatenate the street, city, state, and zipcode columns into a single address column. Finally, drop the intermediate columns to return the desired dataframe.
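A minimal pandas sketch of these steps follows. It assumes addresses are formatted as "street, city, zipcode" with a comma-and-space separator, and the sample rows and the function name `complete_address` are made up for illustration.

```python
import pandas as pd

# Hypothetical sample data; the real inputs may be formatted differently.
df_addresses = pd.DataFrame({
    "address": [
        "4860 Sunset Boulevard, San Francisco, 94105",
        "3055 Paradise Lane, Salt Lake City, 84103",
    ]
})
df_cities = pd.DataFrame({
    "city": ["Salt Lake City", "San Francisco"],
    "state": ["Utah", "California"],
})


def complete_address(df_addresses, df_cities):
    # Split "street, city, zipcode" into three columns.
    parts = df_addresses["address"].str.split(", ", expand=True)
    parts.columns = ["street", "city", "zipcode"]
    # A left merge keeps every address, in its original order.
    merged = parts.merge(df_cities, on="city", how="left")
    merged["address"] = (
        merged["street"] + ", " + merged["city"] + ", "
        + merged["state"] + ", " + merged["zipcode"]
    )
    # Keep only the completed address column.
    return merged[["address"]]


result = complete_address(df_addresses, df_cities)
print(result["address"].tolist())
```

Addresses whose city is missing from `df_cities` would come back as NaN with this sketch, so real data may need an explicit fallback.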

To determine if a shipment was delivered during a customer’s membership period, join the shipments and customers tables on customer_id. Use a conditional statement like IF(ship_date BETWEEN membership_start_date AND membership_end_date, 'Y', 'N') to calculate the is_member column. The final query will include the shipment details along with the is_member status.
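As a rough sketch of this query, the example below runs it through Python's `sqlite3`. `IF(...)` is MySQL syntax, so the portable `CASE WHEN` form is used instead; the column names other than those quoted above, and all sample rows, are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema and data; dates are ISO strings so BETWEEN compares correctly.
conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,
    membership_start_date TEXT,
    membership_end_date TEXT
);
CREATE TABLE shipments (
    shipment_id INTEGER PRIMARY KEY,
    customer_id INTEGER,
    ship_date TEXT
);
INSERT INTO customers VALUES
    (1, '2023-01-01', '2023-12-31'),
    (2, '2023-06-01', '2023-09-30');
INSERT INTO shipments VALUES
    (10, 1, '2023-03-15'),
    (11, 1, '2024-01-05'),
    (12, 2, '2023-06-01'),
    (13, 2, '2023-05-31');
""")

QUERY = """
SELECT
    s.shipment_id,
    s.customer_id,
    s.ship_date,
    CASE
        WHEN s.ship_date BETWEEN c.membership_start_date
                             AND c.membership_end_date
        THEN 'Y' ELSE 'N'
    END AS is_member
FROM shipments AS s
JOIN customers AS c ON c.customer_id = s.customer_id
ORDER BY s.shipment_id;
"""

shipment_flags = conn.execute(QUERY).fetchall()
for row in shipment_flags:
    print(row)
```

`BETWEEN` is inclusive on both ends, so a shipment on the first or last day of membership is flagged 'Y'.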

System / Product Design / ML Case Questions

These questions test your ability to design scalable machine learning systems and product features that reflect real-world business logic, user experience goals, and deployment constraints:

6. How would you design a data warehouse for a new online retailer?

To design a data warehouse for a new online retailer, start by identifying the business process, which in this case focuses on sales data for analytics and reporting. Define the granularity of the data (e.g., each distinct product sale as an event), identify dimensions such as customer details, product attributes, time, and payment methods, and determine the facts like quantity sold, total amount paid, and net revenue. Finally, organize these elements into a star schema, with a central fact table connected to dimension tables for efficient querying.
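To make the star schema concrete, here is a small sketch built in an in-memory SQLite database. All table names, column names, and rows are illustrative assumptions; a production warehouse would use a dedicated engine and far richer dimensions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables: descriptive context for each sale.
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, name TEXT, region TEXT);
CREATE TABLE dim_product  (product_key  INTEGER PRIMARY KEY, name TEXT, category TEXT);
CREATE TABLE dim_date     (date_key     INTEGER PRIMARY KEY, full_date TEXT, month INTEGER, year INTEGER);
CREATE TABLE dim_payment  (payment_key  INTEGER PRIMARY KEY, method TEXT);

-- Fact table: one row per product sale (the chosen grain).
CREATE TABLE fact_sales (
    sale_id           INTEGER PRIMARY KEY,
    customer_key      INTEGER REFERENCES dim_customer(customer_key),
    product_key       INTEGER REFERENCES dim_product(product_key),
    date_key          INTEGER REFERENCES dim_date(date_key),
    payment_key       INTEGER REFERENCES dim_payment(payment_key),
    quantity_sold     INTEGER,
    total_amount_paid REAL,
    net_revenue       REAL
);

INSERT INTO dim_customer VALUES (1, 'Ana', 'West'), (2, 'Ben', 'East');
INSERT INTO dim_product  VALUES (1, 'Desk Lamp', 'Home'), (2, 'USB Cable', 'Electronics');
INSERT INTO dim_date     VALUES (20240101, '2024-01-01', 1, 2024);
INSERT INTO dim_payment  VALUES (1, 'card');
INSERT INTO fact_sales VALUES
    (1, 1, 1, 20240101, 1, 2, 40.0, 30.0),
    (2, 1, 2, 20240101, 1, 1, 10.0,  8.0),
    (3, 2, 2, 20240101, 1, 3, 30.0, 24.0);
""")

# Typical analytical query: join the fact table to one dimension and aggregate.
revenue_by_category = conn.execute("""
    SELECT p.category, SUM(f.net_revenue) AS net_revenue
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_key = f.product_key
    GROUP BY p.category
    ORDER BY p.category;
""").fetchall()
print(revenue_by_category)
```

The design choice to highlight in an interview is the grain: because each fact row is a single product sale, any coarser report (by day, category, or region) is a join plus a GROUP BY.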

To design a system that reduces wrong or missing orders, start by identifying the key contributing factors, such as user input errors, restaurant errors, or delivery issues. Collect and preprocess relevant data, including order details, user behavior, and restaurant performance. Use this data to train a supervised learning model that predicts the likelihood of an order being wrong or incomplete. Implement real-time validation checks and feedback loops to improve the model’s accuracy over time.

8. How would you build a machine learning system to generate Spotify’s discover weekly playlist?

To build Spotify’s Discover Weekly playlist, you can use collaborative filtering, content-based filtering, or a hybrid approach. Collaborative filtering leverages user behavior data to recommend songs based on similar users’ preferences, while content-based filtering uses song metadata to suggest tracks similar to those a user already likes. A hybrid model combines both methods, incorporating additional features like user demographics, listening history, and contextual data to improve recommendations. Regular evaluation using metrics like precision, recall, and user feedback ensures the system remains effective.
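The collaborative filtering idea can be illustrated with a toy user-based recommender in plain Python. This is a sketch under simplifying assumptions (play counts as implicit ratings, cosine similarity between users, made-up data), not a description of how Spotify's system works.

```python
import math

# Hypothetical play counts per user; real systems work with far sparser, larger data.
plays = {
    "ana": {"song_a": 5, "song_b": 3, "song_c": 4},
    "ben": {"song_a": 4, "song_b": 3, "song_d": 5},
    "cat": {"song_c": 5, "song_e": 4},
}


def cosine_similarity(a, b):
    """Cosine similarity between two sparse {song: count} vectors."""
    shared = set(a) & set(b)
    dot = sum(a[s] * b[s] for s in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend(user, plays, k=2):
    """Rank songs the user has not played by similarity-weighted play counts."""
    scores = {}
    for other, other_plays in plays.items():
        if other == user:
            continue
        sim = cosine_similarity(plays[user], other_plays)
        for song, count in other_plays.items():
            if song not in plays[user]:
                scores[song] = scores.get(song, 0.0) + sim * count
    ranked = sorted(scores, key=lambda s: (-scores[s], s))
    return ranked[:k]


print(recommend("ana", plays))
```

In this sample, "ben" shares more listening history with "ana" than "cat" does, so his unseen track ranks first. A content-based or hybrid model would add song metadata to the scoring step.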

9. How would you build the recommendation algorithm for type-ahead search for Netflix?

To build a type-ahead search recommendation algorithm for Netflix, start with a prefix matching system using a trie data structure for efficient lookups. Address dataset bias by focusing on user-typed input and incorporating Bayesian updates to improve recommendations based on user behavior. Enhance the system with context matching by leveraging user preferences and clustering profiles into feature sets. Finally, scale the system using Kubernetes for the services that map user profiles, together with caching mechanisms, to handle global Netflix users efficiently.
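The prefix-matching step can be sketched as a small trie that returns the most popular titles under a prefix. The popularity scores and titles below are invented for illustration, and the personalization, Bayesian updating, and scaling pieces are out of scope for this sketch.

```python
class TrieNode:
    def __init__(self):
        self.children = {}  # next character -> TrieNode
        self.titles = []    # (popularity, title) pairs that end at this node


class Trie:
    def __init__(self):
        self.root = TrieNode()

    def insert(self, title, popularity):
        node = self.root
        for ch in title.lower():
            node = node.children.setdefault(ch, TrieNode())
        node.titles.append((popularity, title))

    def suggest(self, prefix, k=3):
        """Return up to k titles starting with prefix, most popular first."""
        node = self.root
        for ch in prefix.lower():
            node = node.children.get(ch)
            if node is None:
                return []
        # Collect every title in the subtree below the prefix node.
        matches = []
        stack = [node]
        while stack:
            current = stack.pop()
            matches.extend(current.titles)
            stack.extend(current.children.values())
        matches.sort(key=lambda item: (-item[0], item[1]))
        return [title for _, title in matches[:k]]


trie = Trie()
for title, popularity in [
    ("Stranger Things", 95),
    ("Star Trek", 80),
    ("Stargate", 60),
    ("The Crown", 90),
]:
    trie.insert(title, popularity)

print(trie.suggest("st", k=2))
```

Walking the whole subtree on every keystroke is fine for a sketch; at scale, a common refinement is to cache the top-k suggestions on each node at insert time.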

To retrieve the 10 most similar jobs for a new posting, you can use NLP embedding models (e.g., Word2Vec, GloVe, or BERT) to represent job titles and descriptions as vectors in a high-dimensional space. Precompute embeddings for the existing pool of jobs and store them in a vector database for efficient similarity searches. For each new job, compute its embedding and use an approximate nearest neighbor (ANN) library like FAISS or ScaNN to quickly retrieve the top 10 most similar jobs. This approach balances efficiency and scalability, even with millions of jobs.
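The retrieval step can be illustrated with an exact (brute-force) cosine-similarity search over toy vectors. This stands in for the ANN index described above: the three-dimensional vectors and job names are invented, and a real system would use learned embeddings with hundreds of dimensions plus an ANN library rather than a full scan.

```python
import heapq
import math

# Hypothetical precomputed embeddings for the existing job pool.
job_vectors = {
    "data_scientist": [0.9, 0.8, 0.1],
    "ml_engineer":    [0.8, 0.9, 0.2],
    "data_analyst":   [0.9, 0.5, 0.1],
    "store_manager":  [0.1, 0.2, 0.9],
}


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def top_k_similar(query_vec, job_vectors, k=10):
    """Exact top-k search: score every job, keep the k highest similarities."""
    scored = ((cosine(query_vec, vec), job_id) for job_id, vec in job_vectors.items())
    return [job_id for _, job_id in heapq.nlargest(k, scored)]


# Hypothetical embedding of a newly posted job.
new_job_vec = [0.85, 0.85, 0.1]
print(top_k_similar(new_job_vec, job_vectors, k=2))
```

The full scan costs O(N) per query, which is exactly the cost that an ANN index trades a little recall to avoid once the pool reaches millions of jobs.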

11. How would you create a recommendation engine for a rental listing website?

To create a recommendation engine for a rental listing website, you can use either a content-based filtering approach or a collaborative filtering approach. Content-based filtering would match user preferences (e.g., demographics, interests) with property metadata (e.g., amenities, price, location). Collaborative filtering would leverage user behavior, such as past interactions or reviews, to recommend listings based on similar users’ preferences. A hybrid model combining both approaches could improve accuracy.

Behavioral or “Culture Fit” Questions

Behavioral questions explore how you collaborate, communicate, and respond to challenges, especially in large, cross-functional environments like Walmart’s. Interviews for more senior levels, such as Senior Data Scientist or Data Scientist 3, go deeper into leadership, ownership, and stakeholder alignment:

12. What are your three biggest strengths and weaknesses you have identified in yourself?

In a Walmart data science interview, this question is an opportunity to connect personal attributes to business impact. When discussing strengths, focus on qualities that help you thrive in a fast-paced, data-rich retail environment—such as problem-solving, cross-functional collaboration, or consumer behavior analysis—and use specific examples. For weaknesses, be honest and choose areas you are actively working on, like prioritizing tasks during peak retail cycles or communicating complex analyses to non-technical store leaders.

13. How comfortable are you presenting your insights?

As a Walmart data scientist, you will often need to present insights to both technical and operational stakeholders, such as store managers or supply chain leaders. Your response should show confidence in making data relatable and actionable, whether through dashboards, storytelling, or business-focused visualizations. Provide an example of how you translated predictive modeling results into a recommendation that drove changes in store inventory or customer engagement.

14. Describe an analytics experiment that you designed. How were you able to measure success?

Walmart values experimentation that leads to measurable business improvements, such as increased sales, better customer experience, or operational efficiency. Talk about a project where you tested a new pricing strategy, promotional campaign, or stocking model, and describe how you defined success using metrics like conversion rates, revenue impact, or reduced waste. Be sure to explain how you validated results statistically and ensured the test was scalable across regions or store formats.
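When explaining how you validated results statistically, it can help to show the arithmetic. Below is a minimal sketch of a pooled two-proportion z-test on conversion rates, one common choice for A/B tests; the counts are made up, and the function name is an assumption for this example.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical experiment: control converts 200/5000, variant converts 260/5000.
z, p_value = two_proportion_z_test(200, 5000, 260, 5000)
print(round(z, 2), round(p_value, 4))
```

A small p-value only says the difference is unlikely under the null hypothesis; the business case still depends on effect size, test duration, and whether the sample reflects the regions or store formats you plan to roll out to.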

Clear communication is critical at Walmart, where data scientists often collaborate with merchandising, logistics, and store operations teams. Describe a time when your analysis was misunderstood or too technical for the audience, and how you adapted by simplifying visuals, using business terms, or involving stakeholders earlier in the process. Emphasize how the experience helped you become a more effective bridge between data and action.

16. Tell me about a project in which you had to clean and organize a large dataset.

Retail data can be messy and massive, especially across Walmart’s vast network of stores, warehouses, and digital platforms. Share a project where you wrangled data from multiple sources—such as transaction logs, supply chain systems, or online behavior data—and explain how you handled missing values, outliers, or inconsistent formats. Discuss the tools and logic you used and how data cleaning improved model performance or led to more trustworthy insights.

How to Prepare for a Data Scientist Role at Walmart

To succeed in your Walmart data scientist interview, focus on sharpening both your technical skills and business thinking. Start by mastering SQL and Python, since most assessments include tasks like writing optimized queries, working with joins, and cleaning large datasets. Practice problems that reflect real-world scenarios such as customer segmentation, demand forecasting, or recommendation systems. You should also be ready to discuss model evaluation techniques like AUC-ROC, precision-recall tradeoffs, and handling imbalanced data. Since Walmart values experimentation, review how to design A/B tests, interpret results, and link them back to measurable business outcomes.

For behavioral rounds, prepare with AI Interviewer and STAR-format stories that show how you solve problems, communicate across teams, and work under tight timelines. Familiarize yourself with Walmart’s AI initiatives—such as its use of generative AI in product substitutions or predictive tools for supply chain optimization—so you can tie your answers to what the company is already doing.

Mock interviews that simulate the Walmart data science interview process can help you build confidence, especially for technical and case study portions. Don’t forget to study the business side too: understand Walmart’s retail model, its shift toward omnichannel personalization, and how data science supports operational efficiency. With the right preparation, a clear understanding of the company’s vision, and confidence in your skills, you can stand out and land the Walmart data scientist role you want.

FAQs

How many rounds are there in a Walmart Data Scientist interview?

The number of rounds varies by level. Entry-level candidates typically go through 1 to 2 rounds, while mid-level applicants can expect 2 to 3. Candidates for staff data scientist roles often ask how many rounds to expect; the answer is usually 3 to 5, which include technical deep-dives, system design discussions, and leadership interviews focused on cross-functional collaboration and stakeholder influence.

Are there senior-level variations in the interview?

Yes, there are notable differences. A Walmart senior data scientist interview includes more advanced topics such as system architecture, scalable model deployment, and technical leadership. You may also be asked to critique model performance in production, propose business-aligned improvements, or demonstrate mentorship capabilities through collaborative scenario questions. These interviews are longer and more specialized to evaluate your readiness for higher responsibility.

Conclusion

The Walmart data scientist interview is a gateway to one of the most influential data science roles in global retail. By preparing strategically, you can navigate a process that tests technical depth, business understanding, and communication skills. If you want to build your foundation before applying, start with our learning path for data science at Walmart. To see what success can look like, check out Nathan Fritter’s success story. And when you’re ready to dive into practice, explore our full set of Walmart data scientist interview questions to sharpen your skills. With the right prep, confidence, and curiosity, you’ll be ready to stand out and take the next step in your data science career at Walmart.

Walmart Interview Questions

| Question | Topic | Difficulty |
|---|---|---|
| Write a query that returns all neighborhoods that have 0 users. | SQL | Easy |
| | SQL | Medium |
| | SQL | Medium |
| | SQL | Easy |
| | Machine Learning | Medium |
| | Statistics | Medium |
| | SQL | Hard |
| | Machine Learning | Medium |
| | Python | Easy |
| | Deep Learning | Hard |
| | SQL | Medium |
| | Statistics | Easy |
| | Machine Learning | Hard |
