Citadel Machine Learning Engineer Interview Guide: Most Asked Questions in 2026

Introduction

Machine learning engineering roles are among the most in-demand jobs right now, with a reported growth rate of 53% since 2020. Finance is one of the industries where machine learning engineers are most sought after, and within hedge funds and financial services, Citadel is known for its research-driven culture. At Citadel, machine learning engineers are integral to the firm’s investment in advanced modeling, infrastructure, and automation; they help power systematic trading strategies, portfolio risk modeling, and ultra-low-latency execution.

The Citadel machine learning engineer interview thus blends applied machine learning, software engineering, and financial decision-making. You will face deep evaluations across algorithms, probability, model design, and system architecture, with an expectation to navigate noisy data, shifting market regimes, and strict performance constraints. This guide explains the Citadel machine learning engineer interview process and provides expert tips, while also sharing the most asked questions that you can practice here on Interview Query.

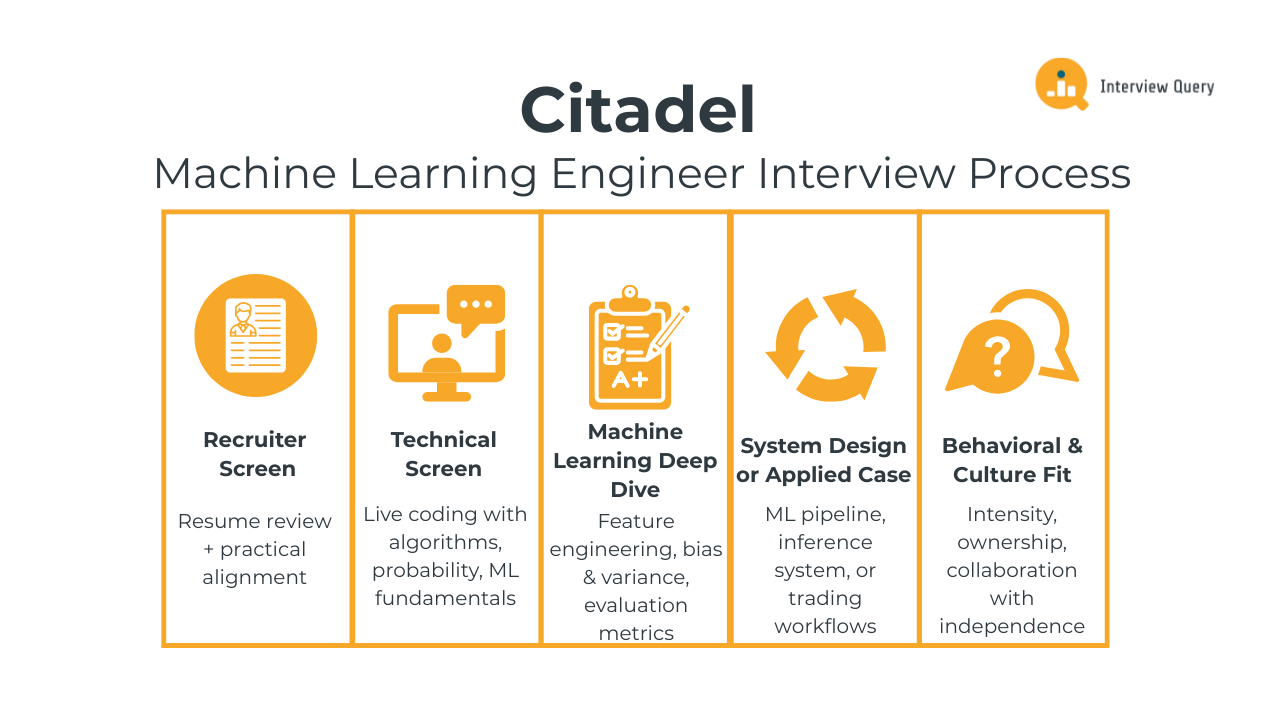

Citadel Machine Learning Engineer Interview Process

The Citadel machine learning engineer interview process typically spans four to six weeks and includes five distinct stages, moving from high-level screening to deep technical and behavioral evaluation. Across every round, interviewers assess three core dimensions: theoretical strength in machine learning and probability, practical engineering judgment, and clarity of reasoning in time-constrained, high-stakes environments.

Unlike many technology companies, Citadel places less emphasis on generic product intuition and more on correctness, robustness, and performance. Interviewers expect precise reasoning, clean implementations, and thoughtful discussion of trade-offs.

Each stage below builds on the previous one, narrowing the funnel quickly and deliberately.

Recruiter screen

The recruiter screen is a short but focused conversation that validates baseline fit before technical interviews begin. Recruiters review your resume in detail, paying close attention to depth in machine learning, statistics, and software engineering rather than surface-level exposure. Expect targeted questions about the models you’ve built, the data scale you’ve worked with, and whether you owned decisions or simply executed tasks.

This call also aligns on practical details such as role scope, team focus, location preferences, and compensation expectations. While it is not technical, vague answers or unclear motivation can stall progress early.

Tip: Be explicit about why finance-oriented ML appeals to you. Tie your background to problems involving noisy data, uncertainty, optimization, or real-time decision-making, instead of expressing generic “interest in trading.”

Technical screen

The technical screen is the first true filter and is intentionally demanding. This round typically combines live coding with questions on algorithms, probability, and core machine learning fundamentals. You may be asked to implement data structures, reason through stochastic processes, or analyze the behavior of an algorithm under edge cases.

Interviewers care deeply about correctness and efficiency. Writing code that works for simple examples is not enough. You are expected to discuss time and space complexity, numerical stability, and failure scenarios as you go. Communication matters, but precision matters more.

This round is usually timed tightly, reflecting the pace expected in later interviews.

Tip: Practice solving problems end to end: write clean code, justify why it’s optimal, and proactively call out edge cases. Interview Query’s curated and timed ML Engineering 50 study plan can help mirror the pace and depth of Citadel’s technical screens.

Machine learning deep dive

The machine learning deep dive focuses on how you design, evaluate, and stress-test models. Interviewers may ask you to walk through a past project or design a model for a hypothetical trading or prediction problem. Topics commonly include feature engineering, bias and variance trade-offs, evaluation metrics, and handling non-stationary data.

You are expected to reason beyond textbook answers. Interviewers probe how models fail in production, how you detect degradation, and how you would adapt to changing data distributions.

Tip: Explicitly discuss failure modes, monitoring signals, and retraining strategies to show that you think like an owner, not just a model builder. Interview Query’s real-world ML challenges help you practice articulating these decisions under interview-style probing.

System design or applied case

This stage evaluates your ability to translate models into production-grade systems. You may be asked to design a scalable machine learning pipeline, a real-time inference system, or an applied case tied to trading workflows. Interviewers look for structured thinking around data flow, latency constraints, fault tolerance, and system observability.

Unlike traditional system design interviews, financial constraints matter. Small delays or silent failures can have outsized impact, so designs must prioritize reliability and speed alongside accuracy.

Tip: Start with constraints, such as latency targets, data freshness, and failure tolerance, before proposing an architecture. You can use Interview Query’s question bank for system design questions that train you to emphasize performance trade-offs and real-world constraints seen in finance.

Behavioral and culture fit

The final stage focuses on behavioral alignment and long-term fit. Citadel values intensity, ownership, and the ability to operate independently while collaborating with equally driven peers. Interviewers ask about moments where you made high-impact decisions, handled pressure, or took responsibility for difficult outcomes.

Stories should demonstrate not just success, but also accountability and learning. Shallow or overly polished answers tend to fall flat; interviewers want to understand what you did, what went wrong, and how you adapted.

Tip: Prepare examples where you owned a problem end to end, especially when timelines were tight, data was messy, or the outcome was uncertain.

Want to simulate this level of rigor before the real thing? Interview Query’s mock interviews help you practice under realistic pressure, effectively preparing you for Citadel’s interview intensity.

What Questions Are Asked in a Citadel Machine Learning Engineer Interview?

Citadel machine learning engineer interview questions are designed to test depth, precision, and applied reasoning rather than surface-level familiarity. Across machine learning, coding, and behavioral rounds, Citadel consistently probes for candidates who combine theoretical rigor with strong engineering judgment and personal ownership.

The following video walks through how a real-world machine learning interview question should be approached, emphasizing structured thinking, tradeoff analysis, and clear communication, which are exactly the skills Citadel interviewers are evaluating.

Watch Next: 7 Types of Machine Learning Interview Questions!

In this video, Interview Query cofounder Jay Feng highlights the value of clarifying assumptions, choosing appropriate evaluation metrics, and explaining why a solution makes sense under real constraints. These strategies are appropriate for the Citadel interview questions below, which often mirror the conditions of systematic trading environments and require you to reason from first principles and justify every design choice.

Coding and algorithms interview questions

Coding and algorithms questions focus on problems that resemble real-time computation and streaming scenarios, where naive solutions fail quickly. Strong candidates are able to write clean code, reason about complexity, and explain optimizations without relying on trial and error.

Implement logistic regression from scratch using gradient-based optimization.

This question evaluates core coding ability, numerical reasoning, and understanding of optimization fundamentals. Walk through defining the log-likelihood, deriving gradients, and iteratively updating parameters using gradient descent or Newton’s method. Clear handling of convergence criteria and vectorized operations is essential to demonstrate both correctness and efficiency.

Tip: Candidates stand out by narrating how they would validate intermediate results (e.g., gradient checks or sanity tests on small data) before trusting the final model output.
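
The steps described above (log-likelihood, gradient, iterative update, convergence check) can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a reference solution: it uses batch gradient descent rather than Newton’s method, and the function names, learning rate, and tolerance are hypothetical choices. An interview answer would typically vectorize the inner loops with a numerical library.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function: never exponentiates a large positive number.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_logistic(X, y, lr=0.1, max_iter=5000, tol=1e-6):
    """Batch gradient descent on the mean negative log-likelihood.

    X is a list of feature rows, y a list of 0/1 labels.
    lr, max_iter, and tol are illustrative defaults (assumptions).
    Returns (weights, bias).
    """
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(max_iter):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            # Gradient of the negative log-likelihood for one sample: (p - y) * x
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j] / n
            grad_b += err / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
        # Convergence criterion: stop once the gradient norm is small.
        if math.sqrt(sum(g * g for g in grad_w) + grad_b ** 2) < tol:
            break
    return w, b


def predict_proba(w, b, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Sanity test on tiny data, in the spirit of the tip above.
X = [[0.0], [1.0], [2.0], [3.0]]
y = [0, 0, 1, 1]
w, b = fit_logistic(X, y)
print(predict_proba(w, b, [0.0]), predict_proba(w, b, [3.0]))
```

On perfectly separable data like this toy set, the weights keep growing until `max_iter` is reached, which is one reason regularization and explicit stopping rules are worth raising in the discussion.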

-

Here, the interviewer is assessing your grasp of evaluation metrics and their real-world implications. Start by explaining how adjusting decision thresholds shifts precision and recall, then tie those shifts to asymmetric costs such as false positives versus false negatives. Showing how to compute and compare these metrics reinforces practical understanding.

Tip: Explicitly calling out how metric choices affect capital allocation or risk limits signals strong business awareness beyond textbook definitions.
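
To make the threshold discussion concrete, the sketch below computes precision and recall at a given threshold and then picks the threshold with the lowest total cost. The scores, labels, and cost values are made-up illustrations, and the convention for precision when nothing is predicted positive is an assumption of this sketch.

```python
def confusion_counts(scores, labels, threshold):
    """Count true positives, false positives, and false negatives at a threshold."""
    tp = fp = fn = 0
    for score, label in zip(scores, labels):
        predicted = score >= threshold
        if predicted and label == 1:
            tp += 1
        elif predicted and label == 0:
            fp += 1
        elif not predicted and label == 1:
            fn += 1
    return tp, fp, fn


def precision_recall(scores, labels, threshold):
    tp, fp, fn = confusion_counts(scores, labels, threshold)
    # Convention chosen here: precision is 1.0 when nothing is predicted positive.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def cheapest_threshold(scores, labels, thresholds, cost_fp, cost_fn):
    """Return the candidate threshold with the lowest total misclassification cost."""
    def cost(t):
        _, fp, fn = confusion_counts(scores, labels, t)
        return cost_fp * fp + cost_fn * fn
    return min(thresholds, key=cost)


# Hypothetical model scores and ground-truth labels.
scores = [0.1, 0.4, 0.35, 0.8, 0.65, 0.9]
labels = [0, 0, 1, 1, 0, 1]

for t in (0.3, 0.5, 0.7):
    print(t, precision_recall(scores, labels, t))

# When missed positives are expensive, a lower threshold wins; when false alarms are, a higher one does.
print(cheapest_threshold(scores, labels, [0.3, 0.5, 0.7], cost_fp=1, cost_fn=5))
print(cheapest_threshold(scores, labels, [0.3, 0.5, 0.7], cost_fp=5, cost_fn=1))
```

Raising the threshold from 0.3 to 0.7 trades recall for precision on this data, and the cost-weighted choice flips depending on which error type is more expensive.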

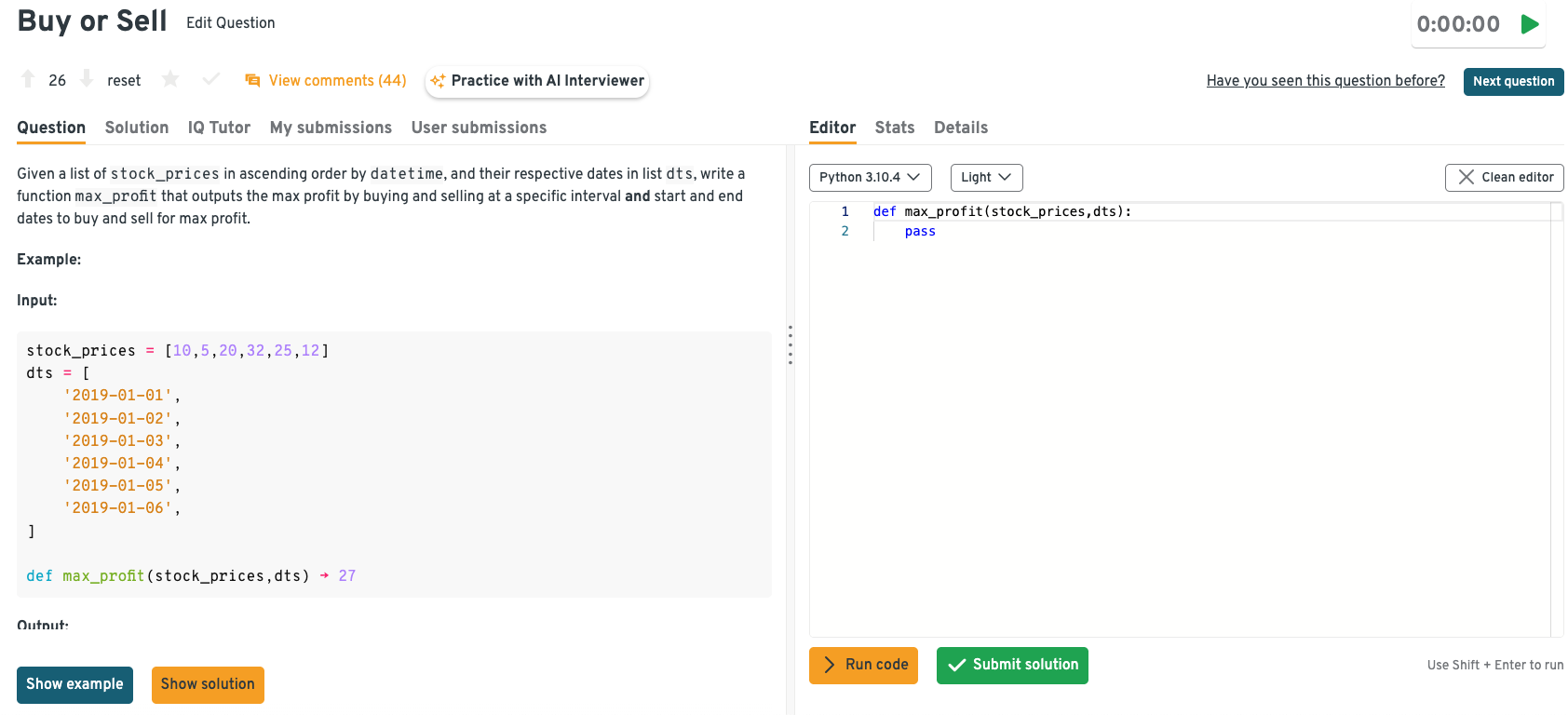

Find the maximum profit and optimal buy/sell dates from a time-ordered stream of stock prices.

This question tests algorithmic efficiency and the ability to reason about streaming data. A solid approach maintains a running minimum price while tracking the best achievable profit in a single pass. Explaining why this achieves linear time complexity helps demonstrate optimization awareness.

Tip: Mentioning how the logic adapts to real-time feeds or partial data availability shows readiness for production trading systems.
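
A minimal sketch of the single-pass approach is shown below. It assumes one buy followed by one sell, uses positions in the stream as stand-ins for dates, and accepts any iterable so it also works on a generator that yields prices as they arrive. The function name and return shape are illustrative choices.

```python
def max_profit(prices):
    """Single pass over time-ordered prices.

    Returns (profit, buy_index, sell_index), or (0, None, None)
    when no profitable trade exists.
    """
    best_profit, best_buy, best_sell = 0, None, None
    min_price, min_index = None, None
    for i, price in enumerate(prices):
        if min_price is None or price < min_price:
            # New running minimum: the cheapest buy seen so far.
            min_price, min_index = price, i
        elif price - min_price > best_profit:
            # Selling now against the running minimum beats the best trade so far.
            best_profit, best_buy, best_sell = price - min_price, min_index, i
    return best_profit, best_buy, best_sell


print(max_profit([7, 1, 5, 3, 6, 4]))  # (5, 1, 4): buy at index 1, sell at index 4
print(max_profit(iter([5, 4, 3])))     # (0, None, None): prices only fall
```

Each price is examined once and only a constant amount of state is kept, which is why the approach runs in linear time with constant extra memory.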

Head to the Interview Query dashboard to solve additional coding and algorithms questions like this using a built-in code editor. You can also instantly validate your approach with step-by-step solutions designed to mirror real interview expectations.

Optimize a rolling metric computation under strict latency limits.

The focus here is on performance optimization and data structure choice. A good answer discusses using incremental updates, windowed aggregates, or deque-based techniques instead of recomputing metrics from scratch. Addressing time–space tradeoffs and cache efficiency adds depth to the solution.

Tip: Strong candidates quantify latency targets or throughput assumptions up front, demonstrating an instinct for performance constraints common in low-latency environments.
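
As one possible illustration of incremental updates and deque-based techniques, the sketch below maintains a rolling mean and rolling maximum in amortized constant time per new value. The class name and interface are hypothetical.

```python
from collections import deque


class RollingWindow:
    """Rolling mean and max over the last `size` values, amortized O(1) per update."""

    def __init__(self, size):
        self.size = size
        self.values = deque()          # values currently inside the window
        self.total = 0.0               # running sum of the window
        self.max_candidates = deque()  # (index, value) pairs with decreasing values
        self.count = 0                 # number of values seen so far

    def update(self, x):
        # Incremental sum: add the new value, subtract the one that falls out.
        self.values.append(x)
        self.total += x
        if len(self.values) > self.size:
            self.total -= self.values.popleft()

        # Monotonic deque: drop candidates that can never be the max again.
        while self.max_candidates and self.max_candidates[-1][1] <= x:
            self.max_candidates.pop()
        self.max_candidates.append((self.count, x))
        # Evict the front candidate once it has left the window.
        if self.max_candidates[0][0] <= self.count - self.size:
            self.max_candidates.popleft()

        self.count += 1
        return self.total / len(self.values), self.max_candidates[0][1]


window = RollingWindow(size=3)
for price in [1, 3, 2, 5, 4, 1, 0]:
    print(window.update(price))
```

A running floating-point sum can accumulate rounding error over very long streams, so periodically recomputing the sum from the window contents is a reasonable safeguard to mention.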

Debug a performance bottleneck in a time-critical algorithm.

This question evaluates systematic debugging and performance profiling skills. A strong response outlines identifying hotspots through profiling, analyzing algorithmic complexity, and validating assumptions about input size or distribution. Proposing targeted optimizations rather than premature rewrites signals practical engineering judgment.

Tip: Calling out the difference between algorithmic inefficiencies and systems-level issues (I/O, memory, threading) reflects the depth expected of Citadel MLEs working close to production.
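
A short sketch of the "profile first, then optimize" workflow is shown below, using Python’s built-in `cProfile` and `pstats` modules. The slow function is a deliberately quadratic, hypothetical hotspot, and `profile_call` is an illustrative helper rather than a standard API.

```python
import cProfile
import io
import itertools
import pstats


def slow_prefix_sums(values):
    # Hypothetical hotspot: re-sums the whole prefix at every step, so it is O(n^2).
    result = []
    for i in range(len(values)):
        total = 0
        for v in values[: i + 1]:
            total += v
        result.append(total)
    return result


def fast_prefix_sums(values):
    # Targeted fix: carry the running total forward, O(n).
    return list(itertools.accumulate(values))


def profile_call(func, *args):
    """Run func under cProfile and return (result, text report of the top entries)."""
    profiler = cProfile.Profile()
    profiler.enable()
    result = func(*args)
    profiler.disable()
    stream = io.StringIO()
    pstats.Stats(profiler, stream=stream).sort_stats("cumulative").print_stats(5)
    return result, stream.getvalue()


data = list(range(2000))
slow_result, report = profile_call(slow_prefix_sums, data)
print(report)

# Validate that the optimization preserves behavior before trusting it.
assert fast_prefix_sums(data) == slow_result
```

The profile points at the function to fix, the complexity analysis explains why it is slow, and the equality check confirms that the faster version returns the same output.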

Machine learning theory and modeling interview questions

Machine learning theory questions at Citadel focus on how models behave in high-stakes, low-tolerance settings. Rather than abstract definitions, interviewers push you to apply concepts like evaluation metrics, regularization, and model selection to trading-oriented constraints. You are expected to reason carefully about cost asymmetry, robustness, and when added model complexity introduces more risk than value.

-

This question evaluates your understanding of model complexity, generalization, and decision-making under uncertainty. Start by explaining how simpler models may underfit but offer stability and interpretability, while more complex models can capture nuanced patterns at the risk of overfitting. You should discuss using cross-validation, out-of-sample testing, and business constraints (e.g., regulatory or risk tolerance) to find the right balance.

Tip: Tie the tradeoff back to capital or risk exposure. Candidates stand out when they explicitly connect modeling choices to downside protection, not just predictive accuracy.
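
For time-ordered financial data, out-of-sample testing usually needs to respect chronology. The sketch below generates walk-forward (expanding-window) splits in which every test block comes strictly after its training data. Walk-forward validation is one common variant chosen here for illustration; the function name and parameters are hypothetical.

```python
def walk_forward_splits(n_samples, n_folds, min_train):
    """Yield (train_indices, test_indices) with training data strictly before test data.

    The training window expands by one test block per fold.
    """
    test_size = (n_samples - min_train) // n_folds
    if test_size < 1:
        raise ValueError("not enough samples for the requested folds")
    for k in range(n_folds):
        train_end = min_train + k * test_size
        test_end = train_end + test_size
        yield range(0, train_end), range(train_end, test_end)


for train, test in walk_forward_splits(n_samples=10, n_folds=3, min_train=4):
    print(list(train), list(test))
```

Comparing a simple and a complex model fold by fold under this scheme shows whether the extra complexity holds up on later, unseen periods rather than only on shuffled data.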

Go deeper on ML theory and modeling questions like this using the Interview Query dashboard. Get real-time guidance from IQ Tutor, an AI coach that helps you refine your reasoning, stress-test assumptions, and explain tradeoffs the way interviewers expect.

-

Here, the interviewer is testing whether you can translate business objectives into the correct modeling formulation. An effective response contrasts discrete decision outcomes (e.g., trade/no-trade, default/non-default) with continuous targets like returns or probabilities. Highlighting how evaluation metrics, interpretability, and downstream usage differ between the two approaches strengthens the explanation.

Tip: Mention how the model’s output is consumed by downstream systems (execution, risk limits, alerts), since Citadel places heavy emphasis on end-to-end decision pipelines.

-

This question probes your practical knowledge of regularization and robustness in real-world datasets. Mention limiting tree depth, enforcing minimum samples per split, and using ensemble methods like bagging or boosting. You can further emphasize validation strategies and feature selection to reduce sensitivity to noise and spurious correlations.

Tip: Strong candidates also reference monitoring feature importance drift over time, signaling awareness that financial signals degrade and models must be actively managed.

When would simpler models outperform deep learning in trading contexts?

This question assesses your judgment around model selection rather than raw technical complexity. Explain that linear or shallow models often excel when data is limited, noisy, or non-stationary, which is common in financial markets. You might also note advantages in interpretability, faster iteration, and reduced risk of overfitting compared to deep learning approaches.

Tip: Calling out latency, debuggability, or ease of stress-testing shows maturity; these factors often matter more than marginal gains in offline metrics.

How would you compare two models with similar performance but different stability profiles?

The interviewer is testing your ability to think beyond single-number metrics and evaluate models holistically. A good response discusses analyzing performance across time periods, market regimes, or bootstrapped samples to assess consistency. You can also mention prioritizing stability and robustness in production, especially for risk-sensitive or capital-intensive applications.

Tip: Emphasize preference for models that fail gracefully under stress. Demonstrating awareness of tail events and regime shifts aligns closely with Citadel’s risk-first engineering culture.
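
One way to put numbers on "similar performance, different stability" is sketched below: it summarizes per-period scores with their dispersion, worst period, and a bootstrap interval for the mean. The scores are made-up, the function name is hypothetical, and the plain resampling used here ignores autocorrelation, so a block bootstrap may be more appropriate for real return series.

```python
import random
import statistics


def stability_report(period_scores, n_boot=2000, seed=0):
    """Summarize per-period performance and bootstrap a 95% interval for the mean."""
    rng = random.Random(seed)
    n = len(period_scores)
    boot_means = sorted(
        statistics.fmean(rng.choices(period_scores, k=n)) for _ in range(n_boot)
    )
    low = boot_means[int(0.025 * n_boot)]
    high = boot_means[int(0.975 * n_boot) - 1]
    return {
        "mean": statistics.fmean(period_scores),
        "stdev": statistics.stdev(period_scores),
        "worst": min(period_scores),
        "interval": (low, high),
    }


# Hypothetical hit rates per time period for two models with the same average.
model_a = [0.55, 0.56, 0.54, 0.55, 0.55]
model_b = [0.75, 0.35, 0.60, 0.45, 0.60]

print(stability_report(model_a))
print(stability_report(model_b))
```

Both models average the same hit rate, but the second has a much lower worst period and a wider interval, which is the kind of evidence that supports preferring the more stable model in a risk-sensitive setting.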

Ready to build end-to-end mastery? Follow Interview Query’s Modeling & Machine Learning Interview Learning Path to learn about core theory and production-ready judgment, using structured lessons and guided practice designed specifically for high-stakes ML interviews.

Behavioral and ownership interview questions

Behavioral questions at Citadel are tightly linked to technical accountability. Interviewers look for evidence that you take ownership of outcomes, especially when systems fail under pressure. Vague stories or deflected responsibility are red flags, so it’s important to provide concrete examples and quantify your impact.

Describe a time your model failed in production and how you fixed it.

Citadel asks this to assess ownership, resilience, and how candidates respond when real-world systems break under market pressure. Strong answers quantify impact by naming concrete symptoms (latency spikes, PnL drawdowns, error rates) and clearly tying corrective actions to measurable recovery.

Sample Answer: “A short-term signal model degraded after a regime shift, causing a 12% drop in hit rate over two trading days. Monitoring alerts flagged feature drift, and analysis showed a key liquidity proxy had become unreliable. After rolling back the feature and retraining on recent data, performance returned to baseline within 48 hours. A postmortem led to automated drift checks that reduced similar incidents by over 30%.”

-

This question evaluates communication clarity and the ability to connect technical work to business outcomes. Candidates can quantify impact by framing explanations around decisions enabled, risk reduced, or capital efficiency improved rather than algorithms used.

Sample Answer: “The model was positioned as a system that reallocates capital toward higher-confidence opportunities while limiting downside exposure. Its rollout reduced daily drawdowns by 15% and improved risk-adjusted returns without increasing turnover. Visual summaries showing before-and-after performance made the impact clear. Leadership focused on how the model changed decisions, not its internal mechanics.”

Practice behavioral questions like this in the Interview Query dashboard, where IQ Tutor helps refine clarity and impact, while the user comments section lets you compare your framing against real candidate answers and interviewer expectations.

-

This is asked to gauge collaboration, prioritization, and execution speed in high-stakes environments. Effective answers highlight tradeoffs made, timelines met, and outcomes delivered, ideally backed by delivery metrics or business results.

Sample Answer: “A risk model update needed sign-off from compliance and trading within a week due to a regulatory deadline. Tradeoffs between accuracy and interpretability were clearly laid out using concrete examples. Alignment was reached in three days, and the model shipped on time with zero post-launch issues. The update improved false-positive rates by 18% while meeting all constraints.”

Tell me about a high-pressure decision you owned end to end.

Citadel uses this to assess judgment, accountability, and comfort making decisions with incomplete information. Candidates should quantify stakes by referencing time pressure, financial exposure, or operational risk tied to the decision.

Sample Answer: “During a volatile market open, a decision was needed on whether to disable a model showing inconsistent behavior. Shutting it down reduced expected PnL by 5% for the day but avoided potential tail losses. After a rapid review, the model was paused and re-enabled later with safeguards. The call prevented further drawdowns during peak volatility.”

Describe a situation where your work directly impacted financial outcomes.

This question targets business impact and the ability to tie ML work to firm performance. Strong responses anchor on metrics like PnL, cost savings, or efficiency gains rather than abstract improvements.

Sample Answer: “An execution cost model was introduced to optimize order sizing across venues. Over the first quarter, it reduced slippage by 8 basis points, translating to roughly $4M in savings. Results were tracked daily against a control strategy. The model later became part of the default execution stack.”

Together, these coding and algorithms, machine learning theory, and behavioral questions reflect what Citadel looks for in machine learning engineers: the ability to write efficient, production-ready code, make sound modeling decisions under uncertainty, and clearly connect technical work to business outcomes. To go deeper, Interview Query’s question bank offers realistic, company-aligned practice that reflects the level of rigor and decision-making expected by interviewers.

How to Prepare for a Citadel Machine Learning Engineer Interview

Preparing for a Citadel machine learning engineer interview is less about memorizing finance trivia and more about demonstrating disciplined engineering judgment under real-world constraints. Citadel looks for candidates who can reason precisely, move quickly, and take ownership of high-impact systems, often with incomplete information and tight deadlines.

Master algorithmic thinking in constrained environments. Expect problems where brute force fails quickly. Focus on single-pass algorithms, rolling-window computations, and memory-efficient data structures that mirror streaming market data. During practice, explicitly state time and space complexity and explain how your solution scales as data velocity increases. Citadel interviewers care deeply about correctness and complexity, so always be ready to justify your choices.

Tip: When practicing, simulate “live” interviews by talking through tradeoffs aloud. Interview Query’s Data Structures and Algorithms Interview learning path is especially useful for pressure-testing complexity reasoning under realistic time constraints.

Treat machine learning as a decision system, not just a model. Go beyond knowing formulas by practicing how you’d choose models, metrics, and thresholds under asymmetric risk. Be ready to explain how you would detect feature drift, model decay, or regime changes, along with what action you’d take when performance degrades in production.

Tip: Frame every machine learning-related answer around business consequences (false positives vs. false negatives, latency vs. accuracy). You can use Interview Query’s real-world ML challenges to practice articulating these tradeoffs clearly and concisely.
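
As an illustration of feature drift detection, the sketch below computes a population stability index (PSI) between a reference sample and a recent sample of one feature. PSI is one of several possible drift measures and is chosen here as an assumption; the equal-width binning, the smoothing constant, and any alert threshold you pair it with are likewise illustrative choices.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two samples, using equal-width bins fitted on the reference sample."""
    low, high = min(reference), max(reference)
    width = (high - low) / n_bins or 1.0  # fall back to 1.0 if the reference is constant

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            index = int((v - low) / width)
            # Clamp so values outside the reference range land in the edge bins.
            counts[min(max(index, 0), n_bins - 1)] += 1
        # Smooth empty bins so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref = bin_fractions(reference)
    cur = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


reference = [i / 100 for i in range(100)]      # hypothetical training-time feature values
shifted = [0.5 + i / 200 for i in range(100)]  # hypothetical recent values after a shift

print(population_stability_index(reference, reference))  # 0.0: identical distributions
print(population_stability_index(reference, shifted))    # large value: clear drift
```

In an interview answer, a metric like this is only the detection half; the other half is stating what happens when it fires, such as alerting, falling back to a simpler model, or triggering retraining.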

Develop intuition for low-latency ML system design. Citadel values engineers who think end-to-end. Data ingestion, feature pipelines, inference speed, and monitoring all matter. When practicing system design, anchor your answers in concrete constraints like millisecond latency budgets, failover strategies, and real-time observability.

Tip: Practice system design with a stopwatch and explicit constraints. Interview Query’s question bank has ML system design prompts to help train you in scoping solutions quickly without overengineering.

Prepare stories that show ownership and financial impact. Behavioral questions at Citadel often probe how you respond when things break or stakes are high. Rehearse examples where you owned a decision end to end, quantified impact (PnL, risk reduction, latency improvement), and took accountability for outcomes.

Tip: Write your stories using a metrics-first framework (problem → action → measurable outcome). Then, refine them through behavioral mock interviews on Interview Query to get peer feedback and ensure clarity under pressure.

To prepare with the same rigor Citadel expects, explore Interview Query’s ML Engineering 50 study plan, which walks through high-signal coding problems, ML theory, system design, and behavioral scenarios modeled after real-world interviews. It’s designed to help you build the speed, depth, and confidence Citadel demands from its candidates.

What Does a Citadel Machine Learning Engineer Do?

The Citadel machine learning engineer role sits at the core of how quantitative ideas become production systems. This role goes beyond support and experimentation. Machine learning engineers build, deploy, and maintain models that influence trading signals, execution strategies, portfolio construction, and real-time risk controls.

The work is inherently production-focused. Models are deployed into environments where latency is measured in milliseconds, data distributions shift constantly, and errors have immediate financial consequences. As a result, success at Citadel depends as much on engineering discipline and systems intuition as it does on statistical sophistication.

While responsibilities vary by team, most Citadel ML engineers spend their time on some combination of:

- Designing end-to-end ML pipelines

- Building low-latency inference systems

- Evaluating models under realistic conditions

- Monitoring, retraining, and hardening models in production

- Collaborating with quantitative researchers and traders

Citadel’s culture is shaped by the scale and competitiveness of its trading operations. Compared to many peer firms, the environment is faster, more feedback-driven, and more tightly aligned with real-world outcomes. Engineers are expected to own systems end to end, which makes ownership and measurable impact key indicators for advancement and compensation.

Feedback is direct and frequent, and teams benefit from deep capital, proprietary data, and strong infrastructure. Overall, Citadel values engineers who can balance innovation with discipline and who understand that robustness often matters more than marginal accuracy gains.

To learn more about how the role compares with other cross-functional roles and teams within Citadel’s financial ecosystem, explore the comprehensive Citadel company interview guide on Interview Query.

FAQs

How long does the Citadel interview process take?

Most candidates complete the full interview loop within four to six weeks. Timelines can move faster for experienced candidates or urgent team needs, but the overall structure remains consistent.

What background does Citadel look for in machine learning engineers?

Citadel hires candidates with strong foundations in algorithms, statistics, and machine learning, combined with production engineering experience. Advanced degrees are common but not required. Depth and rigor matter more than formal credentials.

Do I need finance or trading experience to pass the interview?

No prior finance experience is required. However, candidates are expected to reason clearly about uncertainty, risk, and trade-offs. Interviewers evaluate whether you can adapt machine learning techniques to high-stakes decision-making environments.

How technical are the interviews compared to big technology companies?

Citadel interviews are generally more technically demanding. Expect deeper probing into correctness, efficiency, and failure modes, with less tolerance for vague or high-level answers.

Is relocation required for Citadel machine learning engineers?

Most roles are based in core hubs such as New York, Chicago, or Miami. Relocation support is typically available, and location expectations are discussed early in the recruiter screen.

What differentiates strong candidates in the final rounds?

Top candidates demonstrate disciplined thinking, clear communication under pressure, and strong ownership of past work. Interviewers look for engineers who can operate independently and make sound decisions when stakes are high.

Become a Citadel Machine Learning Engineer with Interview Query

Overall, success in the Citadel machine learning engineer interview requires more than strong models or clean code. It demands disciplined reasoning, production-minded thinking, and the ability to perform under sustained pressure. With the right preparation strategy and a clear understanding of what interviewers value, the process becomes manageable and predictable.

Approach your preparation with intention using Interview Query’s prep resources. Explore the question bank to prepare for every stage of the loop, and supplement it with the ML Engineering 50 study plan for a curated set of ML problems, from algorithms to MLOps and training pipelines. Real-time mock interviews on Interview Query can also help you prepare for Citadel with confidence through real-world scenarios and peer feedback.
