How Kshitij Kumar Passed Meta’s Technical Screen with SQL and Python Practice

Performing Under Pressure

For data professional Kshitij Kumar, the hardest part of technical interviews wasn’t the difficulty of questions, but the pace. He realized this midway through his preparation for data roles at Meta. He wasn’t struggling with fundamentals, as SQL queries, Python syntax, and data manipulation were all familiar territory. What he struggled with was executing quickly and accurately within strict time limits, especially as he was based in India and had to navigate late-night interviews.

“It doesn’t matter how easy the question is. When the clock is running for 25 minutes, it’s really hard,” Kshitij says, describing how even straightforward problems became challenging under pressure.

Wanting to bridge this execution gap, Kshitij used Interview Query to simulate real interview conditions with timed SQL and Python practice. This focused effort eventually led to Kshitij passing Meta’s technical screen and advancing to the full interview loop.

When Technical Knowledge Isn’t Enough for Interviews

Kshitij came from a technical background and had consistently worked in data-heavy environments. But over time, as he prepared for top-tier roles, he noticed a disconnect between common study methods and actual interviews. For instance, interviews compressed real-world thinking into artificial constraints, such as short time windows for parsing complex prompts and writing efficient code.

“In real life, you’re working with lists, dictionaries, and data manipulation,” he explains. “Why would they ask DFS or BFS if you’re never going to use that?”

That disconnect forced him to rethink how he was preparing. Instead of focusing on theory, he needed to prioritize applied data skills, the ones that interviews at top companies like Meta actually test.

Meta’s Screening: Where Speed Becomes the Bottleneck

Meta’s technical screening made the gap impossible to ignore. The format was unforgiving: two back-to-back rounds, one focused on SQL and the other on Python, each lasting about 25 minutes. While the questions weren’t necessarily complex, they were dense, characterized by long descriptions, multiple constraints, and large test cases.

“You get seven to eight lines of information. If you spend three minutes just reading, you’re left with four minutes to solve the problem,” Kshitij says, describing how much of the clock was gone before he had written any code.

Since the challenge wasn’t just about solving problems, he needed a new strategy for meeting Meta’s expectations for speed without sacrificing correctness.

This high-pressure situation meant he had to:

- Filter out irrelevant details quickly

- Identify patterns under pressure

- Write efficient, minimal code

Without practicing in that exact format, even strong candidates like Kshitij could fall behind.

The Turning Point for Kshitij: Practicing Like It’s the Real Interview

Realizing that no amount of theoretical knowledge would be enough for Meta’s technical interviews, Kshitij needed to structure his preparation accordingly.

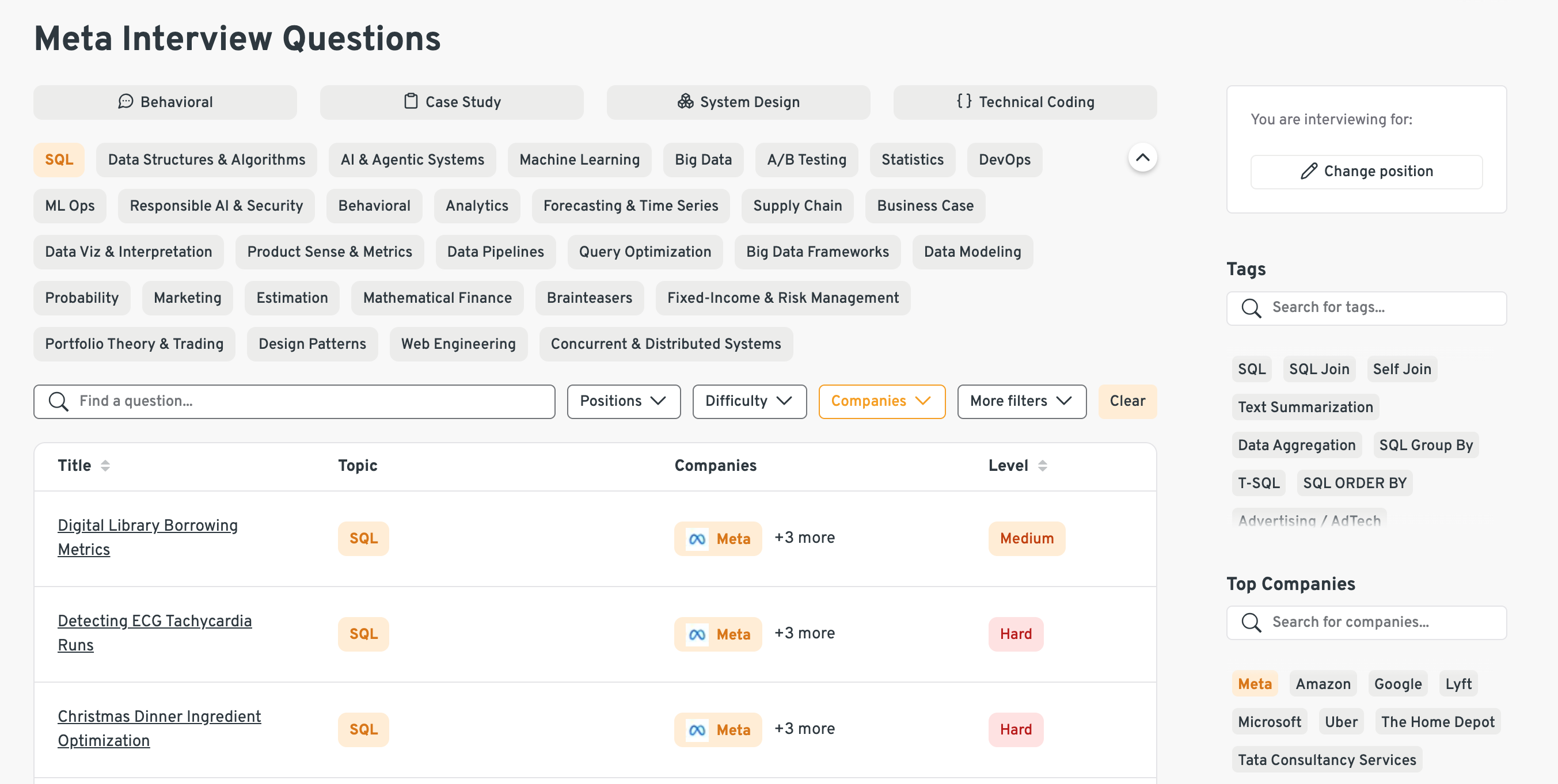

Instead of moving randomly between topics, he began using Interview Query as a filter.

He used Interview Query’s question bank, which is based on real interview experiences, to focus only on questions that resembled Meta’s format: multi-step prompts, realistic datasets, and problems that required both reading and coding under time pressure. If a question didn’t reflect that structure, he skipped it. That kept his prep centered on SQL and Python questions that simulated both the pressure and the technical difficulty of Meta interviews.

Practicing SQL With Real Schemas

Kshitij stopped doing standalone SQL questions and shifted to schema-based problems from Interview Query’s question bank.

He worked through 20 to 30 questions built around the same types of datasets he kept seeing:

- Authors, books, and transactions

- Event logs and user activity

- Normalized relational tables

Instead of solving each problem once and moving on, he reused the same schemas across multiple questions. That way, he wasn’t re-learning table structures every time.

“These schemas felt familiar. Once you understand the schema, you can write queries much faster,” he says, explaining how familiarity reduced the time spent decoding the problem.

The more he practiced, the better he recognized patterns across questions and moved faster when working with joins, aggregations, and filtering logic.
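To make the join, aggregate, and filter pattern concrete, here is a minimal sketch that runs a query against an in-memory SQLite database from Python. The table names, column names, and sample rows are assumptions made up for illustration; they are not taken from Interview Query’s question bank or from Meta’s interviews.

```python
import sqlite3

# Hypothetical schema and data: names and values are illustrative only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (author_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE books (book_id INTEGER PRIMARY KEY, author_id INTEGER,
                        title TEXT, price REAL);
    CREATE TABLE transactions (txn_id INTEGER PRIMARY KEY, book_id INTEGER,
                               quantity INTEGER);

    INSERT INTO authors VALUES (1, 'Asha'), (2, 'Ben');
    INSERT INTO books VALUES (10, 1, 'Joins', 20.0),
                             (11, 1, 'Windows', 30.0),
                             (12, 2, 'Indexes', 15.0);
    INSERT INTO transactions VALUES (100, 10, 2), (101, 11, 1),
                                    (102, 12, 4), (103, 10, 1);
""")

# Revenue per author, counting only transactions of two or more copies:
# two joins, one filter, one aggregation.
REVENUE_BY_AUTHOR = """
    SELECT a.name, SUM(b.price * t.quantity) AS revenue
    FROM authors AS a
    JOIN books AS b ON b.author_id = a.author_id
    JOIN transactions AS t ON t.book_id = b.book_id
    WHERE t.quantity >= 2
    GROUP BY a.author_id, a.name
    ORDER BY revenue DESC
"""

rows = conn.execute(REVENUE_BY_AUTHOR).fetchall()
for name, revenue in rows:
    print(name, revenue)
```

Once the three tables are familiar, a follow-up question on the same schema (say, the best-selling title per author) mostly means changing the grouping and the filter rather than re-reading the table definitions.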

Focusing on Most Asked Python Problems

For Python, Kshitij stopped practicing broad problem sets and narrowed his scope to patterns he saw repeatedly in Interview Query questions.

He filtered for Python problems involving:

- Dictionaries for counting and grouping

- Sets for validation and deduplication

- Nested lists and JSON-like structures
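As a rough illustration of these three patterns, the sketch below applies each of them to a small list of JSON-like records. The field names and the allowed-action vocabulary are assumptions invented for this example, not actual interview questions.

```python
from collections import Counter, defaultdict

# Hypothetical JSON-like event records; field names are illustrative.
events = [
    {"user": "u1", "action": "view", "tags": ["home", "promo"]},
    {"user": "u2", "action": "click", "tags": ["promo"]},
    {"user": "u1", "action": "click", "tags": []},
    {"user": "u3", "action": "purge", "tags": ["home"]},
]

# Dictionary for counting: number of events per action.
action_counts = Counter(e["action"] for e in events)

# Dictionary for grouping: actions per user, in input order.
actions_by_user = defaultdict(list)
for e in events:
    actions_by_user[e["user"]].append(e["action"])

# Set for validation: actions that fall outside an allowed vocabulary.
ALLOWED_ACTIONS = {"view", "click"}
invalid_actions = {e["action"] for e in events} - ALLOWED_ACTIONS

# Set for deduplication: unique tags across the nested lists.
unique_tags = {tag for e in events for tag in e["tags"]}
```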

When solving, he imposed an additional constraint on himself: after getting a solution working, he had to rewrite it in fewer lines.

“If you write ten lines of code, it will take time. If you can reduce it to two lines, you’re saving half your time,” he says, highlighting how efficiency became a core part of his strategy.

Over time, this became a habit. Before moving on, he would:

- Replace loops with list or dictionary comprehensions

- Collapse multi-step logic into fewer operations

- Remove intermediate variables unless necessary

This trained him to default to implementations that were quicker to write during the interview.
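The rewrite habit described above might look like the following sketch: a first working version with an explicit loop and intermediate variables, then a condensed version using a dictionary comprehension. The order records and function names are hypothetical and exist only to show the before and after.

```python
# Hypothetical order records; field names are illustrative.
orders = [
    {"id": "a1", "amount": 12.5, "status": "paid"},
    {"id": "a2", "amount": 0.0, "status": "void"},
    {"id": "a3", "amount": 7.0, "status": "paid"},
]

def paid_amounts_loop(orders):
    """First working version: explicit loop with intermediate variables."""
    result = {}
    for order in orders:
        status = order["status"]
        if status == "paid":
            order_id = order["id"]
            amount = order["amount"]
            result[order_id] = amount
    return result

def paid_amounts_short(orders):
    """Condensed rewrite: one dictionary comprehension, same result."""
    return {o["id"]: o["amount"] for o in orders if o["status"] == "paid"}
```

Both functions return the same mapping. The short version saves typing time rather than run time, so the rewrite is only worth it when the result stays readable.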

Turning Practice Into Simulation

The biggest shift came from how he used time. Kshitij began treating Interview Query’s question bank like a mock interview environment rather than a study tool.

Every question became a timed drill:

- Strict time limits

- No pauses to look things up

- Focus on completing within interview constraints

He also changed how he measured progress. Instead of tracking how many questions he solved correctly, he tracked:

- How quickly he could start writing code

- Whether he finished within the time limit

- How much time he spent reading vs. solving

If a problem took too long to understand, he reviewed the solution and revisited it later, forcing himself to parse it faster the second time.

Over repeated sessions, this created a feedback loop, ultimately changing how he approached new problems. He began recognizing familiar structures, like when a question was really a join-and-aggregate task in SQL or a dictionary-counting problem in Python, within the first few lines.

As a result, he spent less time decoding prompts and more time writing solutions. That shift directly improved his ability to start quickly, stay within time limits, and make decisions under pressure during the actual interview.

Inside the Meta Interview: How Practice Changed the Interview

When Kshitij entered the actual interview, the structure no longer felt new. He encountered questions that closely mirrored his practice. One Python problem involved maximizing a score by selecting from weighted categories. The logic wasn’t complicated, but it required quick pattern recognition.

“You just have to quickly look into it and find the pattern,” he says, emphasizing that speed mattered more than complexity.

Another question focused on validating event logs, ensuring that actions like returning an item didn’t occur before a checkout event. It reflected the real-world scenarios he was used to seeing, such as production data issues and business constraints. Even the way interviewers interacted matched his expectations.

“They help a lot, but the test cases are huge. You can’t understand the problem just by looking at the data. You have to understand the logic first,” he says, noting that clarity of thought mattered as much as coding ability.
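The event-log validation question can be sketched along the following lines. The exact prompt is not given here, so the record format and the rules are assumptions: each event has an item and an action, the log is already sorted by time, and a return is valid only if that item is currently checked out.

```python
def find_invalid_returns(events):
    """Return the 'return' events that have no open 'checkout' for their item.

    Assumes `events` is already sorted by time (an assumption of this sketch).
    """
    checked_out = set()  # items currently checked out
    invalid = []
    for event in events:
        item, action = event["item"], event["action"]
        if action == "checkout":
            checked_out.add(item)
        elif action == "return":
            if item in checked_out:
                checked_out.remove(item)
            else:
                invalid.append(event)
    return invalid

# Hypothetical log: item B is returned before any checkout,
# and item A is returned twice after a single checkout.
log = [
    {"t": 1, "item": "A", "action": "checkout"},
    {"t": 2, "item": "B", "action": "return"},
    {"t": 3, "item": "A", "action": "return"},
    {"t": 4, "item": "A", "action": "return"},
    {"t": 5, "item": "B", "action": "checkout"},
]

invalid_times = [e["t"] for e in find_invalid_returns(log)]
```

Working out the rule first (a return needs an open checkout) is what keeps the code to a single pass with one set, no matter how large the test cases are.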

The alignment between preparation and interview made a difference.

To see how this plays out in a real Meta-style interview setting, including how candidates think through ambiguous problems and communicate under pressure, watch the breakdown below:

In this example, the interview follows a familiar Meta pattern. You start with a loosely defined problem, clarify assumptions with the interviewer, and then iteratively refine your solution into something scalable. The candidate is expected to talk through trade-offs like time vs. space complexity, handle edge cases as they arise, and respond to hints without losing momentum.

What stands out is how the interviewer nudges rather than leads, similar to what Kshitij experienced. That’s exactly why practicing this format, and not just individual questions, made such a difference in his performance.

The Result: Advancing to the Full Interview Loop

Kshitij passed Meta’s technical screening: an elimination round where many candidates fall short, not because of difficulty, but because of time.

“It’s an elimination round. If you don’t solve enough questions, you’re out,” he says, attesting to how passing the round felt like a breakthrough.

More than validating his approach, this outcome gave him the confidence to move forward into the full interview loop, which included product sense, data modeling, and additional technical rounds. Knowing what lay ahead also motivated Kshitij to take more time before scheduling the next stage, this time with a preparation strategy that had already proven effective.

Why Interview Query Worked

Kshitij didn’t treat Interview Query as a passive resource. He used it to structure his preparation and align it with Meta’s interview format.

What made the difference:

- Practicing on schema-based SQL problems: Working with structured datasets helped him quickly identify relationships and write queries faster during interviews.

- Focusing on high-frequency Python patterns: By narrowing his scope to dictionaries, sets, and common patterns, he eliminated unnecessary complexity and implemented faster under pressure.

- Using timed drills: Simulating 25-minute constraints trained him to manage time and make decisions more effectively during real interviews.

- Prioritizing relevant questions: Instead of covering everything, he focused on problems aligned with actual data interview formats.

- Repeating realistic scenarios: By combining Interview Query’s scenario-based questions with timed practice, he was able to internalize the pace and format of real interviews.

What He’d Do Differently (and What You Should Take Away)

Looking back, Kshitij’s approach became clearer over time. If he were to start again, his focus would be sharper from the beginning:

- Practice under time constraints from day one, not at the end

- Focus on data manipulation and SQL schemas over abstract algorithms

- Learn to identify and ignore irrelevant details quickly

- Optimize for fewer lines of code, not more

- Treat every practice session like a real interview

“The more you practice, the better you perform,” he says. But in his case, the improvement came not from mere repetition but from practicing the right way.

Prepare Confidently with Interview Query

Kshitij Kumar’s experience reflects a common reality in technical interviews: strong fundamentals aren’t enough if they can’t be applied quickly. What ultimately changed his trajectory was aligning his preparation with how interviews actually work.

By practicing under realistic conditions, focusing on relevant skills, and building speed through repetition, he turned a point of weakness into a competitive advantage, eventually earning his place in Meta’s interview process. If you’re also interviewing for data roles and want similar results, the strategy is clear. With Interview Query, you can:

- Practice with realistic SQL schemas

- Focus on high-impact Python patterns

- Build speed through timed drills

- Simulate actual interview pressure

Practice realistically. Practice consistently. Practice under pressure. Start with Interview Query today.