Karat’s 2026 Survey Shows Why Technical Interviews Now Simulate Real Work

Technical Interviews Are Changing in 2026

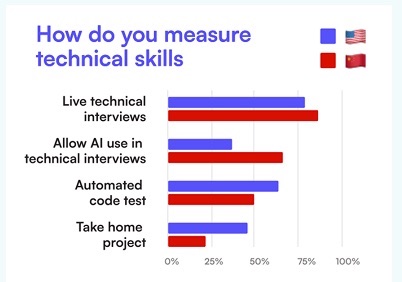

Technical interviews are getting harder to bluff, even as AI makes it easier to generate passable answers. In Karat’s 2026 survey of 400 engineering leaders, 71% said AI is making technical skills harder to assess, a sign that the old methods of screening for talent are losing predictive power.

That pressure is already changing the format. Karat argues that take-homes and automated code tests degrade fastest when candidates can lean on AI, while live interviews gain value because they show how someone reasons, makes decisions, and uses tools in real time.

CoderPad’s recent data points the same way. In its State of Tech Hiring 2026 coverage, 82% of developers said GenAI is useful, up from 76% a year earlier, and 54% said they would see a measurable productivity drop if AI tools disappeared.

If AI is now part of the job, the interview can no longer stop at whether a candidate produced an answer. It has to ask whether they understood it, improved it, and knew where it could break.

Why Technical Interviews Now Reward Explanation over Output

The underlying shift is simple. AI helps more candidates reach something that looks plausible, which makes the final artifact less informative on its own.

Source: Karat: Engineering Interview Trends in 2026

Karat’s survey found that 62% of organizations still prohibit AI in technical interviews, even though leaders estimate that more than half of candidates use it anyway. CoderPad reports a similarly split market, with 34% of hiring teams banning AI in assessments and 46% allowing it with constraints. The inconsistency matters less than the direction of travel. Whether companies allow AI or try to restrict it, they are being pushed toward formats that reveal process, not just output.

That is also why debugging and explanation are moving to the center. In an April framework drawn from 40,000-plus AI interviews, CoderPad said the strongest signal when AI is allowed is whether a candidate catches and fixes AI mistakes. That is not a side skill anymore.

In addition, Lightrun’s 2026 State of AI-Powered Engineering Report, released last week, found that 43% of AI-generated code changes still require manual debugging in production even after passing QA or staging. In other words, the judgment needed to catch those failures is becoming more valuable.

Real Interview Loops Are Now Meant to Simulate Jobs

This is not theoretical. Recent approved interview writeups on Interview Query show companies already leaning into job-simulation formats.

One candidate described an online assessment that looked less like a standard coding test and more like a code review, with the real challenge being to spot uncommon issues, improve complexity, and explain the trade-offs behind the fixes.

Another described a data science onsite built around a case study readout, a product metrics round, a collaborative interview with a cross-functional partner, and even an AI assistant sitting silently in the room.

A third said a take-home explicitly allowed ChatGPT, but the real evaluation was whether the candidate could guide the tool, interpret its output, and catch what it missed.

The same pattern is showing up in Interview Query coaching calls. Recent sessions for senior analytics candidates emphasized storytelling, trade-off decisions, business goals, guardrail metrics, and structured frameworks over memorized answers.

That lines up with what companies seem to want from modern technical interviews: not just someone who can produce analysis, but someone who can explain what matters, where the risk is, and how they would make a decision under ambiguity.

The Shift Extends to Data Roles

It is tempting to read all of this as a software engineering story. But the pattern maps cleanly onto data hiring.

Data roles have always involved messy, semi-structured work, such as:

- Defining success metrics

- Judging experiment design

- Making trade-offs between speed and rigor

- Translating findings for product, marketing, or leadership stakeholders

Those are exactly the tasks where AI can accelerate first drafts without owning the final decision.

Recent Interview Query coaching conversations reflect that pressure as well. Candidates preparing for roles at companies like Meta, Stripe, Netflix, and Uber are being pushed on:

- ROI framing

- Product prioritization

- Causal reasoning

- Cultural fit

- Communication with non-technical partners

In many loops, those are no longer peripheral rounds. They are the rounds that decide whether a candidate looks senior enough to hire.

This helps explain why data interviews increasingly mix SQL or analytics exercises with collaborative rounds, behavioral depth, and case-study discussion. The employer is not just asking, “Can this person solve the prompt?” The employer is asking, “Can this person solve the prompt in the same way they would need to operate on the job?”

What Candidates Should Practice Now

The best response is not to avoid AI. It is to build the skills that still show up when AI is available.

First, candidates need to frame the problem before reaching for a tool. Stronger answers start with constraints, success criteria, and failure modes, not with a prompt window.

Second, they need to narrate trade-offs clearly. In both coaching sessions and approved interview writeups, candidates consistently run into follow-ups about why they chose a metric, what risk a launch creates, or what assumption could invalidate an analysis.

Third, they need to treat AI output as untrusted until it is checked. The candidate who can calmly say what is fragile, what needs validation, and what should not ship yet now looks much stronger than the candidate who arrives at a fast but opaque answer.

Finally, they need realistic speaking reps. Mock interviews and coaching fit this moment because they force candidates to defend a line of reasoning, not just submit a solution file.

The Bottom Line

AI is not making technical interviews easier in the way many candidates hoped. It is making them more demanding in the dimensions that are hardest to fake: explanation, debugging, prioritization, and judgment under ambiguity.

The market has not fully settled on one standard format yet. Some companies still ban AI, others allow it, and many are somewhere in the middle. But as AI lowers the cost of generating decent first-pass work, technical interviews are shifting toward real-time evaluation of how candidates think through imperfect information.

That is especially relevant for data and analytics candidates. The next wave of interview loops is likely to look less like isolated test taking and more like live job simulation: case readouts, collaborative problem solving, output validation, and explicit discussion of trade-offs. Candidates who prepare for that format early should be better positioned as the hiring bar keeps moving.