PwC’s AI Study Shows Why Business Judgment in Data Interviews Matters More

A New Hiring Signal

PwC’s new 2026 AI Performance Study offers a useful reality check for anyone assuming AI hiring is mostly about tool fluency. It also helps explain why business judgment in data interviews is becoming more valuable.

In a global study of 1,217 senior executives across 25 sectors, PwC found that 74% of AI’s economic value is being captured by just 20% of organizations. The companies pulling ahead are not the ones simply adding AI everywhere. They are the ones using it to chase growth, redesign workflows, and make more decisions inside clear guardrails.

This finding matters because hiring follows value creation. If companies care more about new revenue, better product decisions, and safer automation than about flashy demos, interview loops tend to screen for the same things. The candidate who can connect analysis to a business decision often looks stronger than one who can only talk about models.

Coaching sessions and interview experience submissions on Interview Query point the same way. In data and analytics loops, candidates are still being tested on SQL, experimentation, metrics, product judgment, and narrative clarity. AI shows up across interviews, but it sits on top of business reasoning rather than replacing it.

Why Business Judgment in Data Interviews Matters

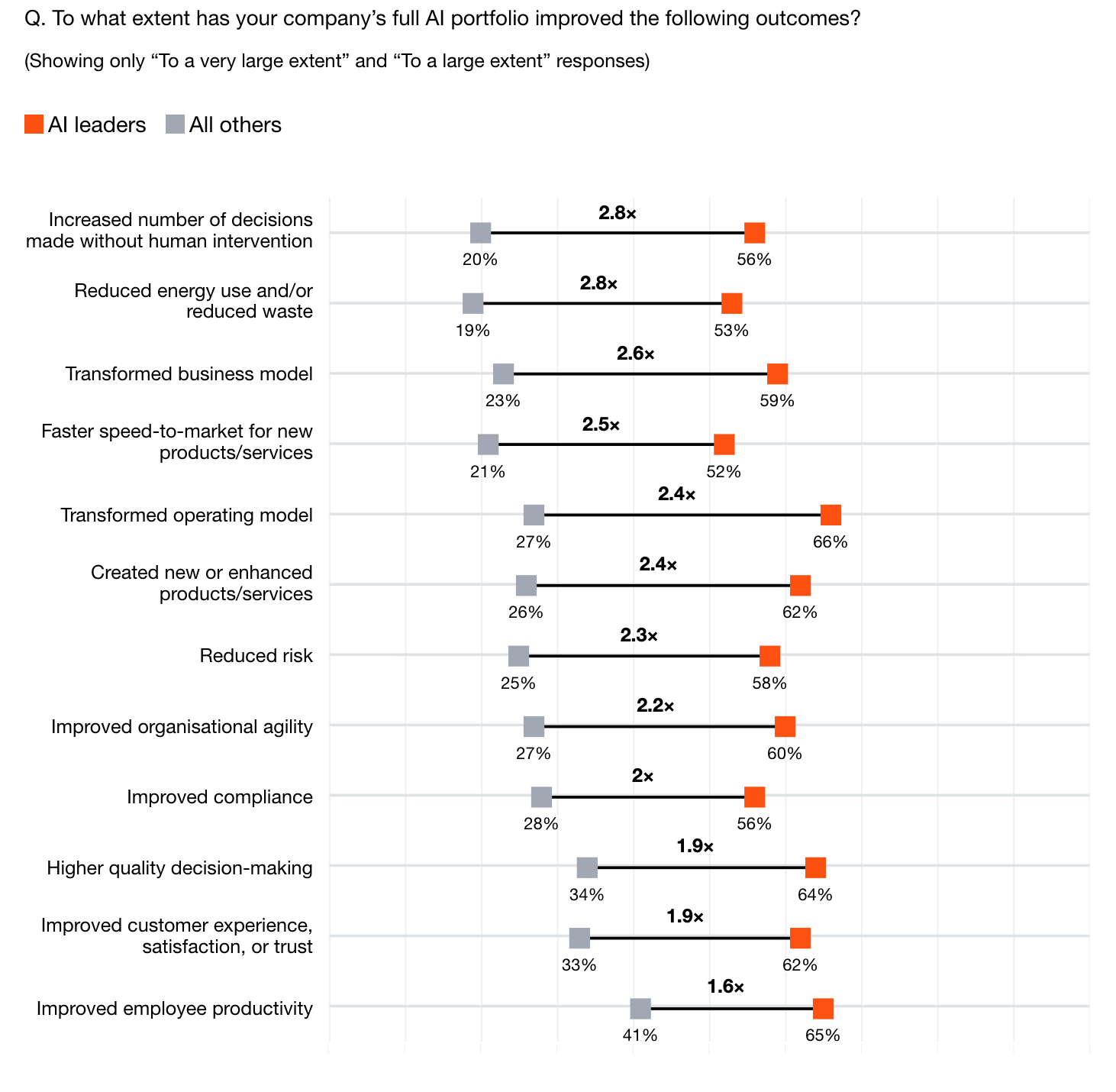

The most important line in PwC’s report is not about automation, but growth. PwC found that companies with the strongest AI performance were 2.6 times more likely than peers to say AI improved their ability to reshape business models, and two to three times more likely to use AI to identify new growth opportunities. In PwC’s analysis, capturing growth from industry convergence mattered more in driving financial performance than efficiency gains alone.

That shifts the hiring signal. When companies want analysts, data scientists, and product thinkers who can help find growth, the interview cannot stop at syntax or model trivia. It has to test whether a candidate can choose the right KPI, explain the tradeoff between speed and rigor, define sensible guardrails, and show how a recommendation changes a real business decision.

Source: PwC’s AI Performance Study

PwC’s other findings push the same way. The AI leaders were:

- 1.8 times more likely to use AI for multiple tasks within guardrails

- 1.9 times more likely to operate in autonomous and self-optimizing ways

- 1.9 times more likely to improve customer experience, satisfaction, or trust

- 2.8 times more likely to increase the number of decisions made without human intervention

That is exactly the kind of environment where weak judgment becomes expensive, and where data scientists and analysts who direct AI toward reinvention and growth become essential.

Recent Interview Experiences Demonstrate This Trend

The latest annotated interview experiences on Interview Query already reflect that broader bar. A recently published senior data science loop at a consumer fintech combined SQL joins, behavioral questions about influencing strategy, and a product-heavy round before the process moved forward. A recent senior scientist process at a ride-sharing company went deep on A/B testing failure modes, business use cases, and what to do when marketplace metrics conflict.

Another recent analytics leadership process went beyond analysis and required a full strategy presentation for stakeholders. Lastly, a recent data analyst loop at a large e-commerce company included a bar-raiser-style round and a strong emphasis on tying past work to business impact. None of that suggests companies are moving away from technical skill. It suggests they increasingly want technical skill plus decision quality.

In other words, business judgment in data interviews does not mean vague storytelling or generic leadership talk. It usually shows up in very concrete ways: metric selection, experiment design, tradeoff framing, prioritization, and the ability to explain why one recommendation is more credible than another.

The Shift from Syntax to Framing

Recent Interview Query coaching summaries show a similar change in emphasis. In one April coaching session for a marketplace role, the work centered on price elasticity, endogeneity, guardrail metrics, and when switchback testing makes more sense than a standard experiment. In another, a candidate preparing for a product data science loop still needed help on anti-joins and conditional logic, but the bigger advice was to explain the approach before coding so the interviewer could see the reasoning.
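To make the "explain the approach before coding" advice concrete, here is a minimal sketch of an anti-join followed by conditional logic, written in plain Python rather than SQL. The tables, column names, and segment labels are hypothetical and chosen only for illustration; they do not come from any actual interview question.

```python
# Hypothetical toy data: all signed-up users, and the orders they placed.
users = [
    {"user_id": 1, "plan": "free"},
    {"user_id": 2, "plan": "paid"},
    {"user_id": 3, "plan": "free"},
    {"user_id": 4, "plan": "paid"},
]
orders = [
    {"order_id": 10, "user_id": 2},
    {"order_id": 11, "user_id": 2},
    {"order_id": 12, "user_id": 3},
]


def users_without_orders(users, orders):
    """Anti-join: keep users whose user_id never appears in orders."""
    # Plan, stated before coding: collect the keys on the right side,
    # then keep only the left rows whose key is NOT in that set.
    ordered_ids = {o["user_id"] for o in orders}
    return [u for u in users if u["user_id"] not in ordered_ids]


def outreach_segment(user):
    """Conditional logic, similar in spirit to SQL CASE WHEN."""
    # Assumed business rule: a paying user with no orders is a churn risk.
    if user["plan"] == "paid":
        return "priority_outreach"
    return "onboarding_nudge"


inactive = users_without_orders(users, orders)
labeled = [(u["user_id"], outreach_segment(u)) for u in inactive]
print(labeled)
```

The comment that states the plan is the part an interviewer can follow before any code runs, and the segment labels tie the query back to a decision: who gets contacted, and why.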

A separate recent session for an analytics-heavy role focused on p-values, multicollinearity, Power BI, and how to connect A/B test design back to a business goal. Another debrief, this one from an interview for a customer-facing role, highlighted narrative delivery and cultural fit as real gaps at senior levels. The pattern is hard to miss: coaching time is still spent on technical foundations, but more of the marginal value now comes from framing, prioritization, and communication.

What Candidates Should Do Differently Now

The practical takeaway is not to prepare less technically. It is to pair every technical answer with a business reason.

- When answering SQL or Python questions, explain what decision the analysis supports.

- When proposing a metric, name the guardrail that prevents the wrong local optimization.

- When discussing experiments, say what would change your recommendation, not just what test you would run.

- When using AI tools, show how you verify output quality and where human judgment still matters.

This is especially important for product, analytics, and marketplace roles, where the hardest part of the interview is often deciding what matters, not writing the first query.
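One way to practice the metric, guardrail, and experiment advice above is to write the decision rule down before looking at results. The sketch below is a rough illustration under stated assumptions: it uses a pooled two-proportion z-test, a conversion rate as the primary metric, a refund rate as the guardrail, and thresholds (`alpha`, `max_guardrail_harm`) that are made up for the example. The function names and counts are hypothetical, and a real experiment may call for a different test or decision framework.

```python
import math


def two_proportion_z(success_a, n_a, success_b, n_b):
    """Return (rate_b - rate_a, two-sided p-value) from a pooled z-test."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided tail probability of a standard normal.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, p_value


def recommend(primary, guardrail, alpha=0.05, max_guardrail_harm=0.005):
    """Turn test results into a recommendation.

    primary and guardrail are tuples of
    (control_events, control_n, treatment_events, treatment_n).
    The guardrail is a "bad" rate, so an increase counts as harm.
    """
    lift, p_lift = two_proportion_z(*primary)
    harm, p_harm = two_proportion_z(*guardrail)
    # The guardrail is checked first: a win on the primary metric does
    # not justify shipping if it comes with a reliable increase in harm.
    if harm > max_guardrail_harm and p_harm < alpha:
        return "do not ship: guardrail breached"
    if lift > 0 and p_lift < alpha:
        return "ship"
    return "keep testing: no reliable lift yet"


# Hypothetical counts: conversions, then refunds, out of 10,000 users per arm.
print(recommend(primary=(1000, 10000, 1100, 10000),
                guardrail=(200, 10000, 210, 10000)))
print(recommend(primary=(1000, 10000, 1100, 10000),
                guardrail=(200, 10000, 300, 10000)))
```

The point of the sketch is the ordering of the checks, not the specific test. It spells out what would change the recommendation, which is the part of the answer interviewers tend to probe.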

The Bottom Line

PwC’s latest study is nominally about AI returns, but it also says something useful about hiring. The companies getting outsized value from AI are not winning because they bolted on more tools. They are winning because they move beyond pilot mode, connect technology to growth, redesign workflows around it, and build enough trust to automate decisions safely.

The same logic shows up in interviews: technical fundamentals still matter, but they are no longer enough on their own for many data roles. The stronger signal now is whether a candidate can move from analysis to judgment, from metric to decision, and from experiment result to business recommendation. As more companies push AI into real operating workflows, that bar will probably get higher.