AI vs Human Mock Interviews: What Actually Improves Tech Interview Performance?

Introduction: The New Interview Prep Dilemma

According to a Boston Consulting Group survey, 70% of companies using artificial intelligence do so within human resources, with the top use case being talent acquisition. This shift has made tech hiring more competitive than ever: candidate pools are larger, screening filters are automated, and evaluation standards for interviews are changing.

This trend is also captured in Interview Query’s State of Interviewing 2025, which reports that tech companies are leveraging AI to standardize candidate screening, rubrics, and interviews. So, whether you’re targeting data science, software engineering, machine learning, or product roles, hiring has become less forgiving of inconsistency, not just in technical skill but also in structured thinking and communication.

At the same time, tech candidates now have access to AI tools that help optimize preparation. These include AI-powered mock interview platforms that simulate technical and behavioral interviews on demand.

That raises the question: if AI is being used to screen candidates, should you also use AI to prepare?

While mock interviews traditionally meant practicing with a peer, mentor, or coach, candidates can now run dozens of simulated interviews per week with an AI interviewer.

But which approach actually improves performance faster for tech candidates?

To answer that, we need to define what “performance improvement” means in interview prep, in line with changing industry-wide standards. It combines technical and behavioral gains, such as:

- Higher technical accuracy

- Faster problem-solving speed

- Stronger communication clarity

- Clearer answer structure

- Increased confidence under pressure

Improving faster is less about practicing more and more about practicing the right way.

In this guide, we’ll break down exactly how AI interviewers and human mock interviews differ across factors like feedback quality, technical depth, realism, and cost. You’ll see where each approach accelerates improvement, and where it creates blind spots.

More importantly, you’ll learn how to diagnose your specific bottleneck and decide which method will improve your performance fastest and help convert interviews into offers.

How AI Mock Interviews Work

For tech candidates, AI mock interviews can replicate technical and behavioral interviews while compressing the feedback loop. Instead of waiting days between practice sessions, candidates can generate targeted questions, respond in real time, and receive structured evaluation instantly.

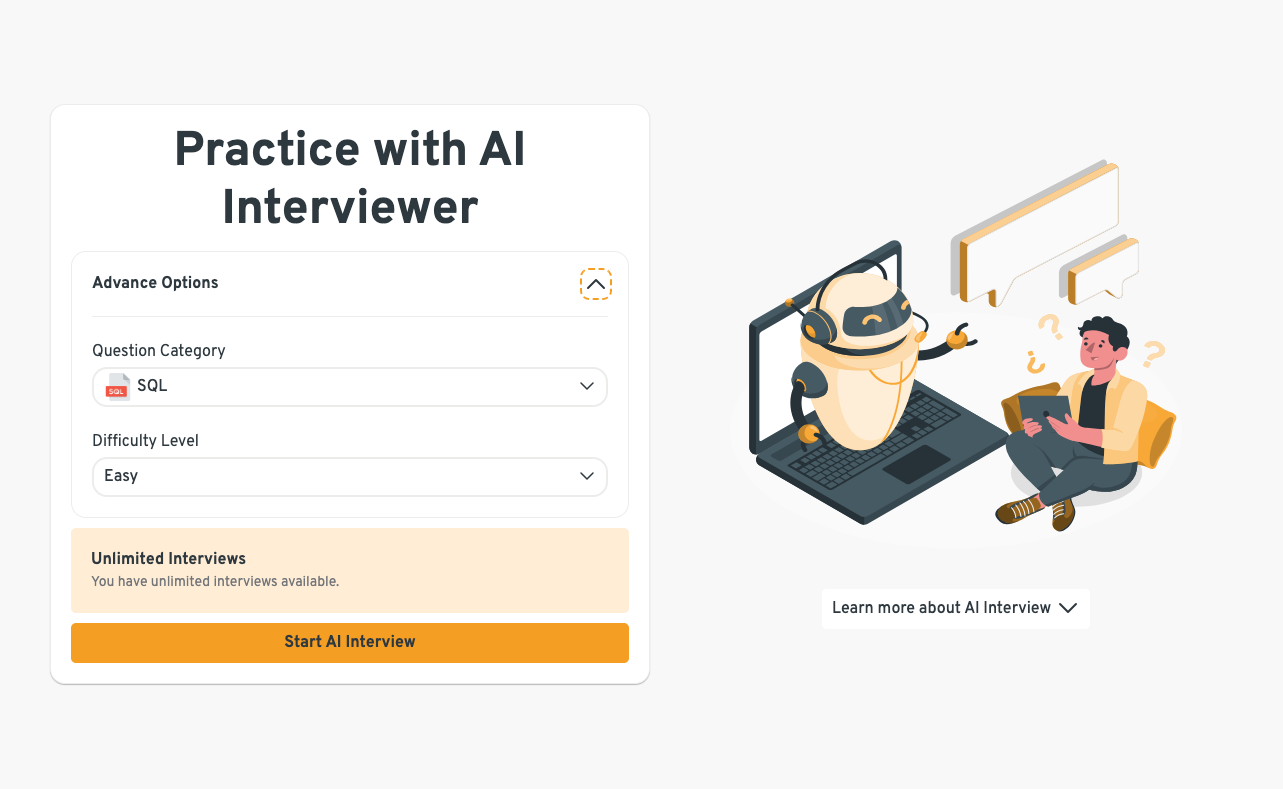

Most AI interview platforms support:

- Behavioral simulations

- Technical drills (SQL, Python, ML theory, system design)

- Timed coding environments

- Instant scores and feedback

Interview Query’s AI Interviewer allows candidates to select question type and difficulty, enabling deliberate practice for specific performance goals.

Typical Use Cases

AI mock interviews are particularly useful for:

- Early-stage prep when candidates are still learning frameworks

- High-volume repetition, such as drilling SQL joins or data structures

- Reducing anxiety by normalizing the interview format

For example, data science candidates can repeatedly practice explaining model evaluation metrics, walking through A/B testing logic, or solving SQL aggregation problems without scheduling a live session.

Where AI Falls Short

However, AI simulations typically lack:

- Adaptive, unpredictable follow-ups

- Social pressure and conversational friction

- Deep probing into ambiguous decisions

- Nuanced feedback on tone, persuasion, or executive presence

These gaps matter more as interviews become conversational, senior-level, or panel-based.

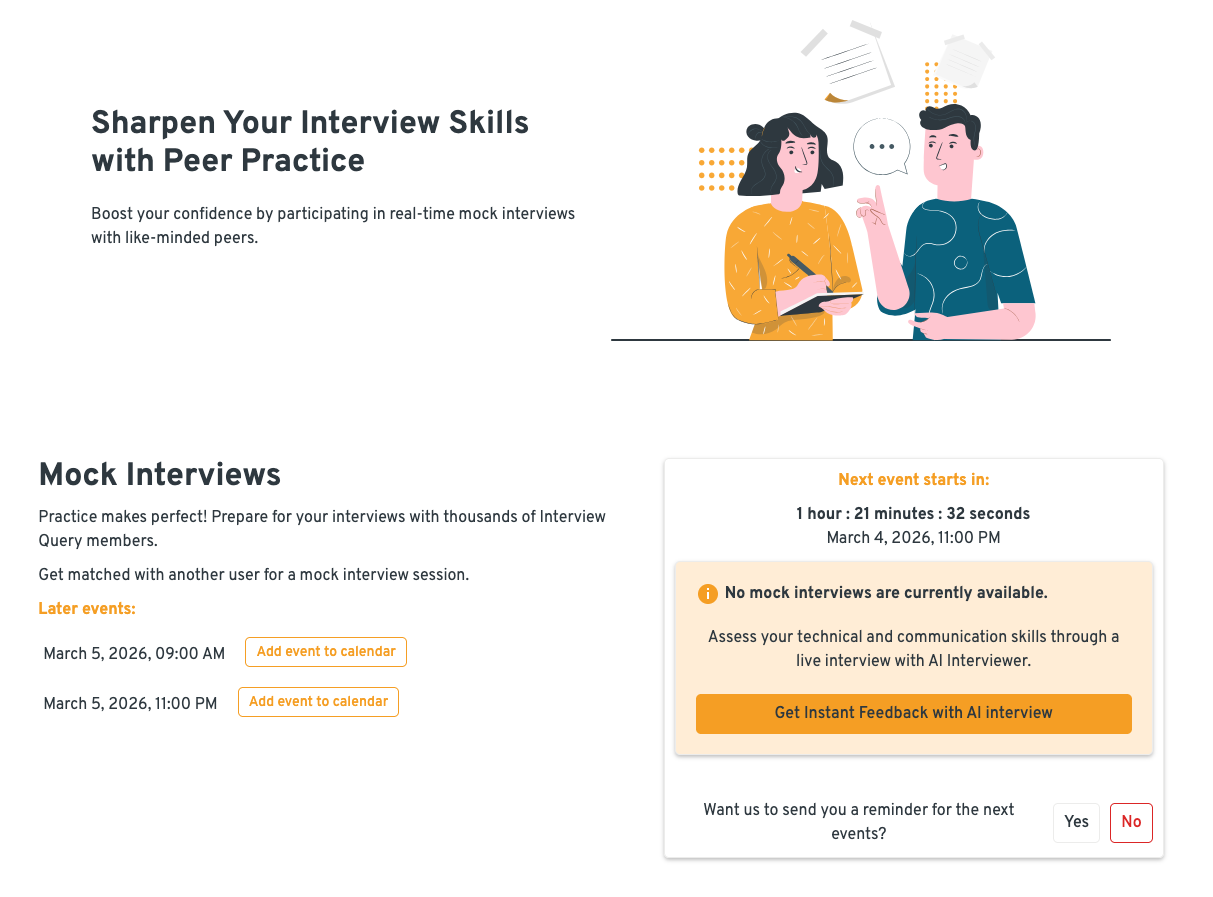

How Human Mock Interviews Work

A human mock interview is a live simulation with a peer, hiring manager, mentor, or professional coach designed to replicate the cognitive and social dynamics of a real interview.

Unlike AI-driven simulations, human mocks introduce variability, ambiguity, and conversational friction, factors that often determine pass/fail decisions in actual loops.

Common Formats

- Live coding with real-time probing

- Case interviews (analytics, product, ML system design)

- Behavioral rounds with follow-ups

At Interview Query, mock interviews focus on replicating realistic interview conditions, including clarifying questions, tradeoff discussions, and peer feedback.

Where Human Mock Interviews Create Leverage

Because human mock interviews capture the dynamics of real interpersonal interaction, they provide:

- Realistic interruptions and ambiguity

- Nuanced feedback on communication

- Social pressure simulation

- Tailored advice based on experience

For example, a hiring manager conducting a mock ML system design interview might challenge tradeoffs in deployment decisions or ask follow-up questions that reveal shallow understanding.

Constraints to Consider

The tradeoffs are structural:

- Scheduling friction

- Lower repetition volume

- Higher cost (especially with experienced coaches)

Because of this, human mocks are rarely the highest-leverage tool for raw repetition, but they optimize realism and depth.

Comparing AI vs. Human Mock Interviews for Performance Improvement

Choosing between AI and human mock interviews is all about leverage. Each method accelerates different parts of interview performance. The comparison below breaks down where each approach creates the most improvement, and where it leaves gaps. Use it to identify which lever will move your performance fastest.

| Category | AI Mock Interview | Human Mock Interview | Improvement Is Faster Using: |
|---|---|---|---|
| Feedback Quality | Instant, structured, rubric-based | Context-aware, personalized, nuanced | AI (iteration speed) / Human (qualitative depth) |
| Volume & Repetition | Unlimited reps, 24/7 access | Limited scheduling | AI |
| Realism & Pressure | Low stress, predictable | Live pressure, interruptions, ambiguity | Human |
| Behavioral Coaching | STAR structure, keyword detection | Tone, presence, authenticity | Human (especially senior roles) |
| Technical Depth | Foundational drills, syntax correction | Edge cases, tradeoffs, probing | AI (fundamentals) / Human (advanced) |
| Bias & Objectivity | Consistent scoring | Variable but holistic | AI (consistency) / Human (realistic) |
| Cost & Accessibility | Subscription, scalable | High-touch, higher cost | AI (budget) / Human (high stakes) |

Since no single method dominates across all dimensions, let’s go through each category to help you match the tool to the performance constraint you’re trying to remove and the goal you’re trying to achieve.

1. Feedback Quality

| AI Interviewer | Human Mock Interviews |
|---|---|
| Structured and consistent | Context-aware and personalized |
| Instant turnaround | Can critique storytelling, tone, and logic gaps |
| Often rubric-based scoring | Provides career-level perspective |

AI accelerates feedback loops because you can iterate quickly. However, humans provide qualitative feedback by recognizing subtler cues and weaknesses that AI may miss, such as defensiveness, lack of clarity, or weak executive presence.

2. Volume & Repetition

| AI Interviewer | Human Mock Interviews |
|---|---|
| Unlimited reps | Limited attempts/sessions |
| Available 24/7 | Require scheduling |
| Ideal for drilling fundamentals | Cannot match high-speed repetition because of human fatigue |

For technical roles, repetition builds muscle memory. Practicing SQL joins, recursion patterns, or model evaluation explanations repeatedly improves fluency. In this category, AI clearly wins.

3. Realism & Pressure Simulation

| AI Interviewer | Human Mock Interviews |
|---|---|
| Low emotional pressure | Simulates real interviewer dynamics |
| Predictable interaction | Introduces interruptions and ambiguity |

Handling pressure is a skill. Candidates who only practice in low-stress environments often struggle during live interviews. Here, human mocks better prepare candidates for real-world unpredictability.

4. Behavioral & Communication Coaching

| AI Interviewer | Human Mock Interviews |
|---|---|
| Can analyze STAR structure | Evaluates authenticity and tone |
| Detects missing keywords | Adjusts based on role seniority |
| Flags vague language | Challenges shallow reflections |

In this category, tool effectiveness may depend on the candidate’s communication goals and seniority.

For instance, junior candidates who aim to better structure their answers may benefit from AI’s ability to analyze the structure and logic of their responses. Those aiming for senior roles especially benefit from human feedback, where leadership communication and strategic thinking are evaluated more holistically.

5. Technical Depth (Data/ML/Engineering Roles)

| AI Interviewer | Human Mock Interviews |
|---|---|
| Excellent for foundational drills | Pushes deeper into edge cases |
| Rapid syntax correction | Tests real-world reasoning |
| Covers broad question banks | Explores tradeoffs under ambiguity |

In other words, AI is efficient for strengthening fundamentals, which can be useful for earlier assessments/rounds. Meanwhile, human interviewers can better simulate advanced, layered discussions that are common further into the loop.

6. Bias & Objectivity

| AI Interviewer | Human Mock Interviews |
|---|---|
| Consistent scoring | Potential subjectivity |
| Less variability between sessions | More holistic interpretation |

Consistency accelerates learning. However, real interviews involve human evaluators, so exposure to variability can also be beneficial.

7. Cost & Accessibility

| AI Interviewer | Human Mock Interviews |
|---|---|
| Can be subscription-based | Professional fees |
| Scalable features | High-cost, but high-impact |

For early-career candidates, AI often offers higher ROI. For senior professionals targeting competitive roles, a few selective sessions with expert coaches may justify the cost.

If you’re a data professional aiming to connect with industry experts for tailored guidance, Interview Query offers 1:1 coaching sessions at different prices, depending on your practice session timeline.

How to Improve Interview Performance (By Career Stage)

Entry-Level / Career Switchers

Candidates at this stage benefit from structure and repetition. AI mock interviews often improve technical fluency faster because they allow daily practice.

However, before real interviews, human validation is crucial to ensure readiness.

Mid-Level Candidates

Mid-level professionals need both technical refinement and communication polish. A hybrid approach involving frequent AI reps plus periodic human mocks typically accelerates improvement the fastest.

Senior / Staff-Level

At this stage, leadership presence and ambiguity management matter more. Human mock interviews become more critical.

AI remains useful for refreshing fundamentals, but deep improvement often requires human feedback.

The Hybrid Model for Interview Prep

Rather than choosing one option over the other, the fastest improvement comes from balancing the speed and efficiency of AI-powered interviewers with the nuance and authenticity of human mock interviewers.

While AI can streamline the volume and repetition of simulated interviews, the human aspect cannot be overlooked. At the same time, mock interviews with peers, mentors, or coaches are not always widely accessible, so combining both approaches makes practical sense.

For most tech candidates, the hybrid model for interview prep can look like this:

- Weeks 1–2: Heavy AI reps to sharpen fundamentals

- Weeks 3–4: Add weekly human mock sessions

- Final Week: Focus on human pressure testing

This layered approach ensures technical fluency, depth, and real-world readiness.

Example Roadmap for Data Science Candidates

- Drill SQL and statistics daily using AI simulations.

- Practice structured ML explanations.

- Schedule a case-style mock with a human interviewer.

- Refine behavioral stories using targeted feedback.

Read more: How to Prepare for Data Science Interviews

Common Interview Prep Mistakes Candidates Make

| Mistake | How to Address It |
|---|---|
| Over-relying on AI feedback | Add at least 2–4 live human mock sessions before real interviews. |
| Avoiding human mocks due to discomfort | Schedule recurring mock sessions (weekly or biweekly) during active prep. |
| Practicing passively (watching solutions vs solving) | Solve under timed conditions first, then review solutions; redo missed problems 48–72 hours later. |
| Ignoring behavioral prep | Practice structured frameworks (e.g., STAR) and rehearse out loud, focusing on clarity and impact. |
| Not reviewing feedback deeply | Keep a running “mistake log”; track recurring gaps and turn them into focused drills. |
| Only practicing strengths | Identify lowest-scoring categories and deliberately over-train them. |
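
For candidates who like to track prep in code, the “mistake log” and the 48–72 hour redo window from the table above could be sketched roughly as follows. This is a minimal illustration, not a feature of any platform: the `Mistake` record, the category labels, and the helper names are all hypothetical, and the redo window is approximated as 2 to 3 calendar days.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Mistake:
    day: date       # date the problem was missed
    category: str   # hypothetical label, e.g. "sql-joins"
    note: str       # short description of what went wrong


def recurring_gaps(log, min_count=2):
    """Return categories missed at least min_count times, most frequent first."""
    counts = Counter(m.category for m in log)
    return [cat for cat, n in counts.most_common() if n >= min_count]


def redo_window(mistake):
    """Return (earliest, latest) redo dates: roughly 48 to 72 hours later."""
    return mistake.day + timedelta(days=2), mistake.day + timedelta(days=3)


# Illustrative entries only; not real data.
log = [
    Mistake(date(2025, 3, 3), "sql-joins", "forgot LEFT JOIN keeps unmatched rows"),
    Mistake(date(2025, 3, 4), "edge-cases", "did not handle empty input"),
    Mistake(date(2025, 3, 6), "sql-joins", "joined on the wrong key"),
]

print(recurring_gaps(log))   # categories worth turning into focused drills
print(redo_window(log[0]))   # when to retry the first missed problem
```

A spreadsheet works just as well; the point is that recurring categories, not individual misses, decide what to drill next.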

Practical Framework: How to Decide What You Need

Your bottleneck determines your tool. The key is diagnosing the real constraint slowing your performance.

- Struggle with structure? → Start with AI.

- Struggle with nerves? → Add humans sooner.

- Failing onsite rounds? → Increase realism.

- Lacking technical speed? → Increase repetition with AI.

- Weak/generic behavioral answers? → Blend both.

- Inconsistent performance? → Structured hybrid plan.

The goal isn’t to default to one tool, but to identify what’s actually holding you back.
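
The diagnostic list above is essentially a lookup from bottleneck to recommended tool, and it can be written down as one. The sketch below is only an illustration: the short keys such as `"nerves"` are hypothetical labels, while the recommendations are copied from the list.

```python
# Bottleneck -> recommendation, copied from the list above.
# The dictionary keys are hypothetical short labels chosen for this sketch.
RECOMMENDATIONS = {
    "structure": "Start with AI.",
    "nerves": "Add humans sooner.",
    "failing_onsites": "Increase realism.",
    "technical_speed": "Increase repetition with AI.",
    "generic_behavioral": "Blend both.",
    "inconsistent": "Structured hybrid plan.",
}


def recommend(bottlenecks):
    """Return one recommendation per diagnosed bottleneck, in the order given."""
    unknown = [b for b in bottlenecks if b not in RECOMMENDATIONS]
    if unknown:
        raise ValueError(f"Unrecognized bottleneck(s): {unknown}")
    return [RECOMMENDATIONS[b] for b in bottlenecks]


for tip in recommend(["nerves", "technical_speed"]):
    print(tip)
```

Most candidates have more than one bottleneck, which is why the function accepts a list rather than a single label.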

Final Verdict: Which Improves Performance Faster?

In the short term, AI mock interviews often accelerate skill gains faster due to volume and instant feedback.

But real interview readiness requires human validation.

The fastest overall improvement typically comes from a hybrid model that combines structured AI practice with realistic human simulations.

Ultimately, performance improvement requires deliberate, targeted practice, where the strength of the tool is aligned with your blind spots or weaknesses.

FAQs

Are AI mock interviews accurate?

They are generally effective for structural and technical evaluation, especially for coding correctness, algorithm choice, and clarity of frameworks. However, they may miss subtle cues like executive presence, persuasion, tone shifts, or how well you read an interviewer’s reactions.

Do recruiters use AI interviewers?

Many companies use AI-assisted screening tools, structured rubrics, and standardized scoring systems to reduce bias and improve consistency. However, final hiring decisions still heavily involve human judgment, especially for culture fit and leadership potential.

How many mock interviews should I do before a tech interview?

There is no universal number, but strong candidates often benefit from 15–30 focused technical reps (coding, system design, or cases) and at least 2–4 realistic mock sessions under timed conditions. The key is deliberate practice: reviewing mistakes, identifying patterns (e.g., weak edge-case handling or unclear explanations), and iterating. Quality feedback matters more than raw volume.

Are paid interview coaches worth it?

They can be especially valuable for senior-level roles, career pivots, or high-stakes transitions, such as targeting competitive companies like Meta or Netflix. A good coach provides tailored feedback on storytelling, executive communication, and strategic positioning, areas where generic practice often falls short. The ROI tends to increase when the jump in compensation or role scope is significant.

Can AI help with data science case interviews?

Yes, particularly for practicing structured thinking, SQL queries, experiment design, and clearly explaining modeling trade-offs. AI can simulate product analytics scenarios, A/B test discussions, or machine learning system breakdowns on demand.

A Smarter Way to Prepare

To practice intentionally and maximize improvement speed, many candidates now combine AI-powered drills with realistic mock sessions. Tools like Interview Query’s AI Interviewer and mock interview features allow structured daily practice. Complement these with the question bank and company-specific guides to target your preparation for real roles.

If hiring is becoming more data-driven, your preparation should be too.