American Banker Says Bank AI Literacy Is Rising, But Interviews Still Focus on Core Skills

AI Literacy in Banking Is Rising

As the financial services industry has adopted artificial intelligence in recent years, bank AI literacy has risen, at least on paper.

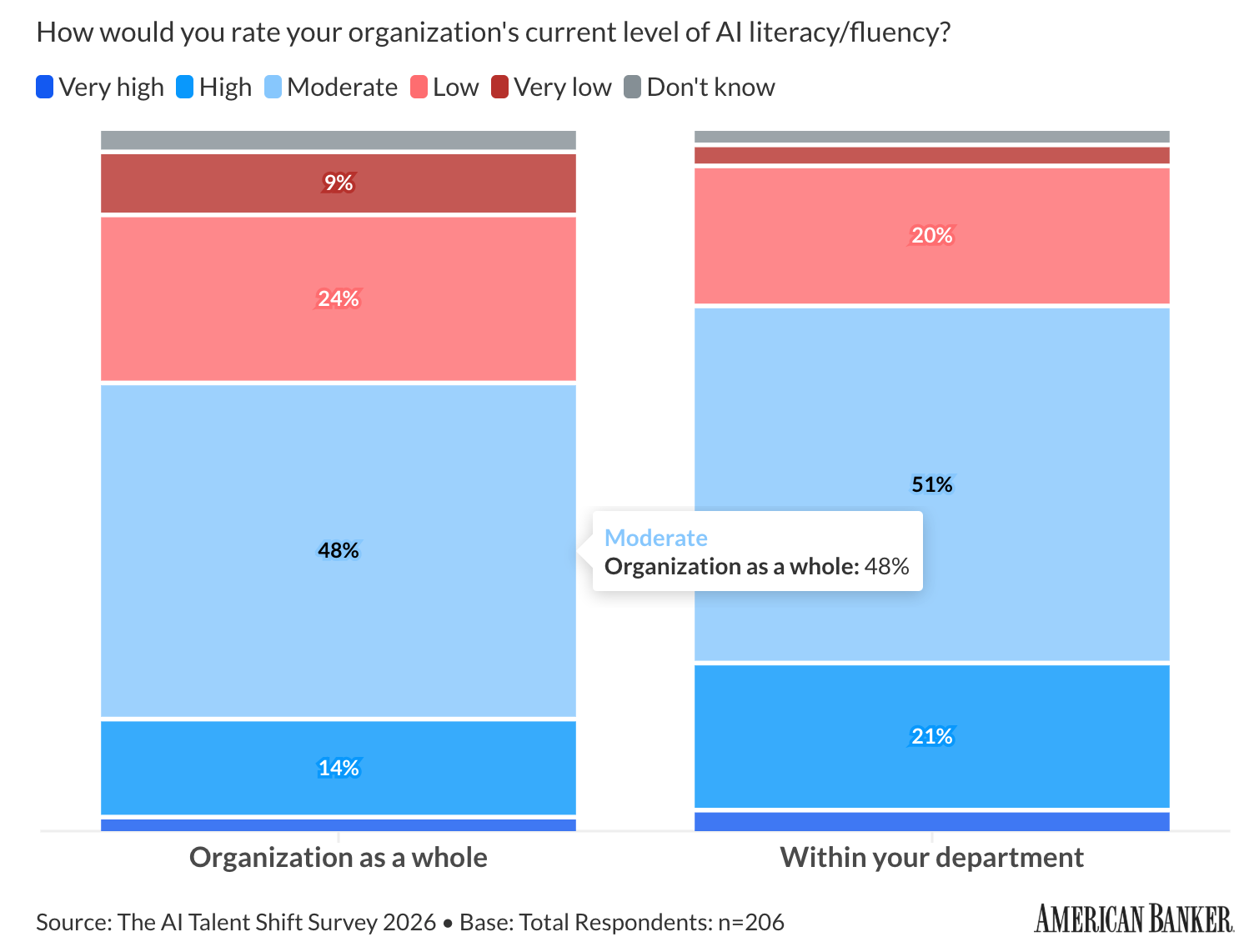

In American Banker’s 2026 AI Talent Shift Survey of 206 banking professionals, half of institutions said they are at least moderately literate in AI. General AI fluency ranked as the top skill in demand, cited by 73% of respondents, followed by critical thinking and judgment at 64%.

While that shift is real, it does not mean banks and other regulated employers now care only about prompt quality or tool familiarity. The same survey found that 27% of respondents still do not use any metric to measure AI literacy. That aligns with Grant Thornton’s 2026 AI Impact Survey, in which 78% of executives said they are not strongly confident they could pass an independent AI governance audit within 90 days.

When you put those findings together, a clearer hiring signal emerges. Employers want people who can use AI, but they also want people who can fill the governance gap by explaining what the system is doing, choosing sensible metrics, and defending decisions when the stakes involve fraud, risk, compliance, or customer money.

Why Interviews Still Screen Judgment, Not Just AI Literacy

Across 28 recent interview writeups in Interview Query’s database for finance, payments, insurance, and risk-heavy roles, 21 mentioned behavioral rounds, 21 mentioned case-based or business-heavy evaluation, 19 mentioned stakeholder communication, and 17 mentioned SQL. Only two centered on AI itself.

These findings suggest that, despite the rise of AI literacy in the sector, interviewers are not treating AI fluency as a substitute for core analytical judgment. They still ask candidates to work through messy SQL, explain tradeoffs, frame recommendations, and talk through how they would communicate risk to business partners.

One recent candidate interviewing for a fraud-focused analyst role at a major bank described an online assessment focused on SQL, Python, Excel, and data cleanup, followed by case-style interviews that emphasized business thinking and structured answers. Another candidate interviewing at a large payments company faced a loop that mixed product metrics, a case-study readout, cross-functional collaboration, and behavioral evaluation. Those are not AI-only screens; they are judgment screens.

Regulated Sectors Need Candidates Who Can Defend Decisions

The governance backdrop helps explain why these interviews still look this way. Grant Thornton found that 46% of executives cite governance and compliance failures as a leading cause of AI underperformance. It also found that organizations with fully integrated AI are far more likely to report AI-driven revenue growth than those still piloting (58% versus 15%).

For banks, insurers, and payments companies, that gap creates a practical hiring problem. These companies need analysts, data scientists, and product teams who can move quickly with AI tools without creating audit, compliance, or decision-quality risk. That pressure changes what a strong interview answer looks like.

A candidate who says they used AI to speed up analysis is no longer saying enough. Interviewers want to hear what the candidate verified, which metrics they watched, what failure modes they expected, and how they would explain a recommendation to a legal, risk, or operations stakeholder. In other words, AI literacy matters, but explainability and accountability still decide whether a team can trust the work.

Recent Interview Experiences Support This Trend

Recent customer and coaching transcripts from Interview Query show the same pattern. In one April customer-success call, a candidate described a bank interview that leaned heavily on conversational resume discussion, while another company assigned a two-hour take-home using a large dataset and requiring both SQL and Python. The candidate was not preparing for a niche research lab role but was instead targeting specialized product data science roles where practical execution mattered.

A separate April coaching session for a senior candidate focused on case skills like defining the business problem, choosing tradeoffs, setting guardrail metrics, and making concise recommendations. Another coaching session for senior analytics roles pushed on storytelling, quantified impact, and clear ownership.

None of that sits outside technical prep anymore. In many regulated-sector interviews, that is the technical prep, and candidates need to practice under realistic conditions that challenge their framing, prioritization, and communication under pressure.

How Candidates Can Strengthen Interview Prep

The strongest candidates are not treating AI as a separate prep category. They are folding it into existing interview skills. They can explain when they would use AI to speed up exploration or drafting, but they can also explain where they would slow down, validate outputs, and check for obvious failure cases.

This means preparing SQL, case, and behavioral stories together. Because a bank or payments company rarely isolates those skills in real work, recent interview loops do not isolate them either. A candidate may write a query, define the metric that matters, and then defend the tradeoff in the same round.

Finally, strong candidates practice saying the business consequence out loud. Rather than stopping at model lift or query correctness, they connect the work to outcomes that matter in financial services, such as fraud loss, approval quality, customer retention, claims handling time, or operational risk.

The Bottom Line: Traditional Prep Still Matters

American Banker’s new survey suggests banks and credit unions are getting more comfortable with AI, and Dice’s April 2026 jobs report suggests regulated sectors are still hiring into that shift. Dice found that 67% of U.S. tech job postings now mention AI skills, with finance and banking leading the growth at a 66% year-over-year rise in postings.

But candidates should not read those numbers as a sign that traditional interview prep matters less. The opposite signal is showing up inside interview loops. As employers push AI into higher-stakes workflows, interviewers still screen hard for core skills like SQL, business judgment, stakeholder communication, and explainability. If bank AI literacy keeps rising through 2026, that combined bar will likely rise with it.