2026 Analytics Engineering Report: Why Trust Matters in Data Interviews

AI Is Raising the Bar for Data Teams and Interviews

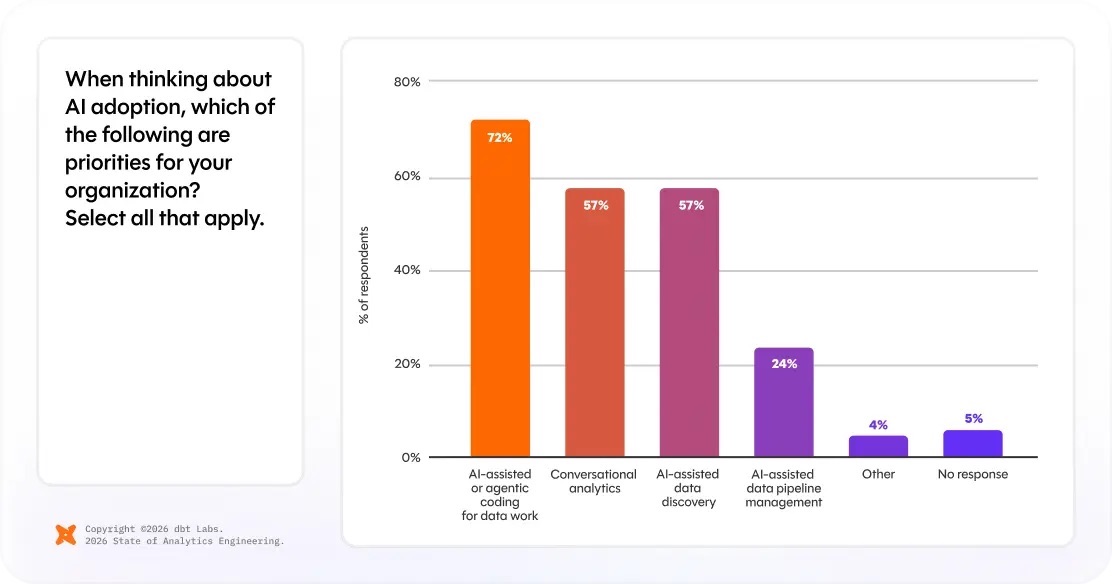

The 2026 State of Analytics Engineering Report from dbt Labs is nominally about how data teams work. It also reads like a hiring signal. The report found that 72% of respondents now prioritize AI-assisted coding, as teams move from experimentation to embedding AI into their analytics workflows.

Trust and speed are emerging as top performance priorities, with 83% saying trust in data and data teams is important, up from 66% in the previous year. The share placing importance on speed also rose, from 50% to 71%. At the same time, 71% worry about hallucinated or incorrect data reaching stakeholders.

Such findings matter because they capture the tension many candidates are walking into right now. Employers want faster output, more AI fluency, and fewer bottlenecks. They also want people who can explain why a dashboard should be trusted, how a pipeline should be validated, and what happens when a fast answer is wrong.

Priorities Move toward Trust and Validation

Source: dbt Labs’ 2026 State of Analytics Engineering Report

The most useful part of dbt Labs’ report is not the headline that AI is everywhere. Most people in data already knew that. The sharper signal is where teams still feel exposed. AI-assisted coding is now actively reshaping daily workflows, but only 24% prioritize AI-assisted pipeline management, which includes testing, observability, and quality controls.

That gap helps explain why trust jumped so sharply as a priority this year. Speed remains valuable, but cost priorities increased only marginally year over year. That suggests efficiency is no longer the primary performance expectation, because speed without validation creates new failure modes.

If a company is using AI to ship models, transformations, and stakeholder-facing analysis faster, it needs to maintain trust and reliability. By extension, the people it hires need to reason through data quality, ownership, documentation, and downstream risk.

That is also why interview questions increasingly sound less like trivia and more like judgment calls. A candidate may still get SQL, Python, or product metrics questions, but the real evaluation is often whether they can notice bad assumptions, defend tradeoffs, and communicate uncertainty clearly.

Interview Query User Experiences Point the Same Way

Recent Interview Query (IQ) coaching sessions and approved interview writeups suggest interview loops are moving in the same direction. Instead of replacing SQL, behavioral judgment, or systems thinking with AI prompts, data interviews are asking candidates to layer AI fluency on top of those fundamentals.

In 30 recent interview writeups from Interview Query’s database, 28 referenced behavioral evaluation, 13 referenced SQL, 11 touched systems, pipelines, or data modeling, and 10 involved product or business framing. Python still showed up, but it was less common than the combination of behavioral signal plus analytical rigor.

The qualitative pattern is even more useful. One candidate interviewing at a major tech company described a process that mixed SQL with leadership-principle style questioning. Another candidate in financial services went through a CodeSignal-style screen that combined SQL, Python, Excel, and general analytical reasoning. In both cases, the company was not just testing whether the candidate could get the right answer. It was testing whether they could be trusted in a live decision environment.

We have seen the same pattern in recent coaching sessions. Candidates are often less blocked by a single technical concept than by the handoff between technical work and business explanation: why a metric changed, which assumption is most fragile, how to explain a tradeoff to a non-technical stakeholder, or how to defend a recommendation when the data is incomplete.

AI Fluency Is Integrated into Fundamentals

The broader hiring data supports that read. Dice’s April 2026 Jobs Report says 67% of U.S. tech job postings now mention AI skills, up from 61% in February. CompTIA adds that more than 275,000 active U.S. job postings in January 2026 referenced AI skills, while dedicated AI roles grew 81% year over year.

That does not mean most data interviews are turning into pure LLM screens. It means employers increasingly assume candidates can work with AI in some capacity and treat tool or model fluency as part of the fundamentals. The differentiator moves elsewhere. Instead of asking, “Can this person use AI?” teams are more often asking, “Can this person use AI without lowering the quality of decisions?”

For candidates, that shift is subtle but important. AI fluency helps open the door, but getting through the loop requires demonstrating it in a way that prioritizes trust and reliability.

How Candidates Can Demonstrate AI Fluency and Trust

As interviews shift toward evaluating judgment and reliability, candidates need to show not just that they can use AI, but that they can use it responsibly. The strongest signals come from how well you validate, explain, and defend your work.

First, practice SQL and analytics questions in a way that forces explanation, not just execution. A clean query is useful, but the stronger answer is the one that explains why the logic is correct, what edge cases could break it, and how the result should be validated.
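One way to practice this is to pair every query with a few explicit checks. The sketch below is illustrative only: it uses Python's built-in sqlite3 module, and the `customers` and `orders` tables, their columns, and their rows are hypothetical data invented for the example. It runs a plausible-looking revenue query, then tests three things an interviewer might probe: whether the join key is unique, whether NULLs are hiding in the measure, and whether the joined total reconciles with the source table.

```python
import sqlite3

# Hypothetical data: table names, columns, and rows are invented for illustration.
# Customer 2 appears twice and one order has a NULL amount, both on purpose.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER, region TEXT);
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'EU'), (2, 'US'), (2, 'US');
    INSERT INTO orders VALUES (10, 1, 100.0), (11, 2, 50.0), (12, 2, NULL);
""")


def scalar(sql):
    """Run a query that returns a single value and return that value."""
    return conn.execute(sql).fetchone()[0]


# The "clean" query: revenue by region through a join.
revenue_by_region = dict(conn.execute("""
    SELECT c.region, SUM(o.amount)
    FROM orders o
    JOIN customers c ON o.customer_id = c.customer_id
    GROUP BY c.region
""").fetchall())

# Check 1: is the join key unique on the dimension side?
# Duplicate keys fan out the join and inflate sums.
duplicate_keys = scalar("""
    SELECT COUNT(*) FROM (
        SELECT customer_id FROM customers
        GROUP BY customer_id HAVING COUNT(*) > 1
    )
""")

# Check 2: are NULLs hiding in the measure? SUM silently skips them.
null_amounts = scalar("SELECT COUNT(*) FROM orders WHERE amount IS NULL")

# Check 3: does the joined total reconcile with the source table?
source_total = scalar("SELECT SUM(amount) FROM orders")
joined_total = sum(v or 0.0 for v in revenue_by_region.values())

print("revenue by region:", revenue_by_region)
print("duplicate join keys:", duplicate_keys)
print("orders with NULL amount:", null_amounts)
print("reconciles with source:", joined_total == source_total)
```

With this made-up data the query runs without error, yet the joined total (200.0) exceeds the source total (150.0) because the duplicated customer row doubles one order. Being able to name that failure mode, and the check that catches it, is the kind of explanation the stronger answer includes.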

Second, prepare two or three stories that connect technical work to business consequences. In many coaching sessions, the gap is not raw skill, but framing. Candidates can describe the model or the dashboard, but not the cost of a bad prediction, a delayed metric, or an unreliable experiment readout.

Third, practice realistic interview formats instead of isolated drills. More loops now mix behavioral questions, practical SQL, and follow-up discussion about tradeoffs in the same hour. Candidates who rehearse only one skill at a time often look less prepared than they really are. That makes mock interviews more valuable, because they can simulate messy, time-boxed conditions.

The Bottom Line

dbt Labs’ 2026 report suggests the biggest change inside data teams is not that AI arrived. It is that AI is speeding up output faster than trust systems are maturing. Hiring processes tend to follow that pressure. When companies worry more about reliability, ownership, and decision quality, interviews start rewarding candidates who can do more than produce code quickly.

For data candidates, that is the real signal to pay attention to. AI fluency is increasingly expected, but it is not enough on its own. The candidates who stand out in 2026 will be the ones who can write solid SQL, explain ambiguity, validate fast-moving analysis, and show that their judgment holds up when the stakes are real.