TikTok Data Engineer Interview Guide: Process, Questions & Prep

Introduction

The TikTok Data Engineer interview is designed to evaluate your ability to build and maintain the robust data infrastructure that powers TikTok’s real-time analytics, recommendation engines, and reporting pipelines. You’ll face challenges spanning large-scale ETL design, data modeling, and performance tuning, all skills essential for handling billions of daily events. TikTok’s “Always Day 1” ethos demands rapid iteration: engineers are empowered to propose and own improvements, from schema evolution to streaming ingestion. Cross-functional collaboration is key, as you’ll partner with data scientists, product teams, and ML engineers to ensure data quality and availability. This guide will walk you through the full process and the question types you need to master.

Role Overview & Culture

As a TikTok Data Engineer, your day-to-day involves designing scalable pipelines on platforms like Flink and Kafka, optimizing batch and streaming workflows, and implementing data quality checks in Airflow or Beam. You’ll architect data marts in cloud storage solutions (such as AWS S3 or ByteHouse) while enforcing schema governance and security policies. Close collaboration with analytics and ML teams ensures models and dashboards have reliable, timely inputs. The fast feedback cycles at TikTok mean you’ll often deploy hotfixes or schema migrations within hours, reflecting the company’s bias for action. If you thrive on ownership and solving high-impact data challenges, this TikTok Data Engineer role is for you.

Why This Role at TikTok?

Joining TikTok as a Data Engineer means influencing the backbone of a platform with over a billion active users and shaping insights that drive product strategy. You’ll gain exposure to cutting-edge data infrastructure at scale, from real-time event processing to petabyte-scale storage optimization. Competitive compensation, generous RSUs, and clear career paths reward both technical excellence and leadership contributions. You’ll work in small, autonomous squads where your ideas move quickly from prototype to production. To become part of this high-velocity data organization, you’ll first navigate the comprehensive TikTok Data Engineer interview process detailed below.

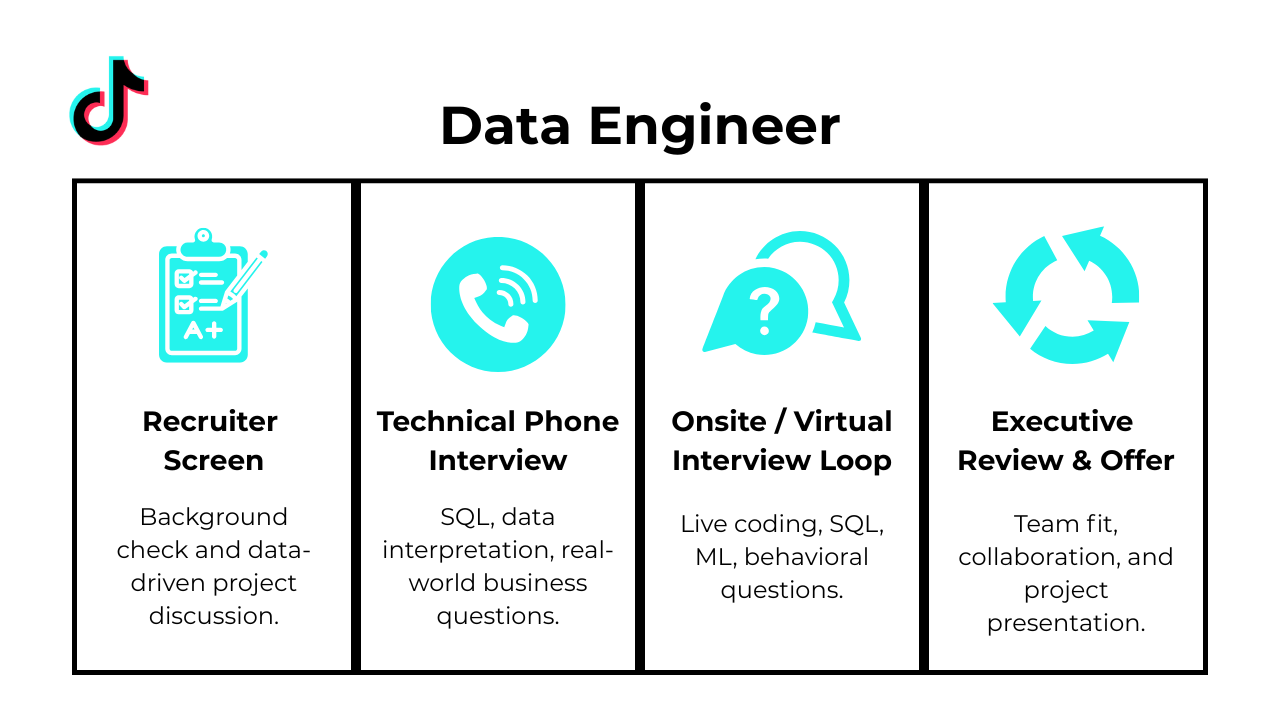

What Is the Interview Process Like for a Data Engineer Role at TikTok?

Navigating the TikTok Data Engineer interview involves demonstrating both hands‐on technical expertise and alignment with TikTok’s rapid‐iteration culture. You’ll progress through a structured sequence—each step designed to evaluate different skill sets, from coding and data modeling to system design and collaboration.

Application & Recruiter Screen

Your résumé and online profile are reviewed for relevant experience in large‐scale data systems, ETL pipelines, and cloud platforms. A recruiter call follows, focusing on your background with tools like Kafka, Airflow, or Spark, your familiarity with data modeling best practices, and your fit for TikTok’s “Always Day 1” mindset. Be ready to discuss past projects at a high level and your motivation for joining TikTok.

Technical Assessment (HackerRank / SQL Test)

Next, you’ll complete a timed online assessment that typically combines SQL queries on sample datasets with Python or Java coding problems. Expect tasks such as writing window‐function queries for sessionization, implementing core ETL transformations, and debugging small code snippets. This stage verifies your ability to write performant, production‐grade data logic under time constraints.

Onsite / Virtual Loop (Coding • System Design • Behavioral)

In a series of live virtual or in‐person interviews, you’ll rotate through:

- Coding Round: Live coding exercises in Python or Java, focusing on data structures, algorithms, and scripting for data ingestion.

- System Design Round: End‐to‐end architecture design for pipelines—covering data ingestion, processing frameworks, storage, and monitoring.

- Behavioral Round: STAR‐style questions that probe your experience with cross-team collaboration, handling production incidents, and driving data quality improvements.

Hiring Committee & Offer

After your interviews, feedback is consolidated by a cross‐functional hiring committee to ensure consistency and fairness. Upon committee approval, you’ll receive an offer detailing your role, compensation package, RSU grants, and next steps. TikTok aims to close loops within 2–3 weeks, so maintain timely communication throughout.

What Questions Are Asked in a TikTok Data Engineer Interview?

In the TikTok Data Engineer interview, you’ll encounter a mix of coding exercises, system design scenarios, and behavioral discussions, each reflecting the real-world demands of processing petabyte-scale event streams. Early in your prep, review typical TikTok Data Engineer interview questions to understand the balance between hands-on SQL/stream processing tasks and higher-level architectural challenges.

Coding / Technical Questions

Expect take-home or live coding prompts where you implement ETL pipelines using SQL and Python or Java. Common tasks include writing window-function queries to compute session metrics, transforming nested JSON events into flat tables, and building streaming processors that handle out-of-order data. These exercises assess your ability to write efficient, maintainable code and troubleshoot data issues under time pressure.
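As a rough illustration of the "nested JSON to flat table" task mentioned above, here is a minimal sketch using only the Python standard library. The function name `flatten_event`, the underscore separator, and the sample event are illustrative assumptions, not a format TikTok is known to use.

```python
import json
from typing import Any, Dict


def flatten_event(event: Dict[str, Any], parent_key: str = "", sep: str = "_") -> Dict[str, Any]:
    """Flatten nested dicts into one level, joining key paths with `sep`."""
    flat: Dict[str, Any] = {}
    for key, value in event.items():
        column = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            # Recurse so that {"user": {"id": 1}} becomes {"user_id": 1}.
            flat.update(flatten_event(value, column, sep))
        elif isinstance(value, list):
            # Keep arrays as a JSON string; exploding them into rows is a separate design choice.
            flat[column] = json.dumps(value)
        else:
            flat[column] = value
    return flat


# Hypothetical sample event, for illustration only.
raw_event = {
    "event_id": "e1",
    "user": {"id": 42, "geo": {"country": "US"}},
    "tags": ["fyp", "video"],
}
flat_row = flatten_event(raw_event)
```

In an interview it is worth saying out loud how you would treat arrays (serialize, explode into child rows, or drop) and how you would handle key collisions, since this sketch simply lets the later key win.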

How would you calculate first-touch attribution for each converting user?

You have two tables: `attribution`, which logs individual session visits with a boolean `conversion` flag and an advertising `channel`, and `user_sessions`, which maps sessions back to users. Your task is to label each user with the channel of their very first session in which they eventually converted. A common approach uses a window function partitioned by `user_id` and ordered by `session_ts`, filtering for `conversion = TRUE`, and then selecting the first value of `channel`. Handling ties or multiple sessions on the same timestamp may require a secondary ordering column. This metric is critical for understanding which marketing channels originally drove conversions in a user’s journey.

How would you select a single random number from an unbounded stream using O(1) space?

You need a one-pass algorithm that, at each new value in the stream, maintains the probability of choosing any seen element as 1/N (where N is the count so far). The classic solution is reservoir sampling of size one: initialize your selection as the first element, then for the k-th element generate a random integer in [1, k] and replace the stored value if the random integer equals k. This guarantees uniform probability without storing the full stream. It’s a foundational technique for streaming data processing where memory is constrained and input size is unknown.
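The size-one reservoir described above can be sketched in a few lines of Python. The function name `sample_one` and the optional `rng` parameter (added so results can be made reproducible) are assumptions for illustration.

```python
import random
from typing import Iterable, Optional, TypeVar

T = TypeVar("T")


def sample_one(stream: Iterable[T], rng: Optional[random.Random] = None) -> Optional[T]:
    """Return one element chosen uniformly from `stream`, or None if it is empty."""
    rng = rng or random.Random()
    chosen: Optional[T] = None
    for k, item in enumerate(stream, start=1):
        # randint is inclusive on both ends, so this is true with probability 1/k.
        # For k == 1 it is always true, which initializes the reservoir.
        if rng.randint(1, k) == k:
            chosen = item
    return chosen
```

Only the current pick and the counter are kept, so memory stays constant regardless of stream length. A useful follow-up to mention is the generalization to a reservoir of size m, where the k-th element replaces a random slot with probability m/k.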

How can you detect whether any completed subscription periods overlap for each user?

Given a `subscriptions` table with `user_id`, `start_date`, and `end_date` (NULL if ongoing), your goal is to flag users whose historical subscriptions overlap. A self-join on `user_id` comparing two different rows lets you check if `start_a < end_b` and `start_b < end_a`, filtering to only completed subscriptions. Wrapping that in an EXISTS or boolean aggregation per user returns true/false. This query ensures users aren’t double-billed or that data integrity issues are surfaced, both vital for accurate subscription analytics.

How would you find the second-highest salary in the engineering department?

Working with an `employees` table tagged by `department`, you need the salary immediately below the top. Solutions often use a window function like `DENSE_RANK() OVER (PARTITION BY department ORDER BY salary DESC)` filtered for rank = 2, or a correlated subquery selecting `MAX(salary) WHERE salary < (SELECT MAX(salary)…)`. It’s important to handle ties at the top correctly so that duplicates don’t skew the result. This pattern demonstrates core skills in ranking and partitioned analytics essential for compensation benchmarking.

What was the last transaction recorded on each calendar day?

With a `transactions` table containing `id`, `transaction_value`, and `created_at` timestamps, you must pick the single entry with the greatest timestamp per date. A typical approach uses `ROW_NUMBER() OVER (PARTITION BY CAST(created_at AS DATE) ORDER BY created_at DESC)` and filters for row_number = 1. Casting or truncating the datetime to date ensures proper grouping, and ordering by timestamp captures the true “last” event. This query pattern is a staple in time-series reporting for financial or operational dashboards.

-

You’re given two lists of four (x, y) pairs each, in arbitrary order, representing axis-aligned rectangles. Overlap occurs unless one rectangle is entirely to the left, right, above, or below the other. First normalize each rectangle to find its min_x, max_x, min_y, and max_y. Then return `NOT (r1.max_x < r2.min_x OR r2.max_x < r1.min_x OR r1.max_y < r2.min_y OR r2.max_y < r1.min_y)`. Edge and corner touches count as overlap. This function tests geometric reasoning and careful handling of inequality boundaries.

Who are the top three highest-earning employees in each department?

Using `employees` joined with `departments`, assign a rank per department via `DENSE_RANK() OVER (PARTITION BY dept_name ORDER BY salary DESC)`. Then filter for ranks ≤ 3 to include top earners (fewer if a department has fewer than three staff). Return the employee’s full name, department name, and salary, sorted by department ascending and salary descending. This query showcases window functions for segmented ranking, essential for hierarchical reporting in large organizations.
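To make the top-three ranking concrete, here is a small sketch that runs the query against an in-memory SQLite database from Python. The column names (`first_name`, `last_name`, `department_id`) and the sample rows are assumptions, and window functions require SQLite 3.25 or newer, which recent Python builds normally bundle.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE departments (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE employees (
    id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT,
    salary INTEGER, department_id INTEGER REFERENCES departments(id)
);
-- Hypothetical sample data, including a salary tie in the Data department.
INSERT INTO departments VALUES (1, 'Data'), (2, 'Ops');
INSERT INTO employees VALUES
    (1, 'Ana', 'Li', 150, 1), (2, 'Bo', 'Chen', 140, 1),
    (3, 'Cy', 'Diaz', 140, 1), (4, 'Di', 'Evans', 130, 1),
    (5, 'Ed', 'Fox', 120, 1), (6, 'Flo', 'Gray', 90, 2);
""")

TOP_THREE_SQL = """
WITH ranked AS (
    SELECT e.first_name || ' ' || e.last_name AS employee_name,
           d.name AS department_name,
           e.salary,
           DENSE_RANK() OVER (PARTITION BY d.name ORDER BY e.salary DESC) AS salary_rank
    FROM employees e
    JOIN departments d ON d.id = e.department_id
)
SELECT employee_name, department_name, salary
FROM ranked
WHERE salary_rank <= 3
ORDER BY department_name ASC, salary DESC, employee_name ASC
"""

top_earners = conn.execute(TOP_THREE_SQL).fetchall()
```

Because `DENSE_RANK` gives tied salaries the same rank, the Data department returns four rows here (150, 140, 140, 130). If the interviewer wants exactly three rows per department, `ROW_NUMBER()` with a deterministic tie-breaker is the usual alternative, so it is worth asking which behavior they expect.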

System / Architecture Design Questions

You’ll be asked to design end-to-end data infrastructures—such as a real-time metrics pipeline for TikTok’s For-You feed—that ingests, processes, and serves event data with low latency. Key discussion points include ensuring data quality (deduplication, watermarking), choosing between batch vs. stream frameworks (e.g., Spark vs. Flink), and scaling storage layers. Your answers should demonstrate a clear understanding of fault tolerance, idempotency, and monitoring in distributed systems.
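The deduplication and watermarking ideas above can be illustrated with a toy, single-process sketch in plain Python. It is not Flink or Spark code: the window size, the allowed lateness, and the function name `windowed_counts` are all assumptions chosen to keep the example small.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

WINDOW_SECONDS = 60      # tumbling window size (assumed)
ALLOWED_LATENESS = 30    # how far the watermark trails the max event time (assumed)


def windowed_counts(events: Iterable[Tuple[str, int]]) -> Tuple[List[Tuple[int, int]], List[str]]:
    """events: (event_id, event_time_seconds) in arrival order.

    Returns (emitted windows as (window_start, count), ids dropped as too late).
    """
    seen_ids: Set[str] = set()  # a real system would bound this with a TTL
    open_windows: Dict[int, int] = defaultdict(int)
    closed: List[Tuple[int, int]] = []
    too_late: List[str] = []
    watermark = float("-inf")

    for event_id, event_time in events:
        if event_id in seen_ids:  # drop at-least-once redeliveries
            continue
        seen_ids.add(event_id)

        window_start = event_time - event_time % WINDOW_SECONDS
        if window_start + WINDOW_SECONDS <= watermark:
            too_late.append(event_id)  # window already emitted; route to a side output
            continue
        open_windows[window_start] += 1

        # Advance the watermark, then emit every window that ends at or before it.
        watermark = max(watermark, event_time - ALLOWED_LATENESS)
        ready = sorted(w for w in open_windows if w + WINDOW_SECONDS <= watermark)
        for start in ready:
            closed.append((start, open_windows.pop(start)))

    closed.extend(sorted(open_windows.items()))  # end of input: flush remaining windows
    return closed, too_late
```

For example, feeding `[("a", 10), ("b", 70), ("a", 10), ("c", 50), ("d", 125), ("e", 20)]` counts the out-of-order event `c` in the first window, ignores the duplicate `a`, and reports `e` as too late because its window had already been emitted. The trade-off to discuss is that a larger lateness allowance improves completeness at the cost of higher output latency.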

-

Explicit foreign key constraints enforce referential integrity at the database level, ensuring that child records cannot reference nonexistent parents and preventing silent data corruption. Using `ON DELETE CASCADE` is appropriate when child records have no meaning without their parent (e.g., order items), whereas `ON DELETE SET NULL` suits optional relationships where you want to retain the child but clear its link (e.g., orphaned comments). Interviewers look for discussion of the trade-offs between strict integrity and performance, as well as how constraint enforcement interacts with bulk loads and sharding. Mentioning index backing for foreign keys and implications for DML speed demonstrates practical schema-design savvy.

-

Start by identifying the core entities—Users, Friendships (self-referencing junction table), and Interactions—and mapping nested documents into normalized tables with primary keys and foreign keys. Define schemas to eliminate duplication (e.g., separate tables for profiles, posts, and likes) while preserving query performance via appropriate indexes and possibly using JSON columns for truly unstructured fields. Plan a backfill ETL that extracts data from the document store, transforms it into relational rows, and validates referential links. Discuss strategies for zero-downtime migration, such as dual-writes and shadow tables, to keep the application live during cutover.
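The backfill step described above might look roughly like the following sketch, which uses SQLite purely as a stand-in relational target. The document shape (`_id`, `name`, `friends`), the table names, and the choice to store each friendship once as an ordered pair are illustrative assumptions.

```python
import sqlite3

# Hypothetical documents exported from the document store.
documents = [
    {"_id": "u1", "name": "Ana", "friends": ["u2", "u3"]},
    {"_id": "u2", "name": "Bo", "friends": ["u1"]},
    {"_id": "u3", "name": "Cy", "friends": ["u1", "u9"]},  # u9 has no user document
]

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE users (user_id TEXT PRIMARY KEY, name TEXT NOT NULL);
-- Staging junction table: no foreign keys yet, so bad links can be loaded and then reported.
CREATE TABLE friendships (
    user_id TEXT NOT NULL,
    friend_id TEXT NOT NULL,
    PRIMARY KEY (user_id, friend_id),
    CHECK (user_id < friend_id)
);
""")

conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [(doc["_id"], doc["name"]) for doc in documents],
)

# Sort each pair so (u1, u2) and (u2, u1) collapse into a single row.
pairs = {tuple(sorted((doc["_id"], friend))) for doc in documents for friend in doc.get("friends", [])}
conn.executemany("INSERT INTO friendships VALUES (?, ?)", sorted(pairs))

# Validate referential links before promoting the data to constrained tables.
orphans = conn.execute("""
    SELECT f.user_id, f.friend_id
    FROM friendships f
    LEFT JOIN users a ON a.user_id = f.user_id
    LEFT JOIN users b ON b.user_id = f.friend_id
    WHERE a.user_id IS NULL OR b.user_id IS NULL
""").fetchall()
```

Loading into an unconstrained staging table and validating afterwards lets the backfill report dangling references instead of failing on the first one. The constraints can then be enforced on the final tables once the orphan list is empty or resolved.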

-

A typical schema includes `Users`, `Drivers`, `Vehicles`, and `Rides` tables, where `Rides` references `Users` (rider_id) and `Drivers` (driver_id) as foreign keys, plus timestamps for pickup and drop-off. You might add `Locations` for geospatial queries, with latitude/longitude fields indexed by a spatial index to support nearest-driver lookups. Consider a `RideStatus` enum or separate status history table to track state transitions (requested, accepted, in-progress, completed). Partitioning `Rides` by date or region and denormalizing common aggregates can help scale high-volume traffic.

How would you design a schema to capture client click events for a web application?

You’d typically create a wide fact table, `click_events`, with columns like `event_id`, `user_id`, `session_id`, `event_timestamp`, `page_url`, and `element_id`, plus optional JSON or key-value pairs for metadata. Dimension tables for `Users`, `Pages`, and `Campaigns` allow for joins and faster lookups. To handle high write throughput, consider a write-optimized store (e.g., partitioned by hour) and later ETL into an analytical warehouse. Emphasize data retention policies, TTL on raw events, and the need for consistent timestamps (e.g., UTC) to align sessionization logic.

How would you build an ETL pipeline to load Stripe payment data into your data warehouse?

Poll or subscribe to Stripe’s webhook events (charges, refunds, disputes) into a staging area, validating payloads against a schema and buffering retries for transient failures. Transform JSON fields into columns, enrich records with lookup tables (e.g., customer tiers), and handle idempotency by deduping on Stripe’s unique event IDs. Load cleansed data into fact and dimension tables daily or via micro-batches, using change-data-capture patterns for incremental updates. Implement monitoring and alerting on pipeline SLA breaches, schema changes, and data-quality checks (e.g., null rates, reconciliation with Stripe’s dashboard).
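The idempotent-load step above can be sketched without calling Stripe at all. The payloads below are simplified, hand-written stand-ins for webhook events; the staging table layout and the helpers `make_event` and `load_events` are hypothetical, and SQLite stands in for the warehouse staging area.

```python
import sqlite3
from typing import Any, Dict, Iterable

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE stripe_events_staging (
    event_id TEXT PRIMARY KEY,      -- unique event ID, used as the dedup key
    event_type TEXT NOT NULL,
    object_id TEXT NOT NULL,
    amount_cents INTEGER,
    currency TEXT,
    created_at INTEGER NOT NULL
)
""")


def make_event(event_id: str, charge_id: str, amount: int) -> Dict[str, Any]:
    """Build a simplified, hypothetical event payload for illustration."""
    return {
        "id": event_id,
        "type": "charge.succeeded",
        "created": 1700000000,
        "data": {"object": {"id": charge_id, "amount": amount, "currency": "usd"}},
    }


def load_events(events: Iterable[Dict[str, Any]]) -> int:
    """Insert events, skipping IDs already loaded. Returns the number of new rows."""
    before = conn.total_changes
    for event in events:
        obj = event["data"]["object"]
        conn.execute(
            "INSERT OR IGNORE INTO stripe_events_staging VALUES (?, ?, ?, ?, ?, ?)",
            (event["id"], event["type"], obj["id"], obj.get("amount"), obj.get("currency"), event["created"]),
        )
    conn.commit()
    return conn.total_changes - before


first_batch = load_events([make_event("evt_1", "ch_1", 500), make_event("evt_2", "ch_2", 700)])
replayed = load_events([make_event("evt_2", "ch_2", 700), make_event("evt_3", "ch_3", 900)])
```

Because the event ID is the primary key, replaying a batch after a retry only inserts the rows that were not seen before, which is what makes the load safe to re-run. In a warehouse without `INSERT OR IGNORE`, a `MERGE` keyed on the same ID plays the equivalent role.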

-

Establish a unified schema registry and enforce schema validation at each pipeline stage to catch field mismatches or type changes early. Use checksum-based lineage tracking to reconcile record counts and hash signatures across source and target stores, and compare translated text lengths or language-detection confidence scores to flag anomalies. Implement automated unit tests and data-quality rules (e.g., non-empty translated fields, valid geographies) with dashboards that surface drift or error rates. Regularly audit samples from each country’s pipeline to ensure that PII handling and regulatory masking remain compliant.
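One way to picture the checksum-based reconciliation mentioned above is a per-record hash comparison between source and target. The row layout `(record_id, country, translated_text)` and the function names are assumptions for this sketch; a production version would typically compute the digests inside each store and compare only the hashes.

```python
import hashlib
from typing import Dict, Iterable, List, Tuple

Row = Tuple[str, str, str]  # (record_id, country, translated_text), a hypothetical layout


def row_digest(row: Row) -> str:
    # Join on the ASCII unit separator so field boundaries stay unambiguous.
    return hashlib.sha256("\x1f".join(row).encode("utf-8")).hexdigest()


def reconcile(source: Iterable[Row], target: Iterable[Row]) -> Dict[str, List[str]]:
    """Compare two stores by record ID and content hash."""
    src = {row[0]: row_digest(row) for row in source}
    tgt = {row[0]: row_digest(row) for row in target}
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "unexpected_in_target": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


# Hypothetical sample rows.
source_rows = [("1", "FR", "bonjour"), ("2", "DE", "hallo"), ("3", "JP", "konnichiwa")]
target_rows = [("1", "FR", "bonjour"), ("2", "DE", "hello"), ("4", "BR", "ola")]
report = reconcile(source_rows, target_rows)
```

The three buckets map directly onto alerts: missing rows suggest dropped records, unexpected rows suggest duplication or stale data, and mismatches point at transformation bugs.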

-

Core entities include `Students`, `Teachers`, `Classes`, `Messages`, and `Assignments`, with relationships capturing enrollment and message threads. For ETL, stream raw interaction logs (login times, message events, file submissions) into a staging zone, aggregate counts per student-class-day, and load into an analytics schema optimized for time-series queries. Use a date dimension table to support rolling metrics and generate materialized views for top active students or late submitters. Finally, write a SQL query joining `Assignments` and `SubmissionEvents` to show per-student submission trends over six months, grouped by class.

-

First, collect labeled data on past orders noting incorrect, missing, and successful deliveries, including features like restaurant prep time, courier pickup delays, and address accuracy scores. Train a classification model (e.g., random forest or gradient boosting) to predict order risk, then integrate it into the order-processing pipeline to flag high-risk orders for manual review or enhanced confirmation. Implement feedback loops where delivery outcomes update your training set and retrain models periodically. Monitor model performance via precision/recall dashboards and A/B test mitigation strategies (e.g., automated address verification prompts) to quantify impact.

Behavioral / Culture-Fit Questions

TikTok values engineers who thrive in fast-moving environments and collaborate across disciplines. In behavioral rounds, you’ll share examples of working closely with product managers, ML engineers, and analysts to deliver data solutions. Be prepared to discuss how you handled ambiguous requirements, met tight deadlines, and advocated for data integrity practices—illustrating your alignment with TikTok’s “Always Day 1” ethos.

-

As a data engineer you often balance ETL rollouts, dashboard requests, and system maintenance under tight timelines. Explain how you assess each task’s impact—on data freshness, stakeholder needs, or downstream dependencies—to rank work using a framework like RICE or Eisenhower’s matrix. Describe your use of tools (e.g., JIRA boards, time-block calendars) to visualize deadlines and set clear milestones. Emphasize proactive communication with product and analytics teams to realign priorities when unexpected blockers arise. Showing disciplined organization and flexibility demonstrates you can deliver critical pipelines on time.

-

Choose strengths directly relevant to data engineering—such as building resilient data pipelines or optimizing query performance—and support them with concrete examples (e.g., reducing ETL runtime by 50%). For weaknesses, pick areas you’ve improved (like public speaking when presenting metrics) and describe steps you took (e.g., attending workshops, seeking feedback) to address them. Frame your manager’s feedback as constructive—perhaps noting your thoroughness as a strength but your tendency to over-document as a growth area. This approach shows self-awareness, coachability, and continuous improvement.

-

Pick a project that involved large-scale data ingestion, complex transformations, or tech-stack upgrades. Discuss specific challenges—such as handling schema drift in source systems, optimizing slow joins on terabyte tables, or ensuring zero-downtime deployments. Explain the technical solutions you applied, like introducing schema registry checks, partitioning strategies, or blue-green ETL deployments. Highlight collaboration with data scientists and ops to test end-to-end workflows. This shows you can navigate technical complexity and deliver reliable data infrastructure.

Tell me about a time you cleaned and organized a large, messy dataset—what steps did you take?

Start with data profiling to identify missing values, inconsistent formats, or outliers using tools like Pandas or Spark. Describe applying standardized parsing rules (e.g., date normalization, trimming whitespace), imputing or flagging nulls, and validating referential integrity against master tables. Mention how you automated these checks via unit tests and CI/CD to prevent regressions. Finish by explaining how you documented the cleaning logic for reproducibility and onboarded stakeholders to trust the sanitized data. This demonstrates your rigor in ensuring data reliability.
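If the interviewer asks what those parsing rules look like in practice, a small standard-library sketch can anchor the story. It deliberately avoids Pandas and Spark to stay self-contained; the field names (`name`, `signup_date`) and the list of accepted date formats are assumptions.

```python
from datetime import datetime
from typing import Dict, Optional

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y%m%d")  # formats assumed to occur in the raw data


def normalize_date(raw: Optional[str]) -> Optional[str]:
    """Return an ISO date string, or None when the value is missing or unparseable."""
    if raw is None or not raw.strip():
        return None
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean_row(row: Dict[str, Optional[str]]) -> Dict[str, object]:
    """Trim whitespace, normalize the date, and flag rows with missing fields."""
    name = (row.get("name") or "").strip()
    signup = normalize_date(row.get("signup_date"))
    return {
        "name": name or None,
        "signup_date": signup,
        # Flag rather than silently drop, so downstream users can see what was missing.
        "has_missing_fields": name == "" or signup is None,
    }
```

Functions this small are easy to cover with unit tests in CI, which ties back to the point about preventing regressions.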

When an interviewer asks why you want to work with us, how do you frame your response?

Tailor your answer to the company’s data-driven culture and scale of impact—highlight how you’re excited by building low-latency pipelines for real-time analytics or enabling personalized feeds via robust data architectures. Connect your technical aspirations (e.g., mastering streaming ETL on Kafka or optimizing Snowflake workloads) with the company’s product vision. Show that you’ve researched recent engineering blog posts or open-source contributions and explain how your skills will help address their unique challenges. This alignment of your goals with their mission signals genuine interest and fit.

How would you explain your data engineering methods and insights to a non-technical audience?

Emphasize storytelling with visuals: use clear analogies (e.g., “a pipeline is like a factory assembly line”) and simple dashboards to illustrate data flow and bottlenecks. Describe how you translate technical metrics (latency, throughput, error rates) into business terms (e.g., “this cut our report refresh time from 2 hours to 10 minutes, so marketers can react faster”). Mention using slide decks or live demos to walk stakeholders through key steps and outcomes. Stress the importance of pacing—starting with high-level summaries before diving into specifics—and soliciting questions to ensure mutual understanding. This shows you can bridge the gap between engineering and business teams.

How to Prepare for a Data Engineer Role at TikTok

Landing a TikTok data engineer interview demands both deep technical chops and a strong sense of product impact. Below are five focused strategies to ensure you enter each stage—from online assessments to system design rounds—with confidence and clarity.

Master TikTok’s Preferred Tech Stack

Gain hands-on experience with the core platforms TikTok uses—Apache Kafka for streaming ingestion, Apache Flink or Spark for real-time processing, and Hive or ByteHouse for large-scale analytics. Building small prototype pipelines end-to-end will help you speak fluently about these tools during interviews.

Simulate the Technical Assessment

Use online coding platforms to recreate the types of problems you’ll face in the TikTok Data Engineer interview, such as writing performant SQL joins, window-function analyses, and Python or Java streaming solutions. Time yourself to build speed and accuracy under realistic constraints.

Practice System Design Frameworks

Develop a repeatable approach for architectural discussions: outline data ingestion, choose storage layers, define processing engines, and plan data serving patterns. Walk through trade-offs around latency, fault tolerance, and cost—this ensures you can confidently design pipelines that scale to TikTok’s billions of daily events.

Refine STAR Stories

Prepare Situation–Task–Action–Result examples that showcase your end-to-end ownership, problem-solving under ambiguity, and bias for action—TikTok’s “Always Day 1” ethos. Highlight moments where you improved data quality, optimized performance, or led cross-team collaborations.

Mock Interviews & Debriefs

Leverage Interview Query’s mock interview service to simulate TikTok’s data engineer loop, covering coding challenges, system design discussions, and behavioral scenarios. The detailed feedback from experienced peers will sharpen your technical explanations, solution structure, and cultural alignment—ensuring the real interview feels like a well-rehearsed conversation.

FAQs

Are There Job Postings for TikTok Data Engineer Roles on Interview Query?

Stay up to date with the latest Data Engineer openings and insider insights by browsing our dedicated job board.

Conclusion

A solid understanding of the TikTok data engineer interview process and diligent practice on key TikTok data engineer interview questions positions you above the competition. Sharpen your skills with our Data Engineering Learning Path and simulate real-loop scenarios using our mock interview service.

Looking to explore adjacent roles? Check out our guides for TikTok Software Engineer or TikTok Data Analyst to round out your preparation—and draw inspiration from success stories like Jeffrey Li’s.
