Top 40 Product Analyst Interview Questions (SQL, A/B Testing, Python, Analytics)

Introduction

A Product Analyst sits at the crossroads of data and business. You’re expected to crunch numbers like a data analyst, think strategically like a product manager, and communicate insights across teams. It’s a hybrid role, and that’s exactly why product analyst interviews trip up so many candidates.

The two biggest hurdles?

- Bridging the gap between raw data and actionable product decisions.

- Handling open-ended case questions where there’s no “right” answer—just how well you structure your thinking.

These interviews aren’t about memorizing SQL joins or definitions. They’re about showing how you use data to solve messy, real-world product problems. From SQL queries to product sense case studies, expect to be tested on both your analytical rigor and decision-making clarity.

In this guide, we’ve rounded up real product analyst interview questions that companies are asking in 2025, so you can prep with purpose.

Product Analyst Interviews: What Should You Expect?

Product analyst interviews differ across companies, but certain question types appear consistently. After reviewing more than 15,000 real interview experiences, we’ve identified the core areas you’ll be tested on:

- Product Case Studies - You’ll be asked to evaluate new features, propose improvements to existing products, and reason through product trade-offs. These questions assess your product sense and decision-making process.

- SQL Questions - Expect hands-on SQL tasks where you’ll write queries to extract, join, and manipulate large datasets. Efficiency and accuracy matter.

- Analytics & Insights - These questions test how you interpret data to generate actionable insights. You might be given a dataset or scenario and asked to walk through your analysis approach.

- A/B Testing & Experimentation - You’ll be quizzed on experiment design, interpreting test results, and explaining trade-offs between different testing methods.

- Python for Data Manipulation - Some companies will expect you to write Python scripts for data wrangling, basic automation, or quick exploratory analysis.

- Statistics & Probability - These questions check your grasp of statistical concepts like distributions, hypothesis testing, and probabilities—essential for making sound data-driven recommendations.

- Behavioral & Resume-Based Questions - Prepare for conversations about your past projects, teamwork experiences, problem-solving approaches, and how you communicate insights effectively.

What Is the Interview Process for Product Analysts?

Product analyst interviews aren’t just a SQL test. They’re a layered evaluation of how you think, solve problems, and communicate your ideas. Whether it’s Google, Amazon, or a fast-growing startup, companies are looking for analysts who can blend technical know-how with sharp product sense.

But here’s the catch: the interview process isn’t one-size-fits-all. Some companies will drill you on metrics case studies; others will throw in SQL-heavy technical screens followed by deep-dive product strategy rounds.

To simplify it, most product analyst interviews follow a structure that looks like this:

Step 1: Recruiter Screen

This is the initial conversation where the recruiter gauges your fit for the role. They’ll ask about your background, interest in the position, and assess your communication skills. It’s also a chance for you to clarify the role expectations and the interview process.

Step 2: Technical Screen

The technical round focuses on your SQL proficiency and product intuition. You’ll be asked to write SQL queries, manipulate datasets, and solve analytical problems. Expect product case study questions where you’ll analyze metrics, diagnose issues, or suggest improvements based on data insights.

Step 3: On-Site or Virtual On-Site Interviews

This round dives deeper into both technical and product-focused interviews. You’ll face more complex SQL challenges, case studies, and scenario-based product questions. Often, there’s also a 1:1 interview with a Product Manager (PM) to assess how well your product sense aligns with the team’s approach.

Some companies may add an additional behavioral or cross-functional round, but the core focus remains: Can you analyze data, reason through product decisions, and communicate your thought process clearly?

Product Case Study Questions (Product Sense & Strategy)

Product case study questions assess your ability to use data to influence product decisions. Typically, these questions ask about feature changes, metrics anomalies, measuring product success and/or product improvements.

1. How would you determine why the number of comments per user at a social media company has been decreasing over the last three months?

More context: even though comments per user are decreasing, the company has grown its user base consistently month over month during those three months.

With a question like this, start by modeling the scenario. Your model might look like this:

- Jan: 10000 users, 30000 comments, 3 comments/user

- Feb: 20000 users, 50000 comments, 2.5 comments/user

- Mar: 30000 users, 60000 comments, 2 comments/user

Using this model, you might also model churn as Month 1 - 25%, Month 2 - 20% and Month 3 - 15%. Knowing that some users are churning off the platform each month, what can you infer about the decrease in comments per user?
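The arithmetic behind the model above can be checked in a few lines. This minimal Python sketch uses only the January to March figures from the example and shows that total comments are still growing, just more slowly than the user base:

```python
# Monthly figures from the example model above
months = ["Jan", "Feb", "Mar"]
users = [10_000, 20_000, 30_000]
comments = [30_000, 50_000, 60_000]

def pct_growth(prev, curr):
    """Month-over-month growth as a percentage."""
    return (curr - prev) / prev * 100

comments_per_user = [c / u for c, u in zip(comments, users)]

for i, month in enumerate(months):
    line = f"{month}: {comments_per_user[i]:.2f} comments/user"
    if i > 0:
        line += (
            f", users +{pct_growth(users[i - 1], users[i]):.1f}%"
            f", comments +{pct_growth(comments[i - 1], comments[i]):.1f}%"
        )
    print(line)
```

Because comments grow more slowly than users (+66.7% vs. +100% in February, +20% vs. +50% in March), the ratio falls; the next step is to ask which users (new, tenured, or churning) account for the gap.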

2. What’s the first change you would make or new feature that you would add to product X?

Interviewers ask this question to see that you have done your research and have knowledge of the company’s products. In particular, they want to know:

- If you understand what the product is and its features.

- If you understand who the target audience is.

- If you recognize the problem the product solves for the audience.

Before the interview, prepare answers to a question like this. In particular, propose changes or new features that would enhance the product, address a user problem, and align with the company’s overarching objectives.

3. How can you use the Facebook app to promote Instagram?

This product question is more focused on growth and has been asked in Facebook’s growth marketing analyst technical screen. With growth questions, we need to propose growth ideas and identify data points that would support or refute each hypothesis.

One hypothesis we could propose is that implementing notifications to Facebook users of friends that have joined Instagram would help to promote Instagram. So if a user’s friend on Facebook decides to join Instagram, we could send a notification to the user that their friend joined Instagram. We can test this hypothesis by implementing an A/B test. We can randomly bucket users into a control and test group where the test group gets notifications on Facebook each time their friend joins Instagram, while the control group does not. At the end of the test, we can observe the sign-up rate on Instagram between the two groups.
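One common way to implement the random bucketing described above is deterministic hashing, so that a user always lands in the same group. A minimal Python sketch, assuming a hypothetical experiment name, a 50/50 split, and made-up user IDs:

```python
import hashlib

def assign_bucket(user_id: str, experiment: str = "fb_ig_friend_joined") -> str:
    """Deterministically assign a user to 'control' or 'test' with a 50/50 split.

    Hashing the (hypothetical) experiment name together with the user ID keeps a
    user's assignment stable across sessions and roughly independent across experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    # Map the hash to a bucket number in [0, 100) and split at 50
    return "test" if int(digest, 16) % 100 < 50 else "control"

users = [f"user_{i}" for i in range(10_000)]
n_test = sum(assign_bucket(u) == "test" for u in users)
print(f"test: {n_test}, control: {len(users) - n_test}")
```

Once the test ends, the Instagram sign-up rates of the two groups can be compared with a two-proportion significance test.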

4. You have ten experiment ideas for improving conversion rates on an ecommerce website. How do you choose which ideas to test?

One of the most effective ways is to conduct quantitative analysis. You can measure the opportunity size of each idea using historical data.

For example, if one of the ideas was to introduce cart upsells, you could analyze the share of historical orders that contained multiple items. If only a small percentage of customers purchase multiple items, there is plenty of headroom, so upsells could be a sizable opportunity. You might then prioritize an A/B test related to cart upsells.
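A minimal sketch of that sizing step, assuming a hypothetical order history in which each order is recorded simply as its number of items:

```python
def multi_item_share(items_per_order):
    """Fraction of historical orders that contain more than one item."""
    if not items_per_order:
        return 0.0
    return sum(1 for n in items_per_order if n > 1) / len(items_per_order)

# Hypothetical order history: number of items in each order
orders = [1, 1, 2, 1, 1, 3, 1, 1, 1, 1]
share = multi_item_share(orders)
print(f"Multi-item orders: {share:.0%}; single-item orders: {1 - share:.0%}")
```

The single-item share gives a rough sense of how many orders an upsell could influence; running a comparable calculation for each of the ten ideas puts them on a common footing for ranking.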

5. Which variables might Uber use to estimate pick-up ETA, besides the ETA from the GPS system?

With metrics questions, start by listing broad variables that could affect ETA. In this case, that would include things like:

- Driver speed - Some drivers may be faster than others.

- Finding the passenger - Locations that experience large crowds would make it harder to locate a passenger.

- Weather conditions - Poor weather conditions could slow pick-up times.

- Wrong turn rates - An area where drivers are prone to wrong turns could slow pick-up times.

- Construction seasonality - Construction and street/housing improvement projects tend to occur at higher rates during certain times of year, and could impact road access in high-density areas.

Once you’ve created a list of broad variables, you can then start to go deeper and choose which ones might have the greatest effect. Weather, how crowded a location is, and wrong turn rate could all help to improve the accuracy of the ETA model.

6. How would you decide whether updating the permanent deletion rate of Dropbox’s trash feature is a good idea?

Let’s say that Dropbox wants to change the logic of the trash folder from never permanently deleting items to automatically deleting items after 30 days. How would you validate this idea?

See a step-by-step solution to this question on YouTube:

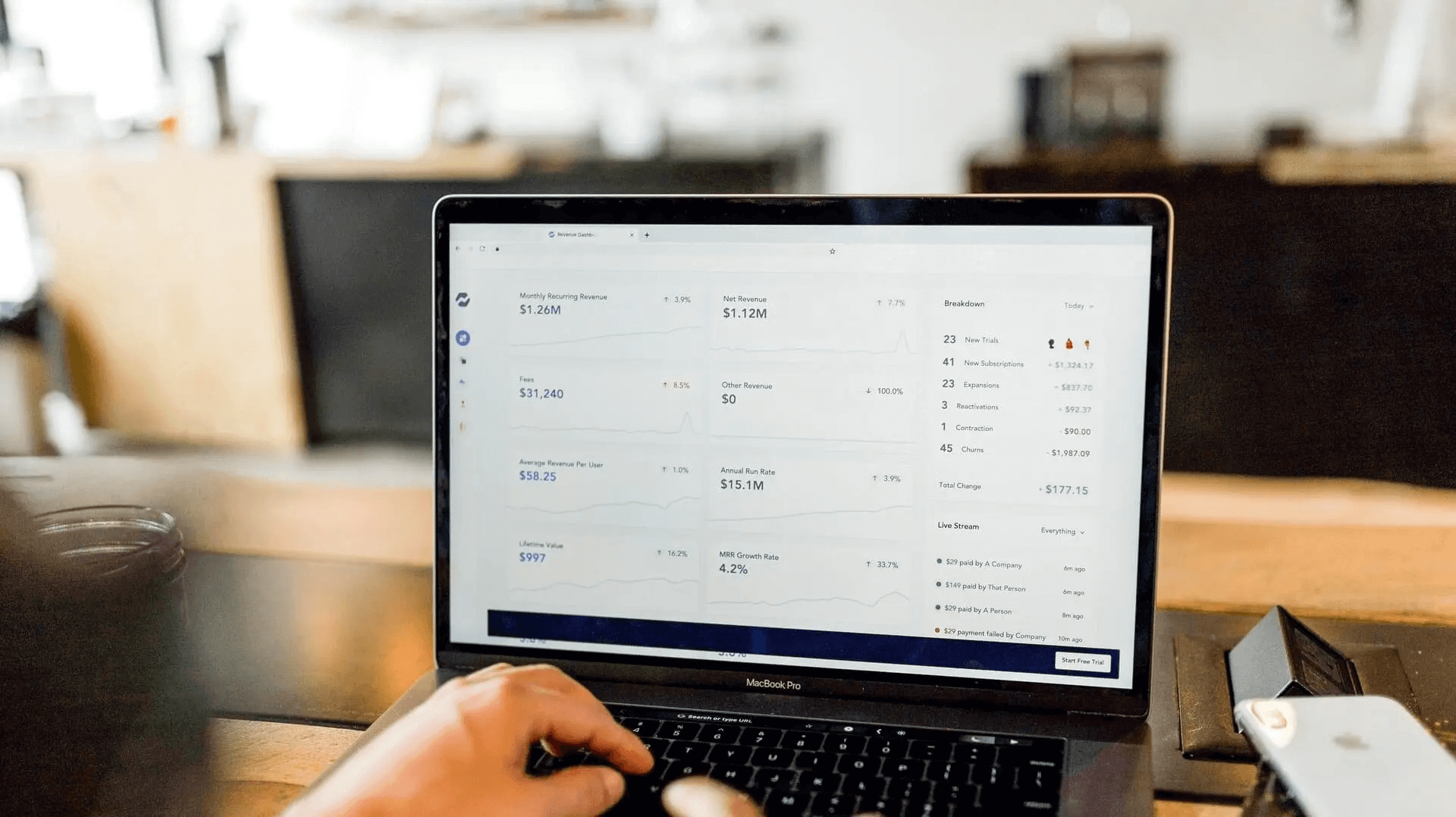

7. How can we measure Netflix’s success in acquiring new users through a 30-day free trial?

More context. Let’s say at Netflix we offer a subscription where customers can enroll for a 30-day free trial. After 30 days, customers will be automatically charged based on the package selected, unless they opt out. What metrics would you look at?

First step, think about Netflix’s business model. They want to focus on:

- Acquiring new users to their subscription plan.

- Decreasing churn and increasing retention.

How would this free-trial plan affect how Netflix might acquire new users or manage their customer churn?
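One way to make these two goals measurable is to track trial-to-paid conversion (acquisition) and churn among converted users (retention). A minimal sketch, assuming hypothetical per-user records; the field names and the 60-day checkpoint are illustrative choices, not Netflix’s actual definitions:

```python
from dataclasses import dataclass

@dataclass
class TrialUser:
    # Hypothetical fields for illustration
    converted: bool          # was charged after the 30-day trial (did not opt out)
    active_after_60d: bool   # still subscribed 30 days after the first charge

def trial_metrics(users):
    """Return (trial-to-paid conversion rate, churn rate among converted users)."""
    if not users:
        return 0.0, 0.0
    paid = [u for u in users if u.converted]
    conversion = len(paid) / len(users)
    churn = sum(not u.active_after_60d for u in paid) / len(paid) if paid else 0.0
    return conversion, churn

cohort = [TrialUser(True, True), TrialUser(True, False),
          TrialUser(False, False), TrialUser(True, True)]
conv, churn = trial_metrics(cohort)
print(f"Trial-to-paid: {conv:.0%}, churn after first paid month: {churn:.0%}")
```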


SQL Interview Questions for Product Analysts (Core Query & Data Extraction)

SQL is the backbone of data extraction for product analysts. Interviewers use SQL questions to assess whether you can pull the right data from relational databases, write efficient queries, and manipulate datasets to answer product-related questions. The types of SQL questions in product analyst interviews range from definition-based discussions, e.g. “When would you use DELETE vs TRUNCATE?”, to writing queries based on provided data. Multi-step SQL case studies are also common. These questions ask you to propose metrics, and then write SQL to pull those metrics.

Advice: Focus on writing clean, optimized queries, understand how GROUP BY and HAVING clauses affect data filtering, and practice real-world case studies like customer segmentation, funnel analysis, and event tracking in SQL.

8. What is the difference between the WHERE and HAVING clause?

Both WHERE and HAVING filter results to meet the conditions you set. The difference shows up when they are used with the GROUP BY clause: WHERE filters individual rows before grouping, while HAVING filters the groups after aggregation, which is why HAVING can reference aggregate functions such as SUM() or COUNT().
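A quick way to see the difference is to run both clauses against a toy table. This sketch uses Python’s built-in sqlite3 module and a hypothetical `orders` table:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (user_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [(1, 5.0), (1, 50.0), (2, 20.0), (2, 15.0), (3, 8.0)],
)

query = """
    SELECT user_id, SUM(amount) AS total
    FROM orders
    WHERE amount >= 10          -- row filter: applied before grouping
    GROUP BY user_id
    HAVING SUM(amount) > 40     -- group filter: applied after aggregation
    ORDER BY user_id
"""
rows = conn.execute(query).fetchall()
print(rows)
```

WHERE drops user 1’s $5 order and all of user 3’s rows before grouping; HAVING then drops user 2, whose remaining total is only 35.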

9. What are the different types of joins? Explain them.

There are four main types of joins:

- Inner join: Returns records that have matching values in both tables

- Left (outer) join: Returns all records from the left table and the matched records from the right table

- Right (outer) join: Returns all records from the right table and the matched records from the left table

- Full (outer) join: Returns all records from both tables, matching rows where possible and filling in NULLs where there is no match
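The two joins used most often can be compared side by side with sqlite3. The `users` and `orders` tables are hypothetical; RIGHT and FULL OUTER JOIN are left out because SQLite only supports them from version 3.39 onward:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users  (id INTEGER, name TEXT);
    CREATE TABLE orders (user_id INTEGER, amount REAL);
    INSERT INTO users  VALUES (1, 'Ana'), (2, 'Ben'), (3, 'Cy');
    INSERT INTO orders VALUES (1, 10.0), (1, 15.0), (2, 7.5), (4, 3.0);
""")

inner = conn.execute("""
    SELECT u.name, o.amount
    FROM users AS u
    INNER JOIN orders AS o ON u.id = o.user_id
    ORDER BY u.id, o.amount
""").fetchall()

left = conn.execute("""
    SELECT u.name, o.amount
    FROM users AS u
    LEFT JOIN orders AS o ON u.id = o.user_id
    ORDER BY u.id, o.amount
""").fetchall()

print(inner)  # only users with at least one matching order
print(left)   # every user; Cy has no orders, so amount is None
```

Note that the order for user 4 appears in neither result, because there is no matching row in `users` (the left table).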

10. How would you isolate the date from a timestamp in SQL?

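EXTRACT pulls a single field (such as YEAR, MONTH, or DAY) out of a date, time, timestamp, or interval value. The syntax is EXTRACT(field FROM source). For example, to find the year from 2022-03-22, you would write:

```sql
SELECT EXTRACT(YEAR FROM DATE '2022-03-22') AS year;
```

To isolate the full date rather than a single field, most dialects support CAST(ts AS DATE), and MySQL and SQLite also provide a DATE(ts) function.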
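Here is the full-date version next to the single-field version, sketched with SQLite through Python’s sqlite3 module. SQLite has no EXTRACT, so `STRFTIME('%Y', ...)` stands in for `EXTRACT(YEAR FROM ...)`:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
ts = "2020-01-01 14:24:37"
# DATE() drops the time portion; STRFTIME('%Y', ...) pulls out a single field
date_part, year_part = conn.execute(
    "SELECT DATE(?), STRFTIME('%Y', ?)", (ts, ts)
).fetchone()
print(date_part, year_part)
```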

11. Write a SQL query to get the last transaction for each day.

More context. Given a table of bank transactions with columns id, transaction_value, and created_at (date and time for each transaction), write a query to get the last transaction for each day. The output should include the id of the transaction, datetime of the transaction, and the transaction amount. Order the transactions by datetime.

Because the created_at column is in DATETIME format, we can have multiple entries that were created at different times on the same date. For example, transaction 1 could happen on ‘2020-01-01 02:21:47’, and transaction 2 could happen on ‘2020-01-01 14:24:37’.

To partition the transactions by day, we need to remove the time from each timestamp. But we still need that time information to sort the transactions within each day.

Is there a way you could do both these tasks at once?
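One way to do both at once is a window function that partitions by the date while ordering by the full timestamp. A minimal sketch using sqlite3 (window functions require SQLite 3.25 or later) with a hypothetical `bank_transactions` table that matches the columns described above:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE bank_transactions (id INTEGER, transaction_value REAL, created_at TEXT);
    INSERT INTO bank_transactions VALUES
        (1, 100.0, '2020-01-01 02:21:47'),
        (2,  25.5, '2020-01-01 14:24:37'),
        (3,  60.0, '2020-01-02 09:00:00'),
        (4,  10.0, '2020-01-02 18:30:00');
""")

query = """
    WITH ranked AS (
        SELECT
            id,
            created_at,
            transaction_value,
            -- number rows within each calendar day, latest timestamp first
            ROW_NUMBER() OVER (
                PARTITION BY DATE(created_at)
                ORDER BY created_at DESC
            ) AS rn
        FROM bank_transactions
    )
    SELECT id, created_at, transaction_value
    FROM ranked
    WHERE rn = 1
    ORDER BY created_at
"""
last_per_day = conn.execute(query).fetchall()
print(last_per_day)
```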

12. How do you create a histogram using SQL?

Let’s say you wanted to create a histogram to model the number of comments per user in the month of January 2020. Assume the bin buckets have intervals of one.

A histogram with bin buckets of size one means that we can avoid the logical overhead of grouping frequencies into specific intervals.

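For example, if we wanted bins of size five, we would need a CASE expression like the one below (assuming a table user_comment_counts with one row per user and a frequency column):

```sql
SELECT
    CASE
        WHEN frequency BETWEEN 0 AND 5 THEN 5
        WHEN frequency BETWEEN 6 AND 10 THEN 10
        -- ...one WHEN per additional bin
    END AS bin,
    COUNT(*) AS users
FROM user_comment_counts
GROUP BY 1
```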
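With bins of size one, each bin is simply the comment count itself, so the histogram reduces to a GROUP BY over per-user counts. A sketch with sqlite3 and hypothetical `users` and `comments` tables; the LEFT JOIN is a design choice that keeps users with zero January comments in the histogram:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER);
    CREATE TABLE comments (user_id INTEGER, created_at TEXT);
    INSERT INTO users VALUES (1), (2), (3), (4);
    INSERT INTO comments VALUES
        (1, '2020-01-03'), (1, '2020-01-09'),
        (2, '2020-01-15'),
        (3, '2020-01-20'), (3, '2020-01-21'),
        (4, '2020-02-01');  -- outside January, so user 4 counts as 0
""")

query = """
    WITH per_user AS (
        SELECT u.id, COUNT(c.user_id) AS comment_count
        FROM users AS u
        LEFT JOIN comments AS c
            ON c.user_id = u.id
           AND c.created_at >= '2020-01-01'
           AND c.created_at <  '2020-02-01'
        GROUP BY u.id
    )
    SELECT comment_count, COUNT(*) AS frequency
    FROM per_user
    GROUP BY comment_count
    ORDER BY comment_count
"""
histogram = conn.execute(query).fetchall()
print(histogram)
```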

13. How will you write a query to get the post success rate?

More context. A table contains information about the phases of writing a new social media post. The action column can have the values post_enter, post_submit, or post_canceled, recorded when a user starts to write a post (post_enter), successfully posts (post_submit), or cancels the post (post_canceled).

Write a query to get the post success rate for each day in the month of January 2020. You can assume that a single user may only make one post per day.

Let’s clearly define the metric we want to calculate before jumping into the problem. We want the post success rate for each day in January 2020, so let’s define post success rate as:

(total posts submitted) / (total posts entered)

Additionally, since the success rate must be broken down by day, we need to assume that a post that is entered is submitted (or canceled) on the same day. What comes next?
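One way to finish the query, sketched with sqlite3 and a hypothetical `events` table with columns `user_id`, `created_at`, and `action`. It counts submits and enters per day and divides, relying on the one-post-per-user-per-day assumption:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE events (user_id INTEGER, created_at TEXT, action TEXT);
    INSERT INTO events VALUES
        (1, '2020-01-01 10:00:00', 'post_enter'),
        (1, '2020-01-01 10:05:00', 'post_submit'),
        (2, '2020-01-01 11:00:00', 'post_enter'),
        (2, '2020-01-01 11:02:00', 'post_canceled'),
        (3, '2020-01-02 09:00:00', 'post_enter'),
        (3, '2020-01-02 09:10:00', 'post_submit');
""")

query = """
    SELECT
        DATE(created_at) AS dt,
        -- 1.0 * forces decimal division; NULLIF avoids dividing by zero
        1.0 * SUM(CASE WHEN action = 'post_submit' THEN 1 ELSE 0 END)
            / NULLIF(SUM(CASE WHEN action = 'post_enter' THEN 1 ELSE 0 END), 0)
            AS post_success_rate
    FROM events
    WHERE created_at >= '2020-01-01' AND created_at < '2020-02-01'
    GROUP BY DATE(created_at)
    ORDER BY dt
"""
daily_rates = conn.execute(query).fetchall()
print(daily_rates)
```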

14. We have a table that represents the total number of messages sent between two users by date on messenger. What are some insights that could be derived from this table?

In addition to thinking through possible insights, what do you think the distribution of the number of conversations created by each user per day looks like? Write a query to get the distribution of the number of conversations created by each user by day in the year 2020. This visualization can also help you hone the insights to be gleaned.
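A minimal sketch of the distribution query, assuming a hypothetical schema `messages(sent_date, user1, user2, msg_count)` and interpreting “conversations created by a user on a day” as the number of distinct people `user1` messaged that day:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE messages (sent_date TEXT, user1 INTEGER, user2 INTEGER, msg_count INTEGER);
    INSERT INTO messages VALUES
        ('2020-03-01', 1, 2, 5),
        ('2020-03-01', 1, 3, 2),
        ('2020-03-01', 2, 3, 1),
        ('2020-03-02', 1, 4, 7),
        ('2019-12-31', 5, 6, 3);  -- outside 2020, excluded
""")

query = """
    WITH daily AS (
        -- conversations each user started on each day of 2020
        SELECT user1, sent_date, COUNT(DISTINCT user2) AS conversations
        FROM messages
        WHERE sent_date >= '2020-01-01' AND sent_date < '2021-01-01'
        GROUP BY user1, sent_date
    )
    SELECT conversations, COUNT(*) AS user_days
    FROM daily
    GROUP BY conversations
    ORDER BY conversations
"""
distribution = conn.execute(query).fetchall()
print(distribution)
```

A distribution with a long right tail (most user-days at one or two conversations, a few very high) would itself be an insight worth investigating.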


Data Analytics & Hypothesis Testing Questions

These questions evaluate your analytical thinking and ability to test hypotheses using data. Companies want analysts who can not only pull data but also interpret it to uncover insights, validate assumptions, and recommend actions. Expect scenario-based problems where you’ll define metrics, test relationships (like CTR vs. rating), and explain patterns in data.

Advice: Practice structuring your approach with a clear hypothesis, define the right success metrics, and use SQL or visualization techniques to support your conclusions. Always explain why a particular insight is relevant to the product/business.

15. We have a hypothesis that the Clickthrough Rate (CTR) is dependent on the search result rating. Write a query to return data to support or disprove this hypothesis.

This is a classic data analytics case study type question, in that you are being asked to:

- Create a metric to analyze a problem.

- Pull the metric you created with SQL.

With this question, start by thinking about how we could prove or disprove the hypothesis. For example, if CTR is high when search ratings are high, and low when search ratings are low, then the hypothesis is supported. With that in mind, you can solve this problem by looking at results split into different search rating buckets.
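A minimal sketch of the bucketed comparison with sqlite3, assuming a hypothetical `search_events` table with one row per displayed result, its `rating` (1 to 5), and a 0/1 `has_click` flag; the bucket boundaries are arbitrary:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE search_events (query TEXT, rating INTEGER, has_click INTEGER);
    INSERT INTO search_events VALUES
        ('shoes', 5, 1), ('shoes', 5, 1), ('shoes', 4, 1), ('shoes', 4, 0),
        ('hats',  2, 0), ('hats',  2, 1), ('hats',  1, 0), ('hats',  1, 0);
""")

query = """
    SELECT
        CASE
            WHEN rating >= 4 THEN 'high (4-5)'
            WHEN rating = 3  THEN 'mid (3)'
            ELSE 'low (1-2)'
        END AS rating_bucket,
        ROUND(AVG(has_click), 2) AS ctr,  -- share of results that were clicked
        COUNT(*) AS impressions
    FROM search_events
    GROUP BY rating_bucket
    ORDER BY MIN(rating) DESC
"""
ctr_by_bucket = conn.execute(query).fetchall()
for row in ctr_by_bucket:
    print(row)
```

CTR rising with the rating bucket supports the hypothesis; reporting impressions per bucket helps guard against reading a trend into small samples.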

16. Given three tables representing customer transactions and customer attributes, write a query to get the average customer order value by gender.

Quick solution. For this problem, we assume the question asks for the average order value among users who have ordered at least once. Therefore, we can apply an INNER JOIN between users and transactions.

```sql
SELECT
    u.sex,
    ROUND(AVG(quantity * price), 2) AS aov
FROM users AS u
INNER JOIN transactions AS t
    ON u.id = t.user_id
INNER JOIN products AS p
    ON t.product_id = p.id
GROUP BY 1
```

17. Given a table with customer purchase data, write a query to output a table that includes every product name a user has ever purchased.

More context. The products table includes id, name, and category_id columns. In addition to the product name, your output should include a boolean column with a value of 1 if the user has previously purchased a product from that category, and 0 if it is their first purchase in that category.

Your output should look like this:

| product_name | category_previously_purchased |
|---|---|
| toy car | 0 |
| toy plane | 1 |
| basketball | 0 |
| football | 1 |
| baseball | 1 |
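A minimal sketch with sqlite3, assuming a hypothetical `purchases(user_id, product_id, created_at)` table alongside `products`. The sample rows are invented so that the output matches the table above: a window function ranks each user’s purchases within a category, and only the first one gets a 0.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE products  (id INTEGER, name TEXT, category_id INTEGER);
    CREATE TABLE purchases (user_id INTEGER, product_id INTEGER, created_at TEXT);
    INSERT INTO products VALUES
        (1, 'toy car', 10), (2, 'toy plane', 10),
        (3, 'basketball', 20), (4, 'football', 20), (5, 'baseball', 20);
    INSERT INTO purchases VALUES
        (1, 1, '2020-01-01'), (1, 2, '2020-01-05'), (1, 3, '2020-01-07'),
        (1, 4, '2020-01-09'), (1, 5, '2020-01-12');
""")

query = """
    WITH ranked AS (
        SELECT
            pu.user_id,
            pu.created_at,
            p.name AS product_name,
            -- 1 for the user's first purchase in each category, 2 for the next, ...
            ROW_NUMBER() OVER (
                PARTITION BY pu.user_id, p.category_id
                ORDER BY pu.created_at
            ) AS purchase_rank
        FROM purchases AS pu
        JOIN products AS p ON p.id = pu.product_id
    )
    SELECT
        product_name,
        CASE WHEN purchase_rank = 1 THEN 0 ELSE 1 END AS category_previously_purchased
    FROM ranked
    ORDER BY user_id, created_at
"""
rows = conn.execute(query).fetchall()
for row in rows:
    print(row)
```

If two purchases in the same category share a timestamp, ROW_NUMBER breaks the tie arbitrarily, so a real solution may need a tiebreaker column.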

A/B Testing & Experiment Design Questions

A/B testing questions assess your understanding of experimental design, statistical significance, and data-driven decision-making. Interviewers look for candidates who can set up controlled experiments, formulate strong hypotheses, and interpret p-values, confidence intervals, and test validity.

Advice: Be ready to discuss how you’d design an experiment from scratch, the statistical methods you’d use to analyze results, and how to handle edge cases like Simpson’s Paradox or sample bias. Always clarify assumptions before jumping into the solution.

18. What is the difference between a t-test and a z-test?

In practice, the choice usually comes down to sample size and whether the population variance is known. Z-tests are typically used when the sample is large, while t-tests are preferred for small samples.

Further, a z-test determines whether the means of two samples (or a sample and a population) differ, and it requires a known population variance or a sample large enough that the sample variance is a reliable stand-in. A t-test answers the same question, but it assumes the data are approximately normally distributed and estimates the variance from the sample itself, which is why it suits cases where the variance is unknown.

19. How do you structure a hypothesis for an A/B test?

A question like this assesses your foundational knowledge of A/B testing. A sample response might include that there are three components you need:

- The variable.

- The result.

- A rationale for why the variable produced the given result.

Then, provide an example. If you wanted to know what effect an upsell offer on a cart had on users (more personalized vs. best-sellers), you might say: If we personalize the upsell offer (variable), then customers will convert at higher rates (result), because the personalized upsells are more relevant for the audience (rationale).

20. What types of questions should you ask before designing an A/B test?

This question assesses your ability to design an A/B test. First, ask about the problem the A/B test is trying to solve. This will help you tailor the questions you would ask. Some examples you might use are:

- How big is the sample size?

- Is this a multivariate test?

- Are the control and test groups truly randomized?
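For the sample-size question, a commonly used normal-approximation formula for a two-sided test comparing two conversion rates is sketched below using only the standard library. The baseline rate, lift, alpha, and power in the usage line are hypothetical:

```python
import math
from statistics import NormalDist

def sample_size_per_group(p_control: float, p_test: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed in each group to detect p_control -> p_test."""
    if p_control == p_test:
        raise ValueError("rates must differ to size a test")
    z = NormalDist()                     # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)   # two-sided significance threshold
    z_beta = z.inv_cdf(power)            # quantile for the desired power
    p_bar = (p_control + p_test) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_control * (1 - p_control) + p_test * (1 - p_test))
    ) ** 2
    return math.ceil(numerator / (p_control - p_test) ** 2)

print(sample_size_per_group(0.10, 0.12))  # 10% baseline, 2-point lift
```

Smaller effects need disproportionately more users, since the required size scales with one over the squared difference.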

21. You are A/B testing a feature to increase conversion rates. The results show a p-value of 0.04. How would you assess the validity of the result?

Let’s start out by asking some clarifying questions here:

- What details is the interviewer leaving out of the question?

- Are there more assumptions that we can make about the context of how the A/B test was set up and measured that will lead us to discovering invalidity?

- What rephrasing of the question would help us understand more about the problem at hand?

Basically, this type of question is asking: Was the A/B test set up and measured correctly? If it was set up and measured correctly, what could we say about the p-value?
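If the setup checks out, a p-value for conversion rates typically comes from a test like the two-proportion z-test below. This standard-library sketch assumes conversions are counted once per user and the groups are independent; the counts in the usage line are hypothetical:

```python
import math

def two_proportion_ztest(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference in conversion rates (B minus A).

    Returns (z statistic, p-value) using the pooled-proportion standard error.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value

z, p = two_proportion_ztest(conv_a=500, n_a=10_000, conv_b=560, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

The formula only yields a trustworthy p-value if the test ran as designed, for example with the sample size fixed in advance rather than stopping as soon as the result looked significant.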

22. How would you measure the impact that financial incentives have on user response rates?

More context. The results of an A/B test show that the treatment group ($10 reward) has a 30% response rate, while the control group without rewards has a 50% response rate. Can you explain why that happened? How would you improve the experimental design?


Python Interview Questions for Product Analysts

For roles that require more technical depth, Python is often tested for data manipulation, exploratory analysis, and basic scripting tasks. You might be asked to write functions, process datasets, or solve algorithmic problems that involve data cleaning or transformation.

Advice: Focus on core Python skills—lists, dictionaries, loops, and libraries like pandas and NumPy. Practice coding simple automation tasks and think in terms of how you’d scale your scripts to handle large datasets.

23. What is a split in Python? Why is it used?

The split() method breaks a string into a list of substrings. By default, it splits on whitespace, so the string “basic python” becomes ['basic', 'python']. Here’s an example:

```python
string = 'basic python'
print(string.split())
```

Output:

```
['basic', 'python']
```

24. Write a Python function to sort a numerical dataset.

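```python
def sort_numbers(values):
    """Convert each entry to an int and return a new ascending list."""
    return sorted(int(v) for v in values)

print(sort_numbers(['1', '4', '0', '6', '9']))
```

Output:

```
[0, 1, 4, 6, 9]
```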

25. Write a function that can take a string and return a list of bigrams.

At its core, a bigram is a pair of words that appear next to each other. In feature engineering for an NLP model, using two-word features instead of single words captures the interaction between adjacent words. To parse bigrams out of a string, we first split the input string with Python’s .split() method, which creates a list with each individual word as an element. We then create an empty list that will eventually be filled with tuples.

Once we have the individual words, we loop through the list k-1 times (where k is the number of words in the sentence) and pair the current word with the next word as a tuple. Each tuple is added to the list that we eventually return.

```python
def find_bigrams(sentence):
    input_list = sentence.split()
    bigram_list = []

    # Now we have to loop through each word
    for i in range(len(input_list) - 1):
        # strip the whitespace and lower the word to ensure consistency
        bigram_list.append((input_list[i].strip().lower(), input_list[i + 1].strip().lower()))

    return bigram_list
```

26. Write a function to generate N samples from a normal distribution and plot the histogram. You may omit the plot to test your code.

This is a relatively simple problem because we have to set up our distribution and then generate n samples from it, which are then plotted. In this question, we make use of the scipy library which is a library made for scientific computing.

First, we will declare a standard normal distribution. A standard normal distribution, for those of you who may have forgotten, is the normal distribution with mean = 0 and standard deviation = 1. To declare a normal distribution, we use scipy’s stats.norm(loc, scale) function, where loc is the mean and scale is the standard deviation (not the variance), and set loc = 0 and scale = 1 as mentioned above.
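Following that approach, here is a minimal sketch built on `scipy.stats.norm`. The seed and bin count are arbitrary choices, and matplotlib is imported only when a plot is requested, since the question allows omitting it:

```python
from scipy import stats

def normal_samples(n: int, mean: float = 0.0, sd: float = 1.0,
                   seed: int = 42, plot: bool = False):
    """Draw n samples from a normal distribution; optionally plot a histogram."""
    dist = stats.norm(loc=mean, scale=sd)          # scale is the standard deviation
    samples = dist.rvs(size=n, random_state=seed)  # NumPy array of length n
    if plot:
        import matplotlib.pyplot as plt            # only needed for the plot
        plt.hist(samples, bins=30)
        plt.title(f"{n} samples from N({mean}, {sd}^2)")
        plt.show()
    return samples

samples = normal_samples(10_000)
print(round(float(samples.mean()), 2), round(float(samples.std()), 2))
```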

| Question | Topic | Difficulty |
|---|---|---|
| Write a SQL query to select the 2nd highest salary in the engineering department. Note: If more than one person shares the highest salary, the query should select the next highest salary. | SQL | Easy |
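One way to answer the salary question, sketched with sqlite3 and a hypothetical schema (`employees` with `salary` and `department_id`, `departments` with `name`): selecting distinct salaries collapses ties at the top into one value, and OFFSET 1 then skips the highest.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE departments (id INTEGER, name TEXT);
    CREATE TABLE employees (id INTEGER, salary INTEGER, department_id INTEGER);
    INSERT INTO departments VALUES (1, 'engineering'), (2, 'sales');
    INSERT INTO employees VALUES
        (1, 200000, 1), (2, 200000, 1),  -- two people share the top salary
        (3, 150000, 1), (4, 120000, 1),
        (5, 300000, 2);                   -- highest overall, but not in engineering
""")

query = """
    SELECT DISTINCT e.salary
    FROM employees AS e
    JOIN departments AS d ON d.id = e.department_id
    WHERE d.name = 'engineering'
    ORDER BY e.salary DESC
    LIMIT 1 OFFSET 1   -- skip the highest distinct salary
"""
second_highest = conn.execute(query).fetchone()[0]
print(second_highest)
```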

Statistics & Probability Interview Questions for Product Analysts

These questions test your statistical literacy and ability to interpret data rigorously. Topics include p-values, confidence intervals, estimators, distributions, and understanding probability scenarios. They’re critical for assessing whether you can draw valid conclusions from data, especially in A/B testing and modeling situations.

Advice: Brush up on statistical fundamentals and intuitive explanations, as interviewers often expect you to explain concepts like p-values or z-tests in layman’s terms. Be prepared to tackle hypothetical problems involving probability calculations and statistical paradoxes.

27. How would you explain a p-value to a non-technical person?

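In the simplest terms, a p-value measures how surprising your results would be if nothing interesting were going on. It is the probability of seeing a result at least as extreme as the one you observed, assuming the null hypothesis is true (typically, that there is no real relationship between the variables and any difference is due to random chance). The higher the p-value, the more consistent the data are with chance alone, so you fail to reject the null hypothesis. A small p-value (commonly below 0.05) indicates a statistically significant result: chance alone would rarely produce what you saw, so you reject the null and conclude that something more than randomness likely explains how the variables interact.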
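To make the definition tangible, a p-value can be estimated by simulation: shuffle the group labels many times and count how often chance alone produces a gap at least as large as the observed one. This is a permutation test, shown here as a minimal sketch with hypothetical data and an arbitrary number of shuffles:

```python
import random

def permutation_p_value(group_a, group_b, n_shuffles=10_000, seed=0):
    """Two-sided p-value for a difference in means, estimated by shuffling labels."""
    rng = random.Random(seed)
    observed = abs(sum(group_b) / len(group_b) - sum(group_a) / len(group_a))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_shuffles):
        rng.shuffle(pooled)  # pretend the labels carry no information
        a, b = pooled[:n_a], pooled[n_a:]
        if abs(sum(b) / len(b) - sum(a) / len(a)) >= observed:
            extreme += 1
    return extreme / n_shuffles

# Hypothetical conversions (1 = converted) for two page versions
control = [0, 1, 0, 0, 1, 0, 0, 0, 1, 0]
variant = [1, 1, 0, 1, 1, 0, 1, 1, 0, 1]
print(permutation_p_value(control, variant))
```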

28. Determine the cause of drop in capital approval rates.

More context: The overall capital approval rate has gone down. Let’s say last week it was 85%, but this week it fell to 82%, a statistically significant reduction.

The first analysis shows that all approval rates stayed flat or increased over time when looking at the individual products.

- Product 1: 84% to 85% week over week

- Product 2: 77% to 77% week over week

- Product 3: 81% to 82% week over week

- Product 4: 88% to 88% week over week

This is an example of Simpson’s Paradox, a phenomenon in statistics and probability in which a trend appears in several groups but disappears or reverses when the groups are combined. This often happens because the subgroups sit at different levels (they are offset from each other on the Y-axis), so the aggregate reflects shifts in the mix between groups rather than the trends within them. In this example, there could have been many more applications for Product 2, the product with the lowest approval rate, which pulled the overall approval rate down even though Product 2’s own rate did not drop.
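The numbers below are hypothetical application volumes chosen to reproduce the rates in the example. Every product’s rate holds or rises, yet Product 2 grows from 10% to 30% of applications, and the overall rate drops from 85% to 82%:

```python
# (approved, applications) per product; volumes are hypothetical
last_week = {"Product 1": (252, 300), "Product 2": (77, 100),
             "Product 3": (81, 100), "Product 4": (440, 500)}
this_week = {"Product 1": (85, 100), "Product 2": (231, 300),
             "Product 3": (328, 400), "Product 4": (176, 200)}

def overall_rate(week):
    """Pooled approval rate across all products."""
    approved = sum(a for a, _ in week.values())
    applications = sum(n for _, n in week.values())
    return approved / applications

for product in last_week:
    before = last_week[product][0] / last_week[product][1]
    after = this_week[product][0] / this_week[product][1]
    print(f"{product}: {before:.0%} -> {after:.0%}")

print(f"Overall: {overall_rate(last_week):.0%} -> {overall_rate(this_week):.0%}")
```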

29. Given two fair dice, what is the probability of getting scores that sum to 4? to 8?

This is a simple calculation problem:

There are 3 combinations that sum to 4 (1+3, 3+1, 2+2): P(sum of 4) = 3⁄36 = 1⁄12

There are 5 combinations that sum to 8 (2+6, 6+2, 3+5, 5+3, 4+4): P(sum of 8) = 5⁄36
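The same answers can be checked by brute force: enumerate all 36 ordered outcomes and keep exact fractions. A minimal sketch:

```python
from fractions import Fraction
from itertools import product

def prob_sum(target: int, sides: int = 6) -> Fraction:
    """Exact probability that two fair dice sum to `target`."""
    outcomes = list(product(range(1, sides + 1), repeat=2))  # all ordered pairs
    hits = sum(1 for a, b in outcomes if a + b == target)
    return Fraction(hits, len(outcomes))

print(prob_sum(4), prob_sum(8))  # 1/12 5/36
```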

30. What are z-tests and t-tests? What are they used for? What is the difference between them? When should you use one over the other?

In the simplest terms, Z-tests and t-tests are statistical tools used to determine if a sample mean is close to a known population mean. Both tests assume the data follows a normal distribution and involve similar hypotheses, but they differ in the probability distribution they use to calculate p-values. Z-tests rely on the standard normal distribution, making them more suitable for large sample sizes where the population standard deviation is known. On the other hand, t-tests use the t-distribution, which has “fatter tails,” making it more appropriate for smaller sample sizes where the population standard deviation is unknown. As the sample size increases, the t-distribution approaches the standard normal distribution.
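A quick simulation shows why the fatter tails matter for small samples. If you estimate the standard deviation from the sample but still compare against the normal cutoff of 1.96, you reject a true null hypothesis more often than the nominal 5%. A standard-library sketch; the sample sizes, trial count, and seed are arbitrary:

```python
import math
import random

def false_positive_rate(n: int, trials: int = 10_000, seed: int = 1) -> float:
    """Share of trials where |t| > 1.96 even though the true mean really is 0."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(0, 1) for _ in range(n)]
        mean = sum(sample) / n
        sd = math.sqrt(sum((x - mean) ** 2 for x in sample) / (n - 1))  # sample SD
        t = mean / (sd / math.sqrt(n))
        if abs(t) > 1.96:  # normal (z) critical value for a two-sided 5% test
            rejections += 1
    return rejections / trials

for n in (5, 30, 200):
    print(f"n = {n:>3}: false positive rate ~ {false_positive_rate(n):.3f}")
```

With n = 5 the rate lands well above 5%, because the t-distribution with 4 degrees of freedom has fatter tails than the normal; by n = 200 it is close to 5%.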

31. What is an unbiased estimator and can you provide an example for a layman to understand?

In the simplest terms, an unbiased estimator is a statistic used to estimate a population parameter without systematic error. The idea is that, on average, the estimator will give you the correct value of the parameter you’re trying to measure. For example, if you’re trying to determine the average height of people in a city, the sample mean from a well-chosen sample would be an unbiased estimator of the population mean. This means that, if you were to take many different samples, the average of these sample means would converge to the true average height of the entire population, with no systematic overestimation or underestimation.
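A small simulation makes the height example concrete: draw many random samples from a hypothetical city population and average the sample means. The population parameters, sample size, and seed are made up for illustration:

```python
import random

rng = random.Random(7)
# Hypothetical city: 100,000 heights in cm
population = [rng.gauss(170, 10) for _ in range(100_000)]
true_mean = sum(population) / len(population)

def average_of_sample_means(n_samples: int = 2_000, sample_size: int = 50) -> float:
    """Average of many sample means; should land close to the true mean."""
    means = []
    for _ in range(n_samples):
        sample = rng.sample(population, sample_size)  # simple random sample
        means.append(sum(sample) / sample_size)
    return sum(means) / len(means)

estimate = average_of_sample_means()
print(f"true mean: {true_mean:.2f} cm, average of sample means: {estimate:.2f} cm")
```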

Product Analyst Behavioral Interview Questions

Behavioral interview questions are designed to evaluate your cultural fit, soft skills, and how you approach real-world problems. These questions dive deep into your past experiences, collaboration style, problem-solving mindset, and communication skills. Hiring managers want to know how you think, how you handle ambiguity, and whether your working style aligns with the company’s values and team dynamics.

Pro Tip: When answering behavioral questions, use the STAR method (Situation, Task, Action, Result) to structure your responses. Focus on your direct contributions and impact, not just the team’s achievements.

32. What are your favorite data visualization techniques?

Instead of rattling off chart types, approach this question with context. The “best” visualization always depends on the data and the story you’re trying to tell. For example, you might prefer heatmaps for user behavior flows or funnel charts for conversion metrics. Share examples from your past work—describe the visualization, its purpose, and how it added clarity or drove a key decision.

33. What are some of your favorite products and why?

This question gauges your product intuition and user-centric thinking. Choose a product you’re genuinely passionate about. First, explain what the product does and its key features. Then, describe the user problem it solves and why it stands out against competitors. The best answers also reflect on why this product resonates with you personally, connecting it to your perspective as a product analyst.

34. The product manager gives you vague directions for a project. How do you proceed?

Interviewers want to see how you handle ambiguity and cross-functional collaboration. Start by emphasizing the importance of asking clarifying questions:

- What is the end goal?

- Are examples available?

- Can you provide some more details?

- What overarching goal is this tied to?

Once you have a clearer understanding, proactively propose a structured plan and seek the PM’s feedback. Demonstrating that you can navigate unclear situations with thoughtful probing and initiative is key.

35. How do you stay updated on market trends?

This is a test of your curiosity and continuous learning mindset. Discuss how you keep up with product analytics trends through:

- Product-related podcasts

- Blogs or news sources

- People you follow on Twitter

- How you acquire new skills

- Interesting case studies you have read

You can also mention online communities or courses you’ve taken to upskill. Tailor your answer to show that you are proactively sharpening your product sense and data skills.

36. How would you assess the quality of a dataset?

A strong answer showcases your attention to detail and data rigor. Mention specific data quality metrics you evaluate, such as:

- Completeness

- Validity

- Timeliness

- Consistency

Talk about how you perform sanity checks, look for anomalies, and validate assumptions before trusting data for analysis. Highlighting a real scenario where you uncovered data issues would add extra weight to your answer.
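A minimal sketch of how a few of these checks might be scripted in plain Python, assuming hypothetical event records and made-up rules (an allowed list of event names, a 24-hour freshness window). Consistency checks, such as comparing totals across two sources, are not shown:

```python
from datetime import datetime, timedelta

ALLOWED_EVENTS = {"view", "click", "purchase"}  # hypothetical validity rule

def quality_report(rows, now, max_age=timedelta(hours=24)):
    """Return completeness, validity, and timeliness as shares of rows (0 to 1)."""
    total = len(rows)
    if total == 0:
        return {"completeness": 0.0, "validity": 0.0, "timeliness": 0.0}
    complete = sum(all(r.get(k) is not None for k in ("user_id", "event", "ts")) for r in rows)
    valid = sum(r.get("event") in ALLOWED_EVENTS for r in rows)
    fresh = sum(r.get("ts") is not None and now - r["ts"] <= max_age for r in rows)
    return {
        "completeness": complete / total,
        "validity": valid / total,
        "timeliness": fresh / total,
    }

now = datetime(2025, 1, 2, 12, 0)
rows = [
    {"user_id": 1, "event": "click", "ts": datetime(2025, 1, 2, 9, 0)},
    {"user_id": 2, "event": "clik", "ts": datetime(2025, 1, 2, 10, 0)},    # typo in event name
    {"user_id": None, "event": "view", "ts": datetime(2025, 1, 1, 8, 0)},  # missing user, stale
    {"user_id": 4, "event": "refund", "ts": datetime(2025, 1, 2, 11, 0)},  # not an allowed event
]
report = quality_report(rows, now)
print(report)
```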

37. What would your first 90 days on the job look like?

This question evaluates your onboarding strategy and proactive mindset. Structure your response into 30-60-90 day blocks:

First 30 days: Focus on onboarding, understanding product workflows, data infrastructure, and team dynamics.

Next 30 days: Start contributing to ongoing projects, shadow analyses, and provide quick-win insights where possible.

Final 30 days: Own a small project end-to-end, drive meaningful analysis, and align your outputs to key business objectives.

Tailor your response to showcase how you’d bring value early while ramping up effectively.

38. Tell me about a time you disagreed with a product decision—how did you handle it?

This question evaluates your ability to navigate conflict constructively and influence product outcomes using data and collaboration. A strong answer explains that you respectfully challenged the decision by gathering relevant data or user insights, clearly communicated your perspective with evidence, and worked collaboratively with the team to test alternative solutions or reach consensus. It demonstrates critical thinking, communication skills, and flexibility, showing you can advocate for the best product outcome without creating friction.

39. Describe a time you automated or streamlined a repetitive data process

Interviewers ask this to understand your initiative, problem-solving skills, and technical ability to improve efficiency. Your answer should highlight identifying manual, time-consuming processes, designing an automated or streamlined workflow (using SQL, scripting, or tools), and quantifying the impact such as saved hours or error reduction. It reflects your drive to optimize workflows and enable teams to focus on higher-value analysis.

40. How do you prioritize requests from different stakeholders (PM, Marketing, Engineering)?

This question tests your ability to manage competing priorities and stakeholder expectations effectively. A strong answer describes a structured prioritization approach: evaluating the business impact, urgency, and effort required for each request; aligning with company goals; and communicating transparently with stakeholders to negotiate timelines or trade-offs. It shows you can balance cross-functional demands while focusing on initiatives that maximize value.
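One way to make that structured approach concrete is a simple scoring rule. The sketch below is illustrative only. The 1 to 5 scales, the hypothetical `Request` fields, and the formula impact × urgency ÷ effort are assumptions, loosely in the spirit of RICE-style scoring (Reach, Impact, Confidence, Effort), not a standard you’d be expected to recite.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """A hypothetical incoming analysis request; fields and scales are assumptions."""
    name: str
    stakeholder: str
    impact: int   # 1-5: expected business impact
    urgency: int  # 1-5: how time-sensitive the request is
    effort: int   # 1-5: estimated analyst effort (higher means more work)

    @property
    def score(self) -> float:
        # Illustrative rule: value (impact x urgency) per unit of effort.
        return self.impact * self.urgency / self.effort


def prioritize(requests):
    """Order requests from highest to lowest score; ties keep their input order."""
    return sorted(requests, key=lambda r: r.score, reverse=True)


queue = prioritize([
    Request("Churn deep-dive", "PM", impact=5, urgency=3, effort=3),
    Request("Campaign dashboard tweak", "Marketing", impact=2, urgency=4, effort=1),
    Request("Logging audit", "Engineering", impact=4, urgency=2, effort=4),
])
for r in queue:
    print(f"{r.score:.1f}  {r.stakeholder:<12} {r.name}")
```

A score like this is a starting point for the conversation with stakeholders, not a replacement for checking alignment with company goals and negotiating timelines openly.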

| Question | Topic | Difficulty |
|---|---|---|
| Write a SQL query to select the 2nd highest salary in the engineering department. Note: if more than one person shares the highest salary, the query should select the next highest salary. | SQL | Easy |
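The second-highest-salary question omits its example tables, so the sketch below assumes a hypothetical schema: an `employees` table with `salary` and `department_id`, and a `departments` table with `id` and `name`. It runs the query against an in-memory SQLite database through Python’s built-in `sqlite3` module. Keeping only salaries strictly below the department maximum skips ties at the top, which is the edge case the question calls out.

```python
import sqlite3

# Hypothetical schema: the question's actual tables are not shown.
SCHEMA = """
CREATE TABLE departments (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE employees (
    id INTEGER PRIMARY KEY,
    salary INTEGER,
    department_id INTEGER REFERENCES departments(id)
);
"""

# Largest engineering salary strictly below the engineering maximum,
# so duplicate top salaries are skipped and the next distinct value wins.
SECOND_HIGHEST_SQL = """
SELECT MAX(e.salary) AS second_highest_salary
FROM employees AS e
JOIN departments AS d ON e.department_id = d.id
WHERE d.name = 'engineering'
  AND e.salary < (
      SELECT MAX(e2.salary)
      FROM employees AS e2
      JOIN departments AS d2 ON e2.department_id = d2.id
      WHERE d2.name = 'engineering'
  )
"""


def second_highest_salary(conn):
    """Return the 2nd highest distinct engineering salary, or None if there isn't one."""
    return conn.execute(SECOND_HIGHEST_SQL).fetchone()[0]


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany("INSERT INTO departments VALUES (?, ?)", [(1, "engineering"), (2, "marketing")])
conn.executemany(
    "INSERT INTO employees (salary, department_id) VALUES (?, ?)",
    [(150000, 1), (150000, 1), (120000, 1), (100000, 1), (200000, 2)],
)
print(second_highest_salary(conn))  # 120000
```

If no second distinct salary exists, `MAX` over an empty set returns `NULL` (`None` in Python). A `DENSE_RANK()` window-function version is another common answer.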

FAQs on Product Analyst Interview Questions

1. What is the difference between product analytics and data analytics?

Product analytics focuses on tracking user interactions and product usage to improve product features and user experience. Data analytics is a broader discipline that involves analyzing datasets to derive insights for various business functions, including finance, marketing, operations, and product.

2. What makes a good product analyst?

A good product analyst possesses strong analytical and problem-solving skills, a deep understanding of user behavior, proficiency in tools like SQL and Excel, and the ability to communicate data-driven insights effectively. They should also have a product mindset, aligning data findings with business goals and user needs.

3. What are product analytics tools?

Product analytics tools are platforms that help businesses track, measure, and analyze user interactions with digital products. Common tools include Mixpanel, Amplitude, Google Analytics, and Heap, which provide capabilities like funnel analysis, cohort tracking, retention metrics, and behavioral segmentation. BI tools such as Tableau are often used alongside them to build dashboards and reports.

4. What are the five factors to be considered in product analysis?

Five factors commonly considered in product analysis are:

- User Engagement Metrics (DAU/WAU/MAU, session time)

- Feature Usage Trends

- Conversion Funnels

- Retention and Churn Rates

- Business Impact Metrics (LTV, ARPU, Revenue impact)
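Most of these factors reduce to a handful of ratios. The sketch below shows one common way to compute several of them in plain Python. The function names and inputs (daily active counts, funnel step counts, lists of user IDs) are hypothetical, and real definitions of "active" user or the retention window vary by company.

```python
def stickiness(daily_active_counts, monthly_active_users):
    """Average DAU divided by MAU, a common engagement (stickiness) ratio."""
    avg_dau = sum(daily_active_counts) / len(daily_active_counts)
    return avg_dau / monthly_active_users


def funnel_conversion(step_counts):
    """Step-to-step conversion rates, e.g. visits -> signups -> purchases."""
    return [nxt / cur for cur, nxt in zip(step_counts, step_counts[1:])]


def retention_rate(cohort_users, active_later):
    """Share of a signup cohort still active in a later period (churn = 1 - retention)."""
    cohort = set(cohort_users)
    return len(cohort & set(active_later)) / len(cohort)


def arpu(revenue, active_users):
    """Average revenue per user over the same period."""
    return revenue / active_users


print(stickiness([100, 200, 300], 1000))        # 0.2
print(funnel_conversion([1000, 400, 100]))      # [0.4, 0.25]
print(retention_rate([1, 2, 3, 4], [2, 4, 9]))  # 0.5
print(arpu(5000.0, 1000))                       # 5.0
```

Note that `retention_rate` only counts users from the original cohort, so new users who appear later (user 9 above) don’t inflate retention.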

5. What are the 5 C’s of product strategy?

The 5 C’s framework in product strategy includes:

- Company – Aligning with company objectives and vision.

- Customer – Understanding target audience needs and pain points.

- Competitors – Analyzing market positioning and differentiation.

- Collaborators – Working with partners, vendors, or teams.

- Context – Considering external factors like regulations, technology trends, or economic shifts.

6. What are the best analytical interview questions?

Some of the best analytical interview questions for product analysts include:

- How would you diagnose a sudden drop in product engagement metrics?

- Design an A/B test for a new feature.

- How would you analyze user churn?

- Which metrics would you track to measure the success of a product launch?

- How do you prioritize which experiments to run?

These questions test your critical thinking, problem structuring, and data interpretation abilities.
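For the A/B-test questions, interviewers often want you to interpret a result, not just design the test. Below is a minimal sketch of a two-sided, two-proportion z-test on conversion counts, using only the standard library (`statistics.NormalDist`). It assumes one pre-registered primary metric, a sample size fixed in advance, independent users, and samples large enough for the normal approximation; the counts in the example are made up.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates (pooled standard error).

    Returns (absolute lift of B over A, z statistic, p-value).
    Assumes 0 < pooled rate < 1; otherwise the standard error is zero.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Under the null hypothesis both groups share one conversion rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, z, p_value


lift, z, p = two_proportion_z_test(conv_a=200, n_a=2000, conv_b=260, n_b=2000)
print(f"lift={lift:.3f}, z={z:.2f}, p={p:.4f}")
```

With 200 of 2,000 control users converting versus 260 of 2,000 in treatment, the lift is 3 percentage points, with z of roughly 2.97 and a p-value of roughly 0.003. A significant p-value alone doesn’t settle the launch decision; the size of the effect and its business impact still matter.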

7. How technical is a Product Analyst interview?

Product Analyst interviews can be moderately technical. You’re expected to write SQL queries, understand A/B testing and basic statistics, and sometimes code in Python. However, product sense, business problem-solving, and communication skills are equally important in assessing your fit for the role.

8. What types of case study questions are common in Product Analyst interviews?

Expect case study questions that involve:

- Investigating metric anomalies.

- Suggesting product improvements based on data.

- Designing and interpreting experiments (A/B tests).

- Measuring product success post-launch.

These questions evaluate your analytical approach, product intuition, and decision-making under real-world business scenarios.

Ready to ace your Product Analyst interviews? It’s not just about technical skills—top companies want to see how you think through product scenarios, interpret data, and collaborate cross-functionally. That’s why our Google Product Analyst Interview Guide and Amazon Product Analyst Interview Guide dive deep into real-world questions and strategic frameworks.

To level up your prep, check out our Product Metrics & Analytics Learning Path or explore broader Interview Paths on IQ. Bookmark this page, share it with peers, and start preparing with clarity—not confusion. You’ve got this.

More Resources for Product Analyst Interviews

Join Interview Query to prepare for your product analyst interview. Premium members have access to:

- 500+ Real Interview Questions

- 1,600+ Company Interview Guides

- Product Sense Data Science Course

- 35 Practice Take-Homes

Also, try reading through our blog if you want to learn more about what we cover here on IQ. Here are some examples of our recently published articles that you might want to look into: