Stanford's 2026 AI Index: Agentic AI Hiring Is Surging, But Data Interviews Still Test the Basics

Agentic AI Hiring Is Rising Fast

The newest AI hiring data makes it clear that employers are talking about AI skills far more often than they were even a year ago. In Lightcast’s contribution to Stanford’s 2026 AI Index, AI skills appeared in 2.5% of all U.S. job postings, up 55% year over year and 297% from a decade ago. Mentions of the “agentic AI” skill cluster jumped more than 280% from 2024 to 2025, reaching roughly 90,000 U.S. job postings.

That kind of headline is easy to misread. It can sound like candidates should spend less time on fundamentals and more time learning prompt tricks, workflow tools, and AI jargon. For data roles in particular, that would be the wrong takeaway.

Recent IQ coaching signals and interview writeups suggest a more grounded reality. AI fluency is increasingly part of the workflow, but the interviews that decide who gets hired still revolve around core skills: SQL, experimentation, Python, communication, and business judgment.

Why Agentic AI Job Postings Still Don't Replace the Fundamentals

The Stanford AI Index finding matters because it captures a real shift in employer demand. “Agentic AI” is no longer a fringe keyword, and the broader category of AI skills is showing up across more roles.

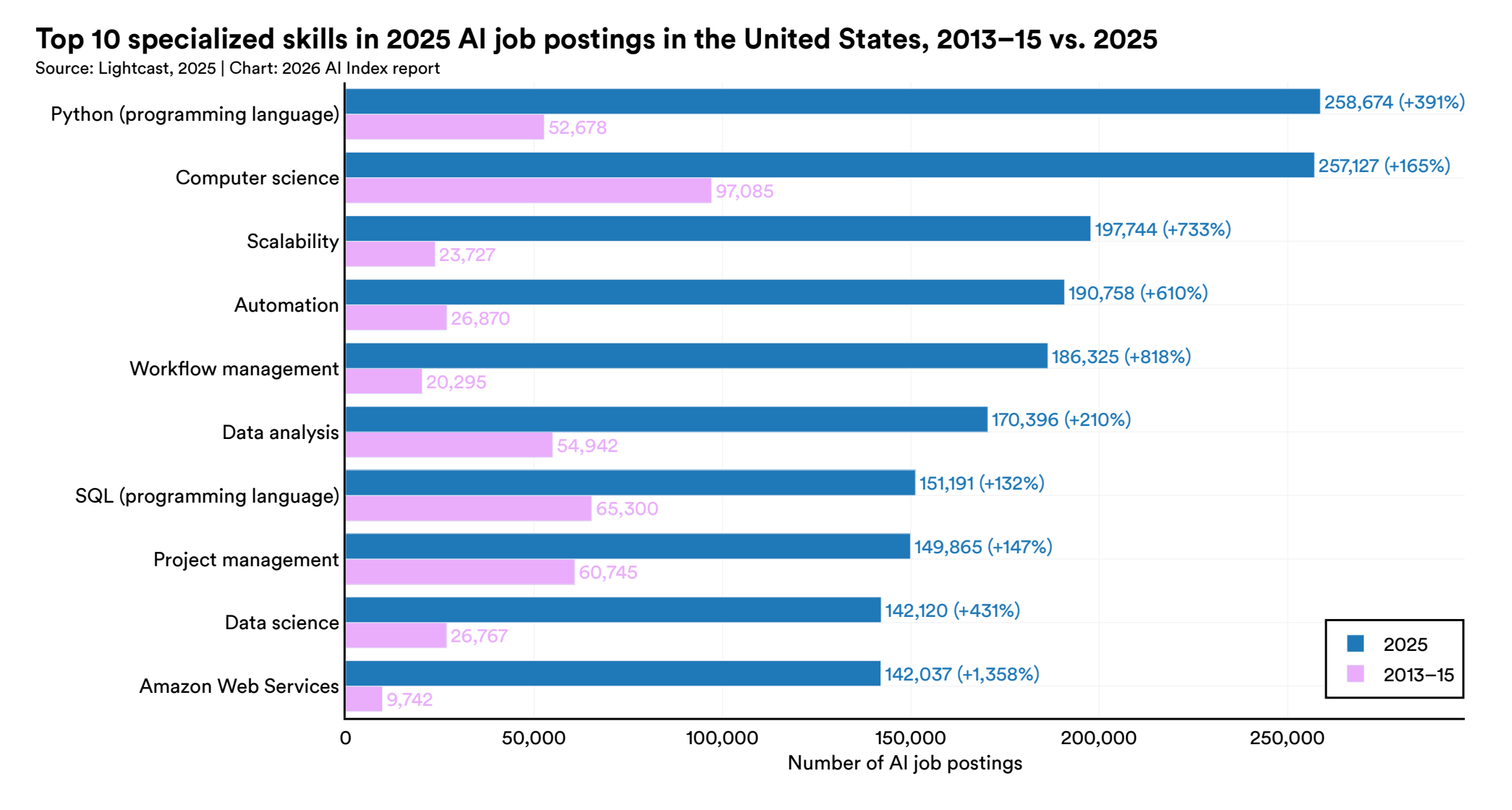

Lightcast also found that Python remained the most in-demand specialized skill in U.S. AI job postings, appearing in 258,674 postings in 2025, up nearly 30% from 2024.

That last detail is the part many candidates skip over. Instead of rewarding AI vocabulary in isolation, the market is prioritizing people who can use AI inside technical workflows that already matter.

In other words, hiring teams still want candidates who can write and debug SQL, reason through experimental design, and explain why an analysis is sound. AI just changes the environment in which those skills get tested.

That lines up with what IQ coaches have been seeing in recent sessions. In one product data science prep session this month, the focus was still Python, statistics, and SQL, with special attention on anti-joins, conditional logic, and explaining a solution before writing code. In another, a candidate preparing for an analytics-heavy role spent most of the session on p-values, multicollinearity, Power BI, and how to connect A/B test design to a business goal.
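For readers who have not practiced those two patterns recently, here is a minimal sketch of an anti-join combined with conditional logic. It uses Python's built-in `sqlite3` module so the SQL can be run as written. The `users` and `orders` tables, their columns, and the outreach labels are hypothetical, invented for illustration, and are not taken from any coaching session.

```python
import sqlite3


def users_without_orders(conn):
    """Anti-join: return users that have no matching row in orders.

    The LEFT JOIN keeps every user; filtering on o.user_id IS NULL keeps
    only the users for which no order matched. The CASE expression is the
    conditional-logic part.
    """
    query = """
        SELECT u.user_id,
               CASE WHEN u.plan = 'pro' THEN 'retention call'
                    ELSE 'email nudge'
               END AS outreach
        FROM users AS u
        LEFT JOIN orders AS o
               ON o.user_id = u.user_id
        WHERE o.user_id IS NULL
        ORDER BY u.user_id
    """
    return conn.execute(query).fetchall()


# Hypothetical sample data (assumption, for illustration only).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (user_id INTEGER PRIMARY KEY, plan TEXT);
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, user_id INTEGER);
    INSERT INTO users VALUES (1, 'free'), (2, 'pro'), (3, 'free'), (4, 'free');
    INSERT INTO orders VALUES (10, 1), (11, 1), (12, 3);
""")

rows = users_without_orders(conn)
print(rows)
```

Explaining the approach before writing it matters here: saying "keep every user, then keep only the rows where the join found nothing" shows the interviewer that the `IS NULL` filter is deliberate. A `NOT EXISTS` subquery is a common alternative.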

Recent Interview Loops Still Look Broad

The latest annotation-based interview experiences tell the same story. A recent data analyst loop at a large e-commerce company combined SQL, Python, statistics, behavioral rounds, and a final bar-raiser-style screen. Another candidate, interviewing for a senior product data science role at a fintech, faced SQL joins, product judgment, ROI framing, and experimentation in the same process. A senior scientist loop at a ride-sharing company went deep on A/B testing failure modes, not just textbook definitions.

Even the AI-native examples do not point to a shortcut. One recent candidate interviewing for a product data science role at a major AI lab reported that the take-home explicitly allowed ChatGPT’s data analysis tools. But the assignment still required experiment analysis, segmentation, and a clear slide-based narrative. The test was not “can the model do the work.” It was whether the candidate could guide the tool, interpret the output, and spot what was missing.

That is a useful distinction for candidates who assume AI-friendly interviews are inherently easier. In practice, they often ask for more judgment.
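To make the judgment point concrete, here is a minimal sketch of the kind of segmented experiment analysis described above. It assumes a pooled two-proportion z-test, which is one common choice and not necessarily what any of these interviews used. The segment names and conversion counts are hypothetical, invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided pooled z-test for a difference in conversion rates.

    Returns (absolute lift of B over A, z statistic, p-value).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, z, p_value


# Hypothetical counts per segment (assumption, for illustration only):
# (control conversions, control users, treatment conversions, treatment users)
segments = {
    "new users": (200, 2000, 260, 2000),
    "returning users": (300, 2000, 295, 2000),
}

results = {}
for name, counts in segments.items():
    lift, z, p_value = two_proportion_z_test(*counts)
    results[name] = (lift, z, p_value)
    print(f"{name}: lift={lift:+.4f}, z={z:.2f}, p={p_value:.4f}")
```

An AI tool can produce numbers like these quickly. The candidate still has to notice that the effect is concentrated in one segment, ask whether the segments were defined before the test ran, and account for the extra comparisons that slicing introduces.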

The Market Shift Is Toward Applied Work

The broader hiring data points in the same direction. CoderPad’s 2026 State of Tech Hiring report found that technical assessments are up 48% globally compared with mid-2023, while U.S. technical hiring activity is up 90%. The report also notes that teams are moving toward assessments that resemble real work, including debugging AI-generated output and explaining trade-offs.

CompTIA’s State of the Tech Workforce 2026 adds another layer of context. The group projects 1.9% growth in net tech employment this year and roughly 128,000 additional tech occupation jobs across industries. The opportunity is still there, but employers appear to be getting more specific about the work they want done.

That helps explain why data interviews are becoming broader instead of simpler. If companies are hiring for applied AI, analytics, experimentation, and operational decision-making at the same time, then the interview loop has to test more than one narrow skill. A candidate may need to move from SQL to metrics trade-offs to stakeholder communication in the span of a single round.

What This Means for Data Candidates in 2026

For job seekers, the practical takeaway is that AI fluency now works like spreadsheet fluency or dashboard fluency once did. It is expected, useful, and increasingly visible.

But the use of AI tools is rarely the whole job. The differentiator is still whether a candidate can turn messy information into a sound decision.

That means preparation should stay anchored to four areas: solid SQL and Python execution, comfort with experimentation and statistics, clear business storytelling, and the ability to use AI without outsourcing judgment to it. Candidates who skip the fundamentals in favor of AI buzzwords may sound current, but they are unlikely to come across as credible.

A better prep stack is less glamorous and more effective. Use AI tools to move faster, but keep practicing query logic, product sense, and verbal reasoning under pressure.

The Bottom Line

Stanford’s 2026 AI Index is a real signal that the labor market is absorbing AI skills fast. “Agentic AI” has clearly entered the hiring vocabulary, and companies are posting for work that assumes AI will be part of the job.

But recent data interviews suggest a more useful interpretation for candidates: AI is not replacing the fundamentals; it is stacking on top of them. The strongest candidates will be the ones who can use AI to accelerate analysis while still showing clean SQL, sound statistical thinking, and clear business judgment when the interview gets specific.