How to Answer A/B Testing Interview Questions: A 6-Step Guide

Introduction

A/B testing interview questions show up because companies want more than statistical vocabulary. They want to see whether you can connect a product change to a business decision, choose the right metric, spot experiment risks, and explain what you would do if the result is messy. In recent Interview Query coaching sessions, candidates who did well in Meta, Uber, and similar loops shared one habit: they answered in a clear sequence instead of jumping straight to p-values.

That is the approach you should copy. If you can move from product goal to hypothesis, metrics, randomization, and decision criteria without losing the thread, you will sound like someone who has actually run experiments, instead of someone simply reciting a textbook.

Why A/B Testing Interview Questions Feel Harder than They Should

The hard part is rarely the math alone. Interviewers use these questions to test product judgment, communication, and experimental rigor at the same time. One candidate in a recent coaching transcript passed a product analytics screen by flagging network effects before the interviewer asked. Another candidate in an Uber loop said the toughest follow-ups were not formulas. They were practical checks like what to verify in the results and what to do when the experiment appears to fail.

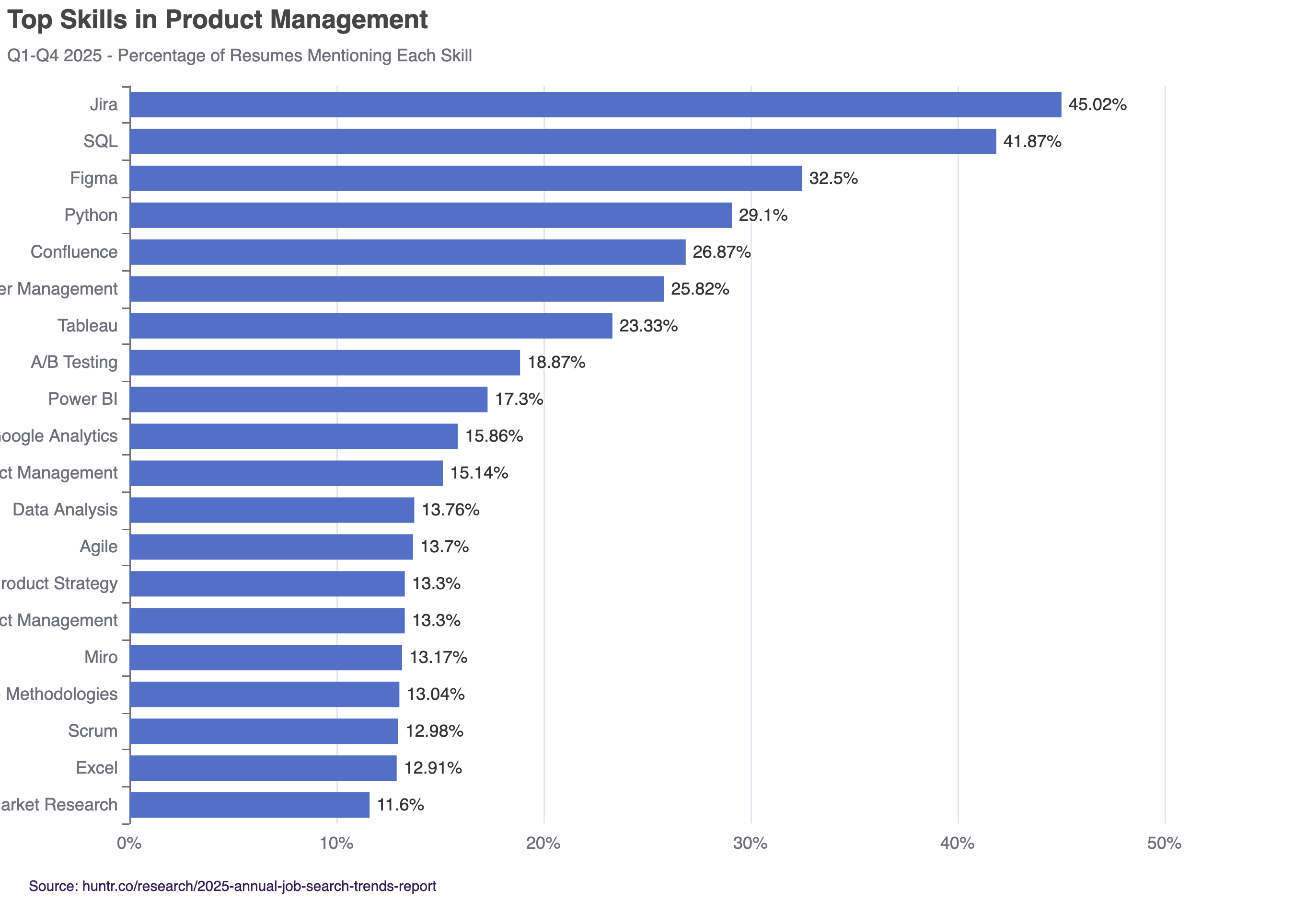

Source: Huntr’s 2025 Annual Job Search Trends Report

That matches the broader market too. Huntr’s 2025 Annual Job Search Trends Report found A/B testing in 18.87% of analytical skill mentions. That places it in the middle tier alongside other analytical and experimentation skills such as Google Analytics fluency, a sign of how much employers value measurement and iteration in business decisions.

That is why a loose answer usually falls apart. If you start with test statistics before you define the decision, your answer sounds backward. If you list metrics without explaining the user behavior behind them, your answer sounds shallow. And if you ignore spillover, novelty effects, or sample ratio mismatch, interviewers will assume you have only seen clean classroom examples.

If you want extra reps before your interview, practice with Interview Query’s Statistics and A/B Testing learning path so you can hear the same concepts in different phrasings.

Start with the Decision Behind the Experiment

Before you say anything about significance, clarify what product decision the company is trying to make. Is the team deciding whether to ship a feature, change ranking logic, increase ad load, or redesign onboarding? Your answer should begin with one sentence that frames the decision: what changed, who is affected, and what success should look like.

Then state the primary behavior you expect to move. For example:

- Messaging feature → message send rate or reply rate

- Marketplace feature → completed orders or successful deliveries

- Growth experiment → activation or retention

This keeps the rest of your answer grounded in user value instead of abstract statistics.

Use a 6-Step Framework When Answering

1. State the hypothesis. Say what you expect the treatment to change and why. Keep it directional and tied to user behavior.

2. Choose one primary metric and two or three guardrails. Your primary metric measures success. Guardrails protect against damage such as lower retention, slower load time, worse quality, or higher cancellation.

3. Pick the unit of randomization. User-level assignment is not always correct. If the feature has social interaction, shared inventory, or marketplace spillover, explain why you may need room-level, household-level, or region-level randomization.

4. Define the population and experiment window. Mention who should be included, who should be excluded, and how long you need to run the test to capture enough behavior.

5. Call out risks before the interviewer does. Common ones are sample ratio mismatch, novelty effects, logging bugs, seasonality, and contamination between treatment and control.

6. End with the decision rule. Explain what result would make you ship, hold, rerun, or investigate further. Interviewers want to hear how you would act, not just how you would calculate.
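If the interviewer asks how long the test needs to run, it helps to have the arithmetic behind the experiment window in your head. The sketch below is one common approximation, assuming a two-sided two-proportion z-test, an absolute (not relative) minimum lift, and a choice to round the duration up to whole weeks to cover weekly seasonality. The function names, the 10% baseline, and the traffic figure are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate, min_detectable_lift, alpha=0.05, power=0.80):
    """Approximate units needed per arm for a two-sided, two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate + min_detectable_lift  # absolute lift (assumption)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance_sum / (p2 - p1) ** 2)


def days_to_run(n_per_group, eligible_units_per_day, n_arms=2):
    """Days of traffic needed, rounded up to whole weeks (assumption)."""
    raw_days = math.ceil(n_per_group * n_arms / eligible_units_per_day)
    return math.ceil(raw_days / 7) * 7


# Hypothetical inputs: 10% baseline, +1 point lift, 5,000 eligible units per day.
n = sample_size_per_group(baseline_rate=0.10, min_detectable_lift=0.01)
print(n, days_to_run(n, eligible_units_per_day=5000))
```

With these made-up inputs the requirement comes out near 14,700 units per arm, which fits inside a single week of traffic. In an interview, the point is less the exact number than showing that baseline rate, minimum lift, and traffic together set the window.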

If you want to practice saying this framework out loud, use the AI Interviewer for a timed mock so you can tighten your structure under pressure.

Walkthrough: Product Example from Meta

Imagine the interviewer asks:

Meta wants to change the group video call layout to improve call completion. How would you design an A/B test?

A strong answer starts by clarifying the goal. If the goal is higher call completion without hurting call quality, your primary metric could be completed call rate per room or per call session. Your guardrails might include call latency, crash rate, invite acceptance rate, and time to join.

Next, explain that user-level randomization may be risky because group calls create network effects. A treated user can influence the experience of a control user inside the same call. In that case, you would discuss randomizing at the room level or another cluster that reduces contamination. Then say how you would validate experiment health before reading the outcome: check the traffic split for sample ratio mismatch, confirm that logging works, and compare pre-experiment covariates across groups.
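Two of these ideas are easy to make concrete. The sketch below shows one way to assign whole rooms to an arm with a deterministic hash, followed by a chi-square check for sample ratio mismatch. It is an illustration under assumptions, not a description of Meta's system: `assign_room`, `srm_p_value`, the experiment name, and the counts are all hypothetical.

```python
import hashlib
import math


def assign_room(room_id, experiment_name, treatment_share=0.5):
    """Bucket a whole room so every participant in it sees the same layout."""
    key = f"{experiment_name}:{room_id}".encode("utf-8")
    # First 8 hex digits of the hash, scaled to a number in [0, 1).
    bucket = int(hashlib.sha256(key).hexdigest()[:8], 16) / 0x100000000
    return "treatment" if bucket < treatment_share else "control"


def srm_p_value(n_control, n_treatment, expected_treatment_share=0.5):
    """Chi-square goodness-of-fit test (1 degree of freedom) on the traffic split."""
    total = n_control + n_treatment
    expected_t = total * expected_treatment_share
    expected_c = total - expected_t
    chi2 = ((n_treatment - expected_t) ** 2 / expected_t
            + (n_control - expected_c) ** 2 / expected_c)
    # With 1 degree of freedom, the chi-square tail probability is erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


print(assign_room("room-123", "call_layout_v2"))
print(srm_p_value(n_control=50_000, n_treatment=51_000))  # small p-value: investigate
```

Hashing the room ID rather than the user ID keeps everyone in a call in the same arm. A very small SRM p-value suggests the assignment or logging is broken, so the outcome metrics should not be read until the cause is found.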

Finally, close with the business decision. If call completion rises but latency worsens enough to hurt the user experience, you would not treat the result as a clean win. You would either hold the launch, investigate the quality regression, or rerun a refined version of the test. That closing step matters because it shows you understand experimentation as a decision system, not a scorecard.
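A decision rule like this can be written down before the test starts. The sketch below is one possible encoding, assuming each metric is summarized by a confidence interval on its relative change and that each guardrail has a pre-agreed tolerance. The class, the function names, the tolerances, and the readout values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MetricResult:
    name: str
    ci_low: float   # lower bound of the interval on relative change vs. control
    ci_high: float  # upper bound of the same interval
    higher_is_better: bool = True


def is_clear_win(m):
    """The whole interval sits on the good side of zero."""
    return m.ci_low > 0 if m.higher_is_better else m.ci_high < 0


def is_breached(m, tolerance):
    """The interval cannot rule out harm larger than the tolerated amount."""
    return m.ci_low < -tolerance if m.higher_is_better else m.ci_high > tolerance


def decide(primary, guardrails, tolerances):
    breached = [g.name for g in guardrails if is_breached(g, tolerances[g.name])]
    if breached:
        return "hold and investigate: " + ", ".join(breached)
    if is_clear_win(primary):
        return "ship"
    return "inconclusive: check power, then rerun or extend"


# Hypothetical readout for the call-layout test.
completion = MetricResult("completed_call_rate", ci_low=0.01, ci_high=0.05)
latency = MetricResult("call_latency", ci_low=0.04, ci_high=0.12, higher_is_better=False)
crashes = MetricResult("crash_rate", ci_low=-0.02, ci_high=0.01, higher_is_better=False)

decision = decide(completion, [latency, crashes],
                  {"call_latency": 0.05, "crash_rate": 0.03})
print(decision)  # hold and investigate: call_latency
```

Checking guardrails before the primary metric is a design choice here: a lift in call completion never overrides a latency regression that exceeds the agreed tolerance.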

How to Handle Messy Follow-Ups

Most interviewers will push past the happy path. Be ready for questions like:

- What if the treatment lifts the primary metric but hurts a guardrail?

- What if the result is not significant?

- What if power was too low?

- What if treatment and control users interact?

If you encounter these follow-ups, do not panic and start naming every possible statistical concept you know. Answer by diagnosing the experiment in order: data quality first, design issues second, interpretation third, next action last.

For example, if the result is flat, say you would first verify logging and sample ratio, then check whether the test was underpowered, then look at segments only if there is a sound product reason.
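The underpowered check can be shown with a few lines of arithmetic. The sketch below assumes a conversion-style metric analyzed with a pooled two-proportion z-test, and compares the observed lift with the smallest lift the test could reliably detect. The function names and counts are hypothetical, and the minimum detectable effect uses a simplified equal-variance approximation.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_c, n_c, conv_t, n_t):
    """Two-sided pooled z-test; returns (absolute lift, p-value)."""
    p_c, p_t = conv_c / n_c, conv_t / n_t
    pooled = (conv_c + conv_t) / (n_c + n_t)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_c + 1 / n_t))
    z = (p_t - p_c) / se
    return p_t - p_c, 2 * (1 - NormalDist().cdf(abs(z)))


def minimum_detectable_effect(baseline_rate, n_per_group, alpha=0.05, power=0.80):
    """Approximate smallest absolute lift detectable at the given power."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    se = math.sqrt(2 * baseline_rate * (1 - baseline_rate) / n_per_group)
    return (z_alpha + z_beta) * se


# Hypothetical flat result: 10.0% vs 10.4% conversion with 5,000 units per arm.
lift, p_value = two_proportion_z_test(conv_c=500, n_c=5000, conv_t=520, n_t=5000)
mde = minimum_detectable_effect(baseline_rate=0.10, n_per_group=5000)
print(f"lift={lift:.4f}, p={p_value:.2f}, mde={mde:.4f}")
```

In this made-up case the observed lift is well below the minimum detectable effect, so the honest reading is "the test could not have detected a lift this small," not "the feature does nothing."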

Similarly, if the result is positive but contamination is high, say you would question whether the estimate is trustworthy before recommending rollout. This kind of sequencing is what the recent coaching sessions showed interviewers rewarding.

If you want feedback on your follow-up handling, Interview Query Coaching is the fastest way to pressure-test your answer with someone who can push on weak spots.

FAQs

What are A/B testing interview questions?

A/B testing interview questions assess your ability to design experiments, choose the right metrics, analyze results, and make product decisions based on data.

How should I structure my answer to A/B testing interview questions?

Use a clear framework: frame the decision, then state a hypothesis, choose metrics, decide on randomization, define the population and test window, identify risks, and explain the decision rule.

What metrics should I use in an A/B test interview question?

Choose one primary metric that reflects success, along with 2–3 guardrail metrics to ensure the experiment does not harm other parts of the product.

What are common mistakes in A/B testing interview questions?

Common mistakes include jumping straight to statistical tests, ignoring product goals, failing to define metrics clearly, and overlooking risks like sample ratio mismatch or contamination.

How do I handle follow-up questions in A/B testing interviews?

Answer systematically: check data quality first, evaluate experiment design next, interpret results carefully, then recommend clear next steps.

Why are A/B testing interview questions important for data science roles?

They test whether you can connect analysis to real business decisions, showing that you can design reliable experiments and make actionable recommendations.

Conclusion

The best answers to A/B testing interview questions are structured, practical, and tied to a real product decision. You do not need to sound like a statistics professor. You need to show that you can define success, design a credible experiment, spot failure modes, and recommend what the team should do next.

That is why this framework works. It keeps you focused on the order interviewers care about: decision, hypothesis, metrics, randomization, risks, and action. Practice that sequence enough times, and even difficult follow-ups start to feel manageable.