AI Drove 25% of March Layoffs, But MIT Says Workers Still Have Time to Adapt

AI Layoffs Continue in 2026

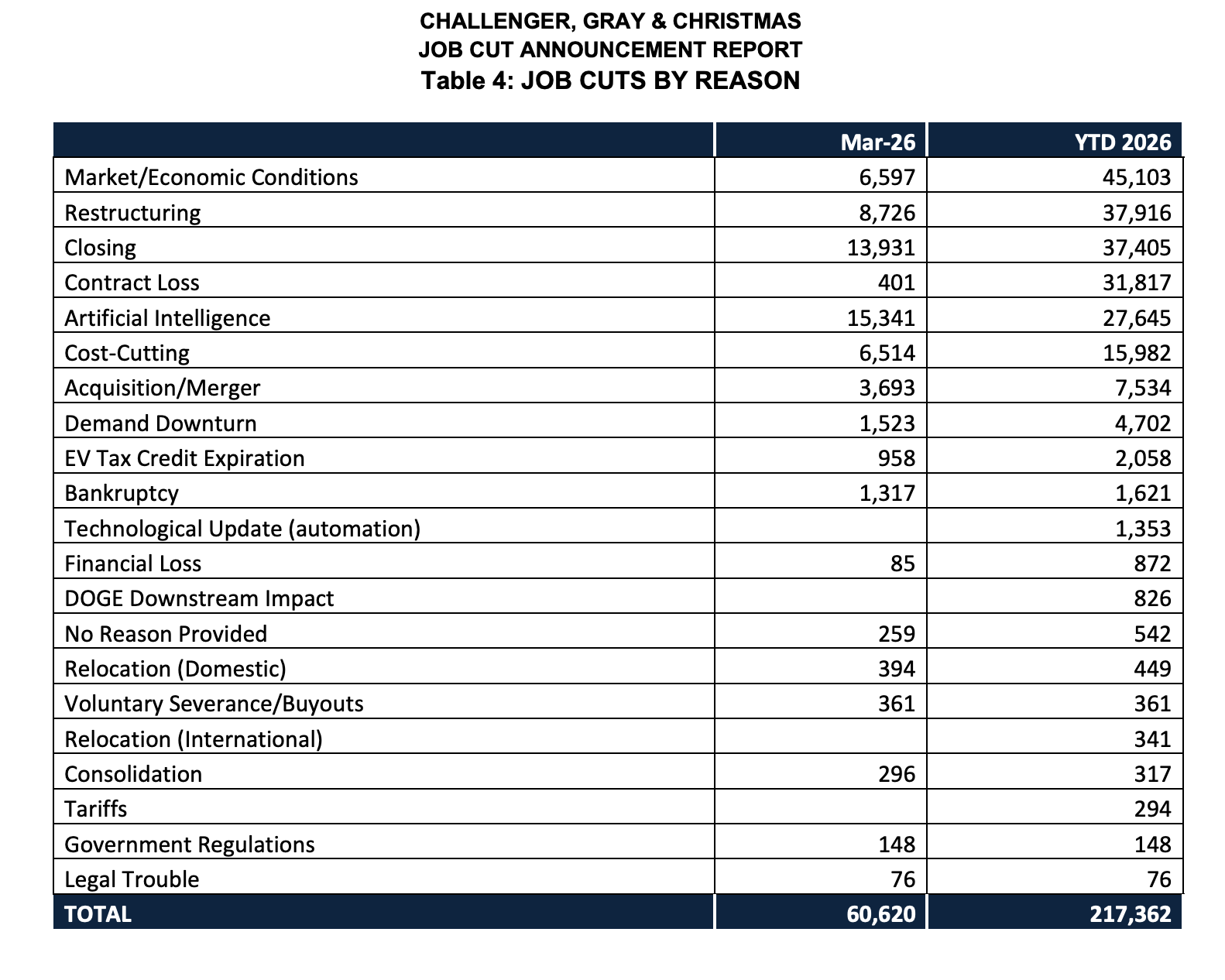

Tech’s worst Q1 for job cuts since 2023 just wrapped. According to outplacement firm Challenger, Gray & Christmas, US tech companies announced 52,050 job cuts in the first three months of 2026, up 40% from the same period last year. March alone accounted for 18,720 of those cuts. For the first time in the firm’s tracking history, AI ranked as the leading stated reason for workforce reductions, accounting for 25% of all job cuts across industries last month.

That’s the bad news. The less obvious piece came from MIT’s Computer Science and Artificial Intelligence Laboratory, which published new research on April 2nd arguing that AI’s workforce impact is more “rising tide” than “crashing wave.” Across 3,000 text-based work tasks drawn from the US Department of Labor’s Occupational Information Network, the study found AI capabilities are improving gradually rather than in sudden surges, and that most tasks studied could reach 80-95% AI success rates by 2029.

The gap between those two data points is where data scientists currently live. Companies are already cutting based on AI investment logic. But the academic evidence suggests a several-year window, not an overnight cliff. Understanding what that means practically shapes how you prep and how you compete.

What the Challenger Data Actually Shows

The 52,050 figure comes with a pointed comment from Challenger: in technology specifically, “AI can replace coding functions,” and that’s already showing up in headcount decisions. This isn’t the usual layoff language about operational efficiency or macroeconomic headwinds. Companies are explicitly attributing cuts to AI substitution.

Atlassian cut 10% of its staff in March to restructure around AI. Block laid off 4,000 employees in February, roughly 40% of its workforce. Oracle is trimming thousands to fund a $56 billion AI data center buildout. Meta cut hundreds across Reality Labs. The pattern is consistent: companies are reducing legacy headcount while funding AI infrastructure. The roles disappearing skew toward support, operations, and generalist technical functions that AI tooling now covers at acceptable quality.

The MIT Research Is More Nuanced Than the Headlines

MIT’s CSAIL tested AI models on thousands of real job tasks and found that capability gains are gradual, not sudden. The “crashing wave” scenario, where AI achieves a sudden competency jump that disrupts entire job categories at once, is the exception. Most of what AI is doing in the workforce looks more like incremental creep than vertical displacement.

The caveat is real. The study found most text-based tasks could hit 80-95% AI success rates by 2029, at what researchers call “minimally sufficient” quality. Minimally sufficient means good enough to get the job done, not good enough to impress. Workers have a window measured in years rather than quarters, and that window matters for how aggressively you need to reposition right now versus in the medium term.

The Workforce Shift That’s Actually Happening

Companies are not simply automating and leaving roles empty. A survey by staffing firm Robert Half found that 29% of 2,000 hiring managers said they’ve already reopened positions they had eliminated after implementing AI. More than half (55%) plan to increase contract or temporary workers in the first half of 2026, according to Business Insider.

The practical translation: a data scientist role that existed in 2024 may return in 2026 as a contract engagement, scoped more narrowly around AI-adjacent work, at a lower total cost to the company. In other words, the skills remain valuable, but the structure around them is changing. Senior DS candidates with strong product instincts are landing at a higher rate than mid-level generalists right now, and companies with open reqs are moving fast when they find the right person.

What This Means for Data Scientists Interviewing Right Now

Candidates navigating DS interview loops in this environment are finding processes more compressed. Timelines move faster, offer windows are shorter, and passing a technical screen no longer guarantees a team match, especially at companies running simultaneous hiring freezes and open reqs across different functions.

A few signals stand out across recent interview patterns. Causal inference is differentiating at marketplace companies, where establishing that a metric shift is real and not noise has become a baseline expectation. Product analytics fluency, specifically diagnosing metric drops and constructing root-cause hypotheses, carries more weight than raw model-building ability. Companies continue to move on when candidates delay timelines, even when technical performance was strong in earlier rounds.
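To make the "real and not noise" check concrete, here is a minimal sketch of a two-sided permutation test on a metric before and after a change. It is one illustrative approach, not a method the companies above are known to use, and it only addresses whether a shift exceeds random variation; it does not by itself establish what caused the shift. The function name `permutation_p_value` and the sample numbers are hypothetical, invented for illustration.

```python
import random
from statistics import mean


def permutation_p_value(before, after, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in means.

    Repeatedly shuffles the pooled observations and counts how often a
    random split produces a gap at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(mean(after) - mean(before))
    pooled = list(before) + list(after)
    n_before = len(before)

    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[n_before:]) - mean(pooled[:n_before]))
        if diff >= observed:
            extreme += 1

    # Add-one correction keeps the estimate away from exactly zero.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical daily conversion rates (%), invented purely for illustration.
before = [4.1, 3.9, 4.3, 4.0, 4.2, 4.1, 3.8]
after = [3.2, 3.4, 3.1, 3.5, 3.3, 3.0, 3.4]

p_value = permutation_p_value(before, after)
print(f"p-value: {p_value:.4f}")
```

A small p-value suggests the drop is unlikely to be random fluctuation alone. In an interview setting, the follow-up is usually the harder part: ruling out seasonality, logging changes, or a shift in traffic mix before attributing the drop to a specific cause.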

The competitive bar hasn’t lowered because companies are investing in AI. The roles still on offer are the ones where judgment matters most. This aligns with Anthropic’s study on AI exposure, which notes that the jobs most exposed to AI involve digital information processing. That exposure doesn’t mean those roles disappear completely. Rather, skills like judgment, oversight, and business context become more valuable.

The Bottom Line

Q1 2026’s layoff numbers are real, and the AI attribution is real. Companies are restructuring toward AI investment, and that is already costing jobs. What MIT’s research adds is a timeline. The displacement is happening gradually enough that candidates doing the work right now, building the analytical and product judgment that AI tools don’t yet replicate, will be the ones interviewing for the strongest roles when hiring opens back up.

The data scientists who land those roles will not necessarily be the ones with the deepest ML model portfolios. They’ll be the ones who reason clearly in ambiguous product scenarios, can defend a causal claim under pressure, and demonstrate structured thinking that AI workflows still struggle to produce consistently.

That’s still something you can build toward, and it’s where one-on-one coaching tends to make the clearest difference, particularly for candidates preparing for senior DS and ML roles that are still being filled even as broader headcount contracts.
