Data Science Skills Test and Assessment

Overview

Ever feel like you aced a job interview but ended up not getting the job? Or how about this: You completely bombed a technical screen and still passed on to the next round?

You’re not alone. Hiring standards are confusing at best, and for candidates, these confusing standards raise the question: How well am I performing in data science interviews in comparison to my peers?

A data science skills test offers insight into your performance. For candidates, a well-calibrated skills test provides a score that shows where they stand compared to other data science professionals (similar to SAT percentiles). For hiring managers, data science assessments can be used to narrow the applicant pool.

In short, assessments for data science job seekers are a powerful hiring tool. Below, we’ve answered the most common questions about skills tests for data scientists.

What Is a Data Science Skills Assessment?

A data science skills test is an online test that’s used to measure a job applicant’s or data scientist’s knowledge of machine learning, statistics, Python, product sense, and SQL.

Compare your skills against other data scientists with Interview Query Challenges.

These assessments typically last 20-30 minutes, and they are used to determine a candidate’s skill level, whether the candidate is qualified for the role, and how their domain knowledge compares to other candidates.

You can visualize how a data science assessment works with this example:

Bob is a product manager at Company X and is hiring engineers using an external recruiting agency.

Bob has a problem though: Just 10% of the engineering candidates that the recruiting agency sends over pass the technical interview.

In other words, the recruiting agency is not calibrated to Bob’s hiring standards. The agency doesn’t understand what signals Company X is looking for in their candidates. And further, it is likely the agency’s initial phone screens aren’t challenging enough and that they don’t have a firm grasp of what tangible and intangible skills will make a candidate the right fit for the role.

A data science skills assessment calibrated for the role will help narrow the candidate pool and ensure that each candidate has the skills required for the job.

Using Skills Tests for Better Data Science Hiring

Every day, technical questions in data science interviews are used to assess whether a candidate will be a productive worker or not.

However, the hiring process for data science jobs is not always 100% accurate.

Even if our example recruiting agency only sent over A+ candidates who passed every interview, Company X’s process could still be flawed. The interview questions could be improperly calibrated for the role, e.g. too easy or off-base, which would result in the wrong hires for open roles.

Calibrated data science skills assessments help solve this problem by adding a layer of vetting to the interview process. Using carefully crafted interview skills tests, companies can narrow the candidate pool so that only the most qualified candidates make it to the technical interview stage.

Why You Should Take a Data Science Skills Test

Knowing where your skills stand against your peers is a powerful data science interview prep tool. If the test is well-calibrated, your score tells you your skill level. This ranking can help you to:

- Apply to the right jobs

- Determine what skills need work

- Determine your value to companies

For example, if you scored high on an intermediate data science skills test, you’re likely ready for mid-level data science roles. Yet, if you were to score poorly, you might focus on your technical development or look for more junior-level roles.

How Data Science Tests Are Calibrated

However, for the results to be accurate, the assessment must be calibrated correctly.

If a test isn’t sufficiently calibrated, the scores stop being informative. On a test that is too easy, the mean score might be around 90%, and nearly everyone taking it would look like a “rockstar” data scientist who ranks in the top 10%. Similarly, a skills test that is too hard would produce the opposite result: hardly anyone taking the test would appear “qualified.”
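As a rough illustration of that idea, the sketch below flags a test as too easy or too hard based on the mean fraction of questions answered correctly. The function name `calibration_summary`, the 0.3 and 0.7 cutoffs, and the sample scores are all assumptions made for this example. They are not Interview Query’s actual method or data.

```python
from statistics import mean


def calibration_summary(scores, max_score, low=0.3, high=0.7):
    """Return (mean fraction correct, verdict) for a list of raw scores.

    `low` and `high` are illustrative cutoffs, not published thresholds.
    """
    mean_fraction = mean(scores) / max_score
    if mean_fraction > high:
        verdict = "too easy"
    elif mean_fraction < low:
        verdict = "too hard"
    else:
        verdict = "reasonable"
    return mean_fraction, verdict


# Hypothetical results on a 10-question test where almost everyone scores high.
easy_test = [9, 10, 9, 8, 10, 9, 9, 8, 10, 9]
fraction, verdict = calibration_summary(easy_test, max_score=10)
print(f"mean fraction correct: {fraction:.2f} ({verdict})")
```

A mean near the middle of the range leaves room to tell strong and weak test takers apart. A fuller check would also look at the spread and shape of the scores, not just the mean.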

Calibrating a skills test separates the signal from the noise, but it’s difficult to get right. Interview Query has experimented with calibrating skills assessments in the past and applied our research to our Challenges, a series of skills tests for data scientists.

Calibrating Skills Assessments at Interview Query

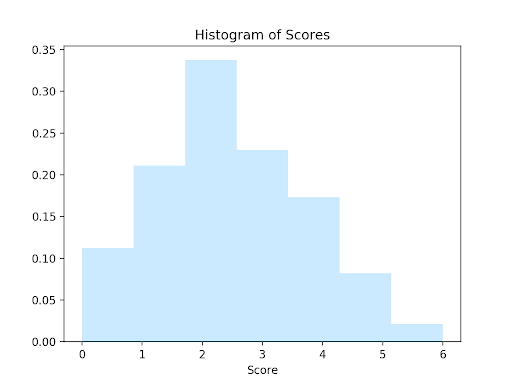

We launched our first multiple-choice data science tests in 2020, which more than 600 data scientists took. One thing we found after launching that first skills test was that the scores were pretty close to a normal distribution, an indicator of decent calibration.

Here’s what the distribution looked like from our initial skills tests:

However, from that first quiz, we did find a few areas that we could improve.

For one, we felt the quiz could be longer. With only six questions, most people got just two answers correct, and the mean score was 2.35. If you answered four correctly, you were already in the top 20%, and if you answered all six correctly, you were in the top 2%.
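To make the “top 20%” style of statement concrete, here is a minimal sketch of how such a figure can be computed from a table of score counts. The helper `top_share` and the counts in `hypothetical_counts` are made up for illustration. They are not the actual results from the quiz described above.

```python
def top_share(score_counts, score):
    """Fraction of test takers who scored `score` or higher."""
    total = sum(score_counts.values())
    at_or_above = sum(n for s, n in score_counts.items() if s >= score)
    return at_or_above / total


# Made-up counts for a six-question quiz: {number correct: number of test takers}.
hypothetical_counts = {0: 1, 1: 4, 2: 6, 3: 5, 4: 2, 5: 1, 6: 1}

for score in (4, 6):
    share = top_share(hypothetical_counts, score)
    print(f"{score} correct -> top {share:.0%}")
```

With so few questions, each additional correct answer moves a test taker across a large share of the population, which is one reason a longer quiz gives finer-grained rankings.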

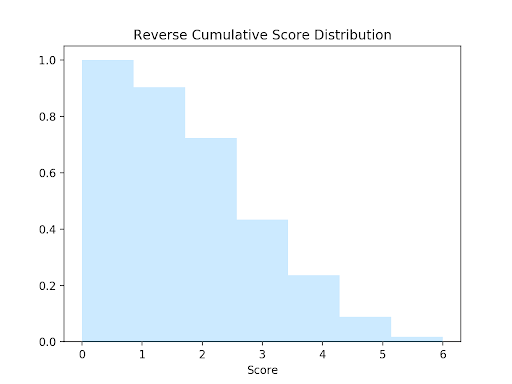

We felt that the sample size would need to be larger to further fine-tune the calibration. Here’s the distribution of cumulative scores:

Ultimately, running that first 6-question data science quiz taught us a few things. It gave us an idea of how calibrated the questions were. The data showed that the questions were decently calibrated but that we’d probably need a larger sample size.

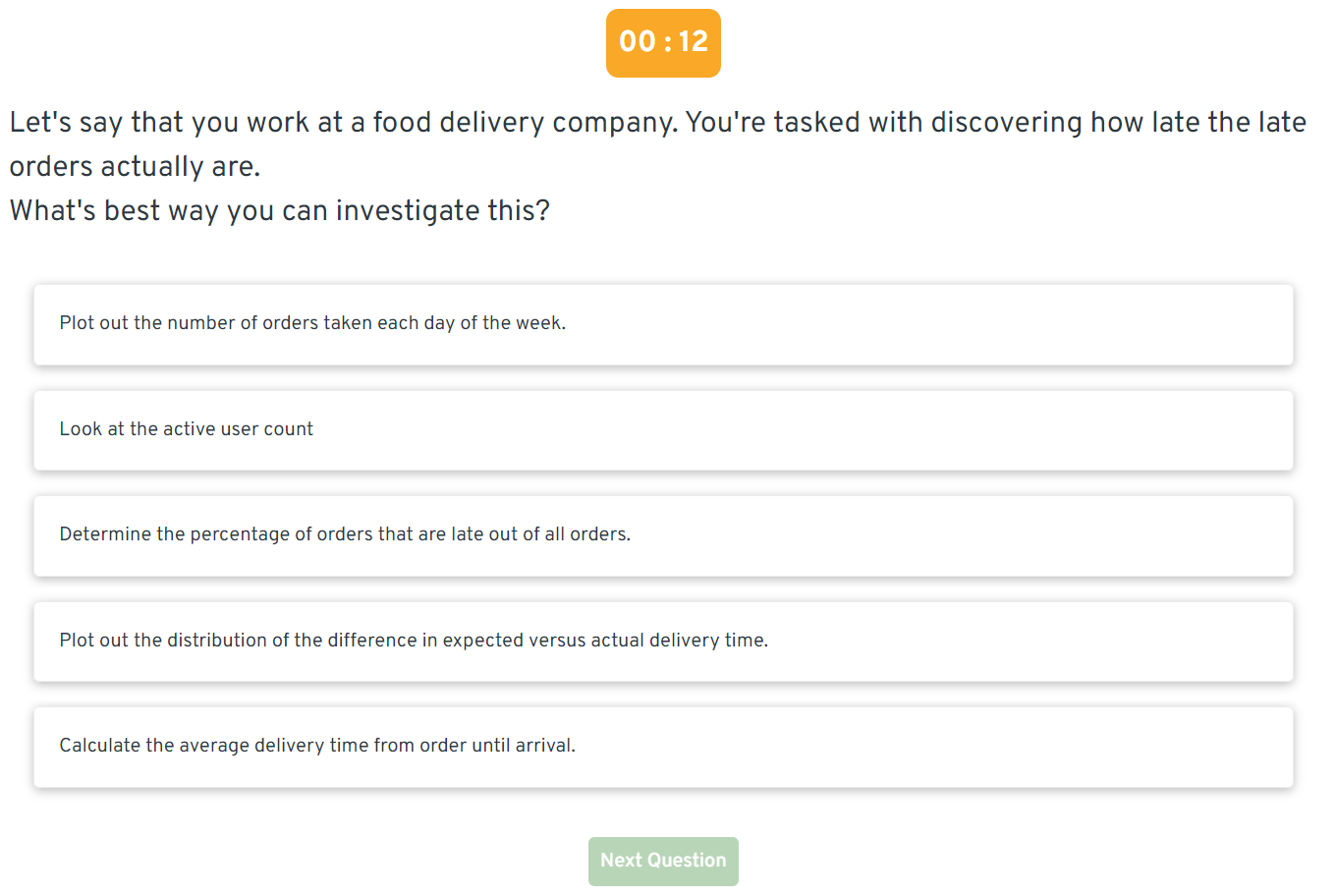

The thing is, multiple-choice questions for data scientists are difficult to write. You have to condense broad concepts and skills into short answers. One way we’ve learned to do that is by testing multiple concepts in a single question. A SQL question from that initial quiz required the test-taker to know how a LEFT JOIN worked, but also what the distribution of the query results should look like.
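The sketch below shows the kind of behavior such a question relies on, using Python’s built-in `sqlite3` module. The `users` and `orders` tables and their rows are hypothetical and are not taken from the original quiz question.

```python
import sqlite3

# In-memory database with two small, hypothetical tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, amount REAL);
    INSERT INTO users VALUES (1, 'ana'), (2, 'ben'), (3, 'cy');
    INSERT INTO orders VALUES (10, 1, 5.0), (11, 1, 7.5), (12, 2, 3.0);
""")

# LEFT JOIN keeps every row from users, matched or not.
rows = conn.execute("""
    SELECT u.name, o.amount
    FROM users AS u
    LEFT JOIN orders AS o ON o.user_id = u.id
    ORDER BY u.id, o.id
""").fetchall()

for row in rows:
    print(row)
```

Three users produce four result rows here: the user with two orders appears twice, and the user with no orders still appears once with a NULL amount (`None` in Python). Anticipating that row count and where the NULLs land is the “distribution of the query results” part of the skill.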

With Challenges, we’ve continued to refine and calibrate our questions to ensure they’re both challenging and provide a strong assessment of a test taker’s data science intuition.

How to Take a Data Science Skills Test

Currently, Interview Query offers six different skills tests for a variety of data science roles, including machine learning and data engineering assessments. Questions range in difficulty from easy to hard, and each test requires about 20-30 minutes to complete.

Here’s an example of a question asked in our data science assessment:

Benchmark Your Skills with Challenges on Interview Query

Currently, we offer six Challenge assessments for data scientists:

- Data Science Challenge - A general data science assessment that covers skills like A/B testing, product sense, probability/statistics, Python, and machine learning.

- Data Engineering Challenge - A foundational assessment that tests your understanding of SQL, database design and architecture, and data modeling fundamentals.

- Facebook Data Science Challenge - A customized assessment designed specifically for data scientists at Facebook.

- Machine Learning Challenge - A medium-difficulty test focused on machine learning concepts and applications.

- Product and Business Case Study Challenge - A test designed to assess your ability to pull metrics for product and business case questions, as well as your intuition around product/business questions.

- Data Analytics Challenge - A test calibrated for data analysts that covers statistics, analytics, and data visualization.

This is a members-only feature. Join Interview Query today to take a Challenge and benchmark your skills against other data scientists on the platform!