Introducing Performance Scores on Interview Query

Overview

Last month, our product development team rolled out a new feature called the “performance score.”

Performance scores are meant to rank your skills in comparison to all other data scientists on Interview Query.

How to Get Your Performance Score

To get ranked, all you need to do is complete one multiple-choice data science assessment. These quizzes take about 25 minutes to complete, and test data science skills like data analytics and machine learning. Here’s an in-depth look at the data science skills tests in challenges.

At the end of the test, we’ll give you a ranking based on your score on the quiz.

Why We’re Introducing Data Science Performance Scores

Here’s a common issue we hear from people preparing for interviews: They have no reliable way to benchmark their skills and understand how they stack up against the competition.

Are they ready to apply for a data science job? Will they be a competitive candidate?

Employers already benchmark candidates. They narrow down the candidate pool through technical screens and recruiter screens, which give them insights into candidates’ skills. But for candidates, it’s difficult to understand how they compare.

Performance scores give candidates that insight.

There are already companies doing similar assessments. Companies like HackerRank and CodeSignal provide standardized testing to figure out which data scientists “perform” the best. Employers use these companies to weed out candidates.

But the problem is, these assessments are black boxes for the test takers.

After taking a test, you never get any explanation of how well you performed, and if you got rejected, you never get any feedback on why.

In other words, you don’t know if you just fell slightly behind the competition, or if you completely bombed. When users can see their performance score and the questions they got right and wrong, they can begin to benchmark their skills and really narrow their studies.

Building Challenges on Interview Query

Building standardized tests for data scientists isn’t easy. But at Interview Query, we think we’re on the right track with Challenges.

We’ve designed six Challenges assessments, including:

- Data science

- Data analytics

- Data engineering

- Machine learning

- Product/business case

- Facebook data science

Each Challenge assessment is centered around a specific subject and is weighted toward different question topics. For example, our data science challenge is weighted toward A/B testing, analytics, and metrics-based questions, while our machine learning challenge is weighted toward modeling, statistics, and ML concepts.

Additionally, each challenge is generated by giving the user a list of questions they haven’t seen yet. This way, after you complete a challenge, you can get an accurate assessment of your performance, and you can see which questions you got right or wrong and study up on the corresponding interview questions related to them.

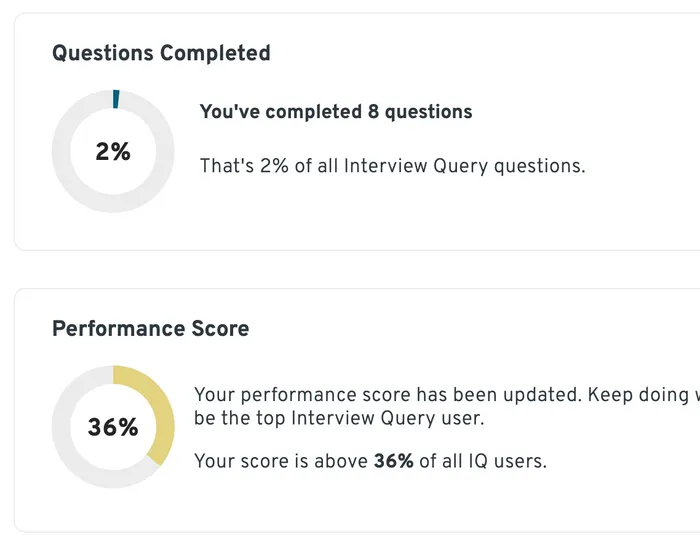

As you work on more challenges, our algorithm will change your “performance score” to show how well you’re scoring compared to other members.

Calculating Performance Scores

We’ve been refining how we calculate performance since 2020, when we launched our first six-question assessment on Typeform.

That started with expanding the questions, as well as the number of assessments available. One problem we’ve had to overcome: Comparing users that have completed a different number of questions.

For example, let’s say that we want to compare two people:

- Jerry has answered 10 questions out of 12 quiz questions correctly.

- Emily has answered 30 questions out of 40 questions correctly.

Which person should rank higher?

In this case, we’d like to make effort (number of questions attempted) independent of skill (percentage of questions you got right), but we also have to factor in if the user has IQ Premium, which would give them access to more interview questions. This is an effect we knew we had to try and normalize for.

However, a straightforward percentage, based on the number of questions answered correctly, also does not work. What if Jerry’s 12 questions are significantly easier than Emily’s 40? That will also bias their comparison.

Ultimately, this is how we decided to formulate the score:

Performance score = (C / QA) * D

- C = Number of questions answered correctly

- QA = Number of questions attempted

- D = 1 - average success rate of all questions attempted (so harder questions produce a larger D)

For example, Jerry answered 10 out of 12 questions correctly. Across all users, the average success rate on those 12 questions was 75%. He would then have a score of:

(10 / 12) * (1 - 0.75) ≈ 0.21

Emily answered 30 out of 40 questions correctly. Her questions were harder, with an average success rate of 50%. She would score:

(30 / 40) * (1 - 0.50) = 0.375

Emily would rank higher. The resulting raw scores are then normalized against everyone else’s performance scores.
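The arithmetic above can be reproduced with a short Python sketch. This is only an illustration of the formula as stated in this post, not our production code; the function name `performance_score` and its parameter names are made up for the example.

```python
def performance_score(correct: int, attempted: int, avg_success_rate: float) -> float:
    """Raw performance score: (C / QA) * D, where D = 1 - average success rate.

    Hypothetical helper for illustration; names are not from the real system.
    """
    if attempted <= 0:
        raise ValueError("attempted must be a positive number of questions")
    if not 0.0 <= avg_success_rate <= 1.0:
        raise ValueError("avg_success_rate must be between 0 and 1")

    accuracy = correct / attempted        # C / QA
    difficulty = 1.0 - avg_success_rate   # D: larger when questions are harder
    return accuracy * difficulty


# The two members from the example above.
jerry = performance_score(correct=10, attempted=12, avg_success_rate=0.75)
emily = performance_score(correct=30, attempted=40, avg_success_rate=0.50)

print(f"Jerry: {jerry:.3f}")
print(f"Emily: {emily:.3f}")
```

Jerry has the higher accuracy (about 83% versus 75%), but Emily’s harder questions give her the larger difficulty weight, which is why her raw score comes out ahead.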

How to Use Performance Scores

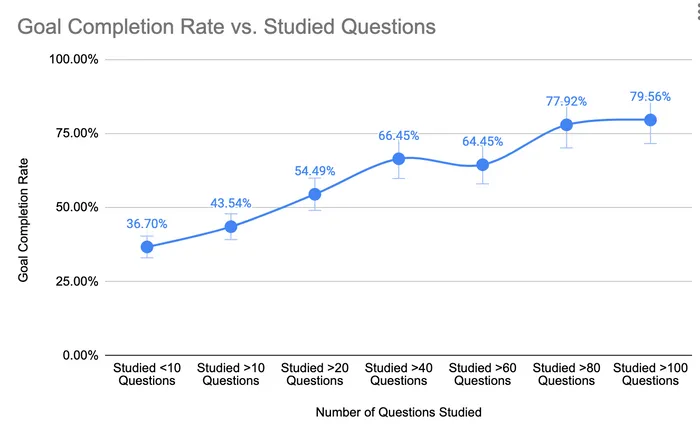

At Interview Query, we’ve long understood that there’s quite a balance between skill and effort in terms of success in the interview process. And while correlations between skills and success are difficult to measure, it’s pretty clear that the correlation between effort and success is much more well defined.

We’ve seen that the more questions you practice, the more likely you are to land a job and achieve your goal.

We hear a similar set of questions from Interview Query members who are prepping for interviews:

- Where am I compared to my peers in data science skill?

- What skills do I need to become a true data scientist/engineer?

- How do I figure out if I’m ready to pass the interview?

The Challenges assessments and performance score provide answers to these questions. With Challenges, you get practice (all of these questions are based on interview questions that you’re likely to hear). But you also can quickly benchmark your skills.

Performance score will help you:

- Understand what skills need practice and where you excel

- Establish a baseline so you can benchmark progress over time

- Determine how well you stack up against IQ members

This is all useful information for data-driven interview prep, and performance scores on Interview Query will continue to get better and offer more insights.

What Comes Next for Performance Scores

Our goal is to eventually segment this data to show how you stack up against different user groups.

If you’re just getting into data science, we’ll compare your score against other people just getting into data science. If you’re experienced and more focused on machine learning, we’ll try to group you with other people who have a similar background.

And lastly, we’re planning to show how this metric changes over time. Because this is a percentile score, your score will shift up or down as more people try challenges, depending on how other members perform.
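To see why a percentile score moves even when your own raw score stays the same, here is a minimal Python sketch. It assumes the percentile is simply the share of members whose raw score falls below yours; the exact normalization isn’t spelled out in this post, and the function name and sample scores are invented for illustration.

```python
def percentile_rank(score: float, all_scores: list[float]) -> float:
    """Percentage of scores in all_scores that are strictly below `score`.

    Assumed definition for illustration only; the real normalization may differ.
    """
    if not all_scores:
        raise ValueError("all_scores must not be empty")
    below = sum(1 for s in all_scores if s < score)
    return 100.0 * below / len(all_scores)


# Made-up raw scores for four members, one of whom scored 0.375.
scores = [0.10, 0.21, 0.375, 0.50]
before = percentile_rank(0.375, scores)

# A new member joins and scores lower, so the 0.375 member's percentile rises.
after = percentile_rank(0.375, scores + [0.05])

print(before, after)
```

Under this assumed definition, a new member scoring below you pushes your percentile up, and a new member scoring above you pushes it down.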

Get Your Performance Score Now

Take a Challenge assessment today and get your performance score. You’ll find assessments on a range of subjects, including:

- Data Science

- Machine Learning

- Data Analytics

- Data Engineering

- Facebook Data Science

- Product/Business Case

Complete just one challenge to get your score. Or take a look at how we built the data science skills tests in Challenges.