63 Machine Learning Interview Questions (Updated for 2024)

Overview

Most machine learning interview questions assess (a) your past experience working with machine learning and (b) your capacity to memorize concepts and apply them towards a solution.

Many machine learning questions in interviews are therefore definition-based, theoretical questions. These questions test your ability to apply ML theory towards a business goal, with the most common machine learning interview question categories being:

- Algorithms and Theory - These questions assess your working knowledge of algorithm fundamentals. Often, they are posed as comparison questions.

- Machine Learning Case Studies - Machine learning case studies ask you to guide the interviewer through building a model and explain the tradeoffs you can make along the way.

- Applied Modeling Questions - Applied modeling questions take machine learning concepts and ask how they could be applied to fix a certain problem.

- Machine Learning System Design - System design questions look at the design and architecture of recommendation systems, machine learning models, and concepts for scaling these systems.

- Recommendation and Search Engines - These questions are often posed like case studies, but they are specific to recommendations and search engines.

- Algorithmic Coding Questions - These questions ask you to code machine learning algorithms from scratch without the use of Python packages.

Machine Learning Algorithms Interview Questions

Machine learning algorithms questions test your conceptual knowledge of machine learning. These questions are asked in one of three ways:

- Comparing differences in algorithms.

- Identifying similarities between algorithms.

- Defining algorithm terms.

1. Which model would be better to predict booking prices on Airbnb: linear regression or random forest regression?

Random forest regression is based on bagging, an ensemble learning technique. Here are the strengths of each model:

- Random forests perform well with categorical predictors, can handle missing values and high cardinality well, and are relatively robust to outliers.

- Linear regression, on the other hand, is the standard regression technique, which models a linear relationship between the predictors and the target, e.g., y = Ax + B. It is simple to fit and easy to interpret.

Ultimately, the best model for Airbnb comes down to the data distribution. Linear regression could work if you were predicting prices for a single geographic location. However, with a more complex dataset, one that could hold one-bedrooms in Seattle as well as mansions in Croatia, random forest regression has the advantage of capturing non-linear relationships and interactions between features.

2. What is bias in a model?

Bias is the amount by which our predictions are systematically off from the target. It can be thought of as a measure of how “inflexible” the model is.

3. What is variance in a model?

Variance is the measure of how much the prediction would vary if the model was trained on a different dataset drawn from the same population. It can also be thought of as the “flexibility” of the model.
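To make the two definitions concrete, here is a small simulation sketch using NumPy. The sine-shaped "true" relationship, the noise level, and the two polynomial degrees are arbitrary assumptions for illustration. The sketch refits the same model on many datasets drawn from one population, then measures how far the average prediction is from the truth (bias) and how much the predictions spread across datasets (variance).

```python
import numpy as np

def bias_variance(degree, n_datasets=200, n_points=20, noise=0.3, seed=0):
    """Estimate squared bias and variance of a polynomial regression of the
    given degree by refitting it on many datasets drawn from one population."""
    rng = np.random.default_rng(seed)
    x = np.linspace(0, 1, n_points)
    f_true = np.sin(2 * np.pi * x)                    # assumed "true" relationship
    preds = np.empty((n_datasets, n_points))
    for i in range(n_datasets):
        y = f_true + rng.normal(0, noise, n_points)   # a fresh noisy sample
        preds[i] = np.polyval(np.polyfit(x, y, degree), x)
    mean_pred = preds.mean(axis=0)
    bias_sq = np.mean((mean_pred - f_true) ** 2)      # systematic error of the average fit
    variance = np.mean(preds.var(axis=0))             # spread of fits across datasets
    return float(bias_sq), float(variance)

for degree in (1, 9):
    b, v = bias_variance(degree)
    print(f"degree={degree}: bias^2={b:.3f}, variance={v:.3f}")
```

With these settings, the straight line (degree 1) shows the larger bias and the degree-9 polynomial the larger variance, matching the "inflexible" versus "flexible" intuition.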

4. What is regularization?

Regularization is the act of modifying our objective function by adding a penalty term to reduce overfitting.
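As a generic sketch (the symbols J, λ, and R are our own notation and are not defined elsewhere in this article), a regularized objective adds a weighted penalty to the original cost:

```latex
J_{\text{reg}}(\beta) = J(\beta) + \lambda \, R(\beta), \qquad \lambda \ge 0,
\qquad R(\beta) = \lVert \beta \rVert_1 \ \text{(Lasso)} \quad \text{or} \quad R(\beta) = \lVert \beta \rVert_2^2 \ \text{(Ridge)}
```

Setting λ = 0 recovers the unregularized model; larger values of λ push the coefficients toward zero, trading a slightly worse fit on the training data for lower variance.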

5. What is gradient descent?

Gradient descent is a method of minimizing the cost function. The form of the cost function will depend on the type of supervised model.

When optimizing our cost function, we compute the gradient, which points in the direction of steepest ascent. To find the minimum, we repeatedly update our coefficients β by taking a step in the opposite direction of the gradient, scaled by a learning rate α: β ← β − α∇J(β).
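A minimal sketch of the update rule, assuming a linear regression model with a mean squared error cost. The learning rate, iteration count, and synthetic data are arbitrary choices, and NumPy's least-squares solver is used only as a sanity check on the result.

```python
import numpy as np

def gradient_descent(X, y, lr=0.1, n_iters=1000):
    """Minimize the MSE cost J(beta) = mean((X @ beta - y)^2) by gradient descent."""
    n, p = X.shape
    beta = np.zeros(p)
    for _ in range(n_iters):
        grad = (2 / n) * X.T @ (X @ beta - y)   # gradient points uphill...
        beta -= lr * grad                        # ...so step the opposite way
    return beta

rng = np.random.default_rng(1)
X = np.column_stack([np.ones(100), rng.normal(size=100)])   # intercept + one feature
y = X @ np.array([2.0, -3.0]) + rng.normal(0, 0.1, 100)     # made-up true coefficients

beta_gd = gradient_descent(X, y)
beta_ls = np.linalg.lstsq(X, y, rcond=None)[0]              # closed-form reference
print("gradient descent:", np.round(beta_gd, 4))
print("least squares:  ", np.round(beta_ls, 4))
```

If the learning rate is too large, the updates overshoot and diverge; if it is too small, convergence takes many more iterations.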

6. How do you interpret linear regression coefficients?

Interpreting linear regression coefficients is much simpler than interpreting logistic regression coefficients. Each regression coefficient signifies how much the mean of the dependent variable changes, given a one-unit shift in the corresponding independent variable, holding all other variables constant.
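A quick way to see this interpretation is to fit a model on synthetic data (the true coefficients 5, 2, and -1 below are made up) and check that bumping one feature by one unit, with the other held fixed, changes the prediction by exactly that feature's fitted coefficient.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 200
x1 = rng.normal(size=n)                     # hypothetical feature 1
x2 = rng.normal(size=n)                     # hypothetical feature 2
y = 5.0 + 2.0 * x1 - 1.0 * x2 + rng.normal(0, 0.5, n)

X = np.column_stack([np.ones(n), x1, x2])
coefs = np.linalg.lstsq(X, y, rcond=None)[0]    # [intercept, b1, b2]

base = np.array([1.0, 0.3, -1.2])               # an arbitrary observation
shifted = base + np.array([0.0, 1.0, 0.0])      # x1 + 1, x2 held constant
effect = shifted @ coefs - base @ coefs
print("coefficients:", np.round(coefs, 2), "effect of +1 in x1:", round(effect, 4))
```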

7. What is maximum likelihood estimation?

Maximum likelihood estimation (MLE) finds the distribution, within an assumed family of distributions, that is most likely to have generated the observed data. To do this, we estimate the parameter θ that maximizes the likelihood function L(θ; x), the probability (or density) of the observed data x under θ.
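As a minimal illustration, assume coin flips modeled as Bernoulli(p), so θ = p. A brute-force search over candidate values of p lands on the sample proportion of heads, which is the known closed-form MLE for this model. The flips are made up.

```python
import math

flips = [1, 0, 1, 1, 0, 1, 1, 1, 0, 1]   # hypothetical coin flips (1 = heads)

def log_likelihood(p, data):
    """Log-likelihood of i.i.d. Bernoulli(p) observations."""
    return sum(math.log(p) if x == 1 else math.log(1 - p) for x in data)

grid = [i / 1000 for i in range(1, 1000)]                 # candidate values of theta = p
p_hat = max(grid, key=lambda p: log_likelihood(p, flips))
print("grid-search MLE:", p_hat, "sample proportion:", sum(flips) / len(flips))
```

Working with the log-likelihood instead of the likelihood gives the same maximizer and avoids multiplying many small probabilities together.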

8. What are some differences between classification and regression techniques in machine learning?

Hint. Think about the goal of each model. What does each one want to predict? That difference is the key to all the other differences between the models.

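The key difference between regression and classification models is the nature of the data they predict and, therefore, their output. In regression models, the output is numeric (continuous), whereas in classification models, the output is categorical (a discrete class label). This difference also drives the choice of loss functions and evaluation metrics, for example, mean squared error for regression versus accuracy or F1 score for classification.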

9. What is linear discriminant analysis?

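Linear discriminant analysis (LDA) is a supervised algorithm for classification, including multi-class classification, and for dimensionality reduction. LDA computes the directions (axes) that maximize the separation between the class means relative to the spread within each class.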

10. What is the difference between precision and recall?

Recall: What proportion of actual positives was identified correctly?

Precision: What proportion of positive identifications ended up actually correct?
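Both definitions can be computed directly from the counts of true positives, false positives, and false negatives. A minimal sketch with made-up labels:

```python
def precision_recall(y_true, y_pred):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN). Labels are 0 or 1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]   # hypothetical ground truth
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]   # hypothetical predictions
print(precision_recall(y_true, y_pred))
```

Here two of the three predicted positives are correct (precision 2/3), but only two of the four actual positives were found (recall 1/2).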

11. Compare bagging and boosting algorithms and give an example of the tradeoffs between the two.

Hint. Bagging and boosting are both ensemble learning methods, where we train multiple estimators to combine to form a single model with superior performance. The main difference between bagging and boosting algorithms is that bagging estimators are independent while boosting estimators are dependent.
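The tradeoff can be sketched with one-split regression trees (stumps) as the base learner. The data, number of estimators, and learning rate below are arbitrary assumptions, and this is a simplified illustration rather than a production implementation of either method. Bagging fits stumps independently on bootstrap samples and averages them, which mainly reduces variance; boosting fits each stump to the residuals left by the previous ones, which mainly reduces bias.

```python
import numpy as np

def fit_stump(x, y):
    """Fit a one-split regression stump on 1-D x by minimizing squared error."""
    best = None
    for t in np.unique(x)[:-1]:                       # candidate split thresholds
        left, right = y[x <= t], y[x > t]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if best is None or sse < best[0]:
            best = (sse, t, left.mean(), right.mean())
    if best is None:                                  # all x identical: predict the mean
        m = y.mean()
        return lambda z: np.full(len(z), m)
    _, t, left_val, right_val = best
    return lambda z: np.where(z <= t, left_val, right_val)

def bagging(x, y, n_estimators=50, seed=0):
    """Independent stumps on bootstrap samples, averaged."""
    rng = np.random.default_rng(seed)
    stumps = []
    for _ in range(n_estimators):
        idx = rng.integers(0, len(x), len(x))         # bootstrap sample
        stumps.append(fit_stump(x[idx], y[idx]))
    return lambda z: np.mean([s(z) for s in stumps], axis=0)

def boosting(x, y, n_estimators=50, learning_rate=0.3):
    """Sequential stumps, each fit to the current residuals."""
    base = y.mean()
    stumps, residual = [], y - base
    for _ in range(n_estimators):
        s = fit_stump(x, residual)                    # depends on all previous stumps
        stumps.append(s)
        residual = residual - learning_rate * s(x)
    return lambda z: base + learning_rate * np.sum([s(z) for s in stumps], axis=0)

rng = np.random.default_rng(1)
x = np.sort(rng.uniform(0, 6, 200))
y = np.sin(x) + rng.normal(0, 0.2, 200)               # made-up non-linear data
for name, model in [("bagging", bagging(x, y)), ("boosting", boosting(x, y))]:
    print(name, "train MSE:", round(float(np.mean((model(x) - y) ** 2)), 3))
```

Because a single stump is a high-bias learner, averaging many of them still gives a coarse step function, while boosting keeps refining the fit, so its training error is noticeably lower here. The flip side is that boosting can overfit noisy data, and its estimators must be trained sequentially, whereas bagged estimators can be trained in parallel.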

12. What is the intuition behind the F1 score?

The intuition is that we take the harmonic mean of precision and recall. When classes are imbalanced, it is common for one of the two metrics to be very high while the other is very low. Because the harmonic mean is dominated by the smaller value, the lower of the two metrics drags the F1 score down, whereas a simple average would hide the weakness.
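A small numeric check makes the harmonic-mean effect visible. The precision and recall values are made up, for example a classifier that flags very few positives but gets all of them right.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

p, r = 1.0, 0.1
print("arithmetic mean:", round((p + r) / 2, 3))   # hides the poor recall
print("F1 score:", round(f1_score(p, r), 3))       # pulled toward the lower value
```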

13. Explain what GloVe (Global Vectors for Word Representation) embeddings are.

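Rather than learning only from individual local context windows, GloVe first builds a global co-occurrence matrix over the whole corpus, counting how often each word appears within a fixed-size context window of every other word. It then learns word vectors whose dot products approximate the logarithm of these co-occurrence counts, so that ratios of co-occurrence probabilities between words are captured by the geometry of the vectors.

GloVe is trained with a weighted least-squares loss rather than as a typical neural network classifier. Each term is weighted by a function of the co-occurrence count, so very rare pairs contribute little and very frequent pairs do not dominate.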
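The first step, counting co-occurrences, can be sketched in a few lines of Python. This is a simplified illustration, not the reference GloVe implementation: it uses unweighted counts, whereas the original paper weights a pair by 1/distance within the window. The weighting function f(x) = (x / x_max)^α, capped at 1, uses the paper's suggested defaults x_max = 100 and α = 0.75. The model then fits word vectors w_i, context vectors w̃_j, and biases so that w_i · w̃_j + b_i + b̃_j ≈ log X_ij, with each squared error weighted by f(X_ij).

```python
from collections import Counter

def cooccurrence_counts(tokens, window=2):
    """Symmetric word-word co-occurrence counts within a fixed context window
    (unweighted here for simplicity)."""
    counts = Counter()
    for i, word in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                counts[(word, tokens[j])] += 1
    return counts

def glove_weight(x, x_max=100, alpha=0.75):
    """Weighting f(X_ij) from the GloVe loss: grows with the count, capped at 1."""
    return (x / x_max) ** alpha if x < x_max else 1.0

tokens = "the cat sat on the mat".split()   # toy corpus
counts = cooccurrence_counts(tokens, window=1)
print(counts[("the", "cat")], counts[("the", "mat")], counts[("the", "sat")])
```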

14. What’s the difference between Lasso and Ridge Regression?

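Hint. Both Lasso and Ridge add a penalty term to the standard regression loss function to prevent overfitting. Lasso regression adds the L1 norm of the coefficients to the loss function, while Ridge regression adds the squared L2 norm; in both cases, the penalty is scaled by a regularization strength λ ≥ 0. Because of the shape of the L1 penalty, Lasso can shrink some coefficients exactly to zero, effectively performing feature selection, whereas Ridge shrinks coefficients toward zero without eliminating them.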
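A minimal NumPy sketch of the difference, on synthetic data where only the first two of five features matter (all numbers are assumptions). Lasso is fit with cyclic coordinate descent, minimizing (1/2n)·||y − Xβ||² + λ·||β||₁; Ridge uses the closed-form solution of (1/2n)·||y − Xβ||² + (λ/2)·||β||².

```python
import numpy as np

def soft_threshold(z, gamma):
    """Shrink z toward zero by gamma; values within gamma of zero become exactly 0."""
    return np.sign(z) * max(abs(z) - gamma, 0.0)

def lasso_coordinate_descent(X, y, lam, n_iters=200):
    """Minimize (1/2n)||y - X b||^2 + lam * ||b||_1 one coordinate at a time."""
    n, p = X.shape
    b = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    for _ in range(n_iters):
        for j in range(p):
            partial_residual = y - X @ b + X[:, j] * b[j]   # residual ignoring feature j
            rho = X[:, j] @ partial_residual / n
            b[j] = soft_threshold(rho, lam) / col_sq[j]
    return b

def ridge_closed_form(X, y, lam):
    """Minimize (1/2n)||y - X b||^2 + (lam/2) * ||b||^2 via its normal equations."""
    n, p = X.shape
    return np.linalg.solve(X.T @ X / n + lam * np.eye(p), X.T @ y / n)

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
true_coef = np.array([3.0, -2.0, 0.0, 0.0, 0.0])   # only two features matter
y = X @ true_coef + rng.normal(0, 0.5, 100)

lasso_coef = lasso_coordinate_descent(X, y, lam=0.5)
ridge_coef = ridge_closed_form(X, y, lam=0.5)
print("lasso:", np.round(lasso_coef, 3))
print("ridge:", np.round(ridge_coef, 3))
```

With this setup, Lasso sets the three irrelevant coefficients exactly to zero, while Ridge only makes them small.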

15. How would you prevent overfitting in a deep-learning model?

You can reduce overfitting by training the network on more examples or reduce overfitting by changing the complexity of the network.

The benefit of very deep neural networks is that their performance continues to improve as they are fed larger and larger datasets. A model with a near-infinite number of examples will eventually plateau in terms of what capacity the network is capable of learning.

16. How to extract semantics from a body of text?

You can use named entity recognition techniques, or turn to NLP packages that measure text similarity, such as cosine similarity and word overlap.
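A minimal sketch of the similarity approach using plain bag-of-words counts and no external packages. Note that this measures shared words rather than meaning: two sentences with the same meaning but different words score low, which is why word embeddings are often used in place of raw counts.

```python
import math
from collections import Counter

def cosine_similarity(text_a, text_b):
    """Cosine similarity between the bag-of-words count vectors of two texts."""
    a, b = Counter(text_a.lower().split()), Counter(text_b.lower().split())
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def word_overlap(text_a, text_b):
    """Jaccard overlap: shared distinct words divided by all distinct words."""
    a, b = set(text_a.lower().split()), set(text_b.lower().split())
    return len(a & b) / len(a | b) if a | b else 0.0

print(round(cosine_similarity("the cat sat on the mat", "the cat lay on the mat"), 3))
print(word_overlap("the cat sat", "the dog sat"))
```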

17. Your manager asks you to build a model with a neural network to solve a business problem. How would you justify the complexity of building a neural network?

Follow-up question. How would you explain the predictions to non-technical stakeholders?


18. Describe a situation where you would use MSE as a measure of quality.

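Mean Squared Error (MSE) is the average of the squared differences between the actual and estimated values.

We would use MSE to evaluate the accuracy of a regression model, especially when large errors should be penalized more heavily than small ones, because squaring the errors amplifies the large ones.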
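A small example of that penalty: with one large miss among several small ones, MSE grows far more than mean absolute error (MAE), which is shown here only for comparison. The values are made up.

```python
def mse(y_true, y_pred):
    """Mean of squared errors."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def mae(y_true, y_pred):
    """Mean of absolute errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [10, 12, 14, 16]
y_pred = [11, 13, 15, 26]   # three errors of 1 and one error of 10
print("MSE:", mse(y_true, y_pred), "MAE:", mae(y_true, y_pred))
```

The single error of 10 contributes 100 of the 103 total squared error, so it dominates MSE.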

19. Would an additional feature improve GBM or Logistic Regression more?

Adding a feature does not necessarily improve the performance of either GBM or logistic regression. If we add new features without a corresponding (often much larger) increase in the number of observations, we end up with a dataset that has many features relative to a small number of observations, which makes both models more prone to overfitting.

20. How do you optimize model parameters during model building?

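Model parameter optimization is the process of finding the best values for a model's settings. Strictly speaking, the values tuned this way are hyperparameters (such as tree depth or regularization strength), which are set before training, as opposed to the parameters (such as regression coefficients) that the model learns from the data. Hyperparameters can be tuned using grid search or random search, scoring each candidate on validation data.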
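A sketch of both strategies, assuming a scoring function that trains a model with the given settings and returns a validation error. Here toy_validation_error is a hypothetical stand-in so the example runs on its own, and the hyperparameter names and candidate values are illustrative.

```python
import itertools
import random

def grid_search(param_grid, score_fn):
    """Try every combination in param_grid; return (best_params, best_score).
    score_fn is assumed to return a validation error, so lower is better."""
    names = list(param_grid)
    best_params, best_score = None, float("inf")
    for values in itertools.product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = score_fn(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

def random_search(param_grid, score_fn, n_trials=5, seed=0):
    """Sample n_trials random combinations instead of trying them all."""
    rng = random.Random(seed)
    best_params, best_score = None, float("inf")
    for _ in range(n_trials):
        params = {name: rng.choice(values) for name, values in param_grid.items()}
        score = score_fn(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

def toy_validation_error(max_depth, learning_rate):
    """Hypothetical stand-in for 'train with these settings, return validation error'."""
    return (max_depth - 4) ** 2 + 100 * (learning_rate - 0.1) ** 2

grid = {"max_depth": [2, 4, 6, 8], "learning_rate": [0.01, 0.1, 0.3]}
print("grid search:", grid_search(grid, toy_validation_error))
print("random search:", random_search(grid, toy_validation_error))
```

Grid search is exhaustive, but its cost grows multiplicatively with every hyperparameter added; random search spends a fixed budget of trials, which often finds good settings faster when only a few hyperparameters really matter.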

21. What is the relationship between PCA and LDA?

Both techniques are used for dimensionality reduction. PCA is unsupervised, while LDA is supervised.
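The supervised versus unsupervised distinction matters in practice. In this NumPy sketch (synthetic data; the class means and covariance are assumptions), the classes differ along the x-axis, but the data varies most along the y-axis. PCA, which ignores the labels, picks the high-variance y direction; two-class Fisher LDA, computed as S_w⁻¹(m₁ − m₀), picks the x direction that actually separates the classes.

```python
import numpy as np

rng = np.random.default_rng(0)
cov = np.array([[0.3, 0.0], [0.0, 9.0]])                  # small spread in x, large in y
X0 = rng.multivariate_normal([0.0, 0.0], cov, size=200)    # class 0
X1 = rng.multivariate_normal([2.0, 0.0], cov, size=200)    # class 1, shifted along x
X = np.vstack([X0, X1])

# PCA (unsupervised): leading eigenvector of the pooled covariance; labels unused.
eigvals, eigvecs = np.linalg.eigh(np.cov(X, rowvar=False))
pca_direction = eigvecs[:, np.argmax(eigvals)]

# Two-class Fisher LDA (supervised): w proportional to S_w^{-1} (m1 - m0).
m0, m1 = X0.mean(axis=0), X1.mean(axis=0)
S_w = np.cov(X0, rowvar=False) + np.cov(X1, rowvar=False)  # within-class scatter (up to scale)
lda_direction = np.linalg.solve(S_w, m1 - m0)
lda_direction /= np.linalg.norm(lda_direction)

print("PCA direction:", np.round(pca_direction, 3))
print("LDA direction:", np.round(lda_direction, 3))
```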

22. What is the difference between supervised and unsupervised learning?

In supervised learning, the model is given input data together with the corresponding outputs (labels). In unsupervised learning, only input data is provided to the model. The goal of supervised learning is to train the model so that it can predict the output when it is given new data, whereas the goal of unsupervised learning is to discover structure in the data, such as clusters.

23. How does the support vector machine algorithm work?

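In its basic form, a support vector machine (SVM) is a linear model for classification and regression problems. The algorithm finds a line or hyperplane that separates the data into different classes while maximizing the margin, that is, the distance between the hyperplane and the closest training points (the support vectors). With kernel functions, SVMs can also learn non-linear decision boundaries.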
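A minimal sketch of a linear soft-margin SVM trained with full-batch subgradient descent on the regularized hinge loss. The data, regularization strength, learning rate, and epoch count are arbitrary assumptions; a production setup would typically use a dedicated solver instead.

```python
import numpy as np

def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Linear soft-margin SVM: minimize lam/2 * ||w||^2 + mean(hinge loss)
    with full-batch subgradient descent. Labels must be -1 or +1."""
    n, p = X.shape
    w, b = np.zeros(p), 0.0
    for _ in range(epochs):
        margins = y * (X @ w + b)
        active = margins < 1          # points inside the margin or misclassified
        grad_w = lam * w - (y[active][:, None] * X[active]).sum(axis=0) / n
        grad_b = -y[active].sum() / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

rng = np.random.default_rng(0)
X = np.vstack([rng.normal([-2, -2], 0.5, (50, 2)),   # class -1 (synthetic)
               rng.normal([2, 2], 0.5, (50, 2))])    # class +1 (synthetic)
y = np.array([-1] * 50 + [1] * 50)
w, b = train_linear_svm(X, y)
accuracy = np.mean(np.sign(X @ w + b) == y)
print("weights:", np.round(w, 3), "bias:", round(b, 3), "train accuracy:", accuracy)
```

Only points with a margin below 1 contribute to the gradient, which is why the final hyperplane depends on the points closest to it.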

24. You are building a binary classifier on a dataset with 1,000 samples and 10,000 features. Would you use logistic regression?

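Plain (unregularized) logistic regression would not be a good fit here, because the number of features is much greater than the number of observations, so the model would be prone to severe overfitting. If you do use logistic regression, you would want strong regularization (such as an L1 penalty) or dimensionality reduction first.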

25. What is the outcome of logistic regression on a perfectly separable data?

Say you are given a dataset of perfectly linearly separable data. What would happen when you run logistic regression?
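What happens can be checked with a short NumPy sketch on an assumed four-point separable dataset. Because the classes can be split perfectly, the likelihood keeps increasing as the coefficients grow, so gradient descent never converges and the weight norm keeps increasing (the maximum likelihood estimate does not exist). In practice, regularization or early stopping keeps the coefficients finite.

```python
import numpy as np

def logistic_regression_gd(X, y, lr=0.5, n_iters=5000):
    """Unregularized logistic regression by gradient descent on the mean
    negative log-likelihood; records the weight norm after every step."""
    w = np.zeros(X.shape[1])
    norms = []
    for _ in range(n_iters):
        p = 1.0 / (1.0 + np.exp(-X @ w))        # predicted probabilities
        w -= lr * X.T @ (p - y) / len(y)        # gradient step
        norms.append(float(np.linalg.norm(w)))
    return w, norms

# Perfectly separable toy data: intercept column plus one feature.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0])
w, norms = logistic_regression_gd(X, y)
print([round(norms[i], 2) for i in (99, 999, 4999)])
```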

Case Study Machine Learning Interview Questions

Case studies are a common type of problem that machine learning scientists solve on the job. Typically, a case study asks the candidate to explain how they would build a model for a product that exists at the company.

For the machine learning lifecycle, we have roughly six different steps that we should touch on from beginning to end:

- Data Exploration & Pre-Processing

- Feature Selection & Engineering

- Model Selection

- Cross Validation

- Evaluation Metrics

- Testing and Roll Out

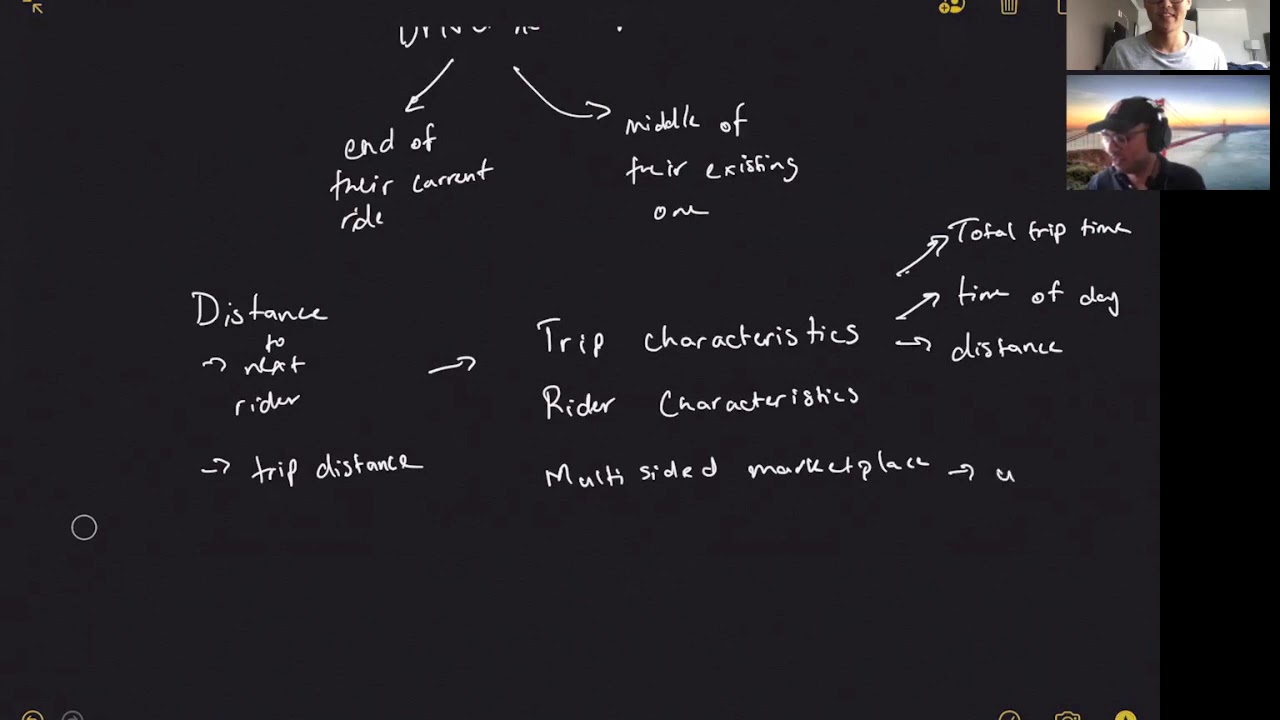

26. Describe how you would build a model to predict Uber Driver ETAs after a rider requests a ride.

Often, the question is scoped down to a specific part of the model-building process. For instance, the example above could be reworded as:

- How would you evaluate the predictions of an Uber Driver ETA model?

- What features would you use to predict the Uber Driver ETA for ride requests?

The main point of these case questions is to determine your knowledge of the full modeling lifecycle and how you would apply it to a business scenario.

We want to approach the case study with an understanding of what the machine learning and modeling lifecycle should look like from beginning to end, and use a structured format so that our solution explains our thought process thoroughly.

27. How would you build a model to predict if a driver on Uber will accept a ride request?

Some questions to answer in your response include: What algorithm would you use to build this model? What are the tradeoffs between different classifiers?


28. How would you build a bank fraud detection model?

More context: The bank wants to implement a text messaging service that will alert customers when the model detects a fraudulent transaction. From here, the customer can approve or deny the transaction with a text response. How would we build this model?

Since each transaction either is fraudulent or is not, we can frame this as a binary classification problem. Because fraud is rare, the dataset will be heavily imbalanced.

A few considerations we have to make are:

- How accurate is our data? Is all of the data labeled carefully? How much fraud are we not detecting if customers do not even know they are being defrauded?

- What model works well on an imbalanced dataset? Generally, tree models come to mind.

- How much do we care about interpretability? A highly accurate model may not be the best choice if we cannot learn anything from it. If customers are being compromised without our knowledge, a black-box model gives us no insight into how the fraud happens, and we lose the chance to use what we learn for future feature engineering.

- What are the costs of misclassification? If we look at precision versus recall, we can understand which metrics we care about, given the business problem at hand.
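To see why accuracy alone is misleading on an imbalanced dataset, consider a hypothetical set of 1,000 transactions containing 10 frauds and a "model" that never flags anything:

```python
# Hypothetical labels: 990 legitimate (0) and 10 fraudulent (1) transactions.
y_true = [0] * 990 + [1] * 10
y_pred = [0] * 1000          # a trivial "model" that never flags fraud

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
true_positives = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
recall = true_positives / sum(y_true)
print(f"accuracy={accuracy:.2%}, recall={recall:.0%}")
```

It scores 99% accuracy while catching none of the fraud, which is why recall (and precision) matter more here.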

29. You have a categorical variable with thousands of distinct values; how would you encode it?

This depends on whether the problem is a regression or a classification problem.

If it is a regression model, one way would be to cluster them based on the response by working backward.

You could sort them by the response variable and then split the categorical variables into buckets based on the grouping of the response variable. This could be done by using a shallow decision tree to reduce the number of categories.

Another way, given a regression model, would be to target encode them. Replace each category in a variable with the mean response given that category. Now you have one continuous feature instead of a bunch of categories.

For binary classification, you can target encode the column by computing the conditional probability that the response variable is one, given that the categorical column takes a particular value, and then replacing the category with this number. For example, if you have a categorical column of cities when predicting loan defaults, and the probability of a person who lives in San Francisco defaulting is 0.4, you would replace “San Francisco” with 0.4.
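A minimal sketch of target encoding in plain Python; the cities and default labels are made up for illustration.

```python
from collections import defaultdict

def target_encode(categories, targets):
    """Replace each category with the mean target observed for that category.
    For a 0/1 target this is the empirical P(target = 1 | category)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for c, t in zip(categories, targets):
        sums[c] += t
        counts[c] += 1
    means = {c: sums[c] / counts[c] for c in sums}
    return [means[c] for c in categories], means

cities = ["San Francisco", "San Francisco", "New York", "New York", "New York", "Austin"]
defaulted = [1, 0, 0, 0, 1, 1]       # hypothetical loan-default labels
encoded, mapping = target_encode(cities, defaulted)
print(mapping)
```

In practice, the category means should be computed on training data only (or out of fold) so the encoding does not leak the target, and rare categories are often smoothed toward the overall mean.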

30. How would you develop a machine learning model to handle acronyms, so that SVM and Support Vector Machine would convey the same meaning?

One option would be to use a noisy channel model, an approach used in spell checkers and machine translation. Here is an explanation of noisy channel models on Towards Data Science: “In the noisy channel model, each abbreviation is viewed as the result of a random distortion of the original phrase. To recover the original phrase, we need to answer two questions: which original phrases are likely, and which distortions are likely?”
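A toy sketch of the noisy channel idea: choose the expansion that maximizes P(phrase) · P(abbreviation | phrase). The candidate phrases, their prior probabilities, and the initials-based channel model are all hypothetical; a real system would estimate them from a language model and a corpus of abbreviation pairs.

```python
import math

# Hypothetical prior P(phrase); in practice this would come from a language model.
phrase_prior = {
    "support vector machine": 0.6,
    "singular value matrix": 0.3,
    "scalable vector machine": 0.1,
}

def channel_prob(abbrev, phrase):
    """Hypothetical channel model P(abbrev | phrase): likely if the abbreviation
    matches the phrase's initials, very unlikely otherwise."""
    initials = "".join(word[0] for word in phrase.split()).upper()
    return 0.9 if abbrev.upper() == initials else 0.01

def expand(abbrev):
    """Noisy channel decoding: argmax of log P(phrase) + log P(abbrev | phrase)."""
    return max(phrase_prior,
               key=lambda ph: math.log(phrase_prior[ph]) + math.log(channel_prob(abbrev, ph)))

print(expand("SVM"))
```

All three candidates here produce the initials "SVM", so the prior, which reflects how common each phrase is, decides the expansion.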

31. You are tasked with building a pizza delivery model for a restaurant franchise. What features would you include in a model to try to predict a no-show?

Hint. When creating a model used to predict a binary outcome such as the one in the question above, it is useful to think of explanatory variables that may be important for explaining the phenomenon. This process is called manual feature selection and requires expert knowledge in the field. In this case, the knowledge needed is running a pizza franchise.

From a business standpoint, there can be many features that explain someone not showing up to pick up an order. To organize our thoughts, we can think of high-level categories of features and then break them down into more specific features.

32. You want to build a way to estimate the month and day of people’s birthdays. What methods would you propose; what data would you use?

With this question, you could propose a variety of data, including birthdays that were submitted by users and happy birthday posts on users’ timelines.

One simple model for unlabeled data would be to look at users with no birthday listed but who received birthday messages during the last five years. This would help you target the likely month and day of the birthday.
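A minimal sketch of this heuristic, assuming we already have the dates of birthday posts on a user's timeline (the dates below are made up): pool them across years and take the most common month and day.

```python
from collections import Counter
from datetime import date

def likely_birthday(birthday_post_dates):
    """Most common (month, day) among dates of birthday posts on a user's
    timeline, pooled across years; None if there are no such posts."""
    counts = Counter((d.month, d.day) for d in birthday_post_dates)
    if not counts:
        return None
    return counts.most_common(1)[0][0]

posts = [date(2021, 3, 14)] * 3 + [date(2022, 3, 14)] * 2 + [date(2022, 3, 15), date(2023, 7, 1)]
print(likely_birthday(posts))
```

A late wish the day after (March 15 here) is outvoted by the posts on the actual date.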

For users with neither a listed birthday nor birthday posts, one option would be to train a model on users who do have a listed birth date, using features available for everyone. The idea is that on Facebook, many people have friends of a similar age who graduated from high school and college around the same time. Therefore, you could build a model based on the average age of a user’s friends to narrow down the user’s likely age.

33. How would you assess the success of a clustering algorithm if you did not have pre-labeled data in hand?

More context: Let’s say that a basketball team has hired you as a data scientist to scout for potential players. Your job is to identify similar basketball players, cluster them in smaller groups, and have them practice with each other based on their strengths and weaknesses. You train a clustering model to group similarly scouted players together.

34. How would you fix an algorithm that underprices products on an e-commerce site?

Let’s say you’re working on pricing different products on our e-commerce site. The online price is dependent on the availability of the product, the demand, and the logistics cost of providing it to the end consumer.

You discover our algorithm is vastly under-pricing a certain consumer product. What are the steps you take in diagnosing the problem?

View the video solution here: https://www.youtube.com/watch?v=LHt0EFIGZNs

35. k-Means from Scratch

Implement the k-means clustering algorithm in Python from scratch, given the following:

- data_points: a two-dimensional NumPy array with an arbitrary number of data points (rows) n and an arbitrary number of columns m.

- k: the number of clusters.

- initial_centroids: the initial centroid values of the data points in each cluster.

Return a list containing the cluster of each point in data_points as an integer, in the same order as the original list.

Example

After clustering the points into two clusters, the points are assigned as follows. Note: There could be an infinite number of lines separating the two clusters in this example.

# Input

data_points = [(0, 0), (3, 4), (4, 4), (1, 0), (0, 1), (4, 3)]

k = 2

initial_centroids = [(1, 1), (4, 5)]

# Output

k_means_clustering(data_points, k, initial_centroids) -> [0, 1, 1, 0, 0, 1]
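One possible from-scratch implementation using NumPy is sketched below. It is a version of Lloyd's algorithm, not an official solution: it alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its points, stopping when assignments no longer change. The max_iters safeguard is an added assumption.

```python
import numpy as np

def k_means_clustering(data_points, k, initial_centroids, max_iters=100):
    """Lloyd's k-means: returns the integer cluster index of each point, in input order."""
    X = np.asarray(data_points, dtype=float)
    centroids = np.asarray(initial_centroids, dtype=float).copy()
    labels = None
    for _ in range(max_iters):
        # (n, k) matrix of Euclidean distances from each point to each centroid
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        new_labels = dists.argmin(axis=1)
        if labels is not None and np.array_equal(new_labels, labels):
            break                                # assignments stopped changing
        labels = new_labels
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:                 # keep the old centroid if a cluster empties
                centroids[j] = members.mean(axis=0)
    return [int(c) for c in labels]

data_points = [(0, 0), (3, 4), (4, 4), (1, 0), (0, 1), (4, 3)]
k = 2
initial_centroids = [(1, 1), (4, 5)]
print(k_means_clustering(data_points, k, initial_centroids))
```

np.asarray accepts both a NumPy array and the list of tuples used in the example, so the same function handles either input.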

Applied Modeling Interview Questions

Applied modeling questions take machine learning concepts and ask how they could be applied to fix a certain problem. These questions are a little more nuanced and require more experience but are great litmus tests of modeling and machine learning knowledge.

These types of questions are similar to case studies in that they are mostly ambiguous, require more contextual knowledge and information gathering from the interviewer, and are used to really test your understanding in a certain area of machine learning.

36. Build a model to provide a rejected applicant with a reason for why they got rejected for a loan.

More context: We have a binary classification model that classifies whether or not an application should be qualified to get a loan. However, we do not have access to feature weights. How would you provide each user with a rejection reason?

Example solution:

Pretend that we have three people: Alice, Bob, and Candace. They have all applied for a loan. Simplifying the financial lending loan model, let’s assume the only features taken into consideration are:

- Total number of credit cards.

- Dollar amount of current debt.

- Credit age.

In this example, Alice, Bob, and Candace all have the same number of credit cards and credit age but not the same dollar amount of current debt.

- Alice: 10 credit cards, 5 years of credit age, $20K of debt.

- Bob: 10 credit cards, 5 years of credit age, $15K of debt.

- Candace: 10 credit cards, 5 years of credit age, $10K of debt.

Alice and Bob get rejected for a loan, but Candace gets approved. We would logically assume that Candace’s $10K of debt was the deciding factor in the model approving her for a loan.

How did we reason this out? If the sample size analyzed was instead thousands of people who had the same number of credit cards and credit age with varying levels of debt, we could figure out the model’s average loan acceptance rate for each numerical amount of current debt.

Then we could plot these on a graph to model out the y-value, average loan acceptance, versus the x-value, dollar amount of current debt.
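The same reasoning can be done programmatically by querying the model rather than reading its weights: vary one feature (debt) while holding the others fixed and record the approval rate, which is essentially a partial dependence curve. The black_box_model function and its thresholds are hypothetical stand-ins for the real model.

```python
def black_box_model(credit_cards, credit_age_years, debt_usd):
    """Hypothetical stand-in for the loan model: we can query it for a decision
    (1 = approve) but cannot inspect its weights."""
    return 1 if debt_usd <= 12_000 and credit_age_years >= 3 else 0

applicants = {
    "Alice": (10, 5, 20_000),
    "Bob": (10, 5, 15_000),
    "Candace": (10, 5, 10_000),
}

def acceptance_rate_by_debt(applicants, debt_levels, model):
    """For each debt level, set every applicant's debt to that level (keeping the
    other features fixed) and record the average approval rate."""
    curve = {}
    for debt in debt_levels:
        decisions = [model(cards, age, debt) for cards, age, _ in applicants.values()]
        curve[debt] = sum(decisions) / len(decisions)
    return curve

print(acceptance_rate_by_debt(applicants, [5_000, 10_000, 15_000, 20_000], black_box_model))
```

A rejected applicant's reason can then be read off the curve, for example, that their current debt is above the level at which approvals drop off.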

37. In your housing dataset, 20% of listings do not have square footage information. How would you deal with this missing data?

This is a pretty classic modeling interview question. Data cleanliness is a well-known issue within most datasets when building models. Real-life data is messy, missing, and almost always needs to be wrangled into a usable form.

The key to answering this interview question is to probe and ask questions to learn more about the specific context. For example, we should clarify if there are any other features missing data in the listings.

If square footage is the only column with missing data, we can build models using training sets of different sizes and compare their performance. Now, what is the second method?

38. You have a dataset of 1 million rides in Seattle. You want to build a model to predict ETA for riders. Do we have enough data to make an accurate model?

This question assesses how practically a candidate approaches a problem, specifically how they would evaluate a model.

Specifically, what other kinds of information should we look into when we are given a dataset and build a model with a “pretty good” accuracy rate?

If this is the first version of a model, how would we know whether to put any effort into iterating on it? And how exactly can we evaluate whether extra effort on the model is worth the cost?

There are a couple of factors to look into:

1. Look at the ratio of feature set size to training data size. If we have an extremely high number of features compared to data points, the model will be prone to overfitting and inaccuracy.

2. Train an initial model on a portion of the data (the training set) and measure its performance on a validation set, also known as a holdout set. We hold back some subset of the data from training and then use this holdout set to check the model's performance and establish a baseline.
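A sketch of the holdout approach extended into a simple learning curve: train on growing subsets and evaluate each one on the same holdout set. If the holdout error has already flattened well before the full training set is used, more data is unlikely to help much. The ride data is synthetic (10,000 rows instead of 1 million, with a made-up relationship between distance, rush hour, and ETA), and the linear model is a placeholder.

```python
import numpy as np

def learning_curve(X, y, fractions, holdout_frac=0.2, seed=0):
    """Train on growing fractions of the training split and report the mean
    absolute error (MAE) on one fixed holdout set."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(y))
    n_hold = int(len(y) * holdout_frac)
    hold, train = idx[:n_hold], idx[n_hold:]
    results = []
    for frac in fractions:
        subset = train[: max(int(len(train) * frac), X.shape[1])]
        beta = np.linalg.lstsq(X[subset], y[subset], rcond=None)[0]
        mae = np.mean(np.abs(X[hold] @ beta - y[hold]))
        results.append((len(subset), float(mae)))
    return results

# Synthetic stand-in for the ride data (hypothetical relationship, 10k rows).
rng = np.random.default_rng(42)
n = 10_000
distance_km = rng.uniform(1, 20, n)
hour = rng.integers(0, 24, n)
rush_hour = ((hour >= 7) & (hour <= 9)) | ((hour >= 16) & (hour <= 18))
X = np.column_stack([np.ones(n), distance_km, rush_hour.astype(float)])
eta_minutes = 3 + 2.5 * distance_km + 6 * rush_hour + rng.normal(0, 3, n)

for size, mae in learning_curve(X, eta_minutes, [0.002, 0.01, 0.1, 1.0]):
    print(f"train rows={size:>5}  holdout MAE={mae:.2f} min")
```

Because the synthetic noise has a standard deviation of 3 minutes, the holdout MAE cannot drop much below about 2.4 minutes no matter how much data is added; comparing the curve against such a floor is one way to judge whether more data is worth collecting.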

39. What conclusions can be drawn if the area under the ROC curve is 0.5?

When AUC = 0.5, the classifier is not able to distinguish between positive and negative classes: it ranks them no better than chance. In other words, the classifier is effectively predicting a random class, or a constant class, for all the data points.
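A minimal sketch using the rank interpretation of AUC: it is the probability that a randomly chosen positive gets a higher score than a randomly chosen negative, with ties counting as one half. A constant score therefore produces exactly 0.5. The labels and scores are made up.

```python
def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative
    (ties count as one half). O(P * N), fine for small examples."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

y_true = [0, 0, 1, 1]
print(roc_auc(y_true, [0.5, 0.5, 0.5, 0.5]))   # constant scores
print(roc_auc(y_true, [0.1, 0.2, 0.8, 0.9]))   # perfect ranking
```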

40. How would you interpret coefficients of logistic regression for categorical and boolean variables?

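Hint: Logistic regression coefficients are on the log-odds scale. The sign of a coefficient tells you whether the variable increases or decreases the outcome probability, and its magnitude tells you how strongly: exponentiating a coefficient gives the multiplicative change in the odds (the odds ratio) for a one-unit increase in the variable. For a boolean variable, that is the odds ratio between the True and False groups; for a one-hot-encoded categorical variable, each coefficient compares its category against the reference (dropped) category.

Pay attention to the coefficient magnitude in relation to the structure of the variable, as not all variables are created equal (i.e., categorical vs. boolean vs. continuous variables).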
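A short check of the odds-ratio interpretation for a boolean feature, using made-up coefficients: an intercept of -1.0 and a coefficient of 0.7 on a hypothetical is_member flag.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

intercept, beta_is_member = -1.0, 0.7          # hypothetical fitted coefficients

p_false = sigmoid(intercept)                   # P(outcome) when is_member = False
p_true = sigmoid(intercept + beta_is_member)   # P(outcome) when is_member = True
odds_ratio = (p_true / (1 - p_true)) / (p_false / (1 - p_false))

print(round(p_false, 3), round(p_true, 3), round(odds_ratio, 3))
```

The odds ratio equals exp(0.7), about 2.01, regardless of the baseline, but the change in probability it produces depends on where the baseline probability sits.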

41. If two predictors are highly correlated, what is the effect on the coefficients in the logistic regression?

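Multicollinearity is a statistical phenomenon in which two or more predictor variables in a multiple logistic regression model are highly correlated or associated. It does not reduce the predictive power or reliability of the model as a whole; it affects only the estimates for individual predictors. Those coefficient estimates become unstable, with inflated standard errors, so small changes in the data can swing them substantially or even flip their signs, which makes them hard to interpret.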
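A simulation sketch of that instability, using ordinary linear regression as a simpler stand-in for logistic regression (the effect on the coefficient estimates is qualitatively the same). It bootstraps the data and compares how much the first predictor's coefficient moves when the second predictor is nearly a copy of it versus nearly independent; the noise levels are assumptions.

```python
import numpy as np

def coef_spread(x2_noise, n=200, n_boot=200, seed=0):
    """Bootstrap standard deviation of the first predictor's coefficient when the
    second predictor is x1 plus noise of scale x2_noise (small = highly correlated)."""
    rng = np.random.default_rng(seed)
    x1 = rng.normal(size=n)
    x2 = x1 + x2_noise * rng.normal(size=n)
    y = 1.0 * x1 + 1.0 * x2 + rng.normal(size=n)
    X = np.column_stack([np.ones(n), x1, x2])
    estimates = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, n)                  # resample rows with replacement
        coef = np.linalg.lstsq(X[idx], y[idx], rcond=None)[0]
        estimates.append(coef[1])
    return float(np.std(estimates))

print("highly correlated:", round(coef_spread(0.05), 3))
print("nearly independent:", round(coef_spread(10.0), 3))
```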

42. You want to predict the probability of a flight delay, but there are flights with delays of up to 12 hours that are really messing up your model. How would you fix this issue?

One way is to create groups for the output class:

- Less than 2 hours.

- Between 2 and 10 hours.

- Over 10 hours.

That way, the extreme delays fall into their own class, and the task becomes a classification problem instead of a regression. Another way would be to simply filter the outliers out of the analysis. Statisticians often calculate the interquartile range (IQR) of the variable, multiply it by 1.5, and add the result to Q3 to determine the upper bound; values above it are designated as outliers (you can also subtract it from Q1 to find the lower bound).
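The IQR rule, sketched with NumPy on a made-up sample of delays in minutes. np.percentile uses linear interpolation between data points by default, so other quartile conventions give slightly different fences.

```python
import numpy as np

delays_min = np.array([5, 10, 12, 15, 20, 25, 30, 45, 60, 720])   # hypothetical delays
q1, q3 = np.percentile(delays_min, [25, 75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
outliers = delays_min[(delays_min < lower) | (delays_min > upper)]
print(f"Q1={q1}, Q3={q3}, upper fence={upper}, outliers={outliers.tolist()}")
```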

43. How would you test whether having more friends now increases the probability that a Facebook member is still an active user after six months?

When looking at the question, we can break down our analysis into two steps: analysis of data and using binary classification to understand feature importance.

Since we are interested in whether or not someone will be an active user in six months or not, we can test this assumption by first looking at the existing data. One way to do so is to put users into buckets delineated by the user’s friend size six months ago and then look at their activity over the next six months.

If we set a metric to define an “active user”, such as logging in X number of times or posting once, we can then compute the averages of these metrics across the buckets to determine whether having more friends is associated with higher engagement.
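A minimal sketch of the bucketing step, on made-up user records and an assumed definition of "active" that has already been applied to each user:

```python
from collections import defaultdict

# Hypothetical records: (friend count six months ago, active today under our definition)
users = [(12, False), (35, False), (48, True), (150, True), (180, False),
         (220, True), (410, True), (520, True), (600, True), (75, False)]

def active_rate_by_bucket(users, edges):
    """Bucket users by friend count (edges must cover every count) and compute
    the share of users in each bucket who are still active."""
    totals, actives = defaultdict(int), defaultdict(int)
    for friends, active in users:
        label = next(f"{lo}-{hi - 1}" for lo, hi in zip(edges, edges[1:]) if lo <= friends < hi)
        totals[label] += 1
        actives[label] += active
    return {bucket: actives[bucket] / totals[bucket] for bucket in totals}

print(active_rate_by_bucket(users, [0, 100, 300, 1000]))
```

A higher active rate in larger buckets would show an association, not causation: users with many friends may differ in other ways, which is where the binary classification step that controls for other features comes in.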

What comes next for this problem?

44. You work for a bank that gives out personal loans. Your co-worker develops a model that takes in customer inputs and returns if a loan should be given or not.

Here are some follow-up questions to answer:

- What kind of model did the co-worker develop?

- Another co-worker thinks they have developed a better model to predict defaults on the loans. Given that personal loans are monthly installments of payments, how would you measure the difference between the two credit risk models within a timeframe?

- What metrics would you track to measure the success of the new model?

45. What causes different success rates in the same algorithm?

Why would the same machine learning algorithm generate different success rates using the same dataset?

Note: When they ask us an ambiguous question, we need to gather context and restate it in a way that’s clear for us to answer.

Machine Learning System Design Interview Questions

Machine learning system design interview questions ask you about the design and architecture of machine learning applications. Essentially, these questions test your ability to solve the problem of deploying a machine learning model that meets specific business requirements.

To answer machine learning system design questions, you should follow a framework:

- Setting the problem statement.

- Architecting the high-level infrastructure.

- Explaining how data moves from one stage to the next.

- Measuring the performance of machine learning models.

- Dealing with common problems around scale, reliability, and deployment.

46. How would you build a machine learning system to generate Spotify’s Discover weekly playlist?

Start with some follow-up questions to get clarity. You might ask:

- How will the search be assessed?

- How many podcast searches are text-based? How many podcasts are there?

- Is this a customer-facing tool?

- Is an ML solution really needed?

47. How would you build a sentiment analysis model based on a WallStreetBets subreddit dataset? What are potential problems that might arise?

With this type of data, some pitfalls might be:

- Tone.

- Sarcasm.

- User sentiment bias.

- Polarity.

- Use of emojis, images, or negations.

Ultimately, with a question like this, you should have some tips and strategies for dealing with these common sentiment analysis problems.

48. How would you build an ML model to predict a hotel’s occupancy rate?

Follow-up questions: How would you design this model? What data would you use to train your model? How would you evaluate the performance of the model?

With this question, you would want to clarify some points including the definition of occupancy rate, if historical benchmark data exists, etc.

If you have historical data, could you use a regression model?

49. How would you create a system that can detect if a firearm is listed on a marketplace?


50. How would you build a dynamic pricing system for Airbnb based on demand and availability? What considerations would have to be made?

One approach would be to build a regression model to predict dynamic prices. You could start with a simple feature set, including details about the property (# of rooms, square footage), location (demographics, proximity to town center, amenities), and demand (# of hourly searches for a location, recent conversion rates, etc.).

A lot of Amazon machine learning questions relate to pricing predictions.

51. How do you come up with a machine learning system design to find good investors?


Recommendation and Search Engines Questions

Recommendation and search engine questions are technically a combination of case study questions and system design questions. But they are asked so frequently that it is worth treating them as their own category.

52. You work at Facebook and are tasked with building a restaurant recommendation engine. What data would you use? How would you build it?

With a system design interview, remember to follow these steps:

- Data collection

- Feature set

- Model Selection

- Model Evaluation

- Model Rollout

In this problem, you could suggest data points to use, including a user’s location, demographic stats, friends, and any food- or restaurant-related data shared with the platform, e.g., a user liking a local restaurant’s page. Additionally, you could look at restaurants visited by friends, check-ins, etc. Ultimately, you could build a weighted model based on the different types of content available.

This is a common type of Facebook machine learning interview question.

53. How would you build a video recommendation system for YouTube? The main goal is to maximize user engagement.

You should start to answer this question by outlining metrics and design recommendations.

Offline Metrics:

- Precision (the fraction of relevant instances among the retrieved instances)

- Recall (the fraction of the total amount of relevant instances that were actually retrieved).

- Ranking Loss.

- Log loss.
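The first two offline metrics are typically computed over the top k recommendations. A minimal sketch, with hypothetical video IDs and watch data:

```python
def precision_recall_at_k(recommended, relevant, k):
    """Precision@k: share of the top-k recommendations that are relevant.
    Recall@k: share of all relevant items that appear in the top k."""
    top_k = recommended[:k]
    hits = sum(1 for item in top_k if item in relevant)
    return hits / k, hits / len(relevant)

recommended = ["v1", "v7", "v3", "v9", "v4"]   # hypothetical ranked video IDs
relevant = {"v3", "v4", "v8"}                  # videos the user actually watched
print(precision_recall_at_k(recommended, relevant, k=3))
print(precision_recall_at_k(recommended, relevant, k=5))
```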

Online Metrics:

- Use A/B testing to compare.

- Click Through Rates (CTR).

- Watch time.

- Conversion rates.

Training:

- User behavior is generally unpredictable and videos can become viral during the day. Ideally, we want to train many times during the day to capture temporal changes.

Inference:

- For every user who visits the homepage, the system will have to recommend 100 videos. The latency needs to be under 200ms, ideally under 100ms.

- For online recommendations, it is important to find the balance between exploration vs. exploitation. If the model over-exploits historical data, new videos might not get exposed to users. We want to strike a balance between relevance and fresh new content.
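One simple way to strike that balance is an epsilon-greedy policy, sketched below as an assumption rather than a description of YouTube's actual system: each slot is filled with the model's next-best video, except that with probability epsilon it goes to a fresh video instead. The video IDs are hypothetical.

```python
import random

def recommend_with_exploration(ranked, fresh, n_slots, epsilon, rng):
    """Epsilon-greedy slate: each slot takes the model's next-best video, except
    that with probability epsilon it takes a random not-yet-shown fresh video."""
    ranked_iter = iter(ranked)
    fresh_pool = list(fresh)
    rng.shuffle(fresh_pool)
    slate = []
    while len(slate) < n_slots:
        if fresh_pool and rng.random() < epsilon:
            candidate = fresh_pool.pop()               # explore
        else:
            candidate = next(ranked_iter, None)        # exploit
            if candidate is None:                      # ranked list exhausted
                if not fresh_pool:
                    break
                candidate = fresh_pool.pop()
        if candidate not in slate:
            slate.append(candidate)
    return slate

ranked = [f"top_{i}" for i in range(10)]    # hypothetical model ranking
fresh = [f"new_{i}" for i in range(10)]     # hypothetical recently uploaded videos
print(recommend_with_exploration(ranked, fresh, n_slots=5, epsilon=0.2, rng=random.Random(0)))
```

Setting epsilon to 0 gives pure exploitation (the model's top 5); raising it trades some relevance for exposure of new content.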

54. How would you build a recommendation engine for job postings?

More context: You have access to all user LinkedIn profiles, a list of jobs each user applied to, and answers to questions that the user filled in about their job search. What would the job recommendation workflow look like?

One question to answer as you get started: can we lay out the steps a user takes when receiving job recommendations, so that we understand what a potential dataset would look like?

For this problem, we have to understand what our dataset consists of before we can build a recommendation model. More importantly, we need to understand what a recommendation feed might look like for the user. For example, we might expect the user to open a tab or a mobile app and see a list of recommended jobs, sorted with the most highly recommended at the top.

We can use either an unsupervised or a supervised model. For an unsupervised approach, we could use nearest neighbors or collaborative filtering based on features from users and jobs. If we want higher accuracy, we would likely use a supervised classification algorithm, for example one trained to predict whether a user applies to a job, with the jobs each user applied to serving as positive labels.
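The nearest-neighbors option can be sketched as ranking jobs by cosine similarity to the user. This assumes that users and jobs have already been encoded into the same numeric feature space, for example skill or keyword indicators derived from profiles and postings. That encoding, and the names `recommend_jobs`, `job_matrix`, `job_ids`, and `applied`, are hypothetical. Jobs the user has already applied to are filtered out of the feed.

```python
import numpy as np


def recommend_jobs(user_vec, job_matrix, job_ids, applied, top_n=10):
    """Rank jobs by cosine similarity between a user's feature vector and each job's."""
    user = np.asarray(user_vec, dtype=float)
    jobs = np.asarray(job_matrix, dtype=float)
    # Cosine similarity; the small constant guards against zero-length vectors.
    norms = np.linalg.norm(jobs, axis=1) * np.linalg.norm(user) + 1e-12
    scores = jobs @ user / norms
    ranked = np.argsort(-scores)  # highest similarity first
    return [job_ids[i] for i in ranked if job_ids[i] not in applied][:top_n]


jobs = [[1, 0, 1], [0, 1, 0], [1, 0, 0]]
print(recommend_jobs([1, 0, 1], jobs, ["j1", "j2", "j3"], applied={"j1"}))  # ['j3', 'j2']
```

A supervised model would replace the similarity score with a predicted probability of applying, while keeping the same sort-and-filter step for the feed.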

55. How would you build the recommendation algorithm for a type-ahead search for Netflix?

With this question, we can start with a simple use case. Let’s say the user types the word “hello” as the beginning of a movie title. If we type h-e-l-l-o, a suitable suggestion might be a movie like “Hello Sunshine” or a Spanish movie named “Hola”.

Next, let’s scope a Minimum Viable Product (MVP). We can begin to think of the solution in the form of a prefix table.

A prefix table maps each prefix (the input string) to output strings, returning one suggestion at a time to start with. For an MVP, we could take an input string and output a suggestion string, with fuzzy matching and context matching added on top.

But now, how do we recommend a certain movie?

For example, if you happened to enter “The Big” as your prefix, that could output any number of suffixes, like “The Big Short” or “The Big Lebowski”. How could you narrow the results?
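Here is a minimal sketch of the prefix table. It also shows one possible way to narrow the results: sort the matching titles by a popularity score and keep the top few. The `popularity` mapping is a hypothetical input, for example recent view counts. Fuzzy and cross-language matching (such as “Hola” for “hello”) are not covered here.

```python
from collections import defaultdict


def build_prefix_table(titles):
    """Map every lowercase prefix of every title to the titles that start with it."""
    table = defaultdict(list)
    for title in titles:
        key = title.lower()
        for end in range(1, len(key) + 1):
            table[key[:end]].append(title)
    return table


def suggest(table, prefix, popularity, limit=5):
    """Return up to `limit` titles for the prefix, most popular first."""
    candidates = table.get(prefix.lower(), [])
    return sorted(candidates, key=lambda t: popularity.get(t, 0), reverse=True)[:limit]


titles = ["The Big Short", "The Big Lebowski", "Hello Sunshine"]
views = {"The Big Lebowski": 900, "The Big Short": 500}  # hypothetical scores
table = build_prefix_table(titles)
print(suggest(table, "The Big", views))  # ['The Big Lebowski', 'The Big Short']
```

Storing every prefix explicitly trades memory for constant-time lookup. A trie is the usual more compact alternative once the catalog grows.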

56. You are given a model that predicts whether a piece of news is relevant or not when shared on Twitter. How would you evaluate the model?

One effective strategy is to use implicit and explicit user feedback.

If using implicit signals (CTR, reading time on the article, dwell time), you must be careful about mixed signals. Are bots clicking on the article? Did someone open the page and leave it open without reading it? It can be hard to identify true signals online. Explicit feedback from likes or ratings can be more reliable, but it is not immune to this type of behavior either.

With an A/B test measured against the implicit/explicit signal, you can see if the signals you are immediately capturing correlate with long-term metrics of engagement (or whatever the desired user activity metric is).
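One way to handle the mixed implicit signals described above is to convert raw clicks into labels only when the dwell time looks like genuine reading. The model's "relevant" predictions can then be scored against those labels. In this sketch, the dwell-time thresholds are hypothetical placeholders to be tuned on real data, and each event is assumed to be a `(predicted_relevant, clicked, dwell_seconds)` tuple.

```python
def implicit_label(clicked, dwell_seconds, min_dwell=10.0, max_dwell=1800.0):
    """Count a click as a positive signal only if dwell time looks like real reading.

    Very short dwell suggests a bounce or a bot; very long dwell suggests a tab left open.
    """
    return clicked and min_dwell <= dwell_seconds <= max_dwell


def evaluate(events):
    """Precision and recall of the model's relevance predictions against filtered labels."""
    tp = fp = fn = 0
    for predicted, clicked, dwell in events:
        actual = implicit_label(clicked, dwell)
        if predicted and actual:
            tp += 1
        elif predicted:
            fp += 1
        elif actual:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


events = [(True, True, 60), (True, True, 2), (False, True, 120), (True, False, 0)]
print(evaluate(events))
```

Running the same computation separately in each arm of the A/B test lets you check whether these short-term labels move together with the long-term engagement metric.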

57. How would you scale the training of a model on the entire Netflix data of millions of users and movies?

More context: You are training the recommendation system for Netflix. You can train a model with a training size of thousands of movies and users.

58. How would you create a recommendation engine using housing data for any user looking for a new rental unit?

More context: You have a database about rent-seeking users regarding their demographic information and interests, with another database containing houses and apartments to be recommended. Lastly, you also have topic tags and metadata such as amenities, price, reviews, location, city, location features, etc.

Machine Learning Algorithm Coding Interview Questions

Python machine learning questions are increasingly common in ML interviews, especially for specialized areas like computer vision. These questions ask you to implement, from scratch, algorithms that are normally wrapped in scikit-learn or other packages.

The interviewer is mainly testing your raw understanding of coding optimizations, performance, and memory use on existing machine learning algorithms. These questions also test whether the candidate REALLY understands the underlying algorithm and could build it using nothing but the NumPy Python package.

57. Write a function, search_list, that returns a boolean indicating if a value is in the linked_list.

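Code:

```python
def search_list(linked_list, target):
    # Start at the head node and walk the list one node at a time.
    temp = linked_list.headval
    while temp is not None:
        if temp.dataval == target:
            return True  # found the target value
        temp = temp.nextval
    return False  # reached the end without finding it
```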

58. Given two strings A and B, write a function “can_shift” to return whether or not A can be shifted some number of places to get B.

This problem is relatively simple if we figure out the underlying algorithm that allows us to easily check for string shifts between strings A and B.

First, we have to set baseline conditions for string shifting. Strings A and B must be the same length and consist of the same letters. We can check the former with a conditional statement that compares the length of A to the length of B. Now we can think about the shift itself. If B is merely a reordering of A rather than a shift, the condition fails. To check the order, we can repeat B (concatenate it with itself) and then check whether A appears as a substring of the result.
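The repeat-and-search idea translates into a couple of lines of Python. Every shift of B appears as a contiguous substring of B + B, so after the length check, a single substring test is enough.

```python
def can_shift(A: str, B: str) -> bool:
    # A shift never changes length, so different lengths rule out a match.
    if len(A) != len(B):
        return False
    # Every rotation of B appears somewhere inside B + B.
    return A in B + B


print(can_shift("abcde", "cdeab"))  # True
print(can_shift("abc", "acb"))  # False: same letters, but a reordering, not a shift
```

The length check matters because without it, a shorter A such as "bc" would be found inside "abcabc" even though it is not a shift of B.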

59. Given a dictionary with keys of letters and values of a list of letters, write a function “closest_key” to find the key with the input value closest to the beginning of the list.

Example:

Input:

dictionary = {'a': ['b', 'c', 'e'], 'm': ['c', 'e']}

input = 'c'

Output:

closest_key(dictionary, input) -> 'm'

In this problem, c is at a distance of 1 from a and 0 from m. Hence, the closest key for c is m.

Hint: Is your computed distance always positive? Negative values for distance (for example between ‘c’ and ‘a’ instead of ‘a’ and ‘c’) will interfere with getting an accurate result.
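Here is a minimal sketch that measures distance as the target's index within each list. That distance is never negative, which avoids the pitfall in the hint. Two behaviors are assumptions not specified by the problem: ties go to the first key encountered, and the function returns `None` when the value appears in no list. The parameter is named `target` rather than `input` to avoid shadowing the built-in.

```python
def closest_key(dictionary: dict[str, list[str]], target: str):
    best_key, best_dist = None, None
    for key, letters in dictionary.items():
        if target not in letters:
            continue  # this key's list does not contain the target
        dist = letters.index(target)  # position from the start, always >= 0
        if best_dist is None or dist < best_dist:
            best_key, best_dist = key, dist
    return best_key


dictionary = {'a': ['b', 'c', 'e'], 'm': ['c', 'e']}
print(closest_key(dictionary, 'c'))  # 'm'
```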

60. Write a function “shortest_transformation” to find the length of the shortest transformation sequence from “begin_word” to “end_word” through the elements of “word_list”.

Note that only one letter can be changed at a time, and each intermediate word in the sequence must exist in word_list.

Example:

Input:

begin_word = "same",

end_word = "cost",

word_list = ["same","came","case","cast","lost","last","cost"]

Output:

shortest_transformation(begin_word, end_word, word_list) -> 5

Since the transformation sequence would be:

'same' -> 'came' -> 'case' -> 'cast' -> 'cost'

which is five elements long.
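Because each step changes exactly one letter, the words form an unweighted graph. A breadth-first search from begin_word therefore finds the shortest sequence. The sketch below assumes lowercase words and returns 0 when no sequence exists. That return convention is an assumption, since the problem does not specify it.

```python
from collections import deque
from string import ascii_lowercase


def shortest_transformation(begin_word: str, end_word: str, word_list: list[str]) -> int:
    words = set(word_list)
    if end_word not in words:
        return 0
    queue = deque([(begin_word, 1)])  # (current word, sequence length so far)
    seen = {begin_word}
    while queue:
        word, length = queue.popleft()
        if word == end_word:
            return length
        # Try every one-letter change at every position.
        for i in range(len(word)):
            for ch in ascii_lowercase:
                candidate = word[:i] + ch + word[i + 1:]
                if candidate in words and candidate not in seen:
                    seen.add(candidate)
                    queue.append((candidate, length + 1))
    return 0  # end_word is unreachable


word_list = ["same", "came", "case", "cast", "lost", "last", "cost"]
print(shortest_transformation("same", "cost", word_list))  # 5
```

BFS visits words in order of distance from begin_word, so the first time end_word is dequeued, its length is minimal.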

61. Build a random forest model from scratch.

The model should have these conditions:

- The model takes as input a dataframe df and an array new_point with a length equal to the number of fields in the df.

- All values of both df and new_point are 0 or 1, i.e., all fields are dummy variables, and there are only two classes.

- Rather than randomly deciding which subspace of the data each tree in the forest will use, as usual, build your forest out of decision trees that go through every permutation of the value columns of the data frame and split the data according to the value seen in new_point for that column.

- Return the majority vote on the class of new_point.

- You may use pandas and NumPy but NOT scikit-learn.
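Here is one possible sketch under stated assumptions. The class is stored in a column named `label` (a hypothetical name), and new_point holds one 0/1 value per remaining feature column. Splitting on every column in any order would always reach the same leaf. To make column order matter, each tree stops early when its node is already pure or when the matching branch would be empty. That stopping rule is an assumption, not part of the problem statement. Since there are n! permutations, this is only practical for a handful of columns.

```python
from collections import Counter
from itertools import permutations

import pandas as pd


def tree_predict(df, point, column_order, label="label"):
    """One 'tree': split on each column in column_order, always following the
    branch that matches point, then predict the majority class of the leaf."""
    node = df
    for col in column_order:
        if node[label].nunique() == 1:  # node is already pure: stop splitting
            break
        child = node[node[col] == point[col]]
        if child.empty:  # no training rows take this branch: keep the parent
            break
        node = child
    # Majority class at the leaf; Series.mode() is sorted, so ties go to 0.
    return int(node[label].mode().iloc[0])


def random_forest(df, new_point, label="label"):
    """Majority vote over one tree per permutation of the feature columns."""
    features = [c for c in df.columns if c != label]
    point = dict(zip(features, new_point))  # feature name -> 0/1 value
    votes = [tree_predict(df, point, order, label) for order in permutations(features)]
    # Counter.most_common breaks ties by first occurrence among the votes.
    return Counter(votes).most_common(1)[0][0]


if __name__ == "__main__":
    train = pd.DataFrame({"x1": [0, 0, 1, 1], "x2": [0, 1, 0, 1], "label": [0, 0, 1, 1]})
    print(random_forest(train, [1, 0]))  # 1
```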

62. Given two strings, string1 and string2, write a function “max_substring” to return the maximal substring shared by both strings.

The idea is that we need to try every matching substring of string1 and string2. As the walkthrough below shows, this answer treats the shared “substring” as characters that appear in the same order in both strings but need not be adjacent (a common subsequence).

For example, if we have string1 = abbc and string2 = acc, we can take the first letter of string1 (a) and look for a match in string2. Once we find one, we are left with the same problem on smaller portions of the two strings: the remaining part of string1 is bbc, the remaining part of string2 is cc, and we repeat the process.

- In the second iteration, the first b of bbc has no match in cc.

- In the third iteration, the second b of bbc has no match in cc either.

- Finally, the c of bbc matches the first c of cc.

- We have finished string1, and the result is ac.
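A single greedy pass like the walkthrough can miss the longest answer. For example, with string1 = cab and string2 = abc, matching c first leaves nothing else to match, even though ab is shared. Trying every match systematically is what the standard longest-common-subsequence dynamic program does, and it is sketched below. This sketch follows the walkthrough's subsequence reading. If the interviewer means a contiguous substring, the table instead tracks run lengths that reset to 0 on a mismatch.

```python
def max_substring(string1: str, string2: str) -> str:
    m, n = len(string1), len(string2)
    # dp[i][j] = length of the longest common subsequence of string1[i:] and string2[j:]
    dp = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(m - 1, -1, -1):
        for j in range(n - 1, -1, -1):
            if string1[i] == string2[j]:
                dp[i][j] = 1 + dp[i + 1][j + 1]
            else:
                dp[i][j] = max(dp[i + 1][j], dp[i][j + 1])
    # Walk the table forward to recover one longest shared sequence.
    i = j = 0
    out = []
    while i < m and j < n:
        if string1[i] == string2[j]:
            out.append(string1[i])
            i += 1
            j += 1
        elif dp[i + 1][j] >= dp[i][j + 1]:
            i += 1
        else:
            j += 1
    return "".join(out)


print(max_substring("abbc", "acc"))  # ac
```

The table has (m + 1) × (n + 1) entries, so time and memory are both O(mn).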

63. Given a sorted list of integers ints with no duplicates, write an efficient function nearest_entries that takes in integers N and k.

Additionally, it should do the following:

- Finds the element of the list that is closest to N.

- Returns that element along with the k next and k previous elements of the list.

The question asks you to implement a common search algorithm in base Python. Think about the special conditions placed on ints (sorted, no duplicates) and how they can guide your choice of algorithm.

Hint: You may want to divide your function into several “parts”, each dealing with one of the unique conditions of the problem.
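Because ints is sorted, binary search can locate the closest element in O(log n) time. This sketch splits the work into the suggested parts: search, pick the nearer neighbor, then slice. Several details are assumptions the problem leaves open. The argument order is `(ints, N, k)`. Ties between two equally close values go to the smaller one. The k neighbors on each side are clipped at the ends of the list.

```python
def nearest_entries(ints: list[int], N: int, k: int) -> list[int]:
    if not ints:
        return []
    # Part 1: binary search for the first index whose value is >= N.
    lo, hi = 0, len(ints)
    while lo < hi:
        mid = (lo + hi) // 2
        if ints[mid] < N:
            lo = mid + 1
        else:
            hi = mid
    # Part 2: the closest element is at lo or lo - 1; ties go to the smaller value.
    if lo == len(ints) or (lo > 0 and N - ints[lo - 1] <= ints[lo] - N):
        idx = lo - 1
    else:
        idx = lo
    # Part 3: up to k neighbours on each side, clipped at the list ends.
    return ints[max(0, idx - k): idx + k + 1]


print(nearest_entries([1, 3, 5, 7, 9], 8, 1))  # [5, 7, 9]
```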

More Machine Learning Interview Resources

Interview Query offers a variety of resources to help you prep for machine learning and machine learning engineer interviews.

First, see our data science course, which features machine learning, modeling, and machine learning system design modules. Also see our lists of Python machine learning questions, Amazon machine learning interview questions, and Google machine learning interview questions.